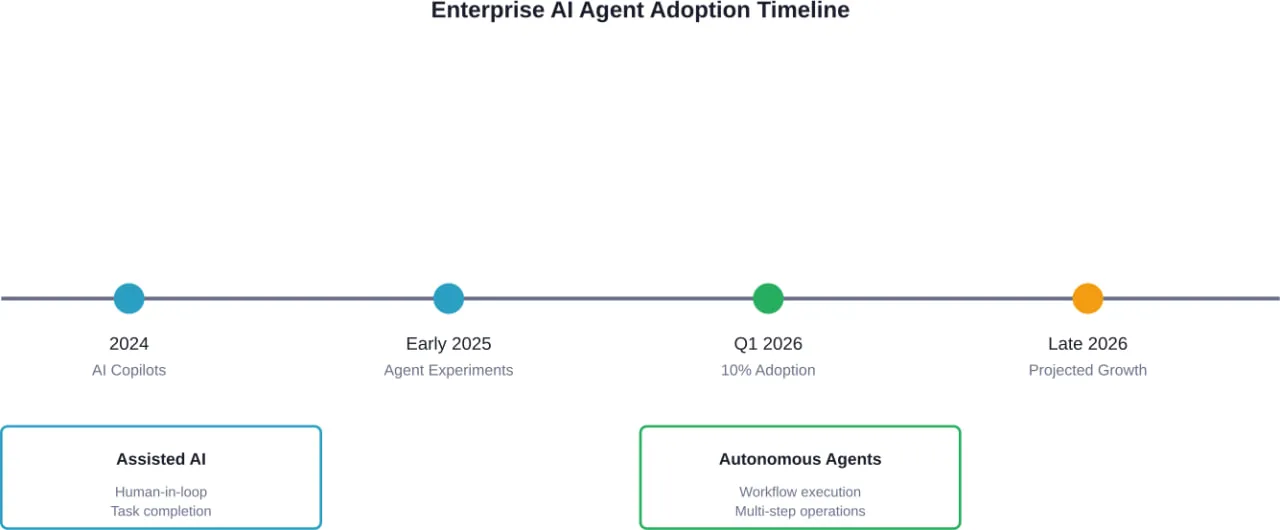

Quick summary: In 2026, enterprise AI agents are moving from experimental tools to production systems, with leading technology companies such as NVIDIA, Oracle, and OpenAI launching enterprise-grade platforms. According to McKinsey findings published in March 2026, about 10% of enterprise functions currently use AI agents, and the pace of adoption mirrors the early growth pattern of cloud computing. NIST's federal standards initiatives are establishing governance frameworks, while autonomous AI systems evolve from assistive "copilots" into fully autonomous operational agents.

Enterprise AI has just reached an inflection point. After years in which AI assistants and copilots helped with isolated tasks, autonomous agents that can carry out complex workflows without human intervention are finally entering production environments.

But here's the thing: adoption is still concentrated in a small minority of functions. Most organizations are still working out where agents fit, what the governance framework should look like, and whether their infrastructure can support these systems at scale.

Let's look at what is actually happening with AI agents in the enterprise right now, based on recent data and platform launches from the industry's biggest players.

Enterprise Adoption Today: The McKinsey Data

According to McKinsey findings published in March 2026, about 10% of enterprise functions currently use AI agents. That is not widespread penetration, but it is significant progress given where the technology stood just 18 months ago.

The adoption curve mirrors the early trajectory of cloud computing. Remember 2010? According to industry data cited by McKinsey, AWS generated only $1.45 billion in revenue that year. Azure had just launched. Google App Engine was still a developer experiment.

Fast-forward to 2025, and cloud infrastructure has become the default for enterprise operations. If agentic AI follows the same path, and the technical fundamentals suggest it will, the current adoption figures are a starting point, not a ceiling.

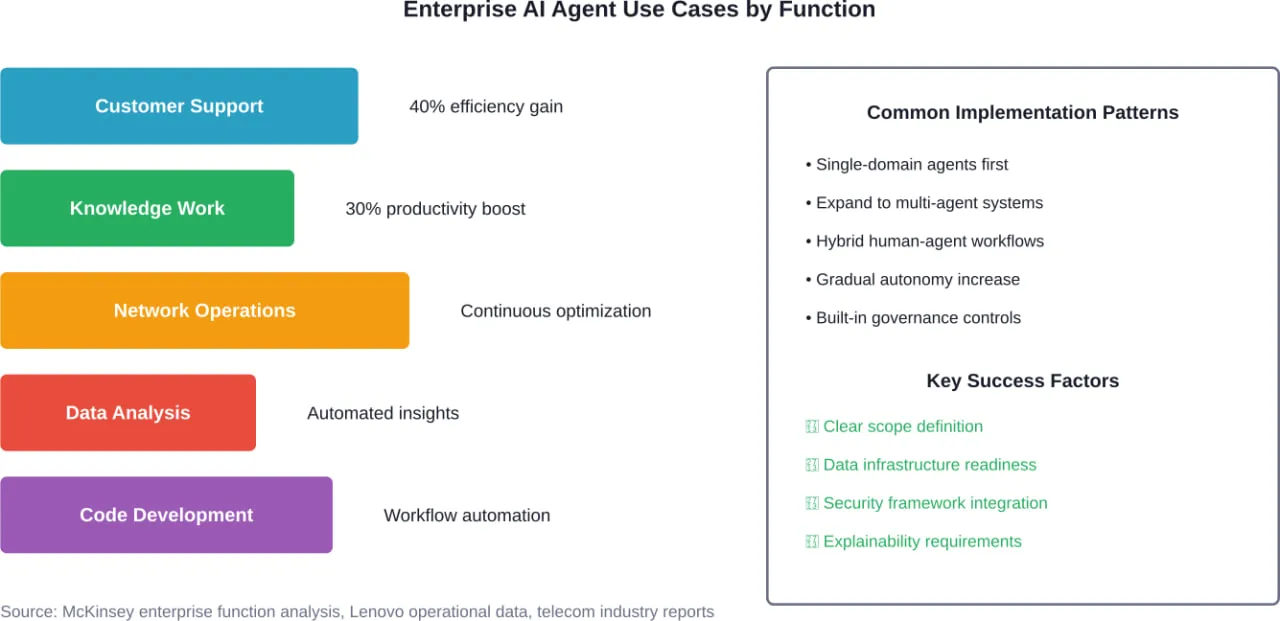

Let's be honest about the numbers: according to an operational analysis by Lenovo, organizations report productivity improvements of up to 30% in knowledge work and efficiency gains of up to 40% among support and operations teams. These are not marginal improvements. They are the kind of metrics that force CFOs to pay attention.

Major Platform Launches Shaping 2026

Three significant enterprise agent platforms launched or expanded in early 2026, each taking a different approach to deploying autonomous AI.

NVIDIA Agent Toolkit

NVIDIA announced its Agent Toolkit on March 16, 2026, presenting it as an open development platform for building and running AI agents in enterprise environments. The toolkit includes NVIDIA OpenShell, an open-source runtime for building self-evolving agents with enhanced safety and security controls.

The platform's AI-Q Blueprint architecture, built with LangChain, uses frontier models for orchestration and NVIDIA Nemotron open models for research tasks. According to NVIDIA, this hybrid approach can cut query costs by more than 50% while maintaining top-tier accuracy.
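To make the cost argument concrete, here is a minimal sketch of the arithmetic behind a hybrid setup, where an expensive model plans and a cheaper open model does the bulk of the work. Everything in it is an assumption for illustration: the per-token prices, the token split, and the names `ModelPrice` and `query_cost` are hypothetical and are not NVIDIA pricing or part of any NVIDIA API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical price per 1,000 tokens, in US dollars."""
    name: str
    usd_per_1k_tokens: float


def query_cost(tokens_by_model: dict[ModelPrice, int]) -> float:
    """Total cost of one query, given the tokens each model processed."""
    return sum(m.usd_per_1k_tokens * t / 1000 for m, t in tokens_by_model.items())


# Assumed prices: placeholders for illustration, not vendor pricing.
frontier = ModelPrice("frontier-orchestrator", 0.010)
open_model = ModelPrice("open-research-model", 0.002)

# Baseline: the frontier model handles all 10,000 tokens of a query.
baseline = query_cost({frontier: 10_000})

# Hybrid: the frontier model plans (2,000 tokens) and the open model
# handles the research-heavy remainder (8,000 tokens).
hybrid = query_cost({frontier: 2_000, open_model: 8_000})

savings = 1 - hybrid / baseline
print(f"baseline=${baseline:.3f} hybrid=${hybrid:.3f} savings={savings:.0%}")
```

With these assumed inputs the hybrid query costs about a third of the baseline. The real saving depends entirely on actual prices and on how much of the work can be delegated without hurting accuracy.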

The built-in evaluation system explains how each AI answer is produced, which is essential in enterprise settings where audit trails and explainability are not optional features.

Oracle's Proactive Enterprise Agents

Oracle's approach embeds agentic workflows directly into Oracle Cloud Infrastructure (OCI) through a new agent builder that grounds AI systems in enterprise data from the outset. The emphasis is on customization and data locality: agents understand the organizational context because they are built on top of existing business systems.

This addresses one of the central enterprise concerns: effective agents need access to proprietary data, but that access creates security and governance challenges. Oracle's bet is that native OCI integration solves the problem by keeping all data within existing cloud boundaries.

OpenAI's Enterprise Agent Platform

OpenAI launched its enterprise agent platform, Frontier, on February 5, 2026, offering both the technology platform and services from human engineers to help organizations deploy AI agents. The move reflects a recognition that tooling alone is not enough to drive adoption; implementation expertise matters a great deal.

According to reports from January 2026, OpenAI CFO Sarah Friar told CNBC that the company expects enterprise customers to grow from 40% to 50% of its total business by the end of the year. That shift requires products built for enterprise buyers, not only for individual developers.

Federal Standards and Governance Frameworks

As enterprise adoption accelerates, regulators and standards bodies are establishing frameworks for safe deployment. The Center for AI Standards and Innovation (CAISI) at the National Institute of Standards and Technology (NIST) launched its "AI Agent Standards Initiative" on February 17, 2026, aiming to ensure trustworthy, interoperable, and secure agentic systems.

NIST held its second workshop on the NIST Cyber AI Profile (published March 23, 2026), which examined how organizations should integrate AI into their operations while reducing cybersecurity risk. This is not theoretical guidance; it consists of practical models aimed at CIOs who want to deploy autonomous systems without creating new vulnerabilities.

The draft NIST guidelines published on December 16, 2025 present a rethought approach to cybersecurity tailored to the AI era, recognizing that traditional security models do not fully address systems that make independent decisions and change their behavior over time.

On the policy side, the White House issued an executive order on AI in federal systems on July 23, 2025, with accompanying notices published on July 24, 2025. While some of the directives focused on ideological considerations, the broader framework set principles for AI adoption in government agencies, and those principles often shape enterprise best practices.

The Infrastructure Challenge

Here is a topic that does not make headlines but matters enormously: infrastructure. Running autonomous agents at enterprise scale requires compute architectures fundamentally different from those used to serve API requests for copilots.

A recent analysis by Lenovo finds that autonomous AI systems need to handle complex, continuous operations locally, with high performance and large memory capacity. Running AI workloads locally reduces dependence on external APIs, improves response time, and gives organizations more control over sensitive data.

That is why systems such as Lenovo's ThinkStation workstations are designed specifically for deploying local AI agents. It is not only about raw compute power; it is about having an architecture that lets these systems run where the data lives.

| Deployment model | Advantages | Challenges | Best suited for |
|---|---|---|---|
| Cloud-based agents | Scalability, easy updates, lower upfront cost | API dependency, latency, recurring costs | Distributed teams, variable workloads |
| On-premises agents | Data control, low latency, predictable costs | Infrastructure investment, maintenance overhead | Regulated industries, sensitive data |
| Hybrid architecture | Flexibility, optimized cost-benefit balance | Complexity, integration challenges | Large enterprises with diverse needs |

Academic Research Directions

Academic research is racing to catch up with practical deployment. Several comprehensive surveys published on arXiv in recent months attempt to establish taxonomies and frameworks for understanding agentic AI systems.

One systematic review distinguishes between standalone AI agents and ecosystems of collaborating agents. The distinction is crucial as organizations move from single-purpose agents to systems in which many agents coordinate across business domains.

The IEEE SA Standards Board approved new standards on February 12, 2026, including standards covering capability requirements for AI agents in materials research (P3933), large language models for audio (P3936), and IoT security assessment (P2994). Standards bodies are effectively racing to set guardrails while the technology evolves in real time.

Industry-Specific Applications

Telecom operators are deploying agentic AI for network optimization and lifecycle management across RAN, transport, and core infrastructure. The complexity and scale of 5G networks have pushed traditional automation to its limits. Agents that can autonomously diagnose faults, optimize configurations, and manage resources are becoming an operational necessity rather than experimental projects.

Alibaba International launched Accio Work, an enterprise agent platform designed for global business operations. Its focus on international deployment reflects how agents handle the complexity of multi-region operations, currency conversion, regulatory compliance, and localization at scale.

What Happens Next

The next 12 months will determine whether enterprise AI agents follow the cloud's rapid growth trajectory or remain at niche adoption levels. Several factors will shape the outcome.

First, governance frameworks need to mature. Organizations will not deploy truly autonomous systems at scale until they have confidence in the control mechanisms, audit trails, and safety mechanisms. NIST's standards work matters because it provides the shared vocabulary and benchmarks that procurement teams need.

Second, infrastructure has to prove it can handle continuous autonomous operation without creating new failure modes. Early deployments are effectively a test lab for architectural patterns, and they will validate or rule out specific approaches.

Third, return on investment (ROI) needs to become predictable. Productivity gains of 30–40% sound attractive, but CFOs need to understand implementation costs, ongoing operating expenses, and realistic timelines. Platform vendors are beginning to publish case studies with real numbers, and that transparency is accelerating adoption.
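As a rough illustration of what a predictable ROI calculation involves, the sketch below estimates a simple payback period from an upfront implementation cost, a recurring operating cost, and a productivity gain. It assumes, as a deliberate simplification, that a productivity gain translates directly into labor-cost savings. All dollar amounts and the function name `payback_months` are hypothetical.

```python
import math


def payback_months(
    implementation_cost: float,
    monthly_operating_cost: float,
    monthly_labor_cost: float,
    productivity_gain: float,
) -> float:
    """Months until cumulative net benefit covers the upfront cost.

    productivity_gain is a fraction (0.30 means 30%). Returns math.inf
    when the monthly benefit does not exceed the monthly operating cost.
    """
    # Simplifying assumption: the gain is realized as labor-cost savings.
    monthly_benefit = monthly_labor_cost * productivity_gain
    net_monthly = monthly_benefit - monthly_operating_cost
    if net_monthly <= 0:
        return math.inf
    return implementation_cost / net_monthly


# Hypothetical inputs: $250k rollout, $15k/month to run, $200k/month of
# affected labor cost, evaluated at both ends of the 30 to 40% range.
low = payback_months(250_000, 15_000, 200_000, 0.30)
high = payback_months(250_000, 15_000, 200_000, 0.40)
print(f"payback at 30% gain: {low:.1f} months")
print(f"payback at 40% gain: {high:.1f} months")
```

Running the same function across a range of gains and costs, rather than a single optimistic figure, is one way to show a finance team how sensitive the timeline is to each assumption.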

Look, the technology is ready. The platforms exist. Early adopters are reporting real gains. What remains unclear is how quickly organizational culture, procurement processes, and risk management frameworks will adapt to systems that operate with genuine autonomy.

Turning AI Trends into Systems That Actually Work

Enterprise AI news often highlights platforms and market shifts, but most teams run into practical problems: connecting tools, handling data across systems, and keeping systems stable as usage grows.

A-listware supports companies at this stage with dedicated development teams. The focus is on the backend, integrations, and infrastructure that support AI initiatives, helping businesses move from trend-driven decisions to systems that run as part of day-to-day operations.

If you are moving from AI strategy to implementation, contact A-listware for support with development, integration, and ongoing system maintenance.

Frequently Asked Questions

- What is the difference between AI copilots and AI agents?

AI copilots assist humans with specific tasks and need human approval to act. AI agents can carry out entire workflows autonomously, making decisions and taking actions without constant human intervention. Agents handle multi-step processes, coordinate across systems, and operate continuously rather than responding to individual commands.

- Which industries are adopting enterprise AI agents fastest?

According to McKinsey data, telecommunications, customer support, and knowledge work currently show the highest adoption. Financial services and healthcare are exploring agent deployments but proceeding more cautiously because of regulatory requirements. Technology and consulting firms are deploying agents for internal operations while also building customer-facing solutions.

- What are the main security issues with autonomous AI agents?

The main concerns include unauthorized access to sensitive data, agent decisions that violate compliance requirements, the difficulty of auditing autonomous actions, and the possibility that agents are manipulated through prompt injection or adversarial input. NIST's cybersecurity guidelines address many of these risks through frameworks for agent oversight, logging requirements, and security controls.

- How much does it cost to deploy AI agents in an organization?

Costs vary significantly by deployment approach. Cloud-based platforms typically charge per query or per user, and some report cost savings of more than 50% from hybrid architectures that use open models. On-premises deployments require infrastructure investment but offer predictable ongoing costs. Check vendor websites for current pricing, as this market remains dynamic.

- Can small and medium-sized businesses use AI agents, or are they only for large enterprises?

Current platform launches target enterprise customers, but the technology is becoming increasingly accessible. Cloud-based agent platforms lower the barrier to entry by removing infrastructure requirements. Small businesses can start with single-function agents for customer support or data analysis before expanding to more complex applications.

- What skills do teams need to deploy and manage AI agents?

Organizations need expertise in AI/ML operations, security architecture, and the specific business domain where the agents will operate. Many platform vendors now offer professional services and implementation support, recognizing that tooling alone is not enough. Cross-functional teams that combine technical and domain expertise achieve better results than purely technical implementations.

- How do you measure the ROI of an AI agent deployment?

Track specific metrics such as time saved on routine tasks, reduction in manual errors, faster completion of complex workflows, and better resource utilization. Organizations that report success measure baseline performance before deploying agents and then track the same metrics after implementation. A 30% productivity gain in knowledge work and efficiency improvements of up to 40% in operations serve as benchmarks, but actual results depend on the use case and the quality of implementation.
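The before-and-after comparison described in that last answer can be sketched in a few lines. This is an illustrative example only: the metric names and all measurements are hypothetical, and `relative_change` is a helper defined here, not part of any vendor tooling.

```python
def relative_change(baseline: float, after: float) -> float:
    """Fractional change from baseline; negative means the value dropped."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (after - baseline) / baseline


# Hypothetical measurements taken before and after an agent deployment.
baseline = {
    "minutes_per_ticket": 20.0,
    "errors_per_1000_tasks": 50.0,
    "tickets_per_person_day": 24.0,
}
after = {
    "minutes_per_ticket": 14.0,
    "errors_per_1000_tasks": 35.0,
    "tickets_per_person_day": 32.0,
}

# Compare the same metrics on both sides so the deltas are meaningful.
report = {m: relative_change(baseline[m], after[m]) for m in baseline}
for metric, change in report.items():
    print(f"{metric}: {change:+.0%}")
```

The key design point is that the baseline is captured before rollout and the metric definitions do not change afterward. Otherwise the percentages are not comparable.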

Moving Forward with Enterprise AI Agents

In 2026, enterprise AI agents moved from experimental technology to real-world deployment. The platforms exist. Standards frameworks are taking shape. Early adopters are reporting real productivity gains.

But it is still early. A 10% adoption rate means 90% of enterprise functions have not yet deployed agents. That gap is both an opportunity and a challenge: an opportunity for organizations that move decisively, and a challenge in managing governance, infrastructure, and change management without an established playbook.

The cloud analogy still holds. Those who understood where the cloud was heading in 2010 were well prepared for the infrastructure revolution that followed. Organizations evaluating agentic AI today face a similar inflection point. The technology works. The question is how quickly your organization can adapt to systems that do not just assist but also execute.

For business leaders and technology teams evaluating enterprise AI agents: start with well-defined use cases, establish governance frameworks from day one, and choose platforms that fit your infrastructure strategy. The window for gaining a competitive advantage through early adoption will not stay open forever.