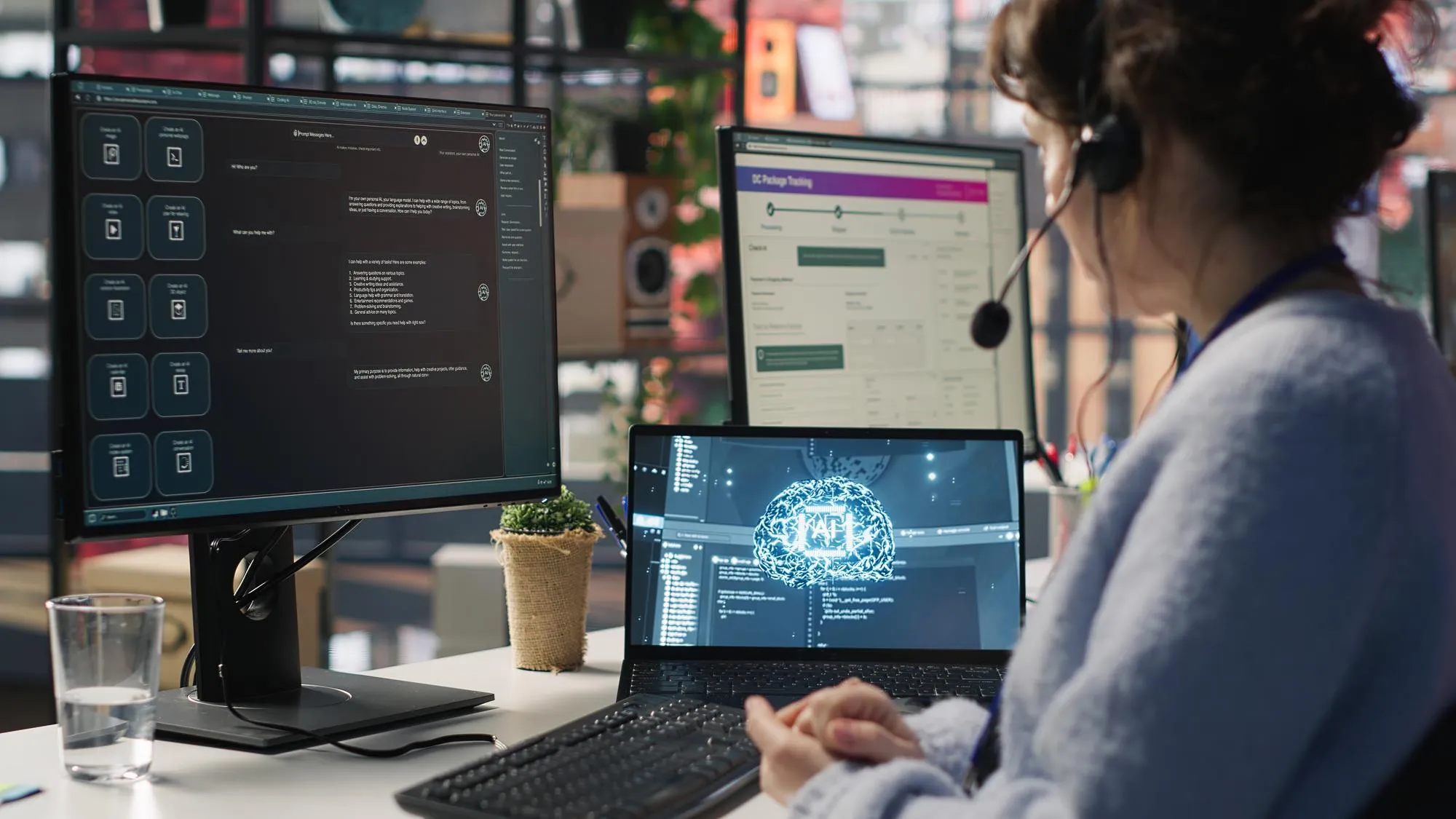

AI agents are gradually showing up in places that used to require constant human attention: customer support queues, internal workflows, data lookups, even parts of decision-making. Not as a wholesale replacement, but as something that quietly takes work off people's plates.

Still, most teams run into the same question fairly quickly: where do these agents actually make sense?

There are plenty of platforms that claim to "automate everything", but in practice the value tends to lie in narrower, clearly defined tasks that follow patterns, repeat often, and do not fall apart when handed off.

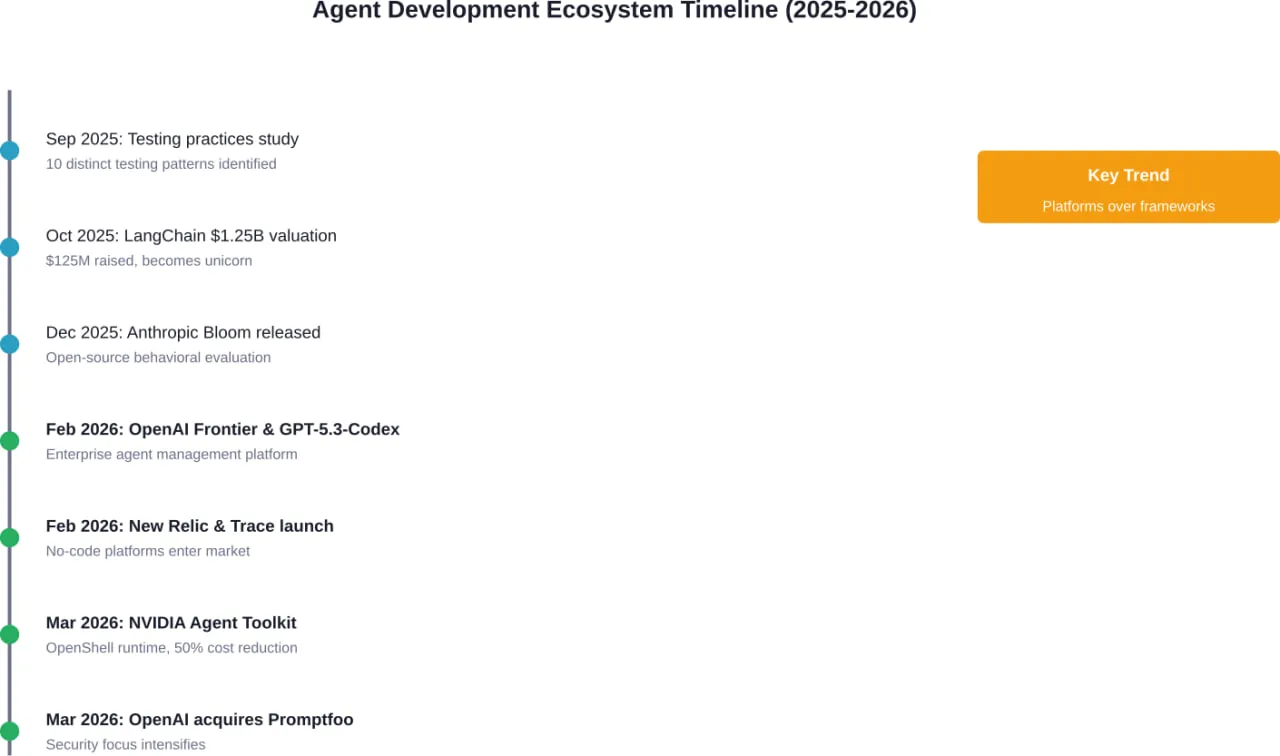

Below is an overview of the current landscape of AI agent tools and platforms. It is not a ranking or a buying guide, just a look at what is on offer and how the different approaches are evolving.

Making AI Agents Work in Real Business Systems

AI agents rarely work alone. They depend on backend systems, APIs, integrations, and stable infrastructure to function reliably in a business environment.

This is where A-listware comes in. The company focuses on software development and dedicated engineering teams that handle architecture, development, and ongoing support, providing the foundation for AI-driven features once they move beyond the prototype stage.

If you are working on AI agents, A-listware can help you:

- Connect services, APIs, and internal systems around your agents

- Manage data flows and integrations between your business tools

- Maintain stability and performance over time

Make AI agents a working part of your business with A-listware.

1. Cognigy

Cognigy positions itself as a platform for building and operating AI agents in customer-facing environments, mainly support and contact centers. The product handles conversations across channels such as voice, chat, and messaging, while also supporting human agents with tools such as real-time assistance and access to internal knowledge. It relies on structured automation: routing requests, resolving common issues, and reducing the need for manual handling in repetitive cases.

What stands out is how the platform ties different parts of customer interaction into one system. The emphasis is on combining language understanding with integration into existing infrastructure, so that AI agents can actually complete tasks rather than just respond. At the same time, human agents stay in the loop through copilots and shared context, which suggests the platform is not meant to replace support teams entirely but to reduce their load and manage workflows better.

Key highlights:

- AI agents for voice, chat, and messaging channels

- Focus on customer service and contact center operations

- Real-time support tools for human agents (Copilot)

- Integration with existing enterprise systems

- Multilingual support with translation features

- Combines automation with human-assisted workflows

Who it's best for:

- Teams handling high volumes of customer inquiries

- Companies running customer communication across multiple channels

- Organizations looking to reduce repetitive support tasks

- Companies with existing contact center infrastructure

Contact information:

- Website: www.cognigy.com

- Email: info-us@cognigy.com

- Facebook: www.facebook.com/cognigy

- Twitter: x.com/cognigy

- LinkedIn: www.linkedin.com/company/cognigy

- Address: 2400 N Glenville Drive, Building B, Suite 400, Richardson, Texas 75082

- Phone: +1 972 301 1300

2. Fellow

Fellow is built around meetings and everything connected to them. It records, transcribes, and summarizes conversations, then turns that information into something usable: notes, action items, follow-ups, or updates in other systems. The AI agent layer sits on top of this and lets users search past meetings or generate outputs based on the topics discussed.

The emphasis is clearly on control and privacy. Recordings and notes are stored centrally, but access is tightly managed, which makes sense given how sensitive internal meetings can be. It also connects to tools already in use, so meeting insights are not just stored as notes but flow into workflows such as CRM updates or task management.

Key highlights:

- AI meeting recording, transcription, and summaries

- Searchable meeting history with generated outputs

- Centralized storage with access control

- CRM and workflow integrations

- Pre-meeting planning and agendas

- Works across major meeting platforms

Who it's best for:

- Teams with frequent internal and client meetings

- Organizations that rely on documentation and follow-ups

- Sales, customer success, and leadership teams

- Companies that need structured meeting records

Contact information:

- Website: fellow.ai

- Facebook: www.facebook.com/fellowmeetings

- Twitter: x.com/FellowAInotes

- LinkedIn: www.linkedin.com/company/fellow-ai

- Instagram: www.instagram.com/FellowAInotes

- Address: 532 Montréal Rd #275, Ottawa, ON K1K 4R4, Canada

3. Glean

Glean is built around internal company knowledge and how employees work with it. It connects to different tools across the company and makes that information searchable. AI agents are then layered on top to help automate tasks or generate outputs based on that data. Rather than focusing on a single workflow, it spans multiple functions such as engineering, support, HR, and sales.

What stands out is how it treats data as a shared resource. The system draws on documents, conversations, and tools, then uses that context to answer questions or trigger actions. Agents can be created for specific tasks, but they all build on the same knowledge layer, which keeps things consistent across teams.

Key highlights:

- Unified search across company tools and data

- AI agents for automating internal workflows

- Connectors for a wide range of applications

- Content generation and summaries

- Support for multiple departments and use cases

- Centralized knowledge layer

Who it's best for:

- Companies with fragmented internal tools and data

- Teams that rely on documentation and shared knowledge

- Organizations looking to automate internal processes

- Mid-sized to large teams with cross-functional workflows

Contact information:

- Website: www.glean.com

- App Store: apps.apple.com/us/app/glean-work/id1582892407

- Google Play: play.google.com/store/apps/details?id=com.glean.app

- Twitter: x.com/glean

- LinkedIn: www.linkedin.com/company/gleanwork

- Instagram: www.instagram.com/gleanwork

- Address: 634 2nd Street, San Francisco, CA 94107, United States

4. Decagon

Decagon focuses on customer-facing AI agents that handle interactions across channels such as chat, voice, and email. The platform is built on the idea that agents act more like a front layer for customer communication: they not only answer questions but also carry out actions such as rebookings, account updates, or requests that would normally require a human operator.

Instead of relying on rigid configuration, the system introduces workflows defined in more natural language, which makes iteration somewhat less technical. There is also a clear emphasis on ongoing adjustment: testing, observing, and refining agent behavior over time. The setup suggests that agents are expected to evolve alongside the business rather than stay fixed after deployment.

Key highlights:

- AI agents for chat, voice, and email

- Focus on customer interaction and task completion

- Workflow definition in natural language

- Built-in testing and iteration tools

- Analytics tied to conversations and behavior

- Omnichannel support from a single system

Who it's best for:

- Customer support and service operations

- Companies handling requests across multiple channels

- Teams that need flexible, evolving workflows

- Companies looking to automate repetitive interactions

Contact information:

- Website: decagon.ai

- Twitter: x.com/DecagonAI

- LinkedIn: www.linkedin.com/company/decagon-ai

5. HubSpot Breeze Data Agent

HubSpot Breeze Data Agent is an AI agent built around customer data rather than direct conversations. It pulls information from sources such as CRM records, emails, calls, and documents, then uses that context to answer questions or surface insights. The goal is to cut the time spent manually searching across tools when trying to understand customers or keep track of what is going on.

Within the HubSpot environment, it works as part of existing workflows rather than separately. Outputs are structured so they flow back into the system: updating records, filling data gaps, or helping teams work with information that already exists but is spread across different places.

Key highlights:

- AI agent for customer data analysis

- Pulls information from CRM, emails, calls, and documents

- Answers customer-specific business questions based on available data

- Creates and updates structured customer records

- Works within existing HubSpot workflows

- Connects fragmented data into a unified view

Who it's best for:

- Teams that work closely with CRM systems

- Marketing and sales operations

- Companies with data spread across multiple tools

- Teams that need quick access to customer information

Contact information:

- Website: www.hubspot.com

- Facebook: www.facebook.com/hubspot

- Twitter: x.com/HubSpot

- LinkedIn: www.linkedin.com/company/hubspot

- Instagram: www.instagram.com/hubspot

- Address: 2 Canal Park, Cambridge, MA 02141, United States

- Phone: +1 888 482 7768

6. ClickUp Super Agents

ClickUp treats AI agents as part of a broader work environment rather than a separate tool. Super Agents are designed to take on a wide range of tasks (writing, analysis, managing workflows, updating records, and more), all within the same workspace where teams already manage projects and communication.

The emphasis is on flexibility. Agents can be created for almost any kind of work, and they can interact directly with tasks, documents, and people. The system also lets multiple agents work together, which makes it feel less like a single assistant and more like an automation layer across the whole workflow.

Key highlights:

- AI agents embedded in a project management workspace

- Handles tasks such as writing, analysis, and coordination

- Custom agents for different types of work

- Multi-agent collaboration within workflows

- Integration with tasks, documents, and communication

- Continuous learning and context awareness

Who it's best for:

- Teams managing projects and workflows on one platform

- Organizations looking to automate day-to-day operations

- Cross-functional teams with varied tasks

- Users who want AI inside their existing workspace

Contact information:

- Website: clickup.com

- Facebook: www.facebook.com/clickupprojectmanagement

- Twitter: x.com/clickup

- LinkedIn: www.linkedin.com/company/12949663

- Instagram: www.instagram.com/clickup

7. Devin

Devin is an AI agent focused on software development. Rather than helping with small tasks, it is meant to take on larger pieces of development work: writing code, debugging, testing, and managing parts of the development process. The idea is closer to an autonomous collaborator that picks up a task and works through it step by step.

The difference is scope. It is not limited to generating snippets or suggestions but covers the whole workflow: planning, executing, and refining code. At the same time, it fits into existing development environments and interacts with the tools and processes engineers already use.

Key highlights:

- AI agent for software development tasks

- Coding, debugging, and testing

- Works across the entire development workflow

- Operates with a degree of autonomy

- Integration with developer tools and environments

- Focus on executing tasks, not just making suggestions

Who it's best for:

- Engineering teams and developers

- Companies building software products

- Teams with repetitive or structured coding tasks

- Organizations exploring AI-assisted development

Contact information:

- Website: devin.ai

- Twitter: x.com/cognition

- LinkedIn: www.linkedin.com/company/cognition-ai-labs

8. Intercom (Fin AI Agent)

Intercom builds its AI agent, Fin, directly into a customer support platform. Rather than adding AI as a separate layer, it is part of the help desk itself and works alongside human agents in the same system. Conversations, tickets, and customer data all live in one place, which means the agent and the team work from the same context.

Another part of the setup is how the system improves over time. Interactions are analyzed, patterns are tracked, and the agent adapts based on past conversations and human input. There is also a tight link between automation and manual support, with tasks able to move between AI and human agents without losing context.

Key highlights:

- AI agent integrated into a help desk platform

- Shared workspace for AI and human agents

- Omnichannel communication in one system

- Automated ticketing and routing

- Insights from conversation data

- Continuous improvement based on interactions

Who it's best for:

- Customer support teams using help desk systems

- Companies handling ongoing customer conversations

- Teams that need both automation and human support

- Organizations focused on structured support workflows

Contact information:

- Website: www.intercom.com

- Email: press@intercom.com

9. Tableau

Tableau focuses on data analysis and visualization, with growing emphasis on so-called agentic analytics. The platform connects to various data sources and turns that data into visual insights that people can explore and share. On top of that, it introduces AI-driven features that help people act on data rather than just look at it, including systems that can suggest or trigger actions based on insights.

The setup is not tied to a single environment. It can run in the cloud, on private infrastructure, or as part of the broader Salesforce ecosystem. Rather than replacing analysts, the platform supports the way people already work with data and adds a layer where AI can help with interpretation, exploration, and, in some cases, automating follow-up steps.

Key highlights:

- Platform for data visualization and analytics

- AI features for generating insights and actions

- Works in cloud and self-hosted environments

- Integration with multiple data sources

- Supports data exploration and reporting workflows

- Part of a broader analytics and CRM ecosystem

Who it's best for:

- Data analysts and business intelligence teams

- Organizations working with large volumes of data

- Teams that need visual reports and dashboards

- Companies building data-driven workflows

Contact information:

- Website: www.tableau.com

- Facebook: www.facebook.com/Tableau

- Twitter: x.com/tableau

- LinkedIn: www.linkedin.com/company/tableau-software

- Address: 415 Mission Street, 3rd Floor, San Francisco, CA 94105, United States

- Phone: 1-800-270-6977

10. Hightouch

Hightouch positions itself around marketing workflows driven by data and AI agents. It sits on top of a company's existing data warehouse and uses that data to run campaigns, personalization, and audience management. The agent layer is used to automate parts of marketing execution, from building segments to deciding which message to send to which user.

Instead of moving data into a separate system, it works directly with the data already in place. This changes how marketing teams interact with data: less exporting and syncing, more direct use. The platform also includes decision logic in which AI evaluates signals and adjusts messaging or timing based on user behavior across channels.

Key highlights:

- AI agents for marketing workflows and campaigns

- Built on top of existing data warehouses

- Audience building and segmentation tools

- Real-time personalization across channels

- AI-based decisioning for messaging and timing

- Integration with a wide range of external tools

Who it's best for:

- Marketing and lifecycle teams

- Companies with established data warehouses

- Organizations running multi-channel campaigns

- Teams focused on personalization at scale

Contact information:

- Website: hightouch.com

- Twitter: x.com/HightouchData

- LinkedIn: www.linkedin.com/company/hightouchio

11. Lindy

Lindy is designed as a general-purpose AI assistant that works with everyday business tools such as email, calendar, and messaging platforms. It handles tasks such as drafting emails, scheduling meetings, and pulling information from different sources. The idea is to cut down on the small, repetitive actions that can fill up a day.

What sets it apart is its proactive behavior. It does not just wait for instructions; it can also surface reminders, prepare context for meetings, or suggest next steps based on ongoing activity. Over time it adapts to the user's preferences, moving from a simple assistant toward a lightweight operational layer in personal workflows.

Key highlights:

- AI assistant for email, meetings, and scheduling

- Drafts messages and manages communication

- Connects across multiple work tools

- Provides proactive reminders and context

- Learns user preferences over time

- Supports automation of everyday tasks

Who it's best for:

- People with busy schedules

- Teams that communicate frequently

- Professionals juggling multiple tools

- Roles with repetitive coordination tasks

Contact information:

- Website: www.lindy.ai

- Email: support@lindy.ai

- Twitter: x.com/getlindy

- LinkedIn: www.linkedin.com/company/lindyai

12. Relevance AI

Relevance AI focuses on building AI agents for go-to-market work, including sales, marketing, and customer operations. It introduces the idea of an AI workforce in which multiple agents take on tasks such as research, outreach, lead qualification, and follow-ups. These agents can be triggered by events, for example changes in a sales pipeline or incoming leads.

There is a progression in how automation is applied. It can start with simple assistance and then move toward more autonomous workflows as processes become clearer. The system connects to common tools such as CRM, email, and messaging platforms, so agents can operate inside existing workflows instead of rebuilding them from scratch.
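To make the idea of event-based triggers more concrete, here is a minimal, generic sketch in plain Python. It does not use Relevance AI's actual API; the event names (`lead.created`, `deal.stage_changed`), the `TriggerRegistry` class, and both handler functions are hypothetical and exist only for illustration.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical handler type: takes an event payload, returns a short result.
Handler = Callable[[dict], str]


class TriggerRegistry:
    """Maps event names to the agent handlers that should run for them."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def on(self, event: str, handler: Handler) -> None:
        # Register a handler for an event name.
        self._handlers[event].append(handler)

    def emit(self, event: str, payload: dict) -> List[str]:
        # Run every handler registered for this event, in registration order.
        return [handler(payload) for handler in self._handlers.get(event, [])]


def qualify_lead(payload: dict) -> str:
    # Placeholder rule standing in for whatever a real agent would decide.
    return "qualified" if payload.get("company_size", 0) >= 50 else "nurture"


def draft_follow_up(payload: dict) -> str:
    return f"Follow-up drafted for {payload['name']}"


registry = TriggerRegistry()
registry.on("lead.created", qualify_lead)
registry.on("lead.created", draft_follow_up)
registry.on("deal.stage_changed", draft_follow_up)

# An incoming lead fires both handlers registered for "lead.created".
results = registry.emit("lead.created", {"name": "Acme", "company_size": 120})
```

In a real setup the handlers would call out to a CRM or an agent platform; the point here is only that one event can fan out to several narrowly scoped agents.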

Key highlights:

- AI agents for sales and go-to-market workflows

- Automation of research, outreach, and follow-ups

- Multi-agent setup for different tasks

- Integration with CRM and communication tools

- Event-based triggers for automation

- Gradual shift from assisted to autonomous workflows

Who it's best for:

- Sales and revenue teams

- Companies with structured pipelines

- Organizations scaling outbound and inbound efforts

- Teams looking to automate repetitive GTM tasks

Contact information:

- Website: relevanceai.com

- Twitter: x.com/RelevanceAI_

- LinkedIn: www.linkedin.com/company/relevanceai

13. CrewAI

CrewAI is built on the idea of multiple AI agents working together as one coordinated system. Rather than focusing on a single assistant, users can create groups of agents that split up and complete tasks across workflows. These agents can interact with tools, follow defined roles, and operate with a degree of autonomy.

The platform offers several ways to build and manage these systems, from visual interfaces to APIs. Another focus is control and monitoring: tracking agent performance, adjusting behavior, and keeping results consistent. It is designed more as an infrastructure layer for building agent-based workflows than as a finished tool for one particular use case.
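As a rough illustration of role-based agents handing work to one another, the sketch below uses plain Python rather than CrewAI's own library. The `Agent` and `Crew` classes here are hypothetical stand-ins, the lambdas replace real model calls, and the log is a simplified take on the monitoring described above.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Agent:
    role: str                       # e.g. "researcher", "writer"
    work: Callable[[str], str]      # stand-in for a model or tool call


@dataclass
class Crew:
    agents: List[Agent]
    log: List[str] = field(default_factory=list)

    def run(self, task: str) -> str:
        # Pass the output of each agent to the next one, in order,
        # and record every step so the run can be inspected afterwards.
        result = task
        for agent in self.agents:
            result = agent.work(result)
            self.log.append(f"{agent.role}: {result}")
        return result


researcher = Agent("researcher", lambda t: f"notes on {t}")
writer = Agent("writer", lambda t: f"draft based on {t}")
reviewer = Agent("reviewer", lambda t: f"approved: {t}")

crew = Crew([researcher, writer, reviewer])
final = crew.run("Q3 churn")
```

A sequential hand-off is only one possible arrangement; it is used here because it is the simplest way to show roles and a traceable log side by side.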

Key highlights:

- Multi-agent system for complex workflows

- Visual builder and API-based setup

- Agents interact with tools and external systems

- Workflow tracking and monitoring

- Training and guardrails for agent behavior

- Scalable deployment across teams

Who it's best for:

- Engineering and technical teams

- Companies building custom AI workflows

- Organizations that need multi-step automation

- Teams experimenting with agent-based systems

Contact information:

- Website: crewai.com

- Twitter: x.com/crewaiinc

- LinkedIn: www.linkedin.com/company/crewai-inc

14. Sierra

Sierra focuses on AI agents for customer experience, covering interactions across channels such as chat, voice, and messaging. The platform is designed to handle conversations and connect them to actions such as bookings, account updates, or service requests. The goal is to keep interactions consistent regardless of where they take place.

Another part of the system is how agents are built and improved. There are tools for defining behavior, testing scenarios, and tuning performance over time. The platform also tracks interactions and extracts insights that help improve how agents respond and behave in future conversations.

Key highlights:

- AI agents for cross-channel customer communication

- Supports chat, voice, email, and messaging platforms

- Tools for building and testing agent behavior

- Integration with external systems and data sources

- Continuous improvement based on interaction data

- Focus on consistent customer experience

Who it's best for:

- Customer support and service teams

- Companies with multi-channel communication

- Organizations with frequent customer contact

- Teams looking to automate service workflows

Contact information:

- Website: sierra.ai

- Email: security@sierra.ai

- Twitter: x.com/sierraplatform

- LinkedIn: www.linkedin.com/company/sierra

15. Moveworks

Moveworks is designed as an AI assistant platform for internal business operations. It connects to various systems across a company (HR, IT, finance, and others) and lets employees search for information or trigger actions through a single interface. The agent layer is used to handle requests, automate tasks, and reduce manual back-and-forth between teams.

Rather than focusing on one department, the system spans the whole organization. It combines search and execution, so a request can go from a question to an action without switching tools. It also supports multiple languages and integrates with a wide range of business applications, which makes it easier to roll out across different teams.

Key highlights:

- AI assistant for internal workflows and operations

- Combines search and task execution

- Works with HR, IT, finance, and other systems

- Integration with multiple business applications

- Supports multilingual environments

- Centralized interface for employee requests

Who it's best for:

- Large organizations with multiple internal systems

- Teams handling internal service requests

- Companies looking to streamline operations

- Organizations with distributed or global teams

Contact information:

- Website: www.moveworks.com

- Email: support@moveworks.com

- Twitter: x.com/moveworks

- LinkedIn: www.linkedin.com/company/moveworksai

- Address: 1400 Terra Bella Avenue, Mountain View, CA 94043

Conclusion

When you step back and look at all of this, AI agents do not really come across as one big, unified thing. They show up in different parts of the business and do quite different jobs. In one place they handle support tickets. In another they help marketing teams run campaigns or pull answers out of internal data. The idea behind them is the same, but it is applied in very practical, sometimes quite narrow ways.

There is also a pattern in how they are deployed. Most of these tools do not try to replace the way companies work. They build on what is already there: existing systems, existing processes, existing data. When things are structured enough, they usually slot in without much friction. When they are not, the limits become clear.

So it is less about the concept of "using AI agents" and more about figuring out where they actually help in day-to-day work. Usually that means the repetitive, mildly annoying tasks nobody really wants to spend time on. That is where they seem to land first. Everything else still takes a bit more thought.