Quick Summary: AI agents are autonomous systems that use artificial intelligence to complete tasks on behalf of users with minimal supervision. They combine reasoning, planning, memory, and tool use to achieve goals across diverse domains. Learning to use AI agents involves understanding their architecture, selecting the right tools and platforms, and implementing proper governance frameworks for safe deployment.

The shift from traditional AI systems to autonomous agents represents one of the most significant developments in artificial intelligence. These aren’t simple chatbots that respond to queries—they’re systems capable of pursuing complex goals, making decisions, and adapting their behavior based on context.

But here’s the thing: understanding what AI agents are is different from knowing how to actually use them. The gap between theory and practical implementation trips up even experienced teams.

This guide cuts through the complexity. It synthesizes insights from recent deployments, academic research from institutions like MIT and other leading AI research groups, and practical guidance from organizations at the forefront of agent development.

Understanding What AI Agents Actually Are

Before diving into implementation, it’s worth establishing what separates AI agents from other AI systems. The distinction matters because it shapes how these tools should be deployed.

AI agents are software systems that combine foundation models with reasoning, planning, memory, and tool use capabilities. According to research from Bin Xu (2025) on AI Agent Systems and Tula Masterman et al. on emerging AI agent architectures, these systems serve as a practical interface between natural-language intent and real-world computation.

The key differentiator? Autonomy. While traditional AI assistants wait for instructions and respond, agents can pursue goals independently. They break down complex objectives into manageable tasks, execute those tasks using available tools, and adjust their approach based on results.

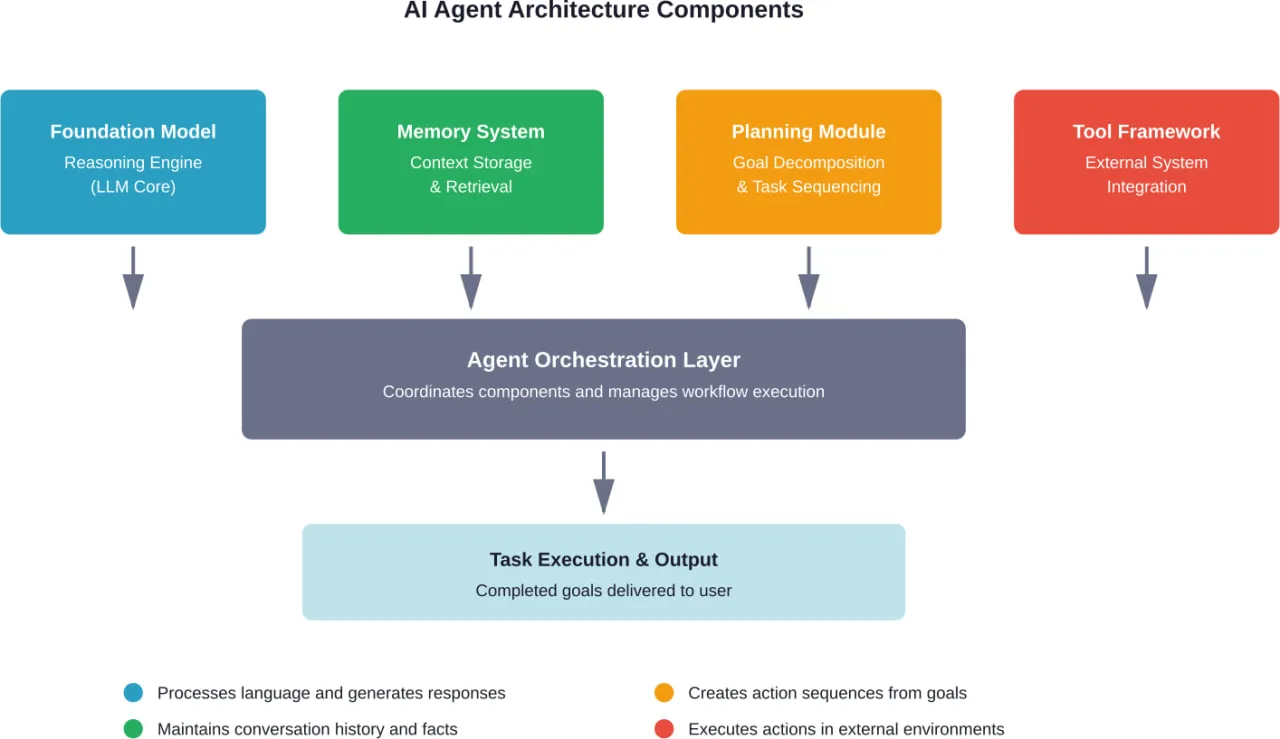

Core Components That Make Agents Work

Every functional AI agent relies on several foundational elements working in concert. Understanding these components helps clarify what’s happening under the hood.

The architecture typically includes a large language model serving as the reasoning engine, a memory system for maintaining context across interactions, a planning module that breaks goals into actionable steps, and a tool-use framework that allows the agent to interact with external systems.

Research by Bin Xu from Arizona State University (2025) on AI agent systems identifies these architectural patterns as essential for agents to deliver on their promise. Without proper memory, agents lose context. Without planning capabilities, they can’t tackle multi-step tasks. And without tool integration, they remain isolated from the systems where work actually happens.
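
The way these components fit together can be illustrated with a toy loop. This is a minimal sketch, not a production design: `MiniAgent`, `fixed_planner`, and the two lambda tools are hypothetical names, and the planner is a hard-coded function standing in for the language model that would normally produce the plan.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class MiniAgent:
    """Toy agent wiring together a planner, tools, and memory."""
    planner: Callable[[str], List[str]]              # stands in for LLM reasoning + planning
    tools: Dict[str, Callable[[str], str]]           # tool-use layer
    memory: List[str] = field(default_factory=list)  # context kept across steps

    def run(self, goal: str) -> List[str]:
        results = []
        for step in self.planner(goal):              # each step looks like "tool_name:argument"
            tool_name, _, argument = step.partition(":")
            tool = self.tools.get(tool_name)
            output = tool(argument) if tool else f"no tool named {tool_name}"
            self.memory.append(f"{step} -> {output}")  # record what happened
            results.append(output)
        return results


def fixed_planner(goal: str) -> List[str]:
    # Hypothetical stand-in: a real agent would ask a language model for this plan.
    return [f"lookup:{goal}", "summarize:lookup result"]


agent = MiniAgent(
    planner=fixed_planner,
    tools={
        "lookup": lambda query: f"3 records found for '{query}'",
        "summarize": lambda text: f"summary of {text}",
    },
)
outputs = agent.run("Q3 sales")
```

Removing any one piece shows why it matters: without `memory` nothing is recorded between steps, without the planner there are no steps, and without `tools` the steps cannot do anything.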

How Agents Differ From Assistants and Bots

The terminology around AI systems gets muddy fast. Teams often use “agent,” “assistant,” and “bot” interchangeably, but the distinctions matter for implementation.

Bots automate simple, predefined tasks or conversations. They follow rigid scripts with minimal flexibility. AI assistants help users complete tasks but require continuous human direction and approval at each step.

Agents, on the other hand, operate with genuine autonomy. Give an agent a goal—say, “analyze quarterly sales data and prepare a report”—and it determines the necessary steps, accesses required systems, handles obstacles, and delivers the finished output.

| Characteristic | Bot | AI Assistant | AI Agent |
|---|---|---|---|
| Autonomy Level | None (scripted) | Low (user-guided) | High (goal-directed) |
| Decision-Making | Rule-based only | Suggests options | Makes autonomous choices |
| Task Complexity | Single, simple tasks | Multi-step with guidance | Complex, multi-step independently |
| Learning Capability | Static | Limited adaptation | Learns and improves |
| Tool Integration | Minimal | Moderate | Extensive |

Getting Started With AI Agents

The theoretical foundation matters, but practical implementation is where most teams get stuck. The good news? Starting doesn’t require deep technical expertise or massive infrastructure investments.

Choosing Your First Use Case

Not every problem needs an AI agent. The most successful initial deployments focus on tasks that are repetitive, time-consuming, and follow reasonably consistent patterns—but still require some judgment.

Customer support provides an excellent entry point. Telecommunications company Vodafone implemented an AI agent-based support system that handles over 70% of customer inquiries without human intervention, reducing average resolution time by 47% while maintaining high customer satisfaction, according to research on AI agent evolution published in March 2025.

Other strong candidates include data analysis workflows, content generation pipelines, software testing and quality assurance, and process automation across business systems.

The pattern? Tasks where humans currently spend significant time on mechanical steps between moments of actual decision-making.

Selecting Tools and Platforms

The agent development landscape ranges from no-code platforms to sophisticated custom frameworks. The right choice depends on technical capabilities, use case complexity, and integration requirements.

For teams without extensive development resources, no-code platforms such as n8n.io offer the fastest path to a working agent, particularly for straightforward automation and integration tasks.

Teams with development capacity might consider frameworks that provide more control. OpenAI’s practical guide to building agents emphasizes composable patterns over complex frameworks—simple, well-designed components that fit together cleanly.

Anthropic’s research on building effective agents reaches a similar conclusion: the most successful implementations use straightforward patterns rather than heavyweight frameworks. Simple works.

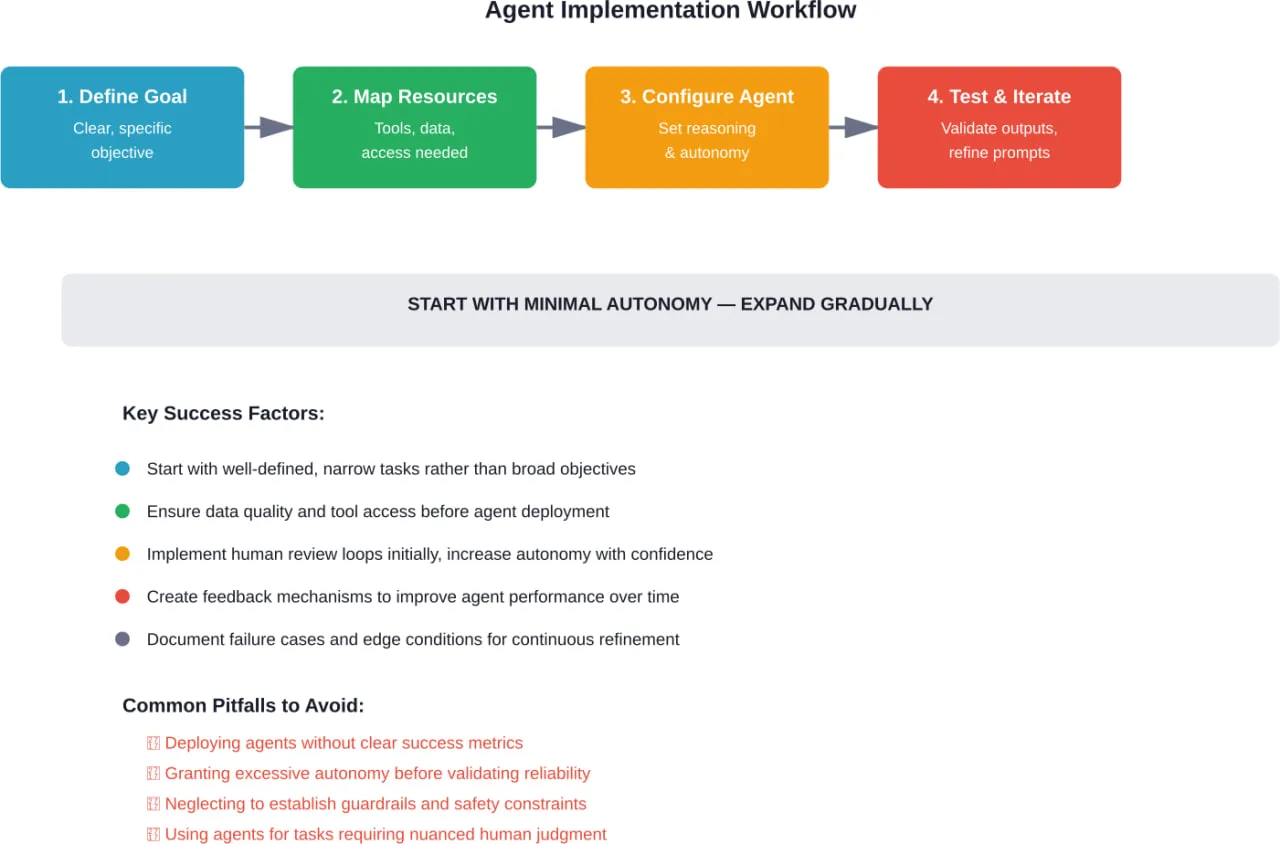

Setting Up Your First Agent

Starting simple beats starting perfect. The first agent should accomplish something useful while teaching lessons about agent behavior and limitations.

Begin by clearly defining the goal. Vague objectives produce vague results. Instead of “help with customer questions,” try “classify incoming support tickets by category and urgency, then route to the appropriate team with a summary of the issue.”
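
One way to make a goal like the ticket-routing example concrete is to write down the exact output the agent must produce. The sketch below assumes a hypothetical routing table (`ROUTES`) and uses keyword rules as a stand-in for the model call, so the structure can be tested before any model is involved.

```python
from dataclasses import dataclass
from enum import Enum


class Urgency(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass
class TriageResult:
    category: str
    urgency: Urgency
    team: str
    summary: str


# Hypothetical routing table; real team names would come from the ticketing system.
ROUTES = {"billing": "finance-support", "outage": "network-ops"}


def triage(ticket_text: str) -> TriageResult:
    """Classify, prioritize, and route a ticket. Keyword rules stand in for a model."""
    text = ticket_text.lower()
    if "down" in text or "outage" in text:
        category = "outage"
    elif "invoice" in text or "charge" in text:
        category = "billing"
    else:
        category = "general"
    urgency = Urgency.HIGH if category == "outage" or "urgent" in text else Urgency.LOW
    team = ROUTES.get(category, "general-support")
    summary = ticket_text.strip()[:80]   # short summary for the receiving team
    return TriageResult(category, urgency, team, summary)


result = triage("Internet is down since this morning")
```

Every field in `TriageResult` maps to a phrase in the goal statement, which makes it easy to see when the goal is underspecified.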

Next, identify the tools and data sources the agent needs. Can it access the ticketing system? Does it have historical ticket data to learn patterns? What external knowledge bases might help?

Then configure the agent’s reasoning approach. Yao et al. (2022) compared the ReAct method, which interleaves reasoning traces with task-specific actions, against standard chain-of-thought (CoT) prompting on the HotpotQA dataset. Among correctly answered questions, 6% of ReAct trajectories contained hallucinated reasoning or facts, compared with 14% for CoT.
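
The ReAct pattern itself is a simple loop: the model emits a thought and an action, the action runs, and the observation is fed back in. The sketch below is an illustration under assumptions, not the paper's implementation. The `Action: name[argument]` text format, `react_loop`, and `scripted_model` are hypothetical, and the scripted function replaces a real language model.

```python
from typing import Callable, Dict


def react_loop(question: str,
               model: Callable[[str], str],
               tools: Dict[str, Callable[[str], str]],
               max_steps: int = 5) -> str:
    """Alternate model output (thought + action) with tool observations."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        reply = model(transcript)                  # "Thought: ...\nAction: name[argument]"
        transcript += reply + "\n"
        action_line = reply.strip().splitlines()[-1]
        action = action_line.split("Action: ", 1)[-1]
        name, _, rest = action.partition("[")
        argument = rest.rstrip("]")
        if name == "finish":                       # the model signals it has an answer
            return argument
        observation = tools[name](argument) if name in tools else "unknown tool"
        transcript += f"Observation: {observation}\n"
    return "no answer within step limit"


def scripted_model(transcript: str) -> str:
    # Hypothetical stand-in for a language model.
    if "Observation:" not in transcript:
        return "Thought: I need to look this up.\nAction: search[capital of France]"
    return "Thought: The observation answers the question.\nAction: finish[Paris]"


answer = react_loop(
    "What is the capital of France?",
    scripted_model,
    {"search": lambda query: "Paris is the capital of France."},
)
```

Because every thought, action, and observation lands in `transcript`, a failed run leaves a readable trail to debug.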

Start with conservative autonomy settings. Let the agent draft responses for human review rather than sending them directly. Gradually increase autonomy as confidence builds.

Put AI Agents Into Practice Without Rebuilding Your Team

Guides explain how to use AI agents, but implementation usually comes down to execution – connecting systems, handling data, and making sure everything works beyond a test setup.

A-listware provides development teams that support this stage with backend, integrations, and full-cycle software development. The company works as an extension of your team, covering everything from setup to ongoing support, so you can focus on how AI agents are used rather than how the system is built.

If you are moving from guidance to actual implementation, contact A-listware to support development, integration, and system rollout.

Designing Effective Agent Workflows

Random experimentation produces random results. Effective agent deployment requires intentional workflow design that accounts for how agents actually behave.

Breaking Down Complex Goals

Agents handle complex tasks by decomposing them into manageable subtasks. But the agent needs enough context to perform that decomposition correctly.

When defining goals, include relevant constraints, success criteria, and available resources. Instead of “create a marketing report,” try “analyze last quarter’s campaign performance data from the analytics dashboard, identify the top 3 performing channels by ROI, and create a summary report with specific metrics and recommendations for next quarter’s budget allocation.”

The specificity helps the agent plan effectively. Vague goals force the agent to guess at intent, which rarely ends well.
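
A lightweight way to enforce this specificity is to treat the goal as structured data rather than a free-form sentence. The `GoalSpec` class below is a hypothetical sketch; the field names and prompt layout are assumptions chosen to mirror the constraints, success criteria, and resources described above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GoalSpec:
    objective: str
    constraints: List[str]
    success_criteria: List[str]
    resources: List[str]

    def to_prompt(self) -> str:
        """Render the goal as a plain-text brief an agent could be given."""
        def section(title: str, items: List[str]) -> str:
            return title + ":\n" + "\n".join(f"- {item}" for item in items)

        return "\n\n".join([
            f"Objective: {self.objective}",
            section("Constraints", self.constraints),
            section("Success criteria", self.success_criteria),
            section("Available resources", self.resources),
        ])


spec = GoalSpec(
    objective="Analyze last quarter's campaign performance and recommend budget changes",
    constraints=["Use only data from last quarter"],
    success_criteria=["Top 3 channels by ROI identified", "Specific metrics included"],
    resources=["analytics dashboard"],
)
prompt = spec.to_prompt()
```

An empty list in any field is an immediate signal that the goal still leaves the agent guessing.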

Context Engineering for Agents

According to Anthropic’s September 29, 2025 post on context engineering for AI agents, context has become a critical but finite resource. How context gets managed dramatically affects agent performance.

The challenge? Foundation models have token limits. An agent working on a complex task might need to process extensive background information, tool documentation, intermediate results, and conversation history—all competing for limited context space.

Effective context engineering strategies include using subagents for deep technical work that returns condensed summaries rather than full output. Research from Anthropic shows subagents might explore extensively using tens of thousands of tokens or more, but return only 1,000-2,000 tokens of distilled insights to the main agent.
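
The subagent pattern can be sketched in a few lines. In this hedged example, `run_subagent`, `fake_explore`, and `fake_summarize` are hypothetical, and a character budget is used as a crude stand-in for a token budget. The point is only that the main agent's context receives the condensed result, never the raw exploration.

```python
from typing import Callable, List


def run_subagent(task: str,
                 explore: Callable[[str], List[str]],
                 summarize: Callable[[List[str]], str],
                 budget_chars: int = 200) -> str:
    """Explore at length, then hand back only a condensed summary."""
    findings = explore(task)                   # potentially very large
    return summarize(findings)[:budget_chars]  # hard cap on what is returned


def fake_explore(task: str) -> List[str]:
    # Stand-in for extensive tool use by the subagent.
    return [f"{task}: log line {i}" for i in range(500)]


def fake_summarize(findings: List[str]) -> str:
    # Stand-in for a model-written summary.
    return f"{len(findings)} items reviewed; first: {findings[0]}"


main_context = ["Goal: find the cause of the checkout errors"]
main_context.append(run_subagent("checkout errors", fake_explore, fake_summarize))
```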

Another approach involves implementing selective memory systems that retain critical information while discarding routine details. Not every intermediate step needs permanent storage.
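
A selective memory can be as simple as a filter applied before anything is stored. The sketch below assumes each item carries an importance score from 1 (routine) to 5 (critical). That scoring scheme and the `compact` helper are illustrative assumptions; in practice the score might come from a model or from rules.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MemoryItem:
    text: str
    importance: int  # 1 (routine) to 5 (critical); an assumed scale


def compact(items: List[MemoryItem], keep_at_least: int = 4) -> List[MemoryItem]:
    """Keep only items at or above the importance threshold, in original order."""
    return [item for item in items if item.importance >= keep_at_least]


history = [
    MemoryItem("Opened the ticketing system", 1),
    MemoryItem("Customer is on the enterprise plan", 5),
    MemoryItem("Retried a timed-out request", 2),
    MemoryItem("Root cause: expired API key", 4),
]
retained = compact(history)
```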

Tool Design and Integration

Agents are only as capable as the tools available to them. Well-designed tools dramatically expand what agents can accomplish; poorly designed ones create frustration and failure.

Anthropic’s guidance on writing effective tools for agents emphasizes several key principles. Tools should have clear, descriptive names that communicate purpose. Documentation must explain not just what the tool does but when to use it and what its limitations are.

Tool responses should be configurable in terms of detail level. Some situations need comprehensive output; others benefit from concise summaries. Exposing a simple response format parameter lets agents control whether tools return “concise” or “detailed” responses based on current needs.
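
A response format parameter of this kind might look like the following. This is a sketch under assumptions: `get_order`, the `ORDERS` data, and the parameter name `response_format` are hypothetical, and the docstring doubles as the tool documentation an agent would read.

```python
from typing import Literal

# Hypothetical in-memory data standing in for a real order system.
ORDERS = {
    "A-1001": {"status": "shipped", "carrier": "ExampleExpress", "eta_days": 2},
}


def get_order(order_id: str,
              response_format: Literal["concise", "detailed"] = "concise") -> str:
    """Look up an existing order.

    Use when the user asks about the status of an order they already placed.
    Limitations: read-only; knows only the orders present in ORDERS.
    """
    order = ORDERS.get(order_id)
    if order is None:
        # An actionable error helps the agent recover instead of retrying blindly.
        return f"Order {order_id} not found. Check the ID format, e.g. A-1001."
    if response_format == "concise":
        return f"{order_id}: {order['status']}"
    details = ", ".join(f"{key}={value}" for key, value in order.items())
    return f"{order_id}: {details}"


short = get_order("A-1001")
full = get_order("A-1001", response_format="detailed")
```

Defaulting to `"concise"` keeps routine calls cheap in context, while the detailed form stays one argument away.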

The Model Context Protocol provides a standardized way to connect agents with potentially hundreds of tools. But quantity doesn’t replace quality—a few well-designed, reliable tools outperform dozens of flaky ones.

Managing Agent Autonomy and Safety

Autonomy creates value and risk simultaneously. Agents that can’t act independently don’t save much time. Agents with unconstrained autonomy can cause significant problems.

Establishing Guardrails

Every agent deployment needs guardrails—constraints that prevent harmful actions while allowing beneficial ones. The specifics depend on the use case, but some patterns apply broadly.

Define explicit boundaries around what the agent can and cannot do. In customer service contexts, agents might be allowed to provide information and troubleshooting but forbidden from processing refunds above certain thresholds without human approval.

Implement validation layers for high-impact actions. Before an agent sends an email to thousands of customers or modifies production systems, require verification either from another agent or a human reviewer.
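
Boundaries and validation layers like these can be expressed as a check that runs before any action executes. In the sketch below, the threshold value, the action names, and the `check_action` helper are all hypothetical. The important property is that anything not explicitly allowed is escalated rather than executed.

```python
from dataclasses import dataclass

REFUND_AUTO_LIMIT = 50.0  # hypothetical threshold; set per business policy


@dataclass
class Decision:
    allowed: bool
    needs_human: bool
    reason: str


def check_action(action: str, amount: float = 0.0) -> Decision:
    """Decide whether the agent may act alone, or must escalate."""
    if action in {"answer_question", "troubleshoot"}:
        return Decision(True, False, "informational action")
    if action == "refund":
        if amount <= REFUND_AUTO_LIMIT:
            return Decision(True, False, "refund within automatic limit")
        return Decision(False, True, "refund above limit; escalate for approval")
    # Default-deny: unknown actions are never executed automatically.
    return Decision(False, True, "action not on the allowlist")


small_refund = check_action("refund", amount=20.0)
large_refund = check_action("refund", amount=500.0)
```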

According to OpenAI’s February 23, 2026 guide on building governed AI agents, successful enterprise deployments balance innovation pressure with risk management through structured guardrails and scaffolding approaches.

Risk Assessment for Autonomous Action

Not every task carries equal risk. Agents analyzing internal reports pose different challenges than agents interacting directly with customers or modifying operational systems.

Microsoft’s guidance on AI agents emphasizes assessing risk before granting autonomy. Low-risk tasks—data analysis, report generation, internal research—can often run with minimal oversight. High-risk tasks—financial transactions, customer communications, system modifications—need tighter controls.

The assessment should consider both probability and impact. What could go wrong? How likely is it? What happens if it does?
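
A common way to combine probability and impact is to multiply them into a single score and map the score to an oversight level. The scale and cut-off values below are illustrative assumptions, not figures from any of the cited guidance.

```python
def risk_score(probability: float, impact: int) -> float:
    """probability is in [0, 1]; impact is on an assumed 1-5 scale."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if impact not in range(1, 6):
        raise ValueError("impact must be an integer from 1 to 5")
    return probability * impact


def oversight_level(score: float) -> str:
    # Cut-offs are hypothetical and should be calibrated per organization.
    if score < 0.5:
        return "minimal oversight"
    if score < 2.0:
        return "periodic audit"
    return "human approval required"


report_generation = oversight_level(risk_score(probability=0.1, impact=2))
payment_transfer = oversight_level(risk_score(probability=0.5, impact=5))
```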

Human-in-the-Loop Patterns

Many successful agent deployments use hybrid approaches where agents handle routine elements while humans manage exceptions and high-stakes decisions.

The agent performs initial work—gathering information, drafting responses, analyzing data—then presents results to a human for review and approval. This captures most of the efficiency gains while maintaining human oversight where it matters most.

As confidence builds and performance data accumulates, the threshold for human review can shift. Tasks that initially required approval might transition to automated execution with periodic audits.
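
The shifting review threshold can be sketched as a small gate that routes work based on a confidence score and relaxes only after enough approved reviews. The `ReviewGate` class, its default numbers, and the idea of a single confidence score per task are assumptions made for illustration.

```python
class ReviewGate:
    """Route agent output to a human until the track record justifies less review."""

    def __init__(self, threshold: float = 0.9):
        self.threshold = threshold   # confidence needed to skip human review
        self.reviewed = 0
        self.approved = 0

    def route(self, confidence: float) -> str:
        return "auto" if confidence >= self.threshold else "human_review"

    def record_review(self, approved: bool) -> None:
        self.reviewed += 1
        self.approved += int(approved)

    def relax_if_earned(self, min_reviews: int = 20, min_approval_rate: float = 0.95,
                        step: float = 0.05, floor: float = 0.6) -> None:
        """Lower the threshold one step, but only with enough evidence."""
        if self.reviewed >= min_reviews and self.approved / self.reviewed >= min_approval_rate:
            self.threshold = round(max(floor, self.threshold - step), 2)


gate = ReviewGate()
before = gate.route(0.87)          # below the initial threshold
for _ in range(20):
    gate.record_review(approved=True)
gate.relax_if_earned()
after = gate.route(0.87)           # same confidence, after a clean track record
```

The `floor` parameter keeps some human review in place no matter how good the history looks, which pairs naturally with periodic audits.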

Advanced Agent Architectures

Basic single-agent systems handle many use cases effectively. But some problems benefit from more sophisticated architectural patterns.

Multi-Agent Systems

Complex workflows sometimes benefit from multiple specialized agents rather than one generalist. A main coordinator agent delegates subtasks to specialist agents optimized for specific functions.

One agent might excel at data extraction and analysis. Another specializes in generating written content. A third handles external API interactions. The coordinator manages the overall workflow, directing work to appropriate specialists and synthesizing their outputs.

Research on emerging AI agent architectures describes these patterns and their trade-offs. Multi-agent systems add complexity but can improve performance when subtasks have distinctly different requirements.
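
The coordinator pattern reduces to a dispatch table plus a synthesis step. In this minimal sketch the specialists are plain functions and the plan is fixed; `coordinate` and the role names are hypothetical, and a real coordinator would typically ask a model to produce the plan.

```python
from typing import Callable, Dict, List, Tuple

Specialist = Callable[[str], str]


def coordinate(plan: List[Tuple[str, str]], specialists: Dict[str, Specialist]) -> str:
    """Send each (role, subtask) pair to its specialist and combine the outputs."""
    outputs = []
    for role, subtask in plan:
        if role not in specialists:
            raise KeyError(f"no specialist registered for role '{role}'")
        outputs.append(f"[{role}] {specialists[role](subtask)}")
    return "\n".join(outputs)   # simplest possible synthesis step


specialists = {
    "analyst": lambda task: f"analysis complete for {task}",
    "writer": lambda task: f"draft written for {task}",
}
report = coordinate(
    [("analyst", "Q3 sales data"), ("writer", "Q3 summary")],
    specialists,
)
```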

Memory and Learning Systems

Basic agents operate within the context window of their foundation model. More sophisticated implementations add persistent memory systems that accumulate knowledge over time.

Short-term memory holds conversation history and immediate context. Long-term memory stores facts, preferences, and learned patterns that persist across sessions. Semantic memory provides conceptual knowledge, while episodic memory captures specific past interactions.

These memory architectures let agents improve through experience rather than starting fresh each time.
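
The split between short-term and long-term memory can be illustrated with two containers that have different lifetimes. This sketch assumes a bounded buffer for recent messages and a plain dictionary for persistent facts. `AgentMemory` is a hypothetical class, and real systems often back long-term memory with a database or vector store.

```python
from collections import deque
from typing import Dict, List


class AgentMemory:
    def __init__(self, short_term_size: int = 3):
        self.short_term = deque(maxlen=short_term_size)  # recent turns; oldest drop off
        self.long_term: Dict[str, str] = {}              # facts that survive sessions

    def observe(self, message: str) -> None:
        self.short_term.append(message)

    def remember(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def new_session(self) -> None:
        self.short_term.clear()                          # long-term facts are kept

    def build_context(self) -> List[str]:
        facts = [f"{key}: {value}" for key, value in sorted(self.long_term.items())]
        return facts + list(self.short_term)


memory = AgentMemory(short_term_size=2)
memory.remember("preferred_language", "English")
for turn in ["hello", "my order is late", "order A-1001"]:
    memory.observe(turn)
context_now = memory.build_context()
memory.new_session()
context_next_session = memory.build_context()
```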

Reasoning Strategies

How agents think through problems significantly impacts their effectiveness. Different reasoning approaches suit different task types.

ReAct combines reasoning and acting by having agents explicitly articulate their thought process alongside actions. This transparency helps debug failures and reduces hallucinations.

Chain-of-thought prompting breaks complex reasoning into sequential steps. Tree-of-thought approaches explore multiple reasoning paths in parallel before selecting the most promising.

The choice depends on task structure. Sequential problems benefit from chain-of-thought. Tasks with multiple valid approaches might use tree-of-thought exploration.

Real-World Agent Applications

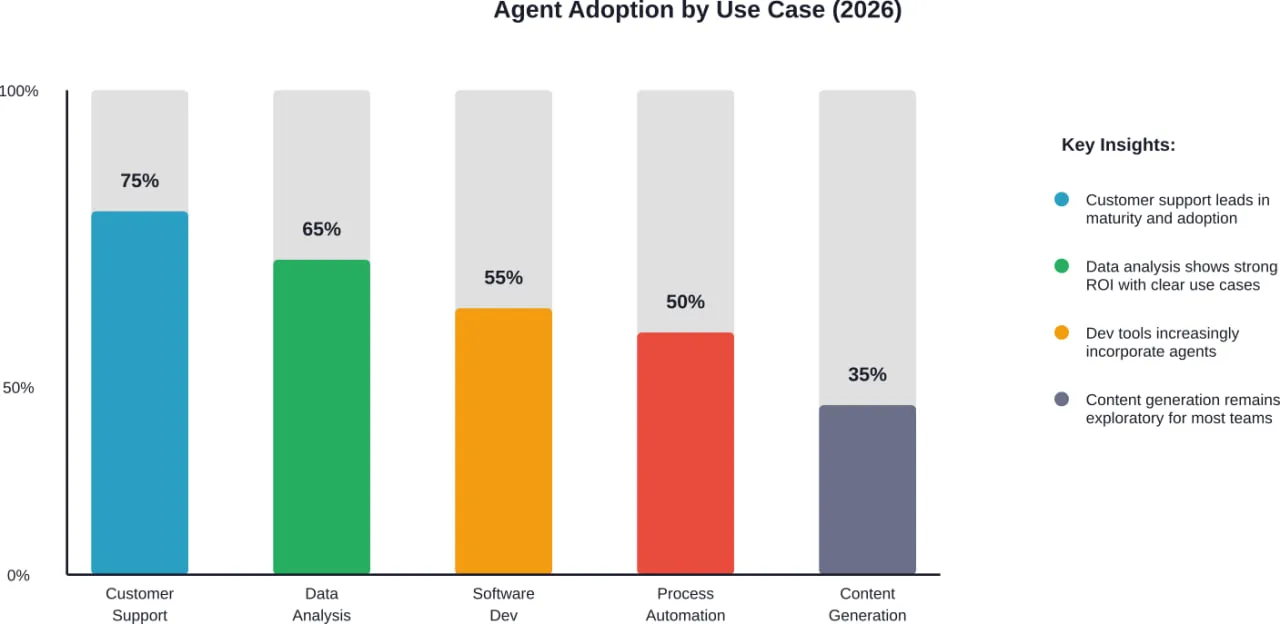

Theory matters less than results. What are organizations actually using agents for, and what outcomes are they seeing?

Customer Support and Service

Customer support represents one of the most mature agent deployment areas. Agents handle common inquiries, perform troubleshooting, and escalate complex issues to human agents with full context.

The Vodafone implementation handling over 70% of customer inquiries demonstrates the potential scale. These aren’t simple FAQ bots—they’re systems capable of understanding context, accessing customer records, diagnosing problems, and providing personalized assistance.

The key success factor? Starting with clear, well-defined use cases rather than attempting to automate all customer service at once.

Data Analysis and Reporting

Agents excel at tasks involving data gathering, analysis, and synthesis. They can pull information from multiple sources, identify patterns, perform calculations, and generate formatted reports—work that consumes significant human time despite being largely mechanical.

Teams deploy agents to create daily operational dashboards, analyze sales performance, monitor system metrics, and prepare executive summaries. The agent handles the repetitive data work; humans focus on interpretation and decision-making.

Software Development Assistance

Development workflows increasingly incorporate agents for code review, testing, documentation generation, and bug investigation. According to OpenAI’s Codex best practices documentation, Codex reviews 100% of pull requests at OpenAI.

These agents don’t replace developers. They accelerate workflows by handling routine code quality checks, identifying potential issues, suggesting improvements, and generating test cases.

Process Automation Across Systems

Agents that can interact with multiple business systems enable end-to-end process automation. An agent might gather data from a CRM, enrich it with information from a database, perform analysis, generate a report, and distribute results to stakeholders—all without human intervention.

The integration capability distinguishes agents from simpler automation tools. They can handle variations and exceptions rather than breaking when conditions don’t match rigid scripts.

Practical Considerations and Best Practices

Implementation details separate successful deployments from failed experiments. Several patterns emerge consistently from organizations getting real value from agents.

Start Small and Iterate

The temptation to automate everything immediately is strong. Resist it. Teams that succeed with agents typically start with a narrow, well-defined use case, validate effectiveness, and gradually expand scope.

This approach builds organizational confidence while generating concrete data about agent capabilities and limitations in the specific environment. Lessons learned on small deployments inform better decisions for larger ones.

Measure What Matters

Define success metrics before deployment. How will effectiveness be evaluated? Time saved? Error rate? User satisfaction? Cost reduction?

Without clear metrics, teams can’t distinguish successful agents from failing ones until problems become obvious. Better to establish measurement frameworks upfront and track performance systematically.

Plan for Monitoring and Maintenance

Agents aren’t set-and-forget systems. They require ongoing monitoring to ensure continued effectiveness. Performance degrades when underlying data changes, tools get updated, or requirements shift.

Successful deployments include logging and observability systems that track agent actions, decisions, and outcomes. When problems occur, detailed logs enable quick diagnosis and resolution.
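
Structured logs are easier to search and aggregate than free text. The sketch below writes one JSON record per agent action to an in-memory list. The field names and the `log_event` helper are assumptions, and a real deployment would send these records to its existing logging or observability stack.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, List


def log_event(log: List[str], agent: str, action: str, outcome: str,
              **details: Any) -> Dict[str, Any]:
    """Append one JSON line describing what the agent did and how it ended."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "outcome": outcome,
        **details,
    }
    log.append(json.dumps(record, sort_keys=True))
    return record


audit_log: List[str] = []
log_event(audit_log, agent="support-agent", action="route_ticket",
          outcome="success", ticket_id="T-42", team="network-ops")
log_event(audit_log, agent="support-agent", action="send_reply",
          outcome="escalated", ticket_id="T-43")

# Diagnosis later: pull out every escalation.
escalated = [json.loads(line) for line in audit_log
             if json.loads(line)["outcome"] == "escalated"]
```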

Build Feedback Loops

The best agents improve over time based on real-world performance. Building feedback mechanisms—from users, from reviewers, from outcome measurements—lets agents learn what works and what doesn’t.

These feedback loops can be automated where appropriate. Track which agent responses lead to successful outcomes versus escalations. Use that data to refine prompts, adjust tools, or modify workflows.
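
Tracking outcomes against escalations can start with a simple tally per prompt or workflow version. In this sketch the version labels and outcome strings are hypothetical; the data would normally come from the agent's logs.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def escalation_rates(events: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Share of interactions that were escalated, per version label."""
    totals: Counter = Counter()
    escalations: Counter = Counter()
    for version, outcome in events:
        totals[version] += 1
        if outcome == "escalated":
            escalations[version] += 1
    return {version: escalations[version] / totals[version] for version in totals}


events = [
    ("prompt-v1", "resolved"),
    ("prompt-v1", "escalated"),
    ("prompt-v2", "resolved"),
    ("prompt-v2", "resolved"),
]
rates = escalation_rates(events)
```

A lower rate for a newer version is a hint rather than proof: with small samples the difference may be noise, so volume should be checked before rolling a change out widely.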

Documentation and Knowledge Sharing

As organizations deploy multiple agents across different teams, centralized documentation becomes critical. What agents exist? What do they do? How should they be used? What are their limitations?

Without this knowledge sharing, teams waste time solving problems others have already addressed or deploying agents in inappropriate contexts because they don’t understand constraints.

The Path Forward With AI Agents

AI agents represent a fundamental shift in how work gets done. But the technology remains young, with capabilities and best practices still evolving rapidly.

Organizations seeing success focus on practical value over hype. They choose appropriate use cases, implement thoughtful guardrails, measure real outcomes, and iterate based on results.

The agents that deliver value today handle well-defined tasks where autonomy provides clear benefits and risks remain manageable. As capabilities advance and organizational experience deepens, the range of effective applications will expand.

But the core principles won’t change. Agents need clear goals, appropriate tools, proper constraints, and ongoing refinement. Teams that master these fundamentals position themselves to extract value as agent technology matures.

The question isn’t whether agents will transform work—they already are. The question is whether organizations will deploy them thoughtfully or haphazardly. The difference determines whether agents become genuine productivity multipliers or expensive distractions.

Start with one well-chosen use case. Build incrementally. Measure rigorously. Learn continuously. That’s how effective agent adoption actually happens.

Frequently Asked Questions

- What’s the difference between an AI agent and ChatGPT?

ChatGPT is an AI assistant that responds to prompts and requires continuous human direction for each step. AI agents operate autonomously—they pursue goals, make decisions, use tools, and complete multi-step tasks with minimal human oversight. Agents can access external systems, maintain memory across sessions, and adapt their approach based on results, while ChatGPT primarily generates text responses to user queries within a single conversation context.

- Do I need coding skills to use AI agents?

Not necessarily. No-code platforms like n8n.io and various agent-building tools let users create functional agents through visual interfaces without writing code. However, more complex implementations—custom tool integrations, sophisticated workflows, or specialized reasoning approaches—typically benefit from development capabilities. The technical requirements scale with use case complexity and customization needs.

- How much do AI agents cost to implement?

No-code platforms like n8n.io offer free tiers, with paid plans starting at $20/month for the platform itself. Custom implementations incur development costs plus infrastructure and API expenses for the underlying foundation models. Many organizations start with low-cost experiments on existing platforms before investing in custom solutions. Check specific platform websites for current pricing as costs change frequently.

- Are AI agents safe to use in production environments?

Safety depends entirely on implementation quality and appropriate guardrails. Agents deployed with proper constraints, validation layers, and monitoring can operate safely in production for appropriate use cases. High-risk applications require more stringent controls—human review loops, extensive testing, and careful risk assessment. Organizations should start with low-risk use cases, establish safety frameworks, and gradually expand to more critical applications as confidence builds.

- Can AI agents learn and improve over time?

Agents can improve through several mechanisms. Memory systems let them accumulate knowledge across interactions. Feedback loops enable refinement of prompts, tools, and workflows based on performance data. Some architectures incorporate explicit learning components that adapt behavior based on outcomes. However, agents don’t automatically improve—improvement requires intentional design of learning mechanisms, feedback collection, and systematic refinement processes.

- What happens when an AI agent makes a mistake?

Mistake handling depends on the agent’s configuration and the deployment architecture. Well-designed systems include error detection, graceful failure modes, and escalation paths to human reviewers when the agent encounters situations beyond its capabilities. Logging and monitoring systems capture mistakes for analysis and learning. Organizations should design workflows assuming mistakes will occur and implement appropriate safeguards rather than expecting perfect performance.

- Which industries benefit most from AI agents?

Customer service, technology, finance, healthcare, and operations-intensive industries show strong agent adoption. However, benefit correlates more with task characteristics than industry. Any domain with repetitive, time-consuming workflows that require some judgment but follow reasonably consistent patterns can benefit from agents. The key is identifying specific use cases where autonomy adds value rather than attempting to apply agents universally across an entire industry.

Conclusion

AI agents mark a significant evolution in artificial intelligence—from tools that respond to commands toward systems that autonomously pursue goals. Organizations across industries are discovering practical applications for agents in customer service, data analysis, software development, and process automation.

Success with agents requires understanding their fundamental architecture, selecting appropriate use cases, implementing thoughtful guardrails, and committing to continuous refinement. The technology delivers real value when deployed strategically and measured rigorously.

The path forward involves starting with narrow, well-defined applications, building organizational expertise through hands-on experience, and gradually expanding scope as capabilities and confidence grow.

Ready to implement your first AI agent? Begin by identifying one repetitive, time-consuming workflow in your organization. Define clear success metrics, select an appropriate platform or framework, and build a minimal viable agent. Measure results, gather feedback, and iterate. That’s how effective agent adoption happens—one practical application at a time.