Quick Summary: AI agents are autonomous software systems that use large language models to independently perform tasks, make decisions, and pursue goals without constant human oversight. They combine reasoning capabilities, memory, tool usage, and environmental perception to break down complex problems into steps, execute actions, and adapt based on feedback, functioning more like digital assistants that can plan and act than systems that only respond to prompts.

The shift from chatbots that answer questions to agents that actually do things represents one of the biggest leaps in artificial intelligence. But what’s happening under the hood?

AI agents aren’t just smarter chatbots. They’re systems designed to perceive their environment, reason through problems, make decisions, and take actions—all with varying degrees of autonomy. Understanding how they work means looking at their architecture, the reasoning paradigms they employ, and the mechanisms that let them interact with tools and data.

What Makes an AI Agent Different from Other AI Systems

According to IBM, an AI agent is a system that autonomously performs tasks by designing workflows with available tools. This autonomy is the key differentiator.

Traditional AI systems wait for prompts and respond. Agents, however, can initiate actions, plan multi-step workflows, and pursue goals over extended periods. Google Cloud defines AI agents as software systems that use AI to pursue goals and complete tasks on behalf of users, showing reasoning, planning, memory, and a level of autonomy to make decisions, learn, and adapt.

Here's what sets them apart:

- Autonomy: Agents can operate with minimal human intervention, making decisions based on their programming and environmental feedback.

- Goal-oriented behavior: Rather than just responding, agents work toward defined objectives.

- Environmental interaction: Agents perceive their surroundings (data sources, APIs, user inputs) and act upon them.

- Reasoning and planning: They break complex tasks into manageable steps and execute them sequentially or adaptively.

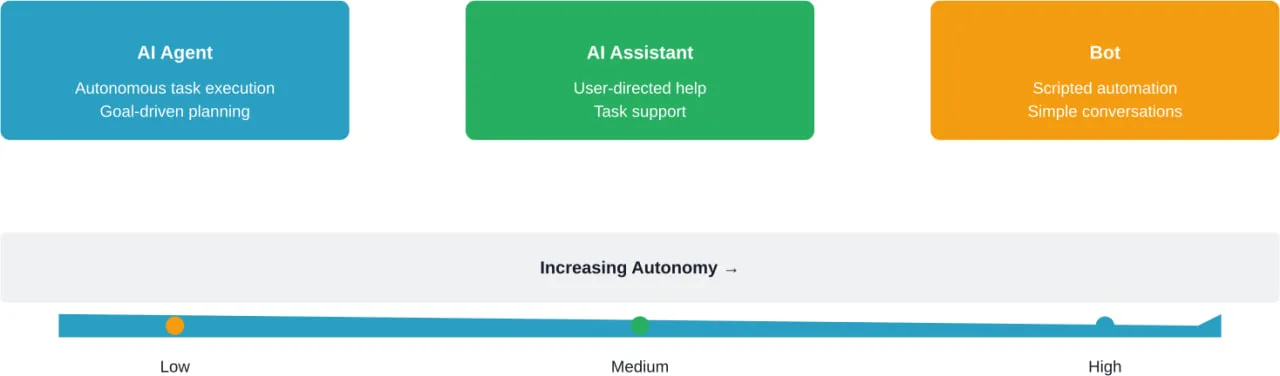

The distinction between agents, assistants, and bots matters. Assistants help users complete tasks but require direction. Bots automate simple, scripted interactions. Agents can perform complex tasks autonomously and adapt their approach based on outcomes.

The Core Architecture of AI Agents

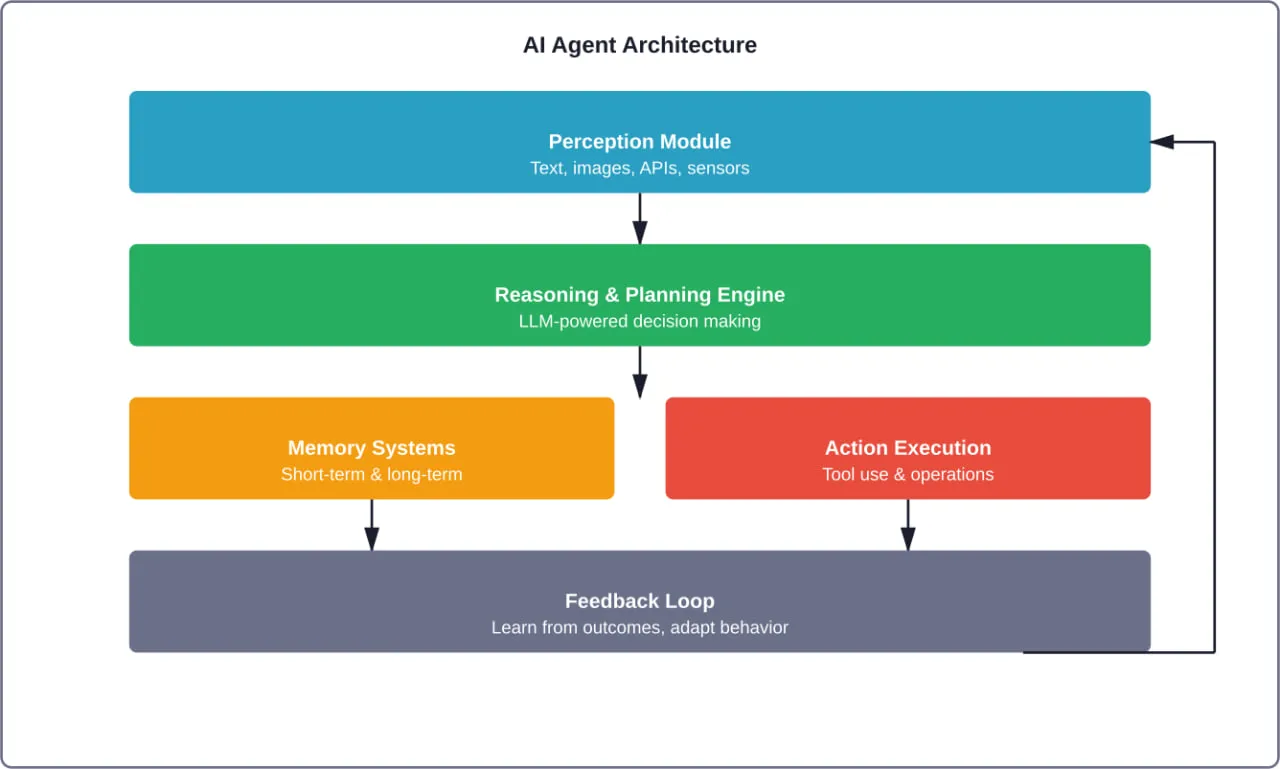

At the foundation, AI agents typically consist of several interconnected components that work together to enable autonomous behavior.

Perception Module

Agents need to understand their environment. The perception module processes inputs—text, images, audio, sensor data, API responses, or database queries. Multimodal capacity in foundation models allows agents to process diverse data types simultaneously.

This is where generative AI’s multimodal capabilities shine. Agents can analyze documents, interpret images, listen to audio, and combine these inputs to form a comprehensive understanding of the situation.

Reasoning and Planning Engine

Once the agent perceives its environment, it needs to decide what to do. The reasoning engine—often powered by large language models (LLMs)—analyzes the current state, compares it against goals, and formulates a plan.

Recent research posted on arXiv highlights hierarchical decision-making frameworks. The “Agent-as-Tool” study (arXiv:2507.01489) proposes detaching the tool-calling process from the reasoning process. This lets the model focus on verbal reasoning while another agent handles tool execution, achieving comparable or better performance.

Reasoning paradigms vary:

- Chain-of-thought reasoning: Breaking problems into sequential steps

- Hierarchical reasoning: Organizing decisions in layers, with high-level strategy and low-level execution

- Reinforcement learning-augmented reasoning: Using feedback loops to improve decision quality over time

According to arXiv paper 2512.24609, reinforcement learning-augmented LLM agents improve collaborative decision-making and performance optimization. LLMs perform well in language tasks but often struggle with complex sequential decisions—reinforcement learning addresses this gap.

Memory Systems

Memory distinguishes reactive bots from truly autonomous agents. Agents maintain both short-term (working) memory and long-term memory.

Short-term memory holds the current context—recent interactions, intermediate results, and task state. Long-term memory stores learned patterns, past decisions, successful strategies, and domain knowledge.

This allows agents to learn from experience and adapt their behavior. An agent that failed at a task can recall what went wrong and try a different approach.
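The split between working memory and long-term memory can be sketched in a few lines. This is a minimal illustration, not how any particular framework stores memory: the `AgentMemory` class, its method names, and the three-item buffer size are all assumptions made for the example.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    """Toy memory: a bounded working buffer plus a persistent outcome log."""
    short_term: deque = field(default_factory=lambda: deque(maxlen=3))
    long_term: dict = field(default_factory=dict)

    def observe(self, event: str) -> None:
        # Working memory keeps only the most recent events; older ones drop off.
        self.short_term.append(event)

    def record_outcome(self, task: str, approach: str, succeeded: bool) -> None:
        # Long-term memory keeps every attempt so failures can be recalled later.
        self.long_term.setdefault(task, []).append((approach, succeeded))

    def failed_approaches(self, task: str) -> list:
        return [approach for approach, ok in self.long_term.get(task, []) if not ok]


memory = AgentMemory()
for step in ["open file", "parse header", "parse rows", "write summary"]:
    memory.observe(step)
memory.record_outcome("parse report", "regex", succeeded=False)
memory.record_outcome("parse report", "csv module", succeeded=True)

print(list(memory.short_term))                  # the three most recent events
print(memory.failed_approaches("parse report"))  # approaches to avoid next time
```

Before retrying a task, an agent built this way could check `failed_approaches` and skip anything that already went wrong.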

Action Execution and Tool Use

Agents don’t just think—they act. The action execution layer translates decisions into concrete operations: calling APIs, querying databases, writing code, sending messages, or controlling external systems.

Tool use is critical. OpenAI’s practical guide to building agents emphasizes that agents can define, select, and run workflows using available tools. Tools might include:

- Search engines for information retrieval

- Code interpreters for running calculations

- Database connectors for querying structured data

- External APIs for integrating third-party services

- Machine learning models for specialized predictions

The ToolUniverse framework from Harvard’s Kempner Institute provides an environment where LLMs interact with more than six hundred scientific tools, including machine learning models, databases, and simulators. Standardizing how AI models access and combine tools enables more sophisticated “AI scientist” agents.
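To make tool use concrete, here is a rough sketch of a tool registry with a dispatch function. It does not reflect the API of OpenAI's tooling or ToolUniverse; the `tool` decorator, `run_tool`, and the two sample tools are hypothetical names invented for illustration.

```python
from typing import Callable, Dict

# Hypothetical registry: maps a tool name to the function that implements it.
TOOLS: Dict[str, Callable[..., object]] = {}


def tool(name: str):
    """Register a function under a name the agent can select."""
    def decorator(fn):
        TOOLS[name] = fn
        return fn
    return decorator


@tool("calculator")
def add(a: float, b: float) -> float:
    return a + b


@tool("lookup")
def lookup(key: str) -> str:
    catalog = {"sku-1": "widget", "sku-2": "gadget"}  # stand-in for a database
    return catalog.get(key, "unknown")


def run_tool(name: str, **kwargs):
    # The action layer checks the tool name before executing anything.
    if name not in TOOLS:
        raise KeyError(f"no tool named {name!r}")
    return TOOLS[name](**kwargs)


print(run_tool("calculator", a=2, b=3))
print(run_tool("lookup", key="sku-2"))
```

In a real agent, the reasoning engine would produce the tool name and arguments; here they are hard-coded so the dispatch step is easy to see.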

How AI Agents Make Decisions

Decision-making in AI agents involves multiple layers of processing. Here’s the typical flow:

Goal Definition

First, the agent receives or identifies a goal. This might come from a user (“analyze this quarter’s sales data and identify trends”) or from the agent’s own programming (monitoring systems and alerting on anomalies).

Environmental Assessment

The agent gathers relevant information. What data is available? What tools can be used? What constraints exist? This contextual awareness shapes the decision space.

Plan Formulation

Using its reasoning engine, the agent generates a plan. For complex tasks, this involves breaking the goal into subtasks, ordering them logically, and identifying dependencies.

Research on hierarchical reinforcement learning (arXiv:2212.06967) shows how agents can explain their decision-making in hierarchical scenarios. High-level strategies decompose into low-level actions, making the decision process more interpretable.

Action Selection and Execution

The agent selects the next action based on the current state and plan. It executes the action using available tools—querying a database, calling an API, generating text, or running code.

Feedback Integration

After each action, the agent evaluates the outcome. Did it succeed? Did it move closer to the goal? If not, the agent updates its plan and tries a different approach.
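The flow above can be sketched as a small loop. This is a toy, assuming a made-up task (reach a target number using a set of step sizes) in place of real tools and a real planner; `run_agent` and its parameters are illustrative names only.

```python
def run_agent(goal, start=0, step_sizes=(5, 1), max_steps=50):
    """Toy select-act-evaluate loop: reach `goal` by adding allowed step sizes."""
    state, history = start, []
    candidates = list(step_sizes)        # approaches the agent still considers viable
    for _ in range(max_steps):
        if state == goal or not candidates:
            break                        # goal reached, or nothing left to try
        action = candidates[0]           # action selection: prefer the first viable step
        outcome = state + action         # action execution
        if outcome <= goal:              # feedback: progress without overshooting?
            state = outcome
            history.append(action)
        else:
            candidates.pop(0)            # replan: drop the approach that failed
    return state, history


final_state, actions = run_agent(goal=12)
print(final_state, actions)
```

The agent starts with big steps, notices when one would overshoot, discards that approach, and finishes with smaller steps. Real agents replace each line with an LLM call or a tool invocation, but the control flow is broadly the same.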

Anthropic’s research on measuring AI agent autonomy in practice analyzed millions of human-agent interactions. Among new users of Claude Code, roughly 20% of sessions use full auto-approve, which increases to over 40% as users gain experience—showing that users trust agents more as they prove their decision-making reliability.

The feedback loop is where reinforcement learning shines. According to the Agent Lightning framework (arXiv:2508.03680), reinforcement learning can be used to train any AI agent through flexible, extensible methods that improve performance over time.

Types of AI Agents and How They Work Differently

Not all agents are built the same. Different architectures suit different tasks.

Simple Reflex Agents

These agents react to current perceptions without considering history. They follow condition-action rules: if X, then Y. Limited but fast and predictable for straightforward environments.
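A condition-action table is about all a simple reflex agent needs. The sketch below assumes a hypothetical thermostat with made-up thresholds and action names.

```python
# Condition-action rules, checked in order; the first match wins.
RULES = [
    (lambda percept: percept["temperature_c"] > 30, "turn_on_cooling"),
    (lambda percept: percept["temperature_c"] < 15, "turn_on_heating"),
]


def simple_reflex_agent(percept: dict) -> str:
    """React to the current percept only; no history is consulted."""
    for condition, action in RULES:
        if condition(percept):
            return action
    return "do_nothing"


print(simple_reflex_agent({"temperature_c": 35}))
print(simple_reflex_agent({"temperature_c": 20}))
```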

Model-Based Reflex Agents

These agents maintain an internal model of the world, allowing them to handle partially observable environments. They track state over time and make decisions based on both current input and historical context.
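As a rough illustration, consider a cleaning robot that can only see the room it is in. The `ModelBasedVacuum` class and the room names are hypothetical; the point is the internal `model` dictionary that persists between steps.

```python
class ModelBasedVacuum:
    """Sees only the current room, but remembers what it has learned so far."""

    def __init__(self, rooms):
        # Internal world model: what the agent believes about each room.
        self.model = {room: "unknown" for room in rooms}

    def act(self, room: str, is_dirty: bool) -> str:
        # Update the model: after this step the current room is clean either way.
        self.model[room] = "clean"
        if is_dirty:
            return "clean"
        # Use the model, not the current percept, to decide where to go next.
        for other, status in self.model.items():
            if status == "unknown":
                return f"move_to:{other}"
        return "idle"


vacuum = ModelBasedVacuum(["kitchen", "hall"])
steps = [
    vacuum.act("kitchen", is_dirty=True),
    vacuum.act("kitchen", is_dirty=False),
    vacuum.act("hall", is_dirty=False),
]
print(steps)
```

A simple reflex agent in the same position would have no way to know the hall had not been visited yet.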

Goal-Based Agents

These agents explicitly pursue goals. They evaluate different action sequences to determine which best achieves the objective. Planning and search algorithms drive their behavior.
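One simple way to picture this is breadth-first search over a graph of states and actions. The `WORKFLOW` graph below is invented for the example, and breadth-first search is just one of many planning algorithms an agent could use.

```python
from collections import deque


def plan(graph, start, goal):
    """Breadth-first search: return a shortest action sequence, or None."""
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, path = frontier.popleft()
        if state == goal:
            return path
        for action, next_state in graph.get(state, []):
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, path + [action]))
    return None  # the goal is unreachable from the start state


# Hypothetical workflow: each state maps to (action, resulting state) pairs.
WORKFLOW = {
    "raw_data": [("archive", "archived"), ("clean", "clean_data")],
    "clean_data": [("analyze", "analysis")],
    "analysis": [("write_report", "report")],
}

print(plan(WORKFLOW, "raw_data", "report"))
```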

Utility-Based Agents

Beyond just achieving goals, utility-based agents optimize for quality. They assign utility values to different states and choose actions that maximize expected utility. This enables nuanced decision-making when multiple paths lead to goal completion.
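Expected utility is the probability-weighted sum of the utilities of an action's possible outcomes. The sketch below uses made-up routes, probabilities, and utility values purely to show the calculation.

```python
def expected_utility(outcomes):
    """Sum of probability * utility over an action's possible outcomes."""
    return sum(probability * utility for probability, utility in outcomes)


# Hypothetical options: each action maps to (probability, utility) pairs.
ACTIONS = {
    "fast_route": [(0.7, 10.0), (0.3, -5.0)],
    "safe_route": [(1.0, 6.0)],
    "scenic_route": [(0.5, 8.0), (0.5, 2.0)],
}

best = max(ACTIONS, key=lambda name: expected_utility(ACTIONS[name]))
for name, outcomes in ACTIONS.items():
    print(name, round(expected_utility(outcomes), 2))
print("chosen:", best)
```

All three routes reach the destination, so a goal-based agent would treat them as equivalent. The utility-based agent picks the safe route because the fast route's risk of a bad outcome drags its expected value down.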

Learning Agents

Learning agents improve through experience. They combine a performance element (makes decisions), a critic (evaluates outcomes), a learning element (updates behavior based on feedback), and a problem generator (explores new strategies).
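The four components map naturally onto four methods. This sketch assumes a deliberately simple setting: a fixed, noise-free reward per action, with hypothetical template names and reward values. It is not the AgentGym-RL method, just a bare-bones illustration of the roles.

```python
class LearningAgent:
    def __init__(self, actions):
        self.values = {action: 0.0 for action in actions}  # learned estimates
        self.counts = {action: 0 for action in actions}

    def problem_generator(self):
        # Exploration: suggest an action that has never been tried, if any.
        untried = [a for a, n in self.counts.items() if n == 0]
        return untried[0] if untried else None

    def performance_element(self):
        # Exploitation: pick the action with the best current estimate.
        return max(self.values, key=self.values.get)

    def critic(self, action, reward):
        # Error signal: how far the outcome was from the current estimate.
        return reward - self.values[action]

    def learning_element(self, action, error):
        # Running-average update of the value estimate.
        self.counts[action] += 1
        self.values[action] += error / self.counts[action]

    def step(self, environment):
        action = self.problem_generator() or self.performance_element()
        reward = environment(action)
        self.learning_element(action, self.critic(action, reward))
        return action


# Hypothetical environment: fixed reward per email template.
REWARDS = {"template_a": 0.2, "template_b": 0.9, "template_c": 0.5}
agent = LearningAgent(REWARDS)
choices = [agent.step(REWARDS.get) for _ in range(6)]
print(choices)
```

The agent tries each template once, then settles on the one that paid off best. With noisy rewards you would want ongoing exploration rather than a single pass.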

The AgentGym-RL framework (arXiv:2509.08755) focuses on training LLM agents for long-horizon decision-making through multi-turn reinforcement learning. These agents handle tasks that require sustained reasoning and adaptation over extended interactions.

| Agent Type | Decision Basis | Memory | Use Case |
|---|---|---|---|
| Simple Reflex | Current input only | None | Basic automation |
| Model-Based Reflex | Current + internal model | State tracking | Partially observable tasks |
| Goal-Based | Goal achievement | Planning state | Multi-step workflows |
| Utility-Based | Outcome optimization | Preference models | Quality-sensitive decisions |
| Learning | Experience + adaptation | Long-term learning | Complex, evolving environments |

The Role of Large Language Models in AI Agents

LLMs have become the backbone of modern agentic AI. Their ability to understand natural language, generate coherent text, and perform reasoning tasks makes them ideal for agent applications.

OpenAI’s guide notes that LLMs’ advances in reasoning, multimodality, and tool use have unlocked agentic capabilities. Models can now interpret complex instructions, break them into steps, and coordinate multiple tools to accomplish objectives.

But LLMs alone aren’t enough. Real talk: they need scaffolding. Memory systems, tool interfaces, feedback mechanisms, and orchestration layers transform a language model into a functional agent.

MIT Sloan describes agentic AI as systems that are semi- or fully autonomous, able to perceive, reason, and act on their own. LLMs provide the reasoning core, but the agent architecture provides autonomy.

How LLMs Enable Agent Capabilities

- Natural language understanding: Agents can interpret user goals expressed in plain English (or any language).

- Contextual reasoning: LLMs process large amounts of context, understanding relationships between pieces of information.

- Code generation: Agents can write and execute code to perform calculations, data transformations, or automation.

- Multi-turn dialogue: Maintaining coherent, goal-directed conversations over many exchanges.

- Tool selection: Choosing the right tool for a task based on descriptions and past experience.

Limitations and How Agents Address Them

LLMs have well-known limitations: hallucination, lack of true reasoning, difficulty with math, and no inherent memory beyond their context window.

Agent architectures mitigate these:

- Hallucination: Agents verify outputs using external tools (databases, calculators, search engines) rather than relying solely on model generation.

- Reasoning depth: Multi-step prompting and chain-of-thought techniques scaffold deeper reasoning.

- Math and logic: Offloading calculations to code interpreters or symbolic solvers.

- Memory: External memory systems (vector databases, knowledge graphs) extend the agent’s recall beyond the context window.
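The math-and-logic mitigation is the easiest to show. Instead of trusting the model's arithmetic, the agent hands the expression to a small evaluator. The sketch below uses Python's standard `ast` module and accepts only numbers and basic operators; the `calculate` function name and the choice of supported operators are assumptions for this example.

```python
import ast
import operator

# Only these operators are allowed; everything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculate(expression: str):
    """Evaluate plain arithmetic without eval(); reject anything else."""
    def visit(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](visit(node.left), visit(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](visit(node.operand))
        raise ValueError("unsupported expression")

    return visit(ast.parse(expression, mode="eval").body)


print(calculate("1234 * 5678"))
print(calculate("(2 + 3) * -4"))
```

Function calls, names, and attribute access all raise `ValueError`, so a model-generated string cannot execute arbitrary code through this path.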

Multi-Agent Systems and Coordination

Single agents can be powerful. But multi-agent systems—where multiple agents collaborate—unlock even greater capabilities.

Each agent can specialize in a domain or function. One agent might handle data retrieval, another performs analysis, a third generates reports, and a fourth manages user interaction. They coordinate through message passing, shared memory, or hierarchical control.
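Here is a minimal sketch of that division of labor, using hierarchical control with messages passed through a queue. The three agent functions, the sales figures, and the `orchestrate` helper are all invented for the example; real systems would wrap LLM calls and tools instead of plain functions.

```python
from queue import Queue


def retrieval_agent(task):
    # Stand-in for a data source: returns fixed sales figures.
    return {"data": [120, 135, 160]}


def analysis_agent(message):
    data = message["data"]
    return {"growth": data[-1] - data[0], "mean": sum(data) / len(data)}


def report_agent(message):
    return f"Sales grew by {message['growth']} units (mean {message['mean']:.1f})."


def orchestrate(task):
    """Hierarchical control: route each message to the next specialist in turn."""
    pipeline = [retrieval_agent, analysis_agent, report_agent]
    inbox = Queue()
    inbox.put(task)
    for agent in pipeline:
        message = inbox.get()
        inbox.put(agent(message))  # each agent's output is the next agent's input
    return inbox.get()


print(orchestrate("summarize quarterly sales"))
```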

Research on hybrid agentic AI frameworks (IEEE) explores integrating AIML and machine learning for context-aware autonomous systems. Different agent types collaborate, each contributing its strengths.

Challenges in multi-agent systems include:

- Coordination overhead: Agents must communicate effectively and avoid conflicts.

- Task allocation: Deciding which agent handles which subtask.

- Consistency: Ensuring agents work toward the same overall goal.

- Failure handling: What happens when one agent fails? Others must adapt.

The payoff is resilience and scalability. If one agent hits a bottleneck, others continue. Specialization improves performance in each domain.

Training and Improving AI Agents

How do agents get better? Training involves supervised learning, reinforcement learning, and human feedback.

Supervised Fine-Tuning

Agents learn from labeled examples: given situation X, the correct action is Y. This builds baseline competence but doesn’t handle novel scenarios well.

Reinforcement Learning

Agents learn by trial and error, receiving rewards for successful actions and penalties for failures. Over time, they optimize for reward maximization.

The Agent Lightning framework presents flexible methods for training any AI agent with reinforcement learning. This approach adapts to different environments and objectives.

Human-in-the-Loop Feedback

Human evaluators review agent decisions, providing corrections and preferences. This feedback refines agent behavior and aligns it with human values.

Anthropic’s work on evaluating AI agents emphasizes that good evaluations help teams ship agents more confidently. Without rigorous evals, issues emerge only in production—where fixing one failure can create others.

Choosing the right graders for evaluation matters. Code-based graders (string matching, static analysis, outcome verification) provide objective metrics. LLM-based graders assess nuanced qualities like helpfulness or coherence. Combining both gives comprehensive evaluation.
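Combining the two grader types can be as simple as a weighted score. In this sketch the model-based grader is replaced by a crude word-count heuristic so the example runs offline; the function names, the weights, and the heuristic itself are placeholders, not anything taken from Anthropic's guidance.

```python
def code_grader(output: str, expected_substring: str) -> float:
    """Objective check: does the output contain the required string?"""
    return 1.0 if expected_substring in output else 0.0


def stub_llm_grader(output: str) -> float:
    """Placeholder for a model-based grader: a crude word-count heuristic."""
    words = len(output.split())
    return 1.0 if 5 <= words <= 50 else 0.5


def grade(output: str, expected_substring: str, weights=(0.5, 0.5)) -> float:
    # Weighted combination of the objective and the subjective score.
    scores = (code_grader(output, expected_substring), stub_llm_grader(output))
    return sum(weight * score for weight, score in zip(weights, scores))


print(grade("The total for Q3 is 4200 units.", "4200"))
print(grade("4200", "4200"))
print(grade("I could not find the data you asked about.", "4200"))
```

A correct but terse answer scores lower than a correct, well-formed one, and a fluent but wrong answer scores lower still, which is the kind of separation a combined eval is meant to produce.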

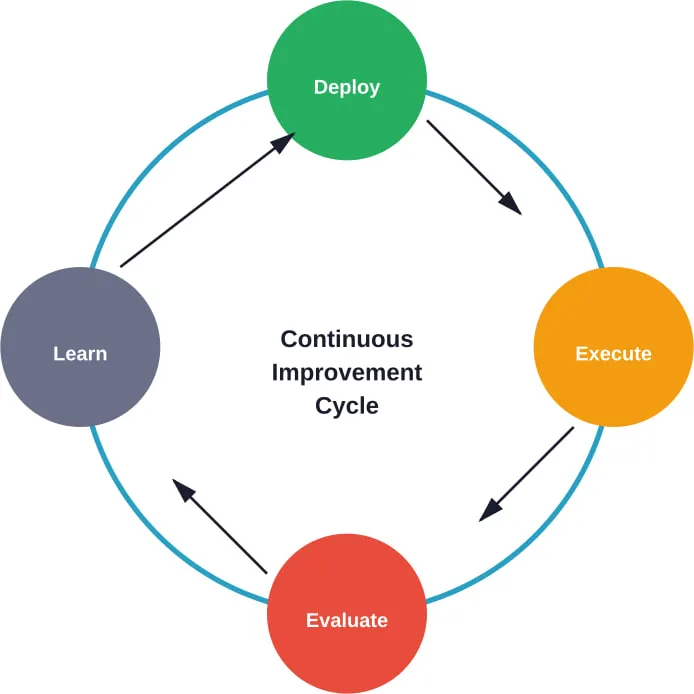

Continuous Learning

Deployed agents continue learning from real-world interactions. They log outcomes, update models, and improve strategies over time. This creates a virtuous cycle of performance enhancement.

Real-World Applications: How Agents Work in Practice

Understanding theory is one thing. Seeing agents in action clarifies their value.

Customer Service Automation

Agents handle customer inquiries end-to-end. They retrieve account information, troubleshoot issues, process requests, and escalate complex cases to humans. Memory systems track conversation history across sessions, providing continuity.

Data Analysis and Reporting

Agents query databases, perform statistical analysis, generate visualizations, and write reports. According to MIT Sloan, in areas involving substantial effort to evaluate options—such as B2B procurement—agents deliver value by reading reviews, analyzing metrics, and comparing attributes across options.

Software Development Assistance

Agents write code, debug errors, refactor functions, and manage deployments. Analysis of Claude Code usage shows that as users gain experience, they increasingly let the agent run autonomously, intervening only when needed. This shift demonstrates growing trust in agent capabilities.

Scientific Research

The ToolUniverse framework enables AI agents to interact with hundreds of scientific tools. These “AI scientists” design experiments, run simulations, analyze results, and propose hypotheses—accelerating the research cycle.

Network Management

IEEE research on AI agent-based autonomous cognitive architecture for 6G core networks shows agents managing complex telecommunications infrastructure, optimizing performance, and responding to failures without human intervention.

Challenges and Limitations

Agents aren’t perfect. Several challenges remain.

Reliability and Error Handling

Agents can make mistakes—selecting wrong tools, misinterpreting context, or generating incorrect outputs. Robust error handling and fallback mechanisms are essential.
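One common pattern is to retry a failing tool a limited number of times and then switch to a fallback. The sketch below assumes hypothetical helpers (`call_with_fallback`, `make_flaky`) and simulates a tool that times out on its first call.

```python
def call_with_fallback(primary, fallback, retries=2):
    """Try `primary` up to `retries` times, then use `fallback`.

    Returns (result, label) so callers can log which path produced the result.
    """
    for attempt in range(1, retries + 1):
        try:
            return primary(), f"primary, attempt {attempt}"
        except Exception:  # broad on purpose: any tool failure triggers a retry
            continue
    return fallback(), "fallback"


def make_flaky(failures):
    """Build a simulated tool that fails `failures` times before succeeding."""
    state = {"remaining": failures}

    def tool():
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise TimeoutError("tool timed out")
        return "fresh result"

    return tool


print(call_with_fallback(make_flaky(1), lambda: "cached result"))
print(call_with_fallback(make_flaky(5), lambda: "cached result"))
```

Production code would usually add backoff between attempts and catch narrower exception types, but the structure stays the same.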

Transparency and Explainability

Understanding why an agent made a particular decision can be difficult. Black-box reasoning undermines trust and makes debugging hard. Research on explaining agent decision-making in hierarchical reinforcement learning scenarios (arXiv:2212.06967) addresses this by making agent reasoning more interpretable.

Security and Safety

Autonomous agents with tool access pose risks. They could inadvertently delete data, expose sensitive information, or execute harmful actions. The NIST AI Risk Management Framework provides guidance for cultivating trust in AI technologies while mitigating risk.

NIST’s Center for AI Standards and Innovation issued requests for information about securing AI agents, recognizing the unique security challenges they present.

Alignment and Value Specification

Ensuring agents pursue the right goals in the right way—alignment—remains an open problem. Misspecified objectives can lead to unintended consequences, even when the agent functions correctly.

Resource Consumption

Running sophisticated agents with large models, extensive tool calls, and continuous learning can be computationally expensive. Optimizing efficiency without sacrificing capability is an ongoing challenge.

Best Practices for Building AI Agents

Organizations deploying agents should follow proven principles.

Start Simple, Then Scale

Begin with narrow, well-defined tasks. Prove the agent works in a controlled environment before expanding scope. Incremental deployment reduces risk.

Design Robust Evaluation Systems

According to Anthropic’s eval guide, effective evaluation design combines code-based and LLM-based graders, matching evaluation complexity to system complexity. Define success metrics early and test rigorously.

Implement Guardrails and Safety Mechanisms

Restrict agent permissions, validate actions before execution, and monitor behavior continuously. NIST’s SP 800-53 Control Overlays for Securing AI Systems provide security controls tailored to AI infrastructure.

Prioritize Human Oversight for High-Stakes Decisions

Autonomy is valuable, but critical decisions should involve humans. Design agents to request approval for consequential actions.
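Permission limits and approval gates can be combined in a single check that runs before any action executes. The action names and the `guarded_execute` function below are hypothetical, and the approval callback stands in for whatever review step an organization actually uses.

```python
ALLOWED_ACTIONS = {"read_record", "send_email", "delete_record"}
NEEDS_APPROVAL = {"delete_record"}  # consequential actions require a human


def guarded_execute(action: str, approve=lambda action: False) -> str:
    """Validate an action against guardrails before running it."""
    if action not in ALLOWED_ACTIONS:
        return "blocked: action not permitted"
    if action in NEEDS_APPROVAL and not approve(action):
        return "pending: human approval required"
    return f"executed: {action}"


print(guarded_execute("read_record"))
print(guarded_execute("delete_record"))
print(guarded_execute("delete_record", approve=lambda action: True))
print(guarded_execute("drop_database"))
```

Defaulting `approve` to a function that always refuses means a missing approval step fails closed rather than open.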

Iterate Based on Real-World Feedback

Deploy, observe, learn, improve. User interactions reveal edge cases and failure modes that testing misses. Continuous improvement cycles are essential.

Document Agent Behavior and Limitations

Clear documentation helps users understand what agents can and can’t do, setting realistic expectations and improving trust.

Turn AI Agent Mechanics Into a Working System

Architecture diagrams and agent mechanics explain how components should interact, but real systems rarely behave exactly like the diagrams. Once you move into implementation, questions shift to reliability, data consistency, and how different services handle real workloads over time.

A-listware works on that practical side. The company provides development teams that handle backend systems, integrations, and infrastructure around AI-driven solutions, helping businesses move from theoretical models to systems that run day to day. Contact A-listware to support the build and keep your system working beyond the initial setup.

The Future of AI Agents

Where is this technology headed?

Expect deeper integration of reinforcement learning, enabling agents to tackle longer-horizon tasks with better planning. Multi-agent collaboration will mature, with standardized communication protocols and orchestration frameworks.

Specialization will increase. Domain-specific agents—trained on industry data and optimized for particular workflows—will outperform general-purpose systems in their niches.

Interoperability between agents from different vendors will become critical. Open standards and common tool interfaces will facilitate this.

Regulation and governance frameworks will evolve. As agents take on more consequential roles, accountability, transparency, and safety standards will tighten.

The lines between agents and traditional software will blur. Eventually, agentic capabilities may become standard features in most applications, not a separate category.

Frequently Asked Questions

- What is the main difference between an AI agent and a chatbot?

AI agents can autonomously plan, decide, and execute multi-step tasks toward goals, while chatbots primarily respond to user inputs without independent goal-directed behavior. Agents combine reasoning, memory, and tool use to operate with varying degrees of autonomy, whereas chatbots follow scripted or prompt-driven responses.

- How do AI agents use tools and APIs?

AI agents identify which tools are needed for a task, call APIs or execute code to perform specific operations, retrieve results, and integrate them into their workflow. The agent’s reasoning engine selects appropriate tools based on task requirements, and the action execution layer handles the technical interface with external systems.

- Can AI agents learn from their mistakes?

Yes, especially agents designed with reinforcement learning or continuous learning mechanisms. They evaluate outcomes after each action, update their internal models based on success or failure, and adjust future behavior accordingly. This feedback loop enables performance improvement over time.

- What types of tasks are AI agents best suited for?

AI agents excel at multi-step workflows, data analysis and reporting, customer service automation, software development assistance, and tasks requiring coordination of multiple tools or data sources. They’re particularly valuable for repetitive but complex tasks that benefit from autonomous execution with occasional human oversight.

- Are AI agents secure and safe to deploy?

Security depends on implementation. Properly designed agents with restricted permissions, action validation, monitoring, and human oversight for high-stakes decisions can be deployed safely. Organizations should follow frameworks like NIST’s AI Risk Management Framework and implement robust security controls. Risks remain, especially for agents with broad tool access or insufficient guardrails.

- How do multi-agent systems coordinate their actions?

Multi-agent systems use communication protocols, shared memory, hierarchical control structures, or message-passing interfaces to coordinate. Agents negotiate task allocation, share information about environmental state, and synchronize actions to avoid conflicts. Coordination mechanisms vary based on system architecture—some use centralized orchestration, others rely on peer-to-peer negotiation.

- What role do large language models play in AI agents?

Large language models provide the reasoning and natural language understanding core of modern AI agents. They interpret user goals, generate plans, select tools, and produce outputs. LLMs enable agents to process complex instructions, perform multi-step reasoning, and interact naturally with humans. The agent architecture provides memory, tool interfaces, and orchestration that transform an LLM into an autonomous system.

Conclusion

AI agents represent a fundamental shift from reactive AI systems to autonomous, goal-directed software. They work through integrated architectures combining perception, reasoning, memory, and action—powered increasingly by large language models but scaffolded with specialized components that enable true autonomy.

Understanding how agents perceive their environment, make decisions, use tools, and learn from feedback clarifies both their potential and limitations. As these systems mature, they’ll handle increasingly complex tasks, but challenges around reliability, security, and alignment persist.

For organizations exploring agentic AI, the path forward involves starting with well-defined use cases, building robust evaluation systems, implementing strong guardrails, and iterating based on real-world deployment. The technology is ready—but successful implementation requires thoughtful design and ongoing refinement.

Ready to build your first AI agent? Start with a narrow, high-value task, design clear success metrics, and scale gradually as you gain confidence in the system’s capabilities.