Quick Summary: Enterprise AI agents are transforming business operations in 2026, with 62% of companies at least experimenting with them according to McKinsey research. Organizations face critical challenges around governance, identity management, and risk controls as agents gain the ability to execute tasks independently. Success requires treating agents like digital employees with defined roles, limited authority, and clear audit trails.

The enterprise AI landscape shifted dramatically as we moved into 2026. What started as experimental chatbots has evolved into autonomous agents that can reason, plan, and execute tasks across business systems without constant human oversight.

But here’s the thing—most companies aren’t ready for what that actually means.

According to research from McKinsey & Company surveying 1,993 companies in mid-2025, 62% of respondents reported their organizations were at least experimenting with AI agents. That’s a massive adoption wave, and it is moving faster than most governance frameworks can keep up with.

From Tools to Autonomous Enterprise Actors

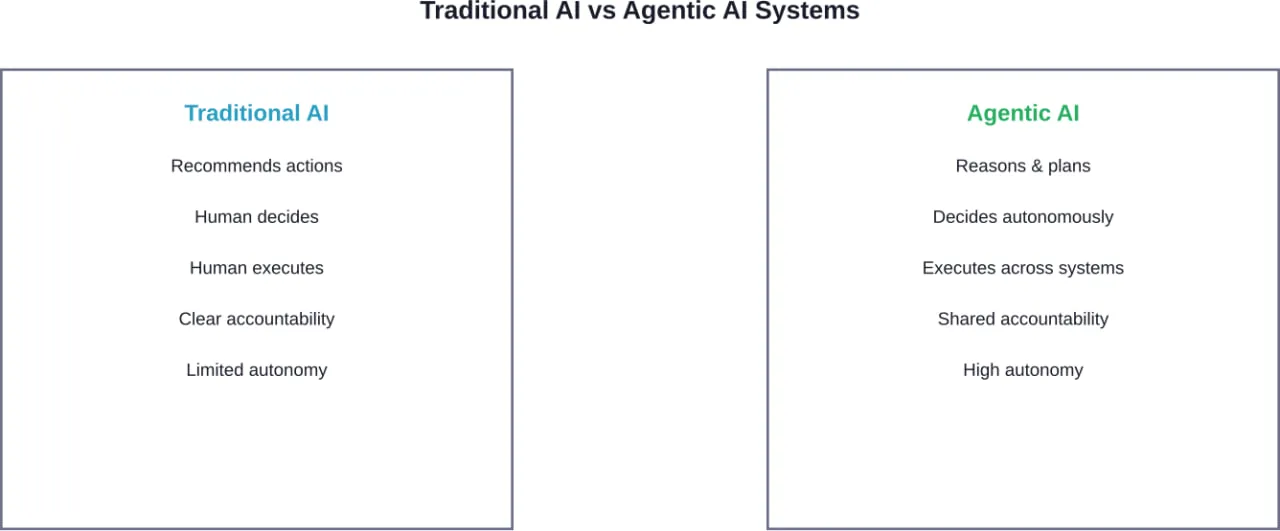

Traditional AI acted as a tool. You asked a question, got an answer, and decided what to do next. Agentic AI operates differently.

These systems can update customer records, issue refunds, route approvals, and trigger workflows across multiple platforms. They don’t just recommend actions—they take them.

MIT Sloan Management Review research shows enterprise adoption of traditional AI climbed to 72% over the past eight years. Agentic systems are following a much steeper trajectory.

The difference? Agents introduce operational risks that conventional software never created. When an agent makes a decision, who’s accountable? When it accesses sensitive data, how do you audit that? When it executes a transaction incorrectly, how do you trace what went wrong?

Identity Management Becomes Mission-Critical

Here’s where existing infrastructure falls short. Traditional identity and access management (IAM) was built for humans and maybe a few service accounts. Not for dozens or hundreds of autonomous agents operating simultaneously.

Each agent needs a defined identity. Not just a generic “AI system” credential, but specific roles with specific permissions tied to specific tasks.

Think about it like organizational hierarchy. An agent handling customer service inquiries shouldn’t have the same database access as one managing financial reconciliation. Simple concept, complicated implementation.
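To make the idea concrete, here is a minimal sketch of per-agent identities with role-scoped permissions. The role names, permission strings, and the `AgentIdentity` class are all hypothetical, chosen only for illustration; a real deployment would back this with its IAM platform rather than an in-memory dictionary.

```python
from dataclasses import dataclass

# Hypothetical role -> permission mapping; names are illustrative only.
ROLE_PERMISSIONS = {
    "customer_service": frozenset({"tickets:read", "tickets:write", "customers:read"}),
    "financial_reconciliation": frozenset({"ledger:read", "ledger:write"}),
}


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str  # unique per agent, not a shared "AI system" credential
    role: str

    def can(self, permission: str) -> bool:
        """Return True only if the agent's role explicitly grants the permission."""
        return permission in ROLE_PERMISSIONS.get(self.role, frozenset())


support_agent = AgentIdentity("agent-cs-001", "customer_service")
finance_agent = AgentIdentity("agent-fin-001", "financial_reconciliation")

print(support_agent.can("tickets:write"))  # True
print(support_agent.can("ledger:read"))    # False
```

Unknown roles resolve to an empty permission set, so the sketch denies by default instead of failing open.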

The challenge intensifies when agents interact with each other. Multi-agent workflows—where one agent’s output becomes another’s input—require sophisticated handoff protocols and audit mechanisms.
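One simple way to keep a multi-agent workflow traceable is to pass a small envelope between agents that carries a shared trace ID and the ordered list of agents that have handled it. The sketch below assumes a hypothetical `Handoff` class and made-up agent names; it shows the shape of the idea, not a standard protocol.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Handoff:
    payload: dict
    trace_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    hops: list = field(default_factory=list)  # ordered agent IDs that handled the payload

    def pass_to(self, agent_id: str, payload: dict) -> "Handoff":
        """Return a new handoff that keeps the trace ID and extends the hop list."""
        return Handoff(payload=payload, trace_id=self.trace_id, hops=[*self.hops, agent_id])


# Hypothetical two-agent workflow: intake hands a ticket to billing.
first = Handoff(payload={"ticket": "T-1"}, hops=["intake-agent"])
second = first.pass_to("billing-agent", {"ticket": "T-1", "refund_due": True})

print(second.hops)                          # ['intake-agent', 'billing-agent']
print(second.trace_id == first.trace_id)    # True
```

Because each handoff is a new object, the earlier record stays unchanged and an auditor can reconstruct the chain from the trace ID alone.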

Governance Gaps Create Enterprise Risk

Research from academic institutions analyzing agentic AI architectures highlights a fundamental tension: organizations rapidly deploy agents before establishing governance frameworks.

That gap isn’t sustainable.

What happens when an agent misinterprets context and executes an unauthorized transaction? Who reviews the decision logic? How do you prevent the same error from recurring across similar agents?

| Governance Challenge | Traditional Software | Agentic AI Systems |
|---|---|---|
| Decision transparency | Code is deterministic | Reasoning can be opaque |
| Error attribution | Clear stack traces | Complex decision chains |
| Access controls | Role-based permissions | Context-aware authority |
| Audit requirements | Transaction logs | Decision justification trails |

Effective governance requires audit trails that capture not just what an agent did, but why it made that decision. The reasoning process matters as much as the outcome.
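As a rough illustration, an audit record can store the agent's stated reasoning next to the action itself. The field names and the `AuditRecord` class below are assumptions made for this sketch, not a standard schema, and the example values are invented.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    agent_id: str
    action: str      # what the agent did
    target: str      # which record or system it touched
    reasoning: str   # why the agent chose this action
    inputs: dict = field(default_factory=dict)  # data the decision was based on
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = AuditRecord(
    agent_id="agent-cs-001",
    action="issue_refund",
    target="order-1042",
    reasoning="Order arrived damaged; refund is within the policy limit.",
    inputs={"amount": "25.00"},
)
line = to_log_line(record)
print(line)
```

Writing one JSON object per line keeps the log easy to search later by agent, action, or target.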

Platform Providers Race to Enterprise Market

Major vendors recognized the enterprise opportunity. OpenAI reportedly expects enterprise customers to grow from 40% of business to 50% by year-end, according to statements from Chief Financial Officer Sarah Friar to CNBC in February 2026.

The company now offers both agent platforms and engineering services to help organizations deploy autonomous systems safely.

Other providers like Databricks and specialized startups launched enterprise data agents designed to work within existing business ecosystems. These platforms emphasize governance, compliance, and integration with legacy systems.

But platform availability doesn’t solve the strategic challenge. Technology is ready. Organizational readiness lags behind.

Practical Deployment Strategies That Work

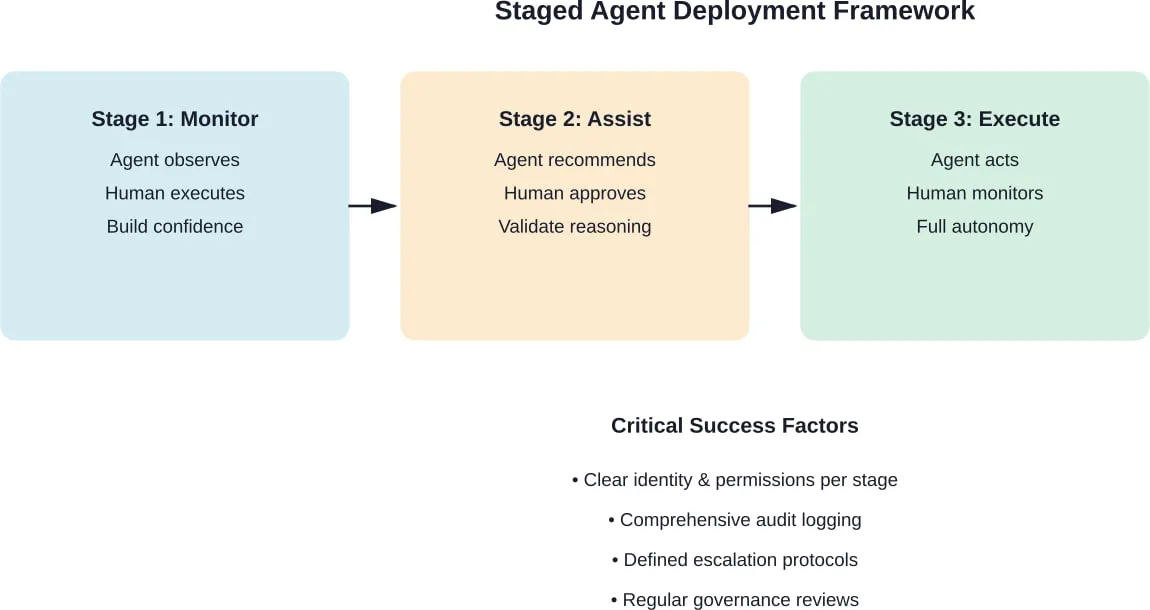

Organizations succeeding with agentic AI share common approaches. They start small, with clearly bounded use cases where agent autonomy delivers value but risk stays contained.

Customer service represents a popular entry point. Agents can handle routine inquiries, escalate complex issues, and learn from human oversight. The feedback loop accelerates improvement while maintaining control.

Data analysis offers another low-risk, high-value application. Agents can query databases, generate reports, and surface insights without directly executing business transactions.

The key? Incremental authority expansion. Start with read-only access. Add write permissions for non-critical data. Eventually grant transaction execution for well-understood processes.

Each stage builds confidence while revealing edge cases that need human judgment.
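The staged approach can be sketched as ordered authority levels, where each action declares the minimum level it needs. The level names and the example actions are hypothetical; the point is only that authority is granted in steps and unknown actions are refused.

```python
from enum import IntEnum


class Authority(IntEnum):
    READ_ONLY = 1
    WRITE_NON_CRITICAL = 2
    EXECUTE_TRANSACTIONS = 3


# Hypothetical actions mapped to the minimum authority they require.
REQUIRED = {
    "query_report": Authority.READ_ONLY,
    "update_contact_note": Authority.WRITE_NON_CRITICAL,
    "issue_refund": Authority.EXECUTE_TRANSACTIONS,
}


def is_allowed(granted: Authority, action: str) -> bool:
    """Allow an action only if the granted level meets the required level."""
    required = REQUIRED.get(action)
    if required is None:
        return False  # unknown actions are denied by default
    return granted >= required


print(is_allowed(Authority.READ_ONLY, "query_report"))           # True
print(is_allowed(Authority.READ_ONLY, "issue_refund"))           # False
print(is_allowed(Authority.EXECUTE_TRANSACTIONS, "issue_refund"))  # True
```

Promoting an agent then becomes a single, reviewable change to its granted level rather than a scattered set of permission edits.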

Regulatory Landscape Shapes Development

Government agencies are paying attention. NIST published reflections from its Second Cyber AI Profile Workshop on March 23, 2026, which followed the workshop held in January.

IEEE standards bodies approved new technical requirements for AI agent capabilities in materials research and other specialized domains as of February 2026. These standards provide benchmarks for security, reliability, and performance.

Organizations that proactively align with emerging standards position themselves better for compliance as regulations solidify.

What This Means for Business Leaders

The agentic AI wave isn’t coming—it’s here. The question isn’t whether to adopt these systems, but how to do it responsibly.

Start by auditing current AI deployments. Which systems already exhibit agent-like behavior? Where are the governance gaps? What identity management infrastructure exists?

Then establish clear policies before expanding deployment. Define approval thresholds for agent actions. Create audit requirements that capture decision reasoning. Build escalation paths for edge cases.
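An approval threshold with an escalation path might look like the following sketch. The `Policy` fields, the limits, and the route names are illustrative assumptions; actual thresholds depend on the process and on the organization's risk appetite.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Policy:
    auto_approve_limit: Decimal   # at or below this, the agent may act alone
    human_review_limit: Decimal   # at or below this, a human must approve first


def route(amount: Decimal, policy: Policy) -> str:
    """Decide how a proposed agent transaction should be handled."""
    if amount <= policy.auto_approve_limit:
        return "auto_approve"
    if amount <= policy.human_review_limit:
        return "human_review"
    return "block_and_escalate"


# Hypothetical refund policy for a customer service agent.
refund_policy = Policy(
    auto_approve_limit=Decimal("50"),
    human_review_limit=Decimal("500"),
)

print(route(Decimal("20"), refund_policy))    # auto_approve
print(route(Decimal("120"), refund_policy))   # human_review
print(route(Decimal("900"), refund_policy))   # block_and_escalate
```

Keeping the limits in a policy object, separate from the agent's logic, lets reviewers change thresholds without touching the agent itself.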

Most importantly, treat agents like team members, not just software. That mental model drives better architecture, clearer accountability, and safer operations.

The organizations that get this right will unlock significant competitive advantages. Those that rush deployment without proper controls expose themselves to risks that could undermine trust in AI across their entire operation.

Make AI Adoption Work in Practice

Enterprise AI trends often highlight adoption speed and risk factors, but most issues show up during implementation – how systems connect, how data is handled, and whether everything stays stable as usage grows.

A-listware supports companies at that stage by providing dedicated development teams and full-cycle software engineering. The focus is on backend systems, integrations, and long-term support, helping businesses turn AI initiatives into systems that actually operate in real conditions.

If your AI plans are moving forward but execution is becoming a bottleneck, contact A-listware to support system development, integration, and ongoing stability.

Frequently Asked Questions

- What makes AI agents different from regular AI tools?

AI agents can autonomously reason, plan, and execute tasks across multiple systems without constant human approval. Traditional AI tools provide recommendations that humans must act on. Agents take actions directly, which creates new requirements for governance, identity management, and audit trails.

- How many companies are currently using enterprise AI agents?

According to McKinsey research from mid-2025 covering 1,993 companies, 62% reported at least experimenting with AI agents. Adoption has accelerated significantly in early 2026 as platforms mature and enterprise-focused solutions become available.

- What are the biggest risks of deploying AI agents in business?

Primary risks include unpredictable behavior in edge cases, unclear accountability when errors occur, insufficient audit trails for decision-making, and inadequate identity and access controls. Agents with excessive permissions can execute unauthorized transactions or access sensitive data inappropriately.

- Do existing identity management systems work for AI agents?

Traditional IAM systems weren’t designed for autonomous agents. They typically lack the granularity needed to assign context-aware permissions, track multi-agent workflows, or audit decision reasoning. Organizations need enhanced frameworks that treat each agent as a distinct identity with role-based authority.

- Which business functions benefit most from AI agents?

Customer service, data analysis, workflow automation, and routine transaction processing represent common high-value applications. These areas offer clear boundaries for agent authority, well-defined success metrics, and manageable risk profiles for initial deployments.

- How should companies start with agentic AI adoption?

Begin with limited-scope use cases where agents have read-only access or execute low-risk actions. Establish comprehensive audit logging from day one. Define clear escalation protocols. Gradually expand agent authority as confidence builds and governance frameworks mature.

- What regulations govern enterprise AI agent deployment?

Regulatory frameworks are still developing. NIST is establishing cybersecurity profiles for AI systems, and IEEE has approved technical standards for specific agent applications. Organizations should monitor evolving standards and proactively align deployments with emerging requirements to ensure future compliance.