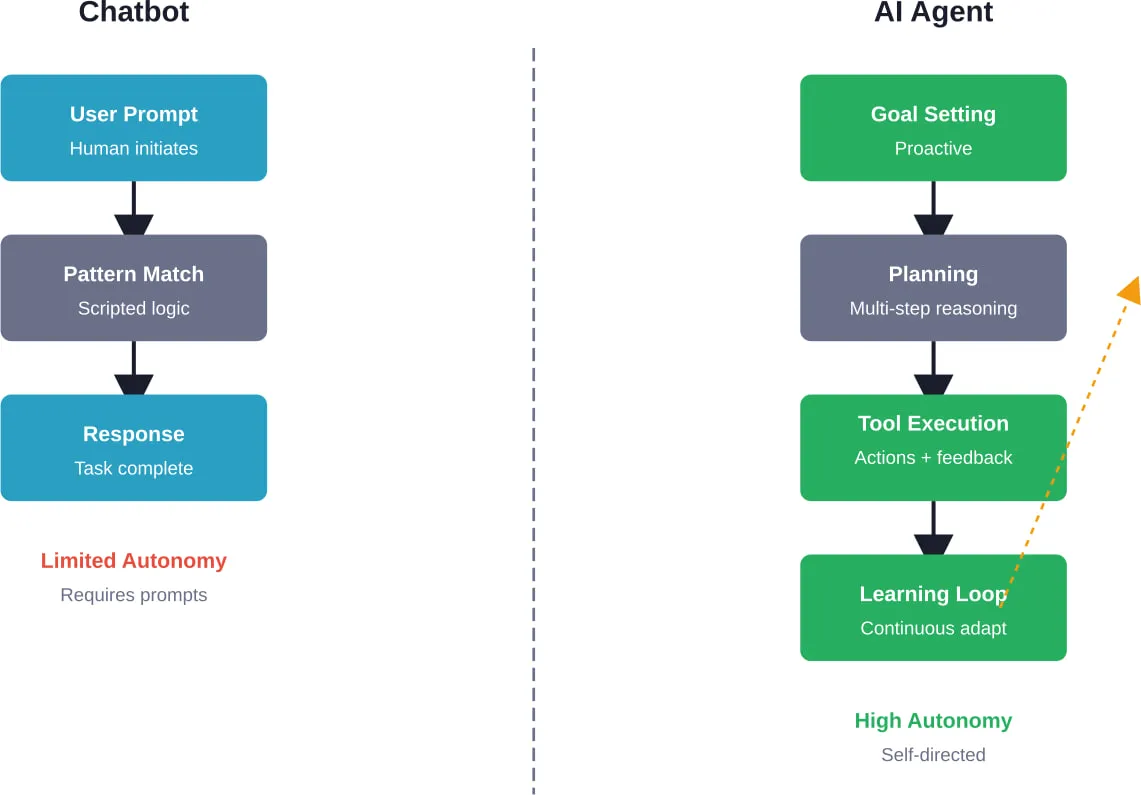

Quick Summary: AI agents and chatbots differ fundamentally in autonomy and capability. Chatbots respond to user prompts with scripted or learned responses, while AI agents proactively plan, make decisions, and execute multi-step tasks independently. Chatbots handle routine queries effectively, but agents tackle complex workflows that require reasoning, tool use, and continuous learning.

The artificial intelligence landscape has shifted dramatically. What started as simple chatbots answering FAQs has evolved into sophisticated AI agents capable of autonomous decision-making and task execution.

But here’s where things get confusing. The terms “chatbot” and “AI agent” often get used interchangeably, yet they represent fundamentally different technologies with distinct capabilities and limitations.

According to recent industry data, 84% of developers now use AI tools, and eight in ten enterprises have deployed agent-based AI. The market for these technologies is projected to grow at 45.8% annually through 2030. With this rapid adoption comes a critical need to understand what separates these technologies.

The distinction isn’t just semantic. It fundamentally impacts how effectively teams can automate workflows, serve customers, and scale operations.

What Is a Chatbot?

Chatbots are software applications designed to simulate human conversation. They respond to user inputs with pre-programmed or learned responses, handling interactions through text or voice interfaces.

Traditional chatbots operate on rule-based logic. When someone asks a question, the bot matches keywords or patterns to trigger specific responses. Think of early customer service bots that could only handle a narrow set of queries.

Modern chatbots leverage large language models and natural language processing. These AI-powered versions understand context better and generate more natural responses. But they still share a fundamental characteristic: they’re reactive systems that require human prompts to initiate action.

The architecture is straightforward. The user sends input, the system processes it, and returns output. That’s the loop.
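To make that loop concrete, here is a minimal sketch of a rule-based chatbot in Python. The patterns, replies, and the `reply` function name are illustrative assumptions, not taken from any particular product.

```python
import re

# Hypothetical rules: each regex pattern maps to one canned reply.
RULES = [
    (re.compile(r"\b(hours|open|close)\b", re.I),
     "We are open 9am to 5pm, Monday to Friday."),
    (re.compile(r"\bpassword\b", re.I),
     "You can reset your password from the login page."),
]
FALLBACK = "Sorry, I didn't understand that. Let me connect you to a human agent."


def reply(user_input: str) -> str:
    """Return the first canned reply whose pattern matches, else the fallback."""
    for pattern, response in RULES:
        if pattern.search(user_input):
            return response
    return FALLBACK
```

Calling `reply("What are your hours?")` returns the opening-hours text, while an input that matches no rule falls through to the escalation message. Nothing happens unless a user sends input first, which is the reactive behavior described above.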

Core Characteristics of Chatbots

Chatbots excel at conversational tasks within defined boundaries. They wait for input, interpret what the user wants, and respond accordingly.

Their learning capabilities vary by type. Rule-based bots don’t learn at all—they follow scripts. Machine learning-powered bots adapt over time based on training data, but this adaptation happens through retraining cycles rather than real-time autonomous improvement.

Response quality depends heavily on how well the system was trained and how closely the user’s query matches patterns the bot recognizes. Step outside those patterns, and chatbots typically struggle or escalate to human support.

Common Chatbot Use Cases

Customer service remains the primary chatbot application. These bots handle frequently asked questions, password resets, order status checks, and appointment scheduling.

E-commerce sites deploy chatbots for product recommendations and shopping assistance. Healthcare organizations use them for symptom checking and appointment booking. Educational institutions implement chatbots for student inquiries about courses and campus services.

The pattern is consistent: chatbots work best for high-volume, repetitive queries with clear parameters and expected outcomes.

Lippert, a component manufacturer with over $5.2 billion in annual sales, uses chatbots to manage significant customer service communications volume. These systems handle routine inquiries efficiently, freeing human agents for complex issues requiring judgment and expertise.

What Is an AI Agent?

AI agents represent a fundamentally different paradigm. According to research published on arXiv, AI agents are modular systems driven by large language models that can plan, reason, and execute tasks autonomously.

Here’s what makes them distinct: agents don’t just respond to prompts. They identify goals, break them into steps, choose tools, execute actions, and adapt based on results—all without requiring human input at each stage.

OpenAI’s ChatGPT agent, introduced in July 2025, exemplifies this shift. It can handle requests like “look at my calendar and brief me on upcoming client meetings based on recent news about their companies.” The agent accesses multiple tools, researches information, and compiles a comprehensive brief autonomously.

The architectural difference is substantial. Agents operate in perception-decision-action loops. They observe their environment, process that information through reasoning modules, decide on actions, execute those actions using available tools, and learn from outcomes.
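The perception-decision-action loop can be sketched with a deliberately tiny example. The thermostat scenario and the names `Room` and `ThermostatAgent` are assumptions chosen for illustration. A real agent would replace the `decide` step with LLM-driven reasoning and would also use the logged outcomes to improve, which this sketch does not do.

```python
from dataclasses import dataclass, field


@dataclass
class Room:
    """Hypothetical environment with a single observable value."""
    temperature: int


@dataclass
class ThermostatAgent:
    target: int
    history: list = field(default_factory=list)  # log of (observation, action)

    def perceive(self, room: Room) -> int:
        return room.temperature

    def decide(self, observed: int) -> str:
        if observed < self.target:
            return "heat"
        if observed > self.target:
            return "cool"
        return "stop"

    def act(self, room: Room, action: str) -> None:
        if action == "heat":
            room.temperature += 1
        elif action == "cool":
            room.temperature -= 1

    def run(self, room: Room, max_steps: int = 20) -> bool:
        """Loop until the goal is reached or the step budget runs out."""
        for _ in range(max_steps):
            observed = self.perceive(room)
            action = self.decide(observed)
            self.history.append((observed, action))
            if action == "stop":
                return True
            self.act(room, action)
        return False
```

The agent is given a goal (`target`) once and then keeps observing and acting without further prompts. The `max_steps` budget is a simple guardrail against running forever.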

Autonomy and Decision-Making

Autonomy is the defining characteristic of AI agents. Research on levels of autonomy for AI agents highlights this as both transformative opportunity and significant risk.

Agents make decisions without human intervention at every step. When faced with a task, they determine the optimal approach, select appropriate tools from their available toolkit, and execute multi-step workflows.

This autonomy operates on a spectrum. Some agents handle narrow tasks with minimal supervision. Others manage complex operations requiring extensive reasoning and tool orchestration.

But autonomy brings challenges. How much independent action should an agent have? What guardrails prevent harmful decisions? These questions shape how organizations deploy agent systems.

Learning and Adaptation

AI agents can improve performance through experience. Unlike chatbots that require manual retraining, agents incorporate feedback loops that enable adaptation during operation, typically by updating memory and context rather than model weights.

OpenAI developers note that modern agents utilize long-term memory through session notes and persistent context. This allows agents to remember preferences, past decisions, and user-specific information across interactions.

Session-level memory holds contextual information relevant to current interactions—things like “this trip is a family vacation” or “budget under $2,000.” Persistent memory stores long-term user preferences and historical patterns that inform future decisions.
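One way to picture the two memory tiers is the sketch below. The `AgentMemory` class and its method names are hypothetical, and a plain dictionary stands in for whatever database or file store a real system would use for persistence.

```python
class AgentMemory:
    """Two-tier memory: per-session notes plus a store that outlives sessions."""

    def __init__(self, persistent_store: dict):
        # Assumption: the caller passes in a store that survives across sessions
        # (a dict here; a database or file in practice).
        self.persistent = persistent_store
        self.session = {}  # discarded when this session object goes away

    def note(self, key: str, value: str) -> None:
        """Record context that only matters for the current interaction."""
        self.session[key] = value

    def remember(self, key: str, value: str) -> None:
        """Record a long-term preference."""
        self.persistent[key] = value

    def recall(self, key: str, default=None):
        """Session notes take priority over long-term preferences."""
        if key in self.session:
            return self.session[key]
        return self.persistent.get(key, default)
```

If a first session calls `note("trip_type", "family vacation")` and `remember("seat", "aisle")`, a second session created on the same store can still recall the seat preference but not the trip type.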

This learning architecture transforms how agents operate over time. They don’t just execute tasks; they optimize execution based on accumulated experience.

Tool Use and Integration

AI agents interact with external systems through tool use. They can access databases, call APIs, execute code, browse the web, and manipulate files—all as needed to accomplish tasks.

The difference from traditional automation is crucial. Agents decide which tools to use and when to use them based on the specific context of each task. Traditional automation follows predefined workflows; agents dynamically construct workflows.
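A minimal sketch of dynamic tool selection follows. The tool names and the `choose_tool` heuristic are assumptions made for illustration. In a real agent the choice would come from the language model, not from a hand-written rule.

```python
from typing import Callable, Dict

# Registry of available tools, keyed by name.
TOOLS: Dict[str, Callable[[str], str]] = {}


def tool(name: str):
    """Decorator that registers a function as a named tool."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("add")
def add_numbers(arg: str) -> str:
    """Sum whitespace-separated numbers."""
    return str(sum(float(x) for x in arg.split()))


@tool("word_count")
def word_count(arg: str) -> str:
    """Count whitespace-separated words."""
    return str(len(arg.split()))


def _is_number(token: str) -> bool:
    try:
        float(token)
        return True
    except ValueError:
        return False


def choose_tool(task_input: str) -> str:
    # Stand-in for the model's decision: inspect the input, then pick a tool.
    tokens = task_input.split()
    if tokens and all(_is_number(t) for t in tokens):
        return "add"
    return "word_count"


def run_task(task_input: str) -> str:
    name = choose_tool(task_input)
    return f"{name}: {TOOLS[name](task_input)}"
```

The point of the design is that the caller never names a tool. `run_task` looks at each input and selects one at run time, whereas a fixed automation script would hard-code the sequence in advance.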

OpenAI’s agent implementation demonstrates this capability. When asked to create a presentation, the agent identifies relevant research sources, extracts key information, generates slides, formats content, and compiles the final deliverable—choosing appropriate tools at each stage without explicit instructions for every step.

Key Differences Between AI Agents and Chatbots

The distinctions between these technologies matter for business decisions, security implications, and operational outcomes.

| Capability | AI Chatbots | AI Agents |
|---|---|---|
| Autonomy | Require human prompts | Proactively identify needs and act independently |
| Learning | Limited adaptation | Continuously learn and improve performance |
| Task Complexity | Single-step responses | Multi-step workflows with reasoning |
| Tool Access | Minimal external integration | Dynamic tool selection and execution |
| Decision-Making | Pattern matching | Goal-oriented planning |
| Memory | Session-based only | Long-term context retention |

Autonomy: Reactive vs Proactive

Chatbots wait. Agents act.

That’s the fundamental divide. Chatbots respond when users initiate contact. They’re excellent at this reactive role—answering questions, providing information, guiding users through processes.

AI agents operate proactively. They identify tasks that need completion, determine optimal approaches, and execute without waiting for explicit prompts at each decision point.

This distinction shapes deployment scenarios. Organizations use chatbots where human-initiated interaction makes sense. Agents fit situations requiring ongoing monitoring, complex workflows, or tasks that benefit from autonomous execution.

Complexity Handling

Chatbots handle straightforward queries effectively. Ask about store hours, and the bot provides the answer instantly. Request a password reset, and it guides the user through the process.

But complexity exposes limitations. Multi-step problems requiring research, tool integration, and adaptive decision-making overwhelm traditional chatbot architectures.

AI agents thrive on complexity. They break large problems into manageable components, execute each component using appropriate methods, and synthesize results into coherent outcomes.

Research capabilities illustrate this gap. A chatbot might provide links to relevant information. An agent researches the topic across multiple sources, synthesizes findings, evaluates credibility, and delivers comprehensive analysis—all autonomously.

Security Implications

The Cloud Security Alliance highlights critical security differences between chatbots and agents. Both automate tasks, but agents’ autonomous decision-making creates distinct risk profiles.

Chatbots operate within narrow boundaries. Their limited scope constrains potential security issues. An attacker compromising a chatbot gains access to conversational interfaces but not necessarily broader system control.

Agents with tool access and autonomous execution capabilities present expanded attack surfaces. Compromised agents potentially access databases, execute code, modify files, and interact with multiple systems—all autonomously.

This doesn’t make agents inherently less secure, but it demands different security approaches. Organizations deploying agents need robust authentication, authorization frameworks, activity monitoring, and guardrails preventing harmful actions.

Use Cases: When to Choose Chatbots vs AI Agents

The technology choice depends on task characteristics, complexity requirements, and operational constraints.

Optimal Chatbot Applications

Customer support for common issues represents the ideal chatbot scenario. When most queries fall into predictable categories with known solutions, chatbots excel.

FAQ automation, appointment scheduling, order tracking, basic troubleshooting, and information retrieval all fit chatbot capabilities well. These tasks have clear parameters, defined outcomes, and benefit from instant availability.

Lead qualification for sales teams works effectively with chatbots. The bot asks predefined questions, categorizes responses, and routes qualified leads to appropriate sales representatives.

Internal employee support for HR queries, IT help desk tickets, and policy questions leverages chatbots to reduce support team workload while providing immediate assistance.

Optimal AI Agent Applications

Complex workflow automation benefits from agent capabilities. Tasks requiring multiple tools, conditional logic, and adaptive decision-making justify agent deployment.

Research and analysis projects that involve gathering information from diverse sources, evaluating credibility, synthesizing insights, and producing comprehensive reports align with agent strengths.

Intelligent scheduling that considers multiple calendars, participant preferences, meeting requirements, and optimal timing represents a natural agent application. The agent autonomously handles negotiations, proposes options, and finalizes arrangements.

Data processing workflows that require extracting information from various formats, transforming data structures, validating accuracy, and loading results into target systems leverage agent reasoning and tool use.

Content creation that demands research, outline development, drafting, fact-checking, and formatting showcases agent capabilities for managing complex creative processes.

Hybrid Approaches

Many organizations deploy both technologies in complementary roles. Chatbots handle initial customer interactions, routine queries, and information gathering. When complexity exceeds chatbot capabilities, the system escalates to AI agents for resolution.

This tiered approach optimizes resource allocation. High-volume simple tasks get handled by efficient chatbot systems. Complex edge cases receive agent attention. Human experts focus on situations requiring judgment, empathy, or specialized expertise.
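The tiered routing described here can be sketched as a small decision function. The `Request` fields and the thresholds are hypothetical. A production system would estimate them with a classifier or let the chatbot signal when it is stuck.

```python
from dataclasses import dataclass


@dataclass
class Request:
    text: str
    steps_required: int   # hypothetical estimate of workflow length
    needs_judgment: bool  # e.g. complaints or sensitive cases


def route(request: Request) -> str:
    """Pick the cheapest tier that can plausibly handle the request."""
    if request.needs_judgment:
        return "human"
    if request.steps_required > 1:
        return "agent"
    return "chatbot"
```

A single-step order-status question goes to the chatbot, a multi-step refund-and-rebook workflow goes to the agent, and anything flagged as needing judgment goes straight to a person.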

Slack’s Agentforce integration exemplifies this hybrid model. The platform combines conversational interfaces for common requests with agent capabilities for complex workflows requiring tool integration and multi-step execution.

Performance and Evaluation Challenges

Measuring AI agent effectiveness presents unique challenges compared to chatbot evaluation.

Chatbot Evaluation Metrics

Chatbot performance metrics are relatively straightforward. Response accuracy, conversation completion rate, user satisfaction scores, and escalation frequency provide clear performance indicators.

String matching, pattern recognition accuracy, and intent classification metrics quantify how well chatbots understand user inputs and select appropriate responses.

Response time, availability, and throughput measure operational performance. These metrics align well with chatbot use cases focused on high-volume routine interactions.

AI Agent Evaluation Complexity

Anthropic’s research on agent evaluation highlights the complexity challenge. The capabilities that make agents useful—autonomy, tool use, multi-step reasoning—also make them difficult to evaluate.

Traditional metrics fall short. String matching doesn’t capture whether an agent made optimal tool choices. Binary pass/fail tests miss nuanced performance differences in complex workflows.

Effective agent evaluation requires multi-faceted approaches. Code-based graders verify specific outcomes. LLM-based evaluators assess reasoning quality and decision appropriateness. Human review validates complex scenarios where automated evaluation proves insufficient.
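The gap between string matching and outcome checking is easy to show with a sketch. Both grader functions below are illustrative assumptions, not part of any published evaluation framework. The second one is a simple code-based grader that checks the numeric outcome rather than the exact wording.

```python
import math
import re


def string_match_grader(output: str, expected: str) -> bool:
    """Pass only if the output is exactly the expected string."""
    return output.strip() == expected.strip()


def numeric_outcome_grader(output: str, expected_value: float,
                           tol: float = 1e-6) -> bool:
    """Pass if the last number mentioned in the output equals the expected value."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", output)
    if not numbers:
        return False
    return math.isclose(float(numbers[-1]), expected_value, abs_tol=tol)
```

For the agent output `"The total is 42.0"`, the string-match grader rejects the answer when compared with `"42"`, while the outcome grader accepts it. Neither grader says anything about whether the agent chose sensible tools along the way, which is where LLM-based or human review comes in.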

OpenAI’s testing of its agent implementation demonstrates these challenges. On Humanity’s Last Exam (HLE), running up to eight parallel attempts and selecting the answer with the highest self-reported confidence produced a higher score than a single attempt, which shows that individual runs of the same agent can differ in quality and highlights the non-deterministic nature of agent systems.

| Evaluation Approach | Strengths | Limitations |
|---|---|---|
| String Match Checks | Fast, deterministic, easy to implement | Misses semantic equivalence and contextual appropriateness |
| Binary Tests | Clear pass/fail criteria | Overlooks quality gradations in complex tasks |
| LLM-Based Graders | Assess reasoning and context understanding | Subject to evaluator model biases and limitations |
| Human Review | Captures nuanced judgment | Expensive, slow, doesn’t scale |

The Evolution from Chatbots to Agents

The shift from passive assistants to active agents represents the most significant transformation in artificial intelligence since ChatGPT’s launch.

Early chatbots were glorified search interfaces. Ask a question, get an answer. The intelligence lay in matching queries to knowledge bases.

Large language models expanded conversational capabilities. Chatbots became more natural, handling broader query variations and generating contextually appropriate responses. But they remained fundamentally reactive.

The agent era began when systems gained tool use, memory, and planning capabilities. Now AI doesn’t just respond—it acts.

Research published on arXiv on AI agents versus agentic AI provides conceptual clarity. AI agents are modular systems with distinct perception, reasoning, and action components. Agentic AI refers to the broader capability of systems to exhibit agency, meaning autonomous goal-directed behavior.

This evolution continues. Current agent systems represent early implementations. As architectures mature, capabilities expand, and deployment patterns emerge, the distinction between reactive and agentic systems will likely sharpen further.

Implementation Considerations

Deploying either technology requires careful consideration of technical, operational, and organizational factors.

Technical Requirements

Chatbot implementation demands natural language processing capabilities, intent recognition systems, and response generation mechanisms. Integration with existing knowledge bases and customer service platforms shapes technical architecture.

AI agent deployment requires substantially more infrastructure. Agents need access to tool APIs, secure credential management, execution environments, monitoring systems, and error handling frameworks.

The technical complexity difference is significant. Chatbots can often be deployed as standalone services with limited integration points. Agents typically require deep integration with multiple systems to function effectively.

Governance and Control

Chatbot governance focuses on response quality, brand consistency, and escalation protocols. Control mechanisms are relatively straightforward since chatbots operate within narrow boundaries.

Agent governance demands frameworks for autonomy levels, action permissions, monitoring, and intervention. Organizations must define which actions agents can take independently versus requiring human approval.
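As a rough sketch of what such a permission framework might look like, the example below maps each action to a policy. The action names and the `PERMISSIONS` table are hypothetical. The one design choice worth noting is that unknown actions are denied by default.

```python
from enum import Enum


class Policy(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical permission table for one deployment.
PERMISSIONS = {
    "read_calendar": Policy.ALLOW,
    "send_email": Policy.REQUIRE_APPROVAL,
    "delete_records": Policy.DENY,
}


def authorize(action: str, approved_by_human: bool = False) -> bool:
    """Return True only if the agent may execute the action right now."""
    policy = PERMISSIONS.get(action, Policy.DENY)  # unknown actions are denied
    if policy is Policy.ALLOW:
        return True
    if policy is Policy.REQUIRE_APPROVAL:
        return approved_by_human
    return False
```

An agent runtime would call `authorize` before every tool invocation and pause for a human decision whenever an action requires approval.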

Research on levels of autonomy for AI agents emphasizes that autonomy is a double-edged sword. The same capabilities that enable transformative outcomes create serious risks. Agent developers must calibrate appropriate autonomy levels for specific applications.

Cost Structures

Chatbot costs scale primarily with conversation volume. Each interaction consumes API calls for language model processing, but costs remain predictable and proportional to usage.

Agent costs are more complex. Tool usage, execution time, parallel processing, and memory storage all factor into operational expenses. A single agent task might require dozens of API calls across multiple services.

The cost equation depends on task value. Agents handling high-value complex workflows justify higher per-task costs. For high-volume simple tasks, chatbot economics typically prove more favorable.
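The difference in cost structure can be written as simple arithmetic. The function names and every price in the usage lines are made-up placeholders. Substitute real provider pricing before drawing conclusions.

```python
def chatbot_cost(conversations: int, calls_per_conversation: int,
                 price_per_call: float) -> float:
    """Cost scales linearly with conversation volume."""
    return conversations * calls_per_conversation * price_per_call


def agent_cost(tasks: int, calls_per_task: int, price_per_call: float,
               tool_cost_per_task: float = 0.0) -> float:
    """Each task fans out into many model calls plus tool and execution costs."""
    return tasks * (calls_per_task * price_per_call + tool_cost_per_task)


# Placeholder numbers for illustration only.
monthly_chatbot = chatbot_cost(conversations=10_000, calls_per_conversation=1,
                               price_per_call=0.002)
monthly_agent = agent_cost(tasks=100, calls_per_task=30, price_per_call=0.002,
                           tool_cost_per_task=0.05)
cost_per_agent_task = monthly_agent / 100
cost_per_chat = monthly_chatbot / 10_000
```

With these placeholder inputs the per-task agent cost is far higher than the per-conversation chatbot cost, which is why the value of each completed task matters more than volume when budgeting for agents.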

Get the Technical Setup Right with A-listware

In comparisons like AI agents vs chatbots, the difference is often explained at the logic level. In practice, both rely on the same foundation – backend services, integrations, data handling, and infrastructure that keeps everything running. A-listware focuses on custom software development and dedicated engineering teams that build and support these systems, covering architecture, development, deployment, and maintenance.

The real challenge is not choosing between a chatbot or an agent, but turning either into a stable product. A-listware supports the full development lifecycle and helps integrate AI into working applications without splitting work across multiple vendors. Talk to A-listware and get a clear path from concept to implementation.

Real-World Performance Data

When OpenAI tested its agent implementation on challenging benchmarks, results highlighted both capabilities and limitations. The agent scored 44.4 on Humanity’s Last Exam (HLE), a benchmark of expert-level questions across many subjects, when running up to eight parallel attempts and selecting the answer with the highest confidence. That is better than its single-attempt performance but still leaves room for improvement.

This performance pattern illustrates agent characteristics. Non-deterministic execution means multiple attempts may produce different quality outcomes. Confidence scoring helps select better results, but doesn’t guarantee optimal solutions.
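The selection strategy described above, sometimes called best-of-N, can be sketched in a few lines. The `Attempt` type and the confidence values are assumptions. How a real agent produces its confidence score is not specified here.

```python
from typing import List, NamedTuple


class Attempt(NamedTuple):
    answer: str
    confidence: float  # self-reported by the agent, between 0 and 1


def select_best(attempts: List[Attempt]) -> Attempt:
    """Pick the attempt with the highest self-reported confidence."""
    if not attempts:
        raise ValueError("at least one attempt is required")
    return max(attempts, key=lambda a: a.confidence)
```

Given three attempts with confidences 0.4, 0.9, and 0.7, `select_best` returns the second one. The most confident answer is not guaranteed to be the correct one, so this improves average results without removing the need for evaluation.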

Zendesk reports that their AI agents are trained on billions of real customer service interactions, enabling continuous improvement based on live data. This scale of training data contributes to more reliable performance in customer service contexts.

Performance ultimately depends on task alignment with system capabilities. Agents excel where complexity, tool use, and reasoning provide value. Chatbots perform best in high-volume scenarios with clear patterns and defined outcomes.

Future Trajectories

The agent market is projected to grow at 45.8% annually through 2030. This growth reflects expanding capabilities, broader use cases, and increasing enterprise adoption.

Chatbots aren’t disappearing. They’re evolving into more capable conversational interfaces while maintaining their core reactive architecture for appropriate use cases.

The convergence is partial. Some applications benefit from agentic capabilities added to conversational interfaces. Others work better with specialized agents handling complex workflows behind the scenes.

Multi-agent architectures represent an emerging pattern. Instead of monolithic AI systems, organizations deploy specialized agents for different domains, with coordination mechanisms enabling collaboration. Research from IEEE on LLM-driven multi-agent architectures explores these coordination frameworks.

The technical distinction between chatbots and agents will likely persist because it reflects fundamentally different design philosophies and operational patterns. But both technologies will continue advancing within their respective paradigms.

Frequently Asked Questions

- Can AI agents replace chatbots completely?

Not necessarily. While AI agents offer more advanced capabilities, chatbots remain more efficient for high-volume simple interactions. The reactive nature of chatbots actually provides advantages for straightforward query-response scenarios where autonomy adds unnecessary complexity and cost. Many organizations benefit from using both technologies in complementary roles rather than replacing one with the other.

- Are AI agents more expensive to operate than chatbots?

Generally yes, on a per-task basis. AI agents consume more computational resources, make multiple API calls per task, utilize tool integrations, and require more sophisticated infrastructure. However, cost-effectiveness depends on task value. For complex workflows that would otherwise require human labor, agents can provide significant ROI despite higher operational costs compared to chatbots.

- How do I know which technology my business needs?

Assess task characteristics. If most interactions involve straightforward queries with predictable responses, chatbots fit well. If workflows require multi-step processes, tool integration, research, or autonomous decision-making, agents provide better value. Many businesses benefit from starting with chatbots for common tasks and adding agents for complex scenarios that justify the additional investment.

- What are the main security risks of AI agents versus chatbots?

AI agents present expanded attack surfaces due to tool access and autonomous execution capabilities. A compromised agent potentially interacts with multiple systems, executes code, and modifies data—all autonomously. Chatbots have more limited scope, constraining potential damage from security breaches. Organizations deploying agents need robust authentication, monitoring, and guardrails to mitigate risks associated with autonomous system access.

- Can chatbots learn and improve like AI agents?

Chatbots can improve through retraining on new data, but this happens in discrete cycles rather than continuously during operation. AI agents incorporate feedback loops enabling real-time learning and adaptation. Agents also maintain long-term memory across interactions, while chatbots typically only retain session-level context. This learning architecture difference fundamentally separates how the technologies evolve and optimize performance over time.

- Do AI agents require more technical expertise to implement?

Yes, substantially more. AI agents need integration with multiple tools, secure credential management, execution monitoring, error handling frameworks, and governance systems. Chatbots can often be deployed with pre-built platforms and minimal custom development. Organizations considering agent deployment should assess whether they have the technical capabilities to implement, monitor, and maintain these more complex systems effectively.

- What industries benefit most from AI agents versus chatbots?

Chatbots serve nearly all industries for customer service, support, and information delivery. AI agents provide particular value in industries with complex workflows: financial services for research and analysis, healthcare for care coordination, logistics for dynamic scheduling and routing, and professional services for document processing and client deliverable creation. The determining factor is task complexity rather than industry sector.

Conclusion

AI agents and chatbots serve distinct purposes in the artificial intelligence landscape. Chatbots excel at reactive, conversational tasks with clear parameters and high volume. AI agents tackle complex, multi-step workflows requiring autonomy, tool use, and adaptive decision-making.

The choice between these technologies depends on specific business needs, task characteristics, and operational constraints. Organizations don’t necessarily need to choose one over the other—hybrid approaches leveraging both technologies in complementary roles often deliver optimal results.

As AI capabilities continue advancing, both chatbots and agents will evolve. Chatbots will become more sophisticated in natural language understanding and response quality. Agents will expand tool access, improve reasoning capabilities, and develop more robust governance frameworks.

The fundamental distinction will persist: chatbots respond, agents act. Understanding this difference enables businesses to deploy the right technology for each use case, maximizing value while managing costs and risks appropriately.

Ready to implement AI solutions for your business? Start by mapping your current processes, identifying high-volume routine tasks suited for chatbots and complex workflows that justify agent capabilities. Test both technologies in controlled environments before full deployment, and establish clear metrics for evaluating performance against your specific business objectives.