Quick Summary: AI agents are modular, task-specific systems that execute predefined workflows with limited autonomy, while agentic AI represents collaborative ecosystems of goal-driven agents that adapt, learn, and coordinate independently. The key distinction lies in autonomy level, learning capability, and architectural complexity: AI agents follow instructions, whereas agentic AI systems reason toward goals and handle dynamic, multi-step challenges with minimal human oversight.

The terminology around artificial intelligence keeps evolving, and the latest confusion? AI agents versus agentic AI. They sound interchangeable, but they’re fundamentally different in design philosophy, capability, and application.

Understanding this distinction isn’t academic hairsplitting. According to research published on arXiv by Sapkota, Roumeliotis, and Karkee, AI agents are modular, task-specific systems driven by large language models (LLMs) and large image models (LIMs), while agentic AI represents collaborative ecosystems in which multiple agents coordinate toward shared goals with advanced autonomy.

And the adoption timeline is aggressive. According to industry projections, by 2028, 33% of enterprise software will have integrated agentic AI capabilities—up from less than 1% in 2024. That’s a massive architectural shift happening right now.

So what separates these two approaches? Let’s break down the conceptual taxonomy, architectural differences, and practical implications.

What Are AI Agents?

AI agents operate as self-contained systems designed to perceive their environment, reason through available data, and execute specific actions. Think of them as sophisticated automation tools with decision-making capabilities baked in.

They follow a linear processing loop: perception → reasoning → action. The agent receives input, applies predefined logic or learned patterns, then executes a response. This works beautifully for well-defined tasks with clear parameters.
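The linear loop can be sketched in a few lines of Python. This is a minimal, hypothetical example: the keyword rules, action names, and the `run_agent` helper are illustrative assumptions rather than any particular framework’s API, and a real agent would usually replace the `reason` step with an LLM call or a trained classifier.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the agent perceived, after light normalization."""
    text: str


def perceive(raw_input: str) -> Observation:
    # Perception: turn raw input into a normalized observation.
    return Observation(text=raw_input.strip().lower())


def reason(obs: Observation) -> str:
    # Reasoning: hypothetical predefined rules map the observation to one action.
    if "refund" in obs.text:
        return "route_to_billing"
    if "password" in obs.text:
        return "send_reset_link"
    # Anything outside the expected patterns falls back to a human.
    return "escalate_to_human"


def act(action: str) -> str:
    # Action: execute the chosen response (here, just return a message).
    messages = {
        "route_to_billing": "Routing you to the billing team.",
        "send_reset_link": "A password reset link is on its way.",
        "escalate_to_human": "Connecting you with a human agent.",
    }
    return messages[action]


def run_agent(raw_input: str) -> str:
    # One pass of the perception -> reasoning -> action loop; no state is kept.
    return act(reason(perceive(raw_input)))


print(run_agent("  I need a REFUND for my order "))  # Routing you to the billing team.
```

The final `escalate_to_human` branch is where an agent like this typically hands off any input its rules did not anticipate.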

Here’s the thing though—AI agents typically require human intervention when scenarios deviate from expected patterns. They excel at specific workflows but struggle with ambiguity or multi-step challenges that require dynamic replanning.

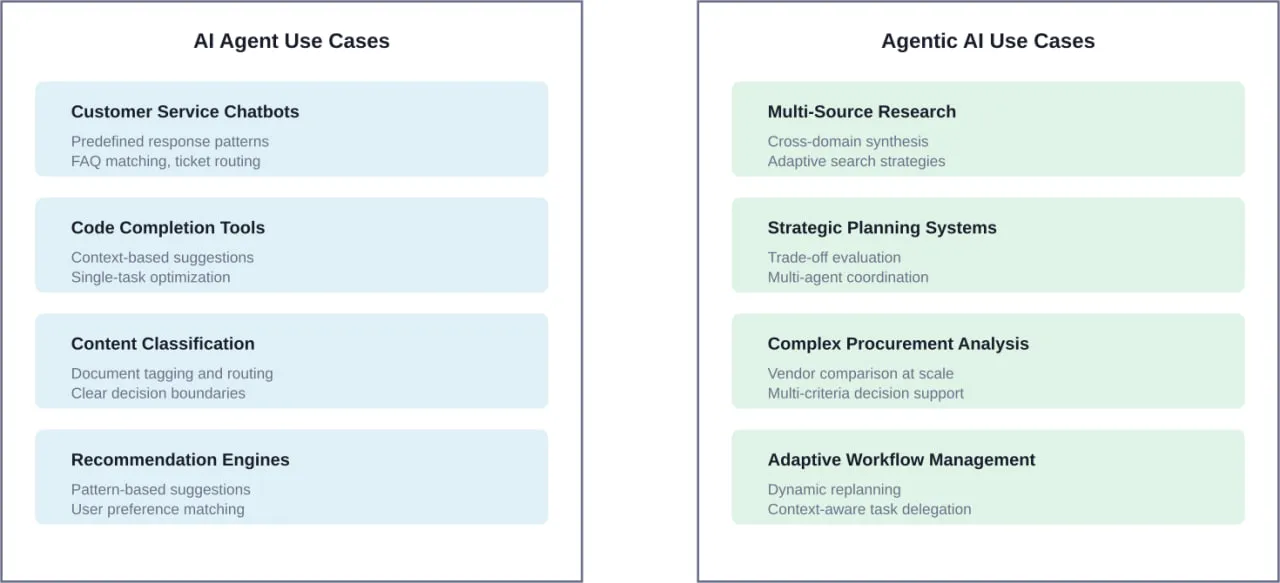

Common examples include chatbots that answer customer queries, recommendation engines that suggest products, or code completion tools that predict the next line based on context. These systems are intelligent within their domain but operate independently rather than collaboratively.

According to industry reports, a significant majority of companies are planning to implement AI agents within the next three years, making them a foundational technology for enterprise automation.

Core Characteristics of Traditional AI Agents

Traditional AI agents share several defining traits that distinguish them from more advanced agentic architectures.

First, they’re reactive systems. They respond to inputs rather than proactively pursuing objectives. An AI agent processes requests as they arrive but doesn’t maintain long-term goals or contextual memory across sessions.

Second, they operate with constrained autonomy. While they can make decisions without constant human input, those decisions happen within tightly defined guardrails. Deviation from the script typically triggers fallback behaviors or human escalation.
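Constrained autonomy often boils down to a guardrail check between the agent’s decision and its execution. The sketch below assumes two hypothetical guardrails, an allow-list of actions and a minimum confidence score; the action names and the 0.8 threshold are illustrative, not recommended values.

```python
from typing import NamedTuple

# Hypothetical guardrail configuration: the only actions the agent may take
# on its own, and the minimum confidence required to take them.
ALLOWED_ACTIONS = {"tag_ticket", "send_faq_link"}
MIN_CONFIDENCE = 0.8


class Decision(NamedTuple):
    action: str
    confidence: float


def apply_guardrails(decision: Decision) -> str:
    """Return the action to execute, or a fallback when the decision leaves the script."""
    if decision.action not in ALLOWED_ACTIONS:
        # Off-script action: never executed autonomously.
        return "escalate_to_human"
    if decision.confidence < MIN_CONFIDENCE:
        # In scope but uncertain: use a safe fallback instead.
        return "ask_clarifying_question"
    return decision.action


print(apply_guardrails(Decision("send_faq_link", 0.93)))  # send_faq_link
print(apply_guardrails(Decision("issue_refund", 0.99)))   # escalate_to_human
print(apply_guardrails(Decision("tag_ticket", 0.42)))     # ask_clarifying_question
```

Note that even a highly confident decision is escalated if it falls outside the allow-list: the guardrails, not the model, define the boundary.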

Third, they’re designed for single-task optimization. Each agent handles one job well—whether that’s summarizing documents, routing support tickets, or analyzing sentiment. Cross-domain reasoning isn’t the objective.

What Is Agentic AI?

Agentic AI represents a paradigm shift from task executors to goal-oriented problem solvers. Instead of single agents performing isolated functions, agentic systems deploy multiple coordinating agents that adapt their approach based on evolving conditions.

Research including work from the Tata Institute of Social Sciences characterizes agentic AI as collaborative ecosystems where agents share memory, coordinate actions, and collectively pursue complex objectives that no single agent could achieve independently.

The architecture introduces orchestration layers that manage agent communication, resource allocation, and conflict resolution. Agents don’t just execute—they plan, delegate, verify, and iterate until goals are met.
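A toy orchestrator makes the plan, delegate, verify, iterate cycle concrete. This is a sketch under simplifying assumptions: the “agents” are plain Python functions keyed by skill name, `plan` is hard-coded, and `verify` is a stand-in check; production systems typically use LLM calls for planning and a separate critic or test step for verification.

```python
from typing import Callable, Dict, List

# Hypothetical worker "agents": plain functions keyed by skill name.
Worker = Callable[[str], str]


def plan(goal: str) -> List[str]:
    # Planning: decompose the goal into subtasks (hard-coded for illustration).
    return [f"search: {goal}", f"summarize: {goal}"]


def verify(result: str) -> bool:
    # Verification: a stand-in check; real systems might use a critic model.
    return bool(result) and "error" not in result


def orchestrate(goal: str, workers: Dict[str, Worker], max_rounds: int = 3) -> Dict[str, str]:
    pending = plan(goal)
    done: Dict[str, str] = {}
    for _ in range(max_rounds):
        retry = []
        for task in pending:
            skill = task.split(":", 1)[0]
            result = workers[skill](task)  # delegate to the matching worker
            if verify(result):
                done[task] = result
            else:
                retry.append(task)  # iterate: failed subtasks go into the next round
        if not retry:
            break
        pending = retry
    return done


attempts = {"search": 0}


def flaky_search(task: str) -> str:
    # Fails on the first call, succeeds afterwards.
    attempts["search"] += 1
    return "error: timeout" if attempts["search"] == 1 else f"3 sources for {task}"


workers = {"search": flaky_search, "summarize": lambda t: f"summary of {t}"}
result = orchestrate("vendor options", workers)
print(len(result))  # 2
```

The `max_rounds` cap matters: without it, a subtask that never passes verification would keep the orchestrator retrying forever.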

Real talk: this isn’t just about throwing more agents at a problem. It’s about emergent intelligence through coordination. According to Anthropic’s engineering documentation, multi-agent research systems excel especially at breadth-first queries that involve pursuing multiple independent directions simultaneously.

MIT Sloan’s analysis describes agentic AI as systems that are “semi- or fully autonomous and thus able to perceive, reason, and act on their own,” marking a clear evolution beyond the prompt-response patterns of earlier generative AI implementations.

The Architectural Evolution

Where traditional AI agents use linear workflows, agentic AI introduces hierarchical and networked structures. A main coordinating agent might orchestrate specialized subagents, each handling deep technical work or tool-based information retrieval.

According to Anthropic’s engineering documentation, each subagent might explore extensively using tens of thousands of tokens, but returns only condensed summaries of 1,000-2,000 tokens to the main agent. This context management strategy prevents overwhelming the orchestration layer while enabling thorough investigation.
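The context-management idea can be illustrated with a token budget on what each subagent returns. This sketch makes two loud assumptions: tokens are approximated as whitespace-separated words (real systems use the model’s own tokenizer), and `condense` simply truncates, standing in for an actual LLM summarization call. The 1,500-token budget is an arbitrary value inside the range mentioned above.

```python
from typing import List

SUMMARY_BUDGET = 1_500  # arbitrary choice within the 1,000-2,000 token range


def count_tokens(text: str) -> int:
    # Rough approximation: one token per whitespace-separated word.
    return len(text.split())


def condense(findings: str, budget: int = SUMMARY_BUDGET) -> str:
    # Stand-in for an LLM summarization call: keep the first `budget` words.
    return " ".join(findings.split()[:budget])


def subagent_report(raw_findings: List[str]) -> str:
    # The subagent may read a lot, but only its condensed report leaves its context.
    return condense(" ".join(raw_findings))


def orchestrator_context(reports: List[str]) -> str:
    # The lead agent sees only the condensed reports, one per subagent.
    return "\n\n".join(reports)


raw = ["word"] * 30_000  # roughly 30k tokens explored by one subagent
report = subagent_report(raw)
print(count_tokens(report))  # 1500
```

With three such subagents, the orchestrator’s context grows by about 4,500 tokens instead of about 90,000.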

The system maintains a shared state across agents. Memory isn’t siloed—agents can access previous findings, build on each other’s work, and avoid redundant exploration. This collaborative memory transforms isolated tool usage into coherent problem-solving.
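A shared memory store can be as simple as a thread-safe dictionary plus a “claim” mechanism so that two agents don’t investigate the same topic. The `SharedMemory` class and its `claim`/`record`/`recall` methods below are hypothetical names for illustration, not part of any specific agent framework.

```python
import threading
from typing import Dict, Optional, Set


class SharedMemory:
    """Minimal shared state visible to every agent in the system (hypothetical)."""

    def __init__(self) -> None:
        self._findings: Dict[str, str] = {}
        self._claimed: Set[str] = set()
        self._lock = threading.Lock()

    def claim(self, topic: str) -> bool:
        # True only for the first agent to claim a topic, so others skip it.
        with self._lock:
            if topic in self._claimed:
                return False
            self._claimed.add(topic)
            return True

    def record(self, topic: str, finding: str) -> None:
        with self._lock:
            self._findings[topic] = finding

    def recall(self, topic: str) -> Optional[str]:
        # May be None if the owning agent has not recorded its finding yet.
        with self._lock:
            return self._findings.get(topic)


memory = SharedMemory()


def research(agent: str, topic: str) -> str:
    if not memory.claim(topic):
        # Another agent already owns this topic: build on its work instead.
        return f"{agent} reused: {memory.recall(topic)}"
    memory.record(topic, f"notes on {topic} by {agent}")
    return f"{agent} explored {topic}"


print(research("agent-a", "pricing"))  # agent-a explored pricing
print(research("agent-b", "pricing"))  # agent-b reused: notes on pricing by agent-a
```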

Key Differences That Matter

Now, this is where it gets interesting. The distinctions between AI agents and agentic AI aren’t just semantic—they fundamentally change what’s possible.

| Characteristic | AI Agents | Agentic AI |
|---|---|---|
| Autonomy Level | Operate within predefined frameworks; require human intervention for complex decisions | Can function with limited oversight, self-correct, and adapt strategies dynamically |
| Learning Capability | Static or periodic model updates, minimal runtime adaptation | Continuous learning from interactions, environmental feedback, and agent collaboration |
| Task Scope | Single-task optimization, domain-specific execution | Multi-domain coordination, complex goal decomposition, cross-functional problem solving |
| Decision Architecture | Rule-based or pattern-matching within constraints | Strategic planning, reasoning chains, multi-step problem decomposition |
| Collaboration Model | Isolated execution, minimal inter-agent communication | Networked agents with shared memory, delegation, and conflict resolution |

Autonomy and Agency

The autonomy gap is substantial. AI agents execute tasks when triggered. Agentic systems pursue objectives proactively, determining not just how to complete a task but whether it’s the right task to begin with.

OpenAI’s practical guide on building governed AI agents emphasizes that agentic scaffolding requires rethinking control mechanisms. Instead of permission-based workflows, organizations implement governed autonomy—agents operate independently within organizational policies encoded as constraints rather than checklists.
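The difference between checklists and constraints can be shown with policies expressed as predicates: the agent acts without asking for approval at each step, and only actions that violate a constraint are blocked. The policy names, the $5,000 limit, and the vendor list below are hypothetical examples, not taken from OpenAI’s guide.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class ProposedAction:
    kind: str
    amount_usd: float
    vendor: str


# Hypothetical organizational policies, each a constraint the action must satisfy.
Policy = Callable[[ProposedAction], bool]

POLICIES: Dict[str, Policy] = {
    "spend_limit": lambda a: a.amount_usd <= 5_000,
    "approved_vendor": lambda a: a.vendor in {"acme", "globex"},
    "no_contract_signing": lambda a: a.kind != "sign_contract",
}


def violated_policies(action: ProposedAction) -> List[str]:
    # The agent proceeds freely unless a constraint fails; no per-step approval.
    return [name for name, check in POLICIES.items() if not check(action)]


order = ProposedAction(kind="purchase", amount_usd=1_200, vendor="acme")
print(violated_policies(order))  # []
big = ProposedAction(kind="purchase", amount_usd=9_000, vendor="initech")
print(violated_policies(big))  # ['spend_limit', 'approved_vendor']
```

Returning the names of violated policies, rather than a bare yes/no, gives the escalation path something concrete to show a human reviewer.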

This shift mirrors the principal-agent framework from economics. As research from UC Berkeley’s California Management Review explains, agentic AI introduces principal-agent dynamics where organizations must balance granting autonomy against maintaining accountability.

Learning and Adaptation

Traditional AI agents are trained once and deployed. Updates happen through retraining cycles managed by data scientists. The agent doesn’t improve from individual interactions—it applies what it learned during training.

Agentic AI systems incorporate feedback loops that enable runtime learning. When an agent encounters a novel scenario, it doesn’t just log an error—it explores alternative approaches, tests hypotheses, and incorporates successful strategies into its operational model.

But wait. This doesn’t mean agentic systems are completely autonomous learners. They still operate within safety boundaries and governance frameworks. The learning happens within controlled parameters that prevent drift or unintended optimization.

Architectural Complexity

Single-agent architectures are conceptually straightforward. One model, one set of tools, one execution context. Debugging, testing, and deployment follow familiar software engineering patterns.

Agentic systems introduce orchestration challenges. How do you manage state across multiple agents? What happens when agents reach conflicting conclusions? How do you attribute decisions in a collaborative system?
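For the conflicting-conclusions question, one simple policy (among many) is a strict-majority vote with escalation on ties or weak agreement. The `resolve` function and the 0.5 quorum are illustrative assumptions; other systems weight votes by confidence or delegate the decision to a dedicated judge agent.

```python
from collections import Counter
from typing import Dict, Optional


def resolve(conclusions: Dict[str, str], quorum: float = 0.5) -> Optional[str]:
    """Return the majority conclusion, or None to signal escalation."""
    if not conclusions:
        return None
    counts = Counter(conclusions.values())
    answer, votes = counts.most_common(1)[0]
    # Require a strict majority; ties or weak agreement go to a human reviewer.
    if votes / len(conclusions) > quorum:
        return answer
    return None


print(resolve({"agent-a": "vendor X", "agent-b": "vendor X", "agent-c": "vendor Y"}))  # vendor X
print(resolve({"agent-a": "vendor X", "agent-b": "vendor Y"}))  # None
```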

Anthropic’s engineering team highlights context engineering as a critical discipline. Building effective agentic systems requires carefully curating what information each agent receives, how agents summarize findings for coordination, and when to compress or expand context windows.

Practical Applications and Use Cases

The theoretical distinctions translate into practical differences in deployment scenarios and outcomes.

Where Traditional AI Agents Excel

AI agents dominate in scenarios with clear inputs, predictable workflows, and well-defined success criteria. Customer service chatbots that route inquiries, code completion assistants that suggest syntax, or document classifiers that tag content all leverage AI agent architecture effectively.

These implementations deliver immediate ROI because they automate repetitive cognitive tasks without requiring complex orchestration. The agent does one thing well, integrates into existing systems, and scales horizontally by adding more instances.

Many experts suggest that for organizations beginning AI adoption, starting with focused AI agents provides lower risk and faster time-to-value than jumping directly to agentic architectures.

Where Agentic AI Shines

Agentic AI addresses scenarios traditional agents can’t handle: complex research tasks requiring synthesis across multiple sources, strategic planning that involves evaluating trade-offs, or adaptive workflows where requirements change based on intermediate results.

Anthropic’s multi-agent research system demonstrates this capability. The system doesn’t just retrieve information—it formulates search strategies, evaluates source credibility, identifies knowledge gaps, and iteratively refines its understanding until the research objective is satisfied.

Similarly, Harvard Business School research on leadership in an agentic AI world describes how executives can deploy agentic systems as digital support teams that handle parallel workstreams, surface insights from disparate data sources, and maintain continuity across long-horizon projects.

In procurement scenarios mentioned in MIT Sloan’s analysis, agentic AI delivers value by reading reviews, analyzing metrics, and comparing attributes across numerous vendors—tasks that involve substantial evaluation effort and multiple decision criteria.

Implementation Challenges and Considerations

Both approaches come with trade-offs that impact development complexity, operational costs, and organizational readiness.

AI Agent Implementation Challenges

Traditional AI agents face scalability limits when task complexity increases. Each edge case requires explicit handling, leading to brittle systems that break under novel conditions.

They also struggle with context retention. Without persistent memory across interactions, agents can’t build understanding over time or reference previous conversations meaningfully. Every interaction starts from zero.

Integration complexity grows linearly with the number of agents deployed. If you’re running 50 specialized agents, you’re managing 50 separate systems with individual monitoring, updates, and failure modes.

Agentic AI Implementation Challenges

Agentic systems introduce orchestration overhead. Managing communication between agents, preventing infinite loops, and ensuring convergence toward goals requires sophisticated coordination logic that doesn’t exist in single-agent designs.
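One common way to prevent infinite loops is to combine a hard step budget with detection of repeated states. The sketch assumes the system’s state can be represented as a single hashable value, which is a simplification; `run_until_converged` and `LoopDetected` are hypothetical names.

```python
from collections.abc import Callable, Hashable


class LoopDetected(RuntimeError):
    pass


def run_until_converged(
    step: Callable[[Hashable], Hashable],
    state: Hashable,
    is_goal: Callable[[Hashable], bool],
    max_steps: int = 20,
) -> Hashable:
    """Advance the state until the goal is reached, stopping on a repeated
    state or an exhausted step budget."""
    seen = {state}
    for _ in range(max_steps):
        if is_goal(state):
            return state
        state = step(state)
        if state in seen:
            # The agents are cycling, e.g. handing the same task back and forth.
            raise LoopDetected(f"state {state!r} repeated")
        seen.add(state)
    if is_goal(state):
        return state
    raise LoopDetected(f"no convergence within {max_steps} steps")


print(run_until_converged(lambda s: s + 1, 0, lambda s: s == 5))  # 5

ping_pong = lambda s: "agent-b" if s == "agent-a" else "agent-a"
try:
    run_until_converged(ping_pong, "agent-a", lambda s: False)
except LoopDetected as err:
    print(err)  # state 'agent-a' repeated
```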

Debugging becomes substantially harder. When a multi-agent system produces an incorrect result, tracing the error requires examining agent interactions, shared state mutations, and decision chains across the collaborative network.

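Cost considerations shift too. Running multiple agents simultaneously consumes more computational resources than single-agent execution, and token usage multiplies when agents explore different solution paths in parallel. Anthropic has reported that, in its data, agents use about 4x more tokens than chat interactions, and multi-agent systems about 15x more.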
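The arithmetic behind that multiplication is simple: each subagent explores in its own context, so token usage adds up across agents. The figures below are hypothetical inputs chosen only to show the calculation, not measurements.

```python
def estimate_run_tokens(orchestrator_tokens: int, subagents: int, tokens_per_subagent: int) -> int:
    # Every subagent works in its own context window, so usage adds up.
    return orchestrator_tokens + subagents * tokens_per_subagent


single = estimate_run_tokens(20_000, 0, 0)      # one agent, no subagents
multi = estimate_run_tokens(20_000, 5, 40_000)  # orchestrator plus five subagents
print(multi, multi / single)  # 220000 11.0
```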

Stanford’s DigiChina research on how China approaches agentic AI notes that while Chinese developers are actively building agentic systems, specific governance and regulation frameworks are still nascent—a challenge facing the global industry.

The Practical Business Implications

So what does this mean for organizations evaluating AI investments? The choice between AI agents and agentic AI isn’t binary—it’s about matching architecture to requirements.

When to Choose AI Agents

Start with AI agents when you have clearly scoped automation targets. If the task can be described with a flowchart and doesn’t require cross-domain reasoning, traditional agents deliver faster ROI with lower implementation risk.

They’re ideal for augmenting existing workflows rather than reimagining processes. Drop an AI agent into your support queue to handle tier-one questions, freeing human agents for complex cases.

Organizations with limited AI expertise should begin here. The learning curve is gentler, failure modes are more predictable, and the technology is more mature.

When to Choose Agentic AI

Agentic AI makes sense for strategic initiatives where complexity justifies the investment. Research projects, market analysis, strategic planning, and other knowledge work that requires synthesis across multiple information sources benefit from multi-agent collaboration.

Consider agentic approaches when human experts currently spend significant time coordinating information gathering, evaluating options, and iterating toward solutions. That coordination overhead is exactly what agentic systems can automate.

Organizations with mature AI capabilities and robust governance frameworks are better positioned to deploy agentic systems successfully. The technology demands more sophisticated monitoring, clearer policy definition, and deeper technical expertise.

The Hybrid Approach

In practice, most organizations will run both. Specialized AI agents handle routine tasks while agentic systems tackle complex initiatives. The key is recognizing which architecture fits which problem.

ISACA’s analysis emphasizes that understanding these architectural differences matters for organizational decision-making. Choosing the wrong approach leads to over-engineered solutions that waste resources or under-powered systems that can’t deliver promised value.

Turning AI Concepts into Working Systems? Talk to A-listware

In discussions like agentic AI vs AI agents, most attention goes to concepts and architecture. In practice, the challenge is turning those ideas into working systems – setting up services, integrating components, and making everything stable in production. A-listware focuses on software development and dedicated engineering teams that handle this part, from planning and architecture to development, deployment, and support.

When moving from theory to real use, the work usually sits around the AI layer – building applications, managing data, and connecting systems. A-listware supports the full development cycle, including custom software, cloud applications, and ongoing maintenance, so projects don’t stall after the initial concept. If you’re working on agentic systems or AI agents, talk to A-listware and see how to turn the concept into something that actually runs.

Future Trajectory and Evolution

The research landscape suggests both paradigms will continue evolving, but agentic AI represents the direction of travel for advanced AI capabilities.

According to industry projections, significant portions of organizations are expected to develop some form of AI orchestration capability by 2027—the foundation for agentic systems.

Look, the infrastructure is maturing rapidly. Cloud providers are adding native support for multi-agent workflows. Development frameworks are abstracting orchestration complexity. Governance tools are emerging to manage autonomous agent behavior at scale.

But traditional AI agents aren’t disappearing. They’re becoming more capable within their domains while agentic systems handle increasingly complex coordination challenges. The distinction will sharpen rather than blur.

NIST’s Center for AI Standards and Innovation is actively working on securing AI agents and systems, suggesting that governance frameworks will evolve alongside technical capabilities to enable safer deployment of autonomous AI.

Making the Right Choice for Your Context

The decision framework comes down to a few critical questions: What’s the scope of autonomy required? How much coordination complexity exists in the target workflow? What level of adaptability do you need?

If answers point toward narrow tasks with clear success criteria, AI agents deliver faster results with less architectural complexity. If answers involve multi-step reasoning, dynamic replanning, or cross-domain synthesis, agentic AI becomes worth the additional investment.
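The framework can be written down as a small heuristic, which helps when triaging a long list of candidate use cases. The field names and the ordering of checks are assumptions that mirror the questions above; treat the output as a starting point for discussion, not a verdict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    narrow_task: bool             # small scope of autonomy required
    clear_success_criteria: bool
    multi_step_reasoning: bool
    dynamic_replanning: bool
    cross_domain_synthesis: bool


def recommend(case: UseCase) -> str:
    """Map answers to the framework's questions onto a starting architecture."""
    if case.multi_step_reasoning or case.dynamic_replanning or case.cross_domain_synthesis:
        return "agentic AI"
    if case.narrow_task and case.clear_success_criteria:
        return "AI agent"
    return "needs further scoping"


ticket_routing = UseCase(True, True, False, False, False)
market_analysis = UseCase(False, False, True, True, True)
print(recommend(ticket_routing))   # AI agent
print(recommend(market_analysis))  # agentic AI
```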

That said, don’t let architectural enthusiasm override practical constraints. Agentic AI requires more engineering sophistication, deeper governance consideration, and higher operational overhead. Organizations should build that capability deliberately rather than rushing adoption.

The terminology distinction between AI agents and agentic AI reflects a genuine architectural divide. Understanding that divide enables better technology decisions, more realistic project scoping, and clearer alignment between business objectives and AI capabilities.

Frequently Asked Questions

- What’s the main difference between AI agents and agentic AI?

AI agents are individual systems that execute specific tasks with limited autonomy, while agentic AI consists of multiple coordinating agents that pursue complex goals with higher autonomy, shared memory, and adaptive planning. The key distinction lies in collaboration architecture and decision-making sophistication.

- Can AI agents work together like agentic AI systems?

Traditional AI agents can be connected through APIs and workflow tools, but they lack the orchestration layers, shared context, and dynamic coordination that define agentic systems. Simply linking multiple agents doesn’t create agentic AI—the architecture requires purpose-built coordination mechanisms.

- Is agentic AI always better than using AI agents?

Not necessarily. Agentic AI introduces complexity, cost, and orchestration overhead that may not be justified for straightforward automation tasks. AI agents often deliver better ROI for well-defined, single-domain problems. The right choice depends on task complexity and organizational capabilities.

- How much more expensive is agentic AI to implement?

Costs vary significantly with system complexity, but as a rough estimate, agentic implementations may require 3-5x more engineering effort for orchestration, monitoring, and governance than single-agent deployments. Runtime costs also rise because of parallel agent execution and higher token consumption.

- What skills do teams need to build agentic AI systems?

Building agentic systems requires expertise in distributed systems architecture, prompt engineering, context management, and AI governance. Teams need experience debugging complex agent interactions and implementing coordination logic—capabilities beyond what’s needed for traditional AI agent development.

- Are there governance concerns specific to agentic AI?

Yes. Agentic systems introduce accountability challenges because decisions emerge from agent collaboration rather than single-agent execution. Organizations must implement traceability mechanisms, define boundaries for autonomous decision-making, and establish protocols for when systems should escalate to human oversight.
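A traceability mechanism can start as an append-only log in which every event records the agent, its action, its rationale, and the event that triggered it. Walking those parent links attributes a final decision to the chain of agents that produced it. The `DecisionTrace` class below is a hypothetical sketch, not a specific governance product.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class TraceEvent:
    agent: str
    action: str
    rationale: str
    parent: Optional[int]  # index of the event that triggered this one
    timestamp: float = field(default_factory=time.time)


class DecisionTrace:
    """Append-only log that lets reviewers attribute a decision to agents."""

    def __init__(self) -> None:
        self.events: List[TraceEvent] = []

    def log(self, agent: str, action: str, rationale: str, parent: Optional[int] = None) -> int:
        self.events.append(TraceEvent(agent, action, rationale, parent))
        return len(self.events) - 1

    def lineage(self, index: int) -> List[str]:
        # Walk parent links back to the root to see which agents contributed.
        chain = []
        current: Optional[int] = index
        while current is not None:
            event = self.events[current]
            chain.append(f"{event.agent}: {event.action}")
            current = event.parent
        return list(reversed(chain))

    def to_json(self) -> str:
        return json.dumps([asdict(e) for e in self.events])


trace = DecisionTrace()
root = trace.log("orchestrator", "compare vendors", "user goal")
research = trace.log("researcher", "collect pricing", "assigned subtask", parent=root)
final = trace.log("orchestrator", "select acme", "lowest verified price", parent=research)
print(trace.lineage(final))
# ['orchestrator: compare vendors', 'researcher: collect pricing', 'orchestrator: select acme']
```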

- Will AI agents eventually become obsolete?

No. Specialized AI agents will continue serving focused use cases where their simplicity offers advantages. The trend is toward hybrid architectures where AI agents handle routine tasks while agentic systems tackle complex coordination challenges. Both paradigms have enduring value.

Conclusion

The distinction between agentic AI and AI agents isn’t just terminology—it represents fundamentally different approaches to building intelligent systems. AI agents excel at focused automation within defined parameters. Agentic AI unlocks collaborative problem-solving for complex, multi-step challenges requiring coordination and adaptation.

Understanding this difference enables better architecture decisions, more realistic project planning, and clearer alignment between AI capabilities and business needs. The choice isn’t which paradigm wins, but which fits your specific context and organizational maturity.

As adoption accelerates and frameworks mature, organizations that thoughtfully match AI architecture to problem complexity will extract substantially more value than those treating all AI as interchangeable. Start by mapping your use cases to the right architectural pattern, then build your capabilities deliberately from that foundation.