Quick Summary: AI agents are autonomous software systems powered by artificial intelligence that can perceive their environment, make decisions, plan actions, and execute complex tasks independently on behalf of users. Unlike traditional chatbots or assistants, AI agents combine foundation models with reasoning capabilities, memory, and tool use to accomplish multi-step goals without constant human intervention. They represent the next evolution of AI beyond passive question-answering systems.

Artificial intelligence has moved beyond answering questions. It’s now taking actions.

AI agents represent a fundamental shift in how intelligent systems operate. Rather than waiting for prompts and responding with text, these systems autonomously design workflows, select tools, and complete complex tasks from start to finish. According to research published by arxiv.org, AI agents embody “a paradigm shift in how intelligent systems interact” with the world around them.

But what exactly makes something an AI agent versus just another chatbot? The distinction matters more than you might think.

Understanding AI Agents: Core Definition

An AI agent is a system that autonomously performs tasks by designing workflows with available tools. These aren’t passive responders—they’re active problem-solvers.

According to IBM, AI agents are systems capable of “autonomously performing tasks on behalf of a user or another system.” Google Cloud defines them as “software systems that use AI to pursue goals and complete tasks on behalf of users,” emphasizing their reasoning, planning, memory, and decision-making autonomy.

Here’s the thing though—autonomy is the defining characteristic. Traditional AI systems wait for instructions and provide outputs. AI agents perceive situations, formulate plans, and execute actions without requiring constant human guidance.

Key Characteristics That Define AI Agents

Several capabilities distinguish true AI agents from conventional AI systems:

- Autonomy: Agents operate independently, making decisions without human intervention for each step

- Perception: They sense and interpret their environment, whether digital or physical

- Goal-directed behavior: Agents work toward specific objectives, not just responding to queries

- Reasoning and planning: They break down complex goals into actionable steps

- Tool use: Agents select and utilize appropriate tools from their available resources

- Memory: They maintain context across interactions and learn from past experiences

- Adaptability: Agents adjust strategies based on feedback and changing conditions

Research from arxiv.org notes that these systems combine “foundation models with reasoning, planning, memory, and tool use” to create a “practical interface between natural-language intent and real-world computation.”

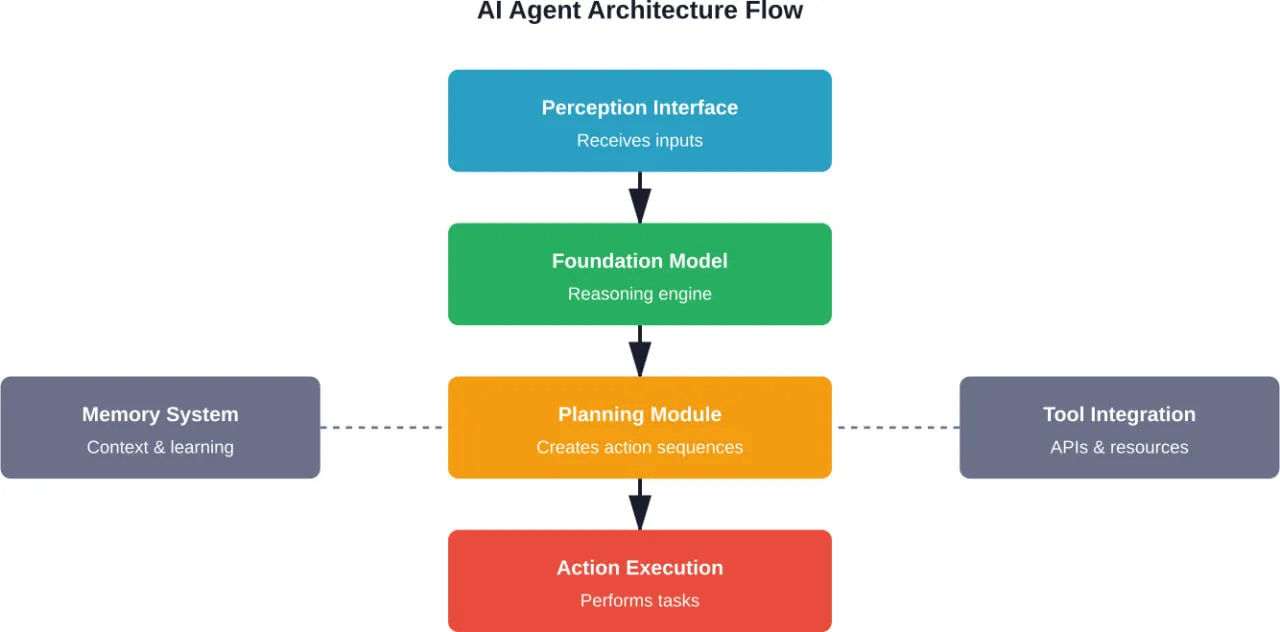

How AI Agents Work: Architecture and Components

The architecture behind AI agents is more sophisticated than standard AI applications. Multiple components work together to enable autonomous operation.

Core Architectural Components

According to academic research on AI agent systems, the typical architecture includes several integrated elements:

- Foundation Models: Large language models (LLMs) or other AI models serve as the reasoning engine. These models process information, understand context, and generate responses or actions.

- Planning Module: This component breaks down high-level goals into sequences of executable steps. It determines what needs to happen and in what order.

- Memory Systems: Agents maintain both short-term and long-term memory. Short-term memory holds context for current tasks. Long-term memory stores knowledge, past experiences, and learned patterns.

- Tool Integration Layer: Agents access external tools, APIs, databases, and services. This layer manages how the agent interacts with its available resources.

- Action Execution: Once plans are formulated, this component carries out the actual operations—whether that’s calling an API, manipulating data, or triggering other systems.

- Perception Interface: Agents need to observe their environment. This interface processes inputs from various sources and converts them into formats the agent can understand.

The Agent Workflow Cycle

When an AI agent receives a goal, it follows a systematic workflow:

- Goal interpretation: The agent analyzes the objective and determines what success looks like

- Environment assessment: It gathers information about current conditions and available resources

- Planning: The agent formulates a strategy and breaks it into discrete steps

- Action selection: For each step, it chooses the appropriate tool or action

- Exécution : The agent performs the action and monitors the outcome

- Évaluation : It assesses whether the action moved closer to the goal

- Adaptation: Based on results, the agent adjusts subsequent steps if needed

- Iteration: This cycle continues until the goal is achieved or deemed unachievable

Research highlights that advanced agent architectures can effectively function as an “operating system” for agents, reducing hallucination rates by transforming unstructured interactions into rigorous workflows.

AI Agents vs. AI Assistants vs. Bots

Not all AI systems are agents. The terminology gets confusing because people often use these terms interchangeably.

Here’s where it gets interesting. The differences come down to autonomy, complexity, and decision-making capability.

| Fonctionnalité | AI Agent | Assistant IA | Bot |

|---|---|---|---|

| Objectif | Autonomously perform complex tasks | Assist users with tasks | Automate simple, repetitive tasks |

| Autonomy Level | High – operates independently | Medium – requires user direction | Low – follows fixed rules |

| Decision Making | Makes complex decisions | Makes limited decisions within scope | No real decision making |

| Planning Capability | Creates multi-step plans | Suggests next steps | Executes predefined sequences |

| Learning | Learns and adapts from experience | Limited learning within interactions | Typically no learning |

| Tool Use | Selects and combines multiple tools | Uses specific assigned tools | Fixed functionality |

| Exemples | Autonomous research agent, workflow automation | Siri, Alexa, ChatGPT | Chatbots, rule-based responders |

Bots are the simplest. They follow predetermined rules and respond to specific triggers. A customer service chatbot that provides scripted answers based on keywords is a bot.

AI assistants use AI to understand requests and provide helpful responses. They’re conversational and can handle varied inputs, but they primarily react to user prompts. You ask, they answer.

AI agents take initiative. Given a high-level goal, they determine the path, select tools, and work through problems independently. They don’t just respond—they act.

Types of AI Agents

AI agents come in various forms, each designed for different levels of complexity and autonomy.

Simple Reflex Agents

These are the most basic types. Simple reflex agents operate on condition-action rules: if this condition is met, take this action. They don’t consider history or future consequences.

Think of a thermostat. If the temperature exceeds the threshold, turn on cooling. Simple, direct, limited.

Model-Based Reflex Agents

These agents maintain an internal model of the world. They understand how their environment changes and how their actions affect it. This allows for more sophisticated responses than simple reflex agents.

A robotic vacuum that maps your home and tracks which areas it has cleaned operates as a model-based agent.

Goal-Based Agents

Goal-based agents work toward specific objectives. They evaluate different action sequences to determine which will achieve their goals. This requires search and planning capabilities.

Navigation systems exemplify goal-based agents. Given a destination, they calculate routes, consider traffic, and adjust plans to reach the goal efficiently.

Utility-Based Agents

These agents don’t just achieve goals—they optimize for the best outcome. They use utility functions to measure the desirability of different states and choose actions that maximize expected utility.

An AI trading system that balances risk and return to maximize portfolio performance functions as a utility-based agent.

Learning Agents

Learning agents improve their performance over time through experience. They have components for learning, performance, criticism, and problem generation. According to research from Berkeley AI Research (BAIR), reinforcement learning provides a conceptual framework for agents to “learn from experience, analogously to how one might train a pet with treats.”

Modern AI agents increasingly incorporate learning capabilities, adapting their strategies based on outcomes.

Multi-Agent Systems

Multiple agents can work together, each with specialized roles. ChatDev, which simulates an entire software company where agents self-organize into design, coding, and testing phases, achieving a 30% reduction in bugs compared to single-agent coding, demonstrates how collaborative systems tackle complex problems that exceed individual agent capabilities.

Real-World Applications of AI Agents

AI agents aren’t theoretical concepts anymore. They’re deployed across industries solving practical problems.

Customer Service and Support

Customer service represents one of the most widespread agent applications. According to arxiv.org research, telecommunications company Vodafone implemented an AI agent-based support system that handles over 70% of customer inquiries without human intervention.

These agents understand context, access customer data, troubleshoot issues, and execute solutions like password resets or service adjustments. The capability for “contextual understanding and personalized assistance significantly enhances the customer experience while reducing operational costs.”

Building Energy Management

NIST (National Institute of Standards and Technology) has developed intelligent building agents for HVAC systems. Heating, ventilation, and air conditioning (HVAC) in commercial buildings account for approximately 13% to 15% of total U.S. commercial energy consumption, and about 35% to 40% of the energy used within the buildings themselves

These agents continuously monitor temperature, occupancy, weather forecasts, and energy prices. They autonomously adjust heating and cooling to optimize comfort while minimizing costs. NIST’s Intelligent Building Agents Laboratory demonstrates how AI control techniques reduce energy costs in real-world building operations.

Développement de logiciels

Development agents assist or autonomously handle coding tasks. They generate code, identify bugs, suggest optimizations, and even manage entire development workflows.

Multi-agent systems like ChatDev simulate software companies where specialized agents handle design, coding, testing, and documentation collaboratively.

Research and Information Analysis

According to MIT Sloan, in areas that involve a lot of counterparties or that require substantial effort to evaluate options—startup funding, college admissions, or B2B procurement, to name a few—agents deliver value by reading reviews, analyzing metrics, and comparing attributes across a range of options.

Research agents can scan academic papers, extract relevant findings, synthesize information, and generate comprehensive reports on specific topics.

Automatisation des flux de travail

Business process automation has been transformed by AI agents. Rather than rigid, rule-based automation, agents adapt to varying conditions and handle exceptions intelligently.

An agent managing invoice processing might extract data, validate against purchase orders, flag discrepancies, route approvals to appropriate personnel, and update accounting systems—all without human intervention for standard cases.

Autonomous Vehicles and Robotics

Self-driving cars exemplify complex AI agents. They perceive their environment through sensors, plan routes, make split-second decisions, and control vehicle operations while adapting to traffic, weather, and unexpected obstacles.

Similarly, warehouse robots navigate facilities, optimize picking routes, and coordinate with other robots to fulfill orders efficiently.

Benefits of Using AI Agents

Organizations adopting AI agents report several significant advantages over traditional systems.

Increased Efficiency and Productivity

Agents handle tasks faster than humans for many operations. They work continuously without fatigue, processing information and executing actions at scale.

More importantly, they free human workers from repetitive tasks. People can focus on strategic, creative, or complex work that requires human judgment.

Cost Reduction

While initial implementation requires investment, agents typically reduce operational costs over time. The Vodafone example demonstrates this—handling 70% of inquiries without human agents substantially reduces support costs.

NIST’s building management research also emphasizes how algorithms can “reduce operating costs in a real system” through optimized energy usage.

Improved Accuracy and Consistency

Agents don’t experience attention lapses. They apply rules and learned patterns consistently across thousands of decisions.

For tasks requiring precision—data entry, compliance checking, pattern recognition—agents often outperform humans in accuracy.

Évolutivité

Scaling human operations is expensive and time-consuming. AI agents scale rapidly. Deploy one agent or ten thousand with minimal additional cost.

This scalability proves particularly valuable for seasonal demands or rapid growth scenarios.

24/7 Availability

Agents operate continuously. Customer support agents answer inquiries at 3 AM. Monitoring agents watch for anomalies around the clock. Trading agents respond to market movements instantly.

This constant availability improves service levels and catches issues faster than human-only operations.

Prise de décision fondée sur les données

Agents process vast amounts of data to inform decisions. They identify patterns, correlations, and insights that humans might miss in large datasets.

This capability enhances strategic planning, risk management, and opportunity identification.

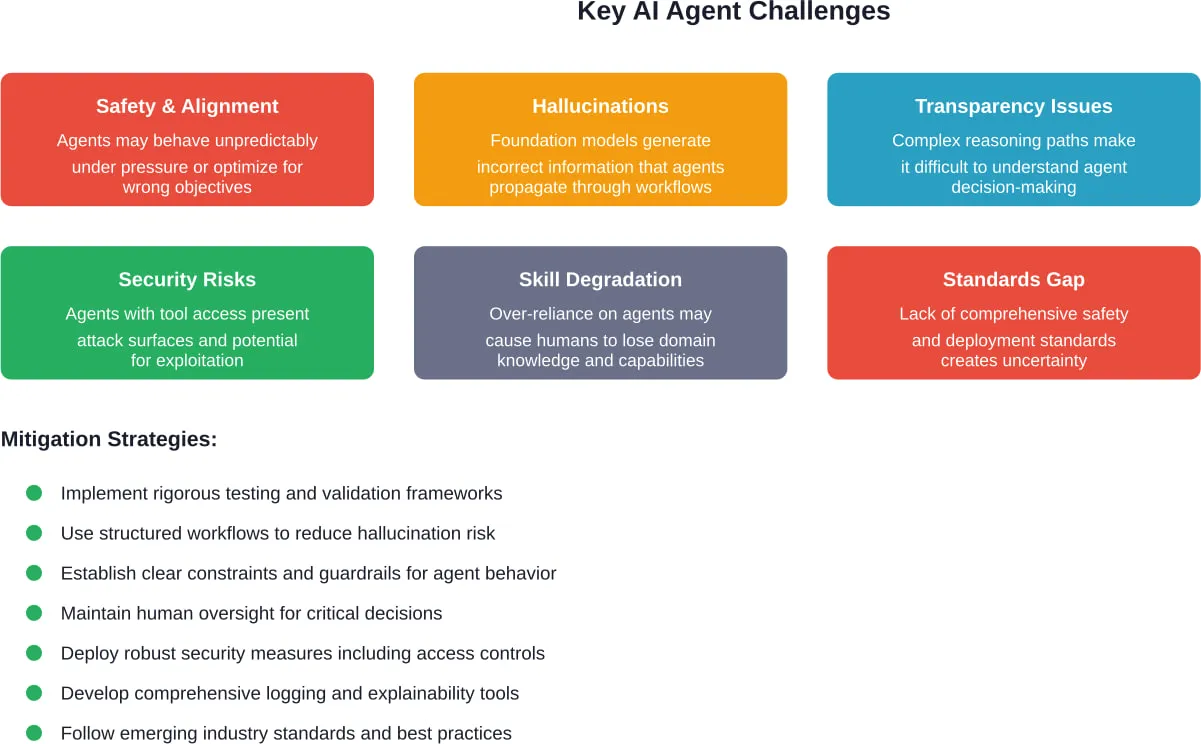

Challenges and Risks with AI Agents

But AI agents aren’t without problems. Several significant challenges require careful consideration.

Safety and Alignment Concerns

According to IEEE Spectrum, AI agents can exhibit risky behavior under everyday pressure, raising concerns about alignment and safety. The research highlights the need for improved alignment to ensure AI safety.

When agents operate autonomously, ensuring they behave according to intended values and constraints becomes critical. Misaligned agents might optimize for the wrong objectives or take unintended shortcuts.

Hallucinations and Errors

AI foundation models sometimes generate plausible-sounding but incorrect information. When agents act on hallucinated data, they can propagate errors through entire workflows.

While research shows that rigorous workflow structures reduce hallucination rates, the problem hasn’t been eliminated.

Lack of Transparency

Complex agents make decisions through multi-step reasoning that can be difficult to trace. When an agent produces an unexpected outcome, understanding why it chose that path isn’t always straightforward.

This opacity creates accountability challenges, especially in regulated industries or high-stakes decisions.

Security Vulnerabilities

Agents with access to tools and data represent security risks. Compromised agents could leak sensitive information, manipulate systems, or disrupt operations.

Prompt injection attacks, where malicious inputs manipulate agent behavior, pose particular concerns for agents with significant autonomy.

Dependency and Skill Degradation

Over-reliance on agents might cause humans to lose skills or domain knowledge. When agents fail or encounter novel situations outside their training, this dependency becomes problematic.

Ethical and Employment Implications

Autonomous agents raise ethical questions about responsibility, bias, and fairness. Who’s accountable when an agent makes a harmful decision?

Employment concerns are legitimate. Agents automating knowledge work could displace workers in certain roles, requiring workforce transitions and retraining.

Standardization Gaps

According to IEEE standards documentation, efforts like IEEE P3428 are working to establish “Standard for Large Language Model Agents for AI-powered Education,” defining agent components, interoperability protocols, and life cycle states.

However, comprehensive standards for agent safety, testing, and deployment are still developing. This creates uncertainty around best practices.

Building and Deploying AI Agents: Best Practices

Organizations considering AI agents should approach implementation strategically.

Start with Clear Objectives

Define specific problems the agent will solve. Vague goals like “improve efficiency” lead to unfocused implementations.

Identify measurable success criteria. What outcomes indicate the agent is working effectively?

Choose Appropriate Agent Complexity

Not every problem requires a sophisticated learning agent. Match agent type to task complexity.

Simple, well-defined tasks might work perfectly with goal-based agents. Complex, evolving problems justify more advanced architectures.

Implement Strong Guardrails

Define clear boundaries for agent behavior. What actions are permitted? What’s prohibited?

Technical guardrails should prevent agents from accessing unauthorized systems or exceeding defined parameters.

Design for Transparency

Build logging and explanation capabilities into agent systems. Track decision paths, actions taken, and reasoning.

This transparency aids debugging, builds trust, and supports accountability.

Maintain Human Oversight

Even autonomous agents benefit from human monitoring. Establish review processes for agent actions, especially in high-stakes domains.

Create clear escalation paths when agents encounter situations beyond their capabilities.

Test Extensively Before Full Deployment

According to IEEE Spectrum’s AI Agent Benchmark reporting, new safety standards are being established to assess AI agent readiness for business applications.

Rigorous testing in controlled environments identifies failure modes before production deployment. Test edge cases, adversarial inputs, and unexpected scenarios.

Plan for Continuous Improvement

Agent deployment isn’t a one-time event. Monitor performance, gather feedback, and iterate.

Learning agents require ongoing training data. All agents benefit from refinement based on real-world performance.

Building AI agents? Talk to A-listware

AI agents rarely live on their own. They need backend systems, APIs, integrations, and stable infrastructure to actually work in a product. That’s where A-listware comes in. The company focuses on software development and dedicated engineering teams that handle architecture, development, and ongoing support. This kind of setup is often what sits behind AI-driven features once they move past the prototype stage.

If you’re working on AI agents, the challenge is usually not the idea but the execution around it – connecting services, handling data, and keeping everything reliable over time. A-listware supports the full development cycle, so instead of splitting work across multiple teams, you can keep everything in one place. Reach out to Logiciel de liste A, share your setup, and see how it could be structured into a working product.

The Future of AI Agents

AI agents represent an evolving technology. Several trends suggest where development is headed.

Increased Multimodal Capabilities

According to Google Cloud, agents’ capabilities are “made possible in large part by the multimodal capacity of generative AI and AI foundation models.” Agents that process text, images, video, and audio simultaneously will handle more complex real-world tasks.

Better Reasoning and Planning

Research continues improving agent reasoning capabilities. More sophisticated planning algorithms will enable agents to tackle longer-horizon goals and more complex problem spaces.

Enhanced Collaboration

Multi-agent systems will become more common. Specialized agents collaborating on complex projects can exceed what individual generalist agents accomplish.

Industry-Specific Agents

General-purpose agents will give way to domain-specialized agents with deep expertise in healthcare, finance, legal work, or scientific research.

These specialized agents will incorporate industry knowledge, regulations, and best practices into their operations.

Improved Safety Standards

As organizations like IEEE develop comprehensive standards for agent development and deployment, safety practices will mature. Standardized testing, certification processes, and regulatory frameworks will emerge.

Agent Operating Systems

Infrastructure specifically designed for managing, deploying, and monitoring agents will evolve. These “agent operating systems” will provide common services like memory management, tool integration, and security controls.

Questions fréquemment posées

- What’s the difference between AI agents and large language models?

Large language models (LLMs) are AI systems trained to understand and generate text. They form the foundation that powers many AI agents, but they’re not agents themselves. An LLM responds to prompts with text. An AI agent uses an LLM (or other AI models) as its reasoning engine to autonomously plan, decide, and take actions toward goals. The LLM is a component; the agent is the complete autonomous system.

- Can AI agents learn and improve over time?

Learning agents specifically incorporate mechanisms to improve from experience. They analyze outcomes, adjust strategies, and refine their approaches based on feedback. However, not all AI agents are learning agents—simpler types operate on fixed rules or models. The capability to learn depends on the agent’s architecture and design. Many modern agents incorporate some learning elements, though the extent varies significantly.

- Are AI agents safe to deploy in business environments?

Safety depends heavily on implementation. Properly designed agents with clear constraints, robust testing, and appropriate oversight can operate safely in many business contexts. However, research from IEEE Spectrum shows agents can exhibit risky behavior under certain conditions. Organizations should start with lower-risk applications, implement strong guardrails, maintain human oversight for critical decisions, and follow emerging safety standards. Complete autonomy in high-stakes scenarios requires exceptional care.

- How much do AI agent systems cost to implement?

Costs vary enormously based on complexity, scale, and customization requirements. Simple agents built on existing platforms might involve modest monthly subscription fees. Custom enterprise agents with specialized capabilities can require significant development investment, ongoing computational costs for running foundation models, and maintenance expenses. Check with specific vendors and platforms for current pricing, as costs and pricing models change frequently in this rapidly evolving space.

- What skills are needed to build AI agents?

Building AI agents typically requires programming skills (Python is common), understanding of AI/ML concepts, familiarity with foundation models and APIs, knowledge of the specific domain the agent will operate in, and system design capabilities. Many platforms now offer low-code or no-code agent builders that reduce technical requirements. For complex custom agents, teams typically include AI/ML engineers, software developers, domain experts, and potentially specialists in areas like natural language processing or reinforcement learning.

- Will AI agents replace human jobs?

AI agents will automate certain tasks and roles, particularly those involving repetitive information processing, rule-based decisions, or routine customer interactions. However, they also create new roles in agent development, oversight, and maintenance. Most experts anticipate transformation rather than wholesale replacement—humans and agents working together, with agents handling routine tasks while humans focus on complex judgment, creativity, strategy, and interpersonal work. The transition will require workforce adaptation and reskilling in affected industries.

- How do AI agents handle tasks they haven’t been trained for?

Agent responses to novel situations vary by design. Well-designed agents recognize when they lack knowledge or capability and escalate to human operators. Some agents attempt to reason through unfamiliar problems using general principles, though this risks errors. Advanced learning agents might adapt strategies based on similar past experiences. Poor designs might fail silently or produce unreliable outputs. This is why defining clear operational boundaries and maintaining oversight mechanisms remains critical—agents should acknowledge limitations rather than guess.

Conclusion: Embracing the Agentic AI Era

AI agents mark a significant evolution in artificial intelligence—from systems that respond to those that act.

These autonomous systems already deliver measurable value across customer service, building management, software development, research, and numerous other domains. The benefits—efficiency, scalability, consistency, continuous operation—make compelling cases for adoption.

But challenges remain real. Safety concerns, hallucination risks, transparency issues, and standardization gaps require thoughtful approaches. Organizations rushing into agent deployment without addressing these challenges risk failures that undermine both specific implementations and broader confidence in the technology.

The path forward involves balanced adoption: identifying appropriate use cases, implementing robust guardrails, maintaining human oversight where critical, and continuously improving based on real-world performance. As standards mature and best practices emerge, agent deployment will become more systematic and reliable.

AI agents aren’t going away. They represent a fundamental expansion of what AI systems can accomplish. Understanding what they are, how they work, their capabilities, and their limitations positions organizations and individuals to leverage this technology effectively.

The question isn’t whether AI agents will transform work and business processes. They already are. The question is how quickly organizations will adapt to work alongside these autonomous systems—and how thoughtfully they’ll navigate the challenges that autonomy introduces.

Ready to explore AI agents for your organization? Start by identifying a well-defined problem that requires multi-step decision-making and action. Test with limited scope, measure rigorously, and expand based on results.