Real-time data processing has a reputation for being expensive, and sometimes that reputation is deserved. But the cost isn’t just about faster pipelines or bigger cloud bills. It’s about the ongoing work required to keep data moving reliably, correctly, and on time.

Many teams budget for infrastructure and tooling, then discover later that engineering time, operational overhead, and design decisions quietly shape the real spend. Others rush into streaming everything, only to realize that not every data flow actually needs to be real-time.

This article takes a practical look at what real-time data processing really costs, why estimates often miss the mark, and how to think about spending in a way that reflects how these systems behave in the real world, not just on architecture diagrams.

So, How Much Does Real-Time Data Processing Actually Cost?

For most teams, real-time data processing is not a single price tag but a monthly operating range shaped by scale, urgency, and complexity. In 2025–2026, realistic end-to-end costs typically fall into the following bands:

- Small, focused setups (1–2 critical streams, managed services): $3,000 to $8,000 per month

- Mid-size production systems (multiple pipelines, SLAs, on-call coverage): $10,000 to $30,000 per month

- Large or business-critical platforms (high volume, strict latency, governance): $40,000 to $80,000+ per month

What matters most is not the exact number, but whether the cost aligns with the value of acting in real time. When speed prevents losses, reduces risk, or unlocks revenue, these numbers often make sense. When it does not, the same spend quickly feels excessive.

The Five Cost Layers of Real-Time Data Processing (With Real Price Ranges)

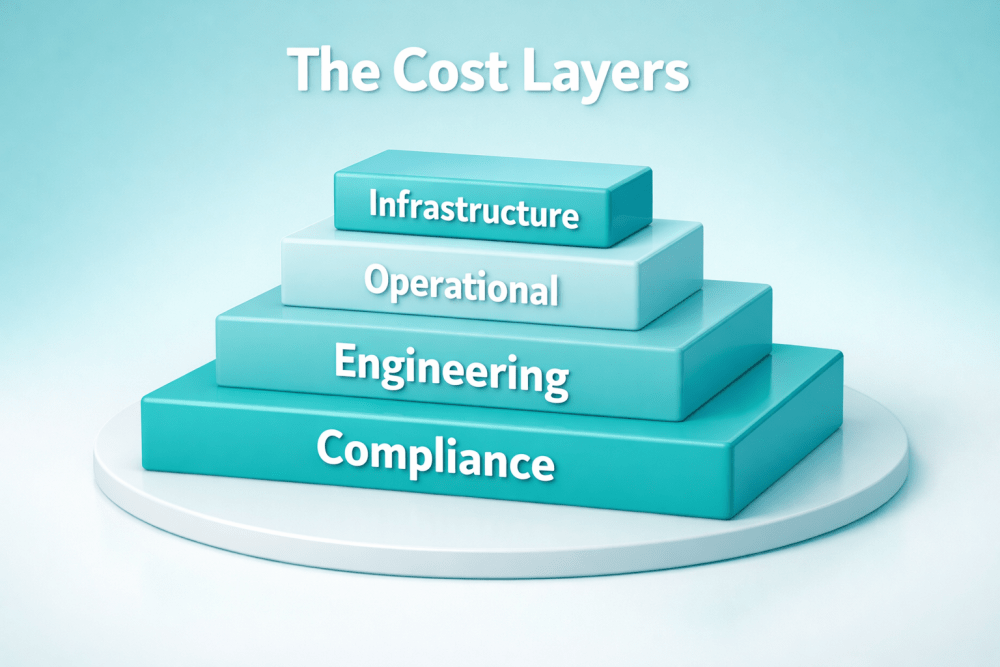

A useful way to understand real-time data processing cost is to break it into layers. Infrastructure is the most visible one, but it is rarely the biggest driver long term. The real spend shows up when all five layers are considered together. The per-layer ranges below are rough, independent estimates, so they will not sum exactly to the overall bands above.

Infrastructure Costs

This is the part most teams start with, because it is easy to measure.

Infrastructure costs include compute, storage, network traffic, and data transfer. In self-managed setups, this usually means virtual machines, disks, load balancers, backups, and replication. In managed platforms, the same costs are bundled into usage-based units, throughput pricing, or subscription tiers.

Typical Monthly Ranges (Rough Guidance)

- Small workloads (up to 100 GB per day): $300 to $1,500 per month

- Mid-scale workloads (500 GB to 1 TB per day): $2,000 to $8,000 per month

- Large or spiky workloads (multiple TB per day): $10,000 to $40,000+ per month

The tricky part is sizing. Real-time systems are usually built for peaks, not averages. If traffic triples for a few hours, the system still needs to keep up. Teams that provision for worst-case scenarios often pay for idle capacity most of the time. Teams that do not provision enough pay later in outages, throttling, or emergency scaling.
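
To make the peak-versus-average trade-off concrete, here is a minimal sketch of how much provisioned capacity sits idle at average load. The function name, the throughput figures, and the 20% headroom are illustrative assumptions, not measurements from any real system.

```python
def idle_capacity_share(avg_mb_per_s: float,
                        peak_mb_per_s: float,
                        headroom: float = 0.2) -> float:
    """Fraction of provisioned throughput that is unused at average load.

    Assumes capacity is sized for the peak plus a safety headroom.
    """
    provisioned = peak_mb_per_s * (1 + headroom)
    return 1 - avg_mb_per_s / provisioned


# Hypothetical workload: traffic triples at peak, 20% headroom on top.
share = idle_capacity_share(avg_mb_per_s=10, peak_mb_per_s=30)
print(f"{share:.0%} of provisioned capacity is idle at average load")
# prints "72% of provisioned capacity is idle at average load"
```

A number like this is only a starting point, but it shows why a system sized for rare spikes can spend most of its budget on capacity that is not in use.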

Managed platforms reduce over-provisioning, but inefficient pipeline design can still drive infrastructure costs up fast.

Operational Costs

Operating real-time systems is not passive work, even when the platform is managed.

Clusters need upgrades. Pipelines need monitoring. Alerts need tuning. Scaling events need oversight. Someone has to respond when latency spikes or consumers fall behind.

Operational cost includes observability tools, incident response, on-call rotations, and the ongoing effort to keep systems stable.

Typical Monthly Ranges

- Lightweight setups with managed platforms: $1,000 to $3,000

- Mid-size production systems: $4,000 to $12,000

- Business-critical or multi-region systems: $15,000 to $30,000+

In self-managed environments, this often translates to at least one dedicated DevOps or platform engineer. In managed environments, it is usually a shared responsibility across teams.

A common mistake is assuming that managed platforms remove operational cost entirely. They reduce it, but they do not eliminate it. Observability, reliability, and integration issues still require real human time.

Engineering Costs

This is where many budgets quietly fall apart.

Real-time pipelines are not set-and-forget systems. Schemas evolve. Producers change behavior. Consumers add new expectations. Connectors break. Edge cases only appear under real traffic.

Engineering time is spent building pipelines, maintaining them, debugging failures, and tuning performance. Streaming expertise is specialized and expensive.

Typical Monthly Ranges (Engineering Time Only)

- Simple use cases with limited scope: $3,000 to $8,000

- Growing systems with multiple pipelines: $10,000 to $25,000

- Complex platforms with many consumers and SLAs: $30,000 to $60,000+

In many organizations, a small group of specialists ends up supporting dozens of pipelines. That concentration of knowledge becomes both a delivery risk and a long-term cost driver. Even when infrastructure is cheap, engineering time rarely is.

Governance and Compliance Costs

Streaming data often includes sensitive or regulated information: personal data, financial events, operational logs, or telemetry tied to users or devices.

Ensuring proper access control, encryption, auditing, retention policies, and compliance adds both tooling and process overhead. Reviews slow down changes. Security incidents trigger audits, documentation work, and remediation.

Typical Monthly Ranges

- Basic security and access controls: $500 to $2,000

- Regulated environments (finance, healthcare, enterprise SaaS): $3,000 to $10,000

- Heavily regulated or audited systems: $15,000+

These costs rarely appear in early estimates because they grow gradually. But once a system becomes business-critical, governance is not optional. It becomes part of the steady baseline cost.

Opportunity Costs

This is the least visible layer and often the most expensive.

When real-time pipelines fail, products stall. When latency spikes, users notice. When engineers spend days fixing streaming issues, they are not building features or improving products.

There is also opportunity cost in over-streaming. Teams that push everything into real-time pipelines often realize later that much of that data did not need immediate processing. They pay ongoing costs for speed that delivers no additional business value.

Typical Impact

- Missed launches or delayed features worth tens of thousands of dollars per month

- Outages or data quality issues causing revenue loss or customer churn

- Engineering capacity tied up in maintenance instead of innovation

Opportunity cost does not show up on cloud invoices, but it shows up in roadmaps, delivery speed, and competitive position.

How We Help Teams Build Cost-Smart Real-Time Data Systems

At A-listware, we work with teams that want real-time data without losing control over cost or complexity. We’ve seen firsthand how streaming systems can quietly grow into something heavier than expected, not because the technology is wrong, but because the setup was rushed or overbuilt. Our role is to help clients design real-time pipelines that match real business urgency, not abstract technical ambition.

We act as an extension of your team, bringing experienced engineers who understand streaming, data platforms, and cloud infrastructure, but also know when real-time is not the right answer. That balance matters. We help define scope early, choose architectures that scale predictably, and avoid the common traps that drive up engineering and operational overhead over time.

Because we work across industries and system sizes, we focus on practical delivery. From building and supporting real-time pipelines to integrating them into existing platforms, we stay close to the work and the outcomes. The goal is simple: systems that perform when they need to, stay reliable under pressure, and make sense financially as they grow.

Real Cost Drivers Teams Commonly Miss

Across the many real-time systems we have reviewed, a few patterns show up again and again.

Over-Streaming

Not every event needs to be processed immediately. Teams often stream everything because it feels future-proof. Later, they realize that only a small subset of data drives time-sensitive decisions.

Filtering earlier in the pipeline saves compute, storage, and operational effort.
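
As a rough illustration of filtering early, the sketch below routes only time-sensitive events to the real-time path and parks everything else for batch processing. The event types and the `route_events` helper are hypothetical; in a real pipeline this logic would usually sit at the producer or in the first processing stage.

```python
from typing import Dict, Iterable, Iterator, List

# Hypothetical event types that drive time-sensitive decisions.
URGENT_TYPES = {"fraud_signal", "payment_failed"}


def route_events(events: Iterable[Dict], batch_sink: List[Dict]) -> Iterator[Dict]:
    """Yield urgent events for the real-time path; park the rest for batch."""
    for event in events:
        if event.get("type") in URGENT_TYPES:
            yield event
        else:
            batch_sink.append(event)


events = [
    {"type": "page_view", "user": 1},
    {"type": "fraud_signal", "user": 2},
    {"type": "page_view", "user": 3},
    {"type": "payment_failed", "user": 4},
]
deferred: List[Dict] = []
urgent = list(route_events(events, deferred))
print(len(urgent), "streamed,", len(deferred), "deferred to batch")
# prints "2 streamed, 2 deferred to batch"
```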

Retention Without Purpose

Keeping months of data in hot storage is expensive. If older data is rarely accessed, moving it to cheaper storage reduces cost without losing value.

Retention should be a business decision, not a default setting.
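
A quick back-of-the-envelope comparison shows how much tiering can matter. The per-GB prices below are placeholders chosen for illustration, not quotes from any provider, and the function name is hypothetical.

```python
def monthly_storage_cost(daily_gb: float,
                         retention_days: int,
                         hot_days: int,
                         hot_price_per_gb: float,
                         cold_price_per_gb: float) -> float:
    """Monthly cost when the newest `hot_days` of data stay in hot storage
    and the rest of the retention window sits in cold storage."""
    hot_gb = daily_gb * min(hot_days, retention_days)
    cold_gb = daily_gb * max(retention_days - hot_days, 0)
    return hot_gb * hot_price_per_gb + cold_gb * cold_price_per_gb


# Placeholder prices: $0.10 per GB-month hot, $0.02 per GB-month cold.
all_hot = monthly_storage_cost(100, 90, 90, 0.10, 0.02)
tiered = monthly_storage_cost(100, 90, 7, 0.10, 0.02)
print(f"All hot: ${all_hot:,.0f} per month; tiered: ${tiered:,.0f} per month")
# prints "All hot: $900 per month; tiered: $236 per month"
```

The exact prices will differ, but the shape of the result usually holds: keeping only the actively queried window in hot storage removes most of the bill.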

Ignoring Engineering Load

Streaming pipelines do not maintain themselves. Every new integration adds long-term maintenance cost. Designing fewer, higher-quality pipelines often costs less than managing many fragile ones.

Treating Cost as Static

Real-time systems evolve. New consumers appear. Data volume grows. Pricing models change. Cost estimates need regular review, not one-time approval.

A Practical Way to Estimate Real-Time Data Costs

Rather than starting with tools or vendors, start with questions that tie data speed directly to business impact. The goal is to understand where real-time actually matters before putting numbers on infrastructure or platforms.

Use this checklist as a starting point:

- Which decisions truly depend on real-time data? Identify actions that lose value if delayed by minutes or hours, not just ones that feel nice to have live.

- What is the cost of acting late? Estimate financial loss, risk exposure, user impact, or operational disruption caused by delayed insight.

- How much data really needs immediate processing? Separate critical event streams from data that can be processed in batches without affecting outcomes.

- What is the expected data volume and peak throughput? Model not just average load, but realistic spikes that the system must handle without falling over.

- How long does data need to stay readily accessible? Define retention in hot, warm, and cold storage based on actual usage, not default settings.

- How much engineering and operational effort will this require? Include build time, ongoing maintenance, on-call coverage, monitoring, and incident response.

Once these pieces are in place, add up infrastructure, operational, engineering, and, where they apply, governance costs to form a realistic baseline. If that total feels uncomfortable, that is valuable insight. It may point to a smaller initial scope, looser latency requirements, or an architecture that mixes real-time and batch processing more deliberately.
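
The baseline itself is simple arithmetic. The sketch below sums low and high monthly estimates per cost layer; every figure in it is a placeholder for a team's own estimate, not a benchmark.

```python
from typing import Dict, Tuple

Range = Tuple[int, int]  # (low, high) monthly estimate in dollars


def baseline(layers: Dict[str, Range]) -> Range:
    """Sum the low and high ends of each cost layer."""
    low = sum(lo for lo, _ in layers.values())
    high = sum(hi for _, hi in layers.values())
    return low, high


# Placeholder estimates for a hypothetical team.
estimate = {
    "infrastructure": (2_000, 5_000),
    "operations": (3_000, 6_000),
    "engineering": (8_000, 15_000),
    "governance": (1_000, 3_000),
}
low, high = baseline(estimate)
print(f"Baseline: ${low:,} to ${high:,} per month")
# prints "Baseline: $14,000 to $29,000 per month"
```

Keeping the estimate as a range rather than a single number makes it easier to revisit when volume, scope, or pricing changes.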

When Real-Time Processing Is Worth the Cost

Real-time data processing earns its keep when delay has a measurable price tag. If acting minutes or even seconds later leads to lost revenue, higher risk, or visible user impact, streaming quickly justifies its cost. Fraud detection is the obvious example, but it also applies to system monitoring, operational alerting, dynamic pricing, and personalized user experiences that depend on what is happening right now. In these cases, real-time systems reduce losses, prevent outages, or unlock revenue that batch processing simply cannot reach in time.

The equation changes when speed does not materially affect outcomes. Periodic reporting, compliance workflows, historical analysis, and low-urgency metrics rarely benefit from second-by-second updates. Streaming these workloads often adds complexity and ongoing cost without delivering additional value. For those scenarios, batch processing remains simpler, cheaper, and easier to maintain. The practical rule is straightforward: if acting on the data later does not change the decision, real-time processing is usually not worth paying for.
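
That rule can be written down as a very small check. This is a simplified sketch that assumes the cost of delay can be estimated as a monthly dollar figure; the function name and the example numbers are illustrative only.

```python
def realtime_pays_off(monthly_cost_of_delay: float,
                      monthly_streaming_cost: float,
                      monthly_batch_cost: float) -> bool:
    """True when the losses avoided by acting in real time exceed
    the extra monthly cost of streaming over batch."""
    extra_cost = monthly_streaming_cost - monthly_batch_cost
    return monthly_cost_of_delay > extra_cost


# Hypothetical fraud-style case: delay is expensive.
print(realtime_pays_off(50_000, 20_000, 4_000))  # prints "True"
# Hypothetical weekly report: delay costs nothing.
print(realtime_pays_off(0, 20_000, 4_000))       # prints "False"
```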

Conclusion: Making Cost a Design Constraint, Not a Surprise

The most successful teams treat cost as part of system design, not as a billing problem to solve later.

They choose latency intentionally. They monitor usage. They simplify pipelines. They revisit assumptions as systems grow.

Real-time data processing is not cheap, but a well-planned system rarely costs as much as a poorly planned one. The difference lies in understanding where the real numbers come from and aligning them with actual business value.

In the end, the question is not whether real-time data is expensive. It is whether the cost matches what you gain from acting faster.

Frequently Asked Questions

- Is real-time data processing always more expensive than batch processing?

Not always, but it usually costs more to run on a monthly basis. The key difference is where the value shows up. Batch processing is cheaper and simpler for low-urgency workloads. Real-time processing becomes cost-effective when acting late leads to revenue loss, higher risk, or operational disruption. In those cases, the business cost of delay often exceeds the technical cost of streaming.

- What is the biggest cost driver in real-time data systems?

For most teams, engineering and operational effort outweigh pure infrastructure costs over time. Cloud bills are visible and predictable, but ongoing maintenance, debugging, monitoring, and on-call support quietly shape the long-term spend, especially as the number of pipelines grows.

- Can managed streaming platforms significantly reduce costs?

Managed platforms usually reduce operational overhead and make costs more predictable, but they do not eliminate cost entirely. Poorly designed pipelines, excessive retention, or streaming low-value data can still drive expenses up. The biggest advantage of managed services is clarity and reduced operational risk, not zero cost.

- How do I know which data actually needs real-time processing?

A simple test is to ask whether acting on the data later would change the decision. If the answer is no, real-time processing is likely unnecessary. Data tied to fraud prevention, outages, customer interactions, or fast-moving inventory typically benefits from immediacy. Periodic reporting and historical analysis usually do not.

- Is micro-batching a cheaper alternative to real-time streaming?

Sometimes, but it often introduces its own costs. Micro-batching reduces infrastructure pressure compared to continuous streaming, but it adds complexity around scheduling, state management, and error handling. In practice, it can end up being harder to operate than batch and slower than true streaming.