Quick Summary: Digital transformation in crisis management refers to integrating advanced technologies like AI, cloud computing, and real-time data analytics to enhance organizational resilience and response capabilities during emergencies. This approach enables faster decision-making, improved coordination, and proactive risk mitigation across government agencies, businesses, and critical infrastructure sectors.

The COVID-19 pandemic exposed critical vulnerabilities in how organizations respond to crises. According to the Federal Reserve, about 200,000 more businesses closed than normal during the pandemic’s first year. But here’s the thing. The organizations that survived, and even thrived, weren’t just lucky. Many of them had something in common: digitally enabled crisis management systems.

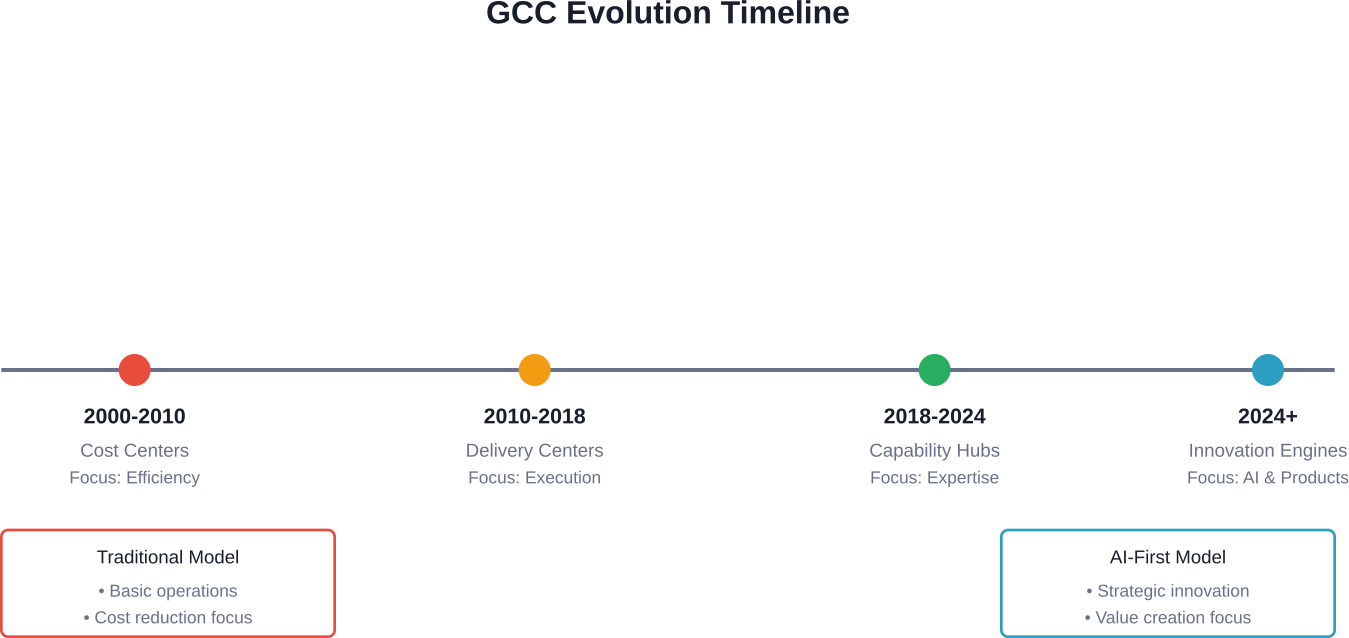

Digital transformation has fundamentally altered how organizations prepare for, respond to, and recover from crises. From earthquakes that strike without warning to cyberattacks targeting critical infrastructure, modern threats demand modern solutions.

The Cybersecurity and Infrastructure Security Agency (CISA) has doubled down on building resilience at all levels of critical infrastructure over recent years. Their focus? Launching customer-focused products and services that empower national resilience in what they call “the era of disruption.”

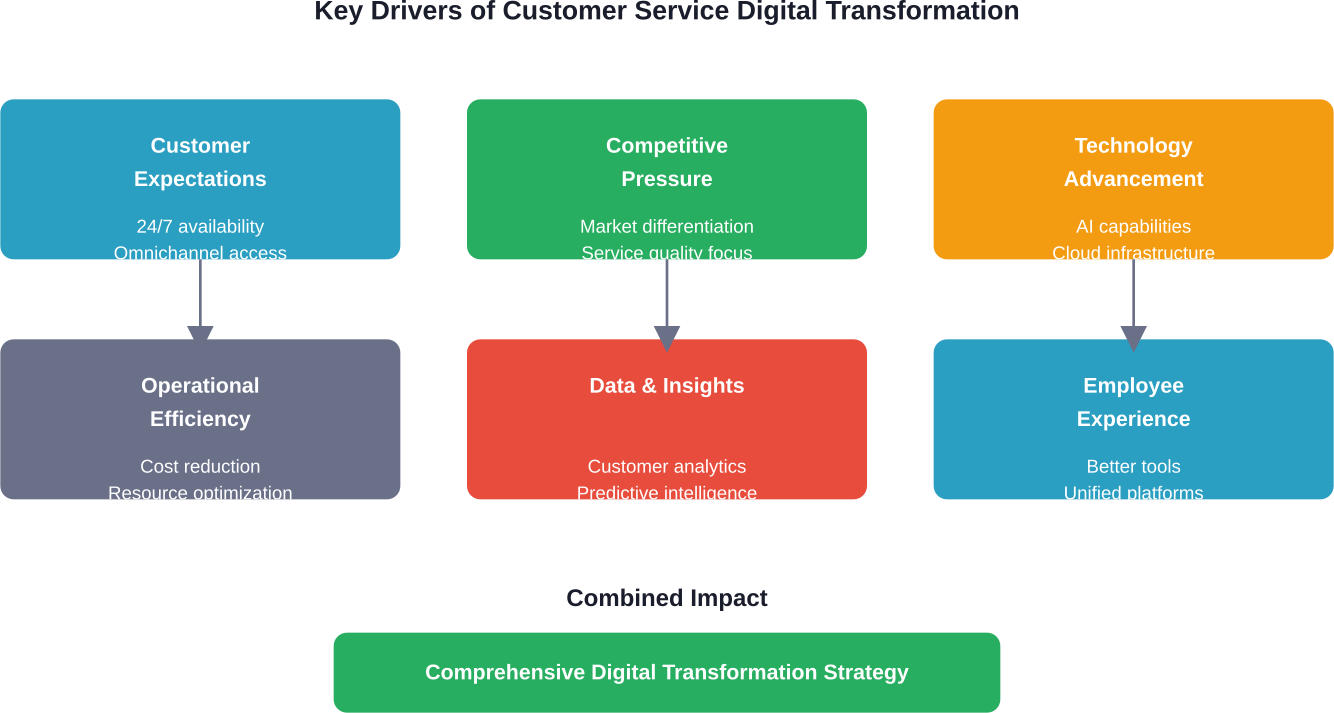

This isn’t just about having fancy technology. Real talk: digital transformation for crisis management is about fundamentally rethinking how organizations detect threats, mobilize resources, coordinate responses, and learn from each incident.

Understanding Digital Transformation in Crisis Management

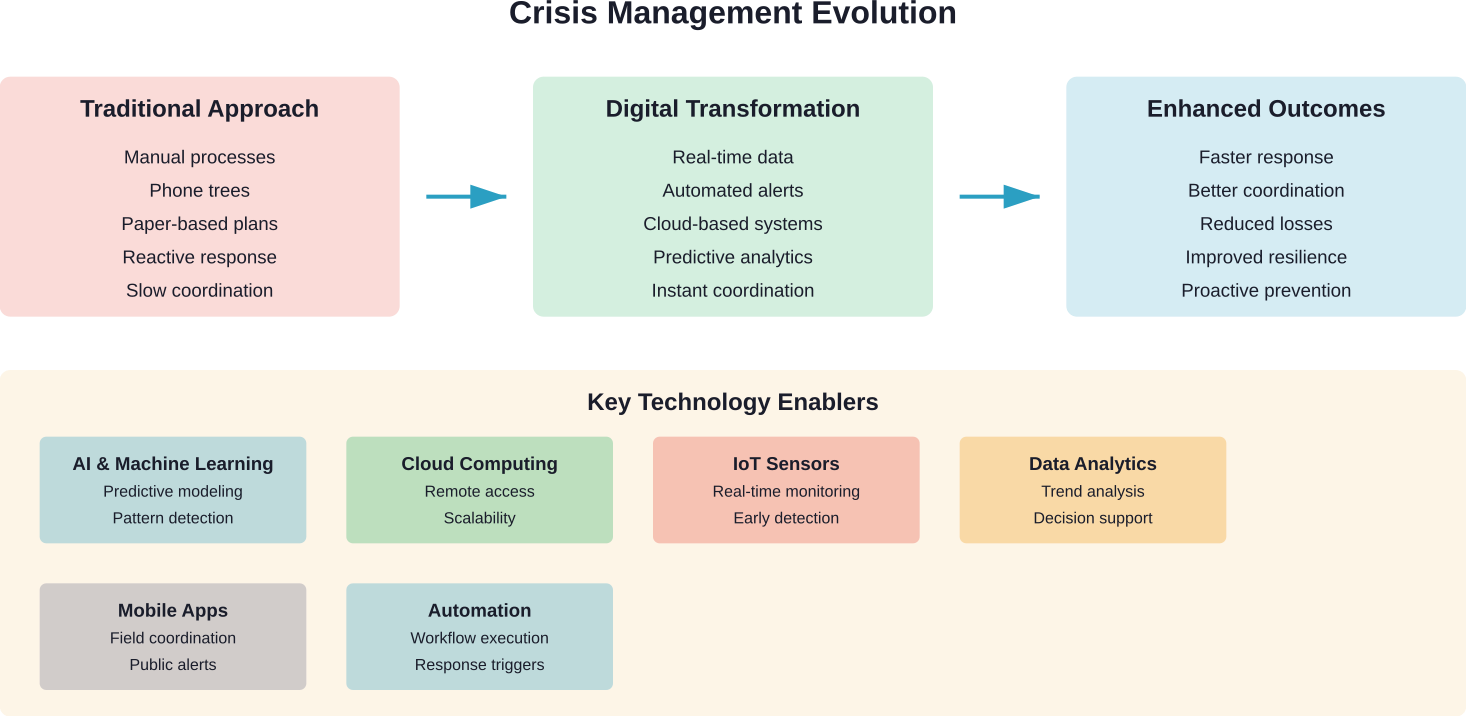

Digital transformation in crisis management represents a fundamental shift from reactive, manual processes to proactive, technology-enabled systems that can predict, prevent, and respond to emergencies with unprecedented speed and coordination.

Traditional crisis management relied heavily on phone trees, paper-based plans, and manual coordination. That approach simply doesn’t work anymore. Modern crises are too complex, too fast-moving, and too interconnected.

What Makes Digital Crisis Management Different

The core difference lies in three capabilities: real-time data integration, automated response protocols, and predictive analytics. These aren’t just buzzwords—they represent concrete operational advantages.

Real-time data integration means pulling information from multiple sources simultaneously. During Japan’s 2011 Tōhoku earthquake, the country’s earthquake early warning system, which fuses readings from seismometers nationwide, provided crucial seconds of warning that enabled millions to take protective action.

Reported metrics for the system include:

- Average warning time: 15-20 seconds

- False positive rate: Less than 2%

- Coverage: 100% of Japanese territory

Automated response protocols reduce the delays inherent in human decision-making chains. When Singapore deployed its TraceTogether contact tracing app during COVID-19, it achieved a 78% adoption rate and dramatically improved contact tracing efficiency.

Predictive analytics leverage historical data and machine learning to identify potential crises before they fully materialize. This shifts organizations from purely reactive postures to proactive risk management.
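To make the idea concrete, here is one of the simplest building blocks of predictive monitoring: flagging a reading that departs sharply from its recent baseline. This is a statistical rolling z-score, not a trained machine learning model, and the function name `flag_anomalies`, the 24-sample window, the threshold of 3 standard deviations, and the call-volume data are all illustrative assumptions.

```python
from statistics import mean, stdev

def flag_anomalies(readings, window=24, threshold=3.0):
    """Return indices whose value deviates from the preceding `window`
    readings by more than `threshold` standard deviations (rolling z-score)."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: any change at all is unusual.
            if readings[i] != mu:
                flagged.append(i)
            continue
        z = (readings[i] - mu) / sigma
        if abs(z) > threshold:
            flagged.append(i)
    return flagged

# Hypothetical hourly call volumes: a stable day, then a sudden surge.
calls = [100, 102, 98, 101, 99, 103, 97, 100] * 3 + [180]
print(flag_anomalies(calls))  # → [24]
```

A production system would model daily and seasonal patterns rather than use a fixed trailing window, but the principle of scoring new data against a learned baseline is the same.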

The Dual Nature of Technology in Crises

But wait. Technology isn’t always the hero of the story.

The same digital systems that can prevent crises can also accelerate them. Cyberattacks spread through interconnected networks in seconds. Misinformation—what the World Health Organization calls an “infodemic”—can undermine public health responses during disease outbreaks.

An infodemic refers to too much information, including false or misleading content, during a disease outbreak. It causes confusion and risk-taking behaviors that can harm health. With growing digitization, the challenge intensifies.

This paradox demands thoughtful implementation. Organizations can’t simply throw technology at crisis management and expect success. They need strategic integration aligned with clear objectives and robust governance.

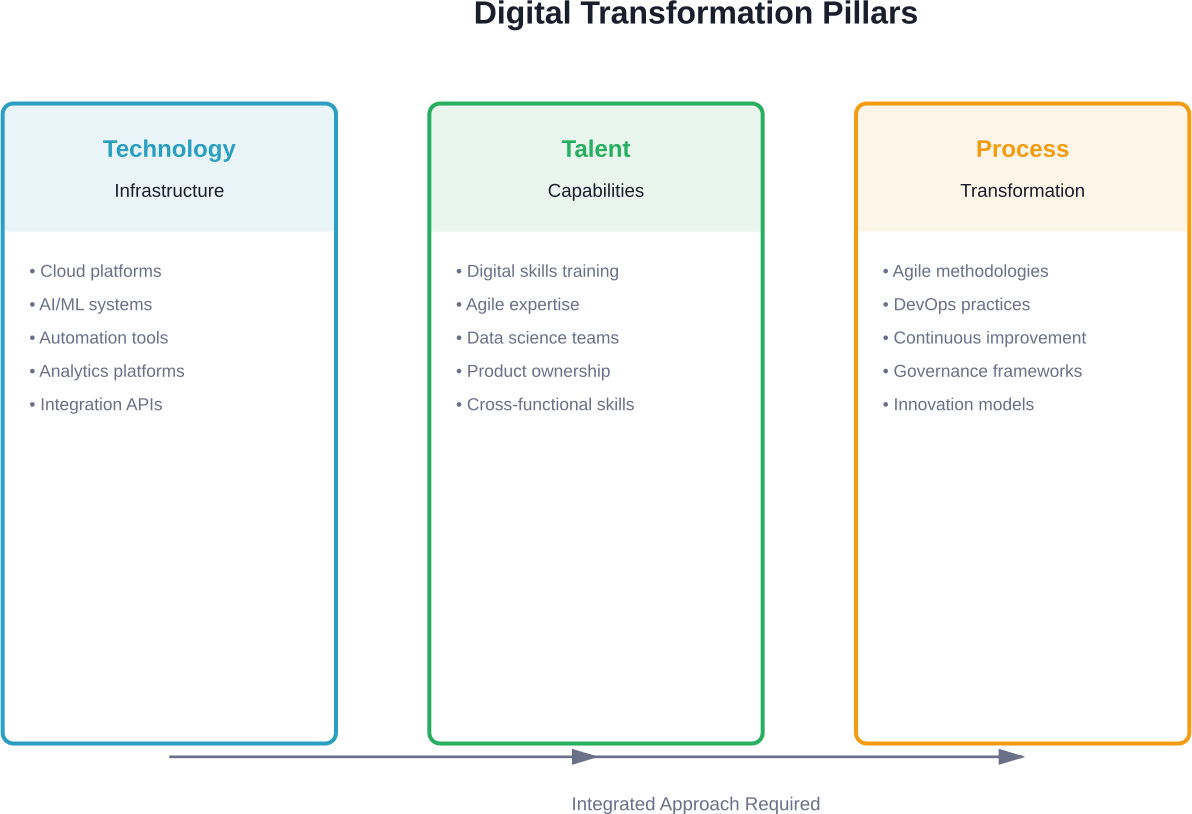

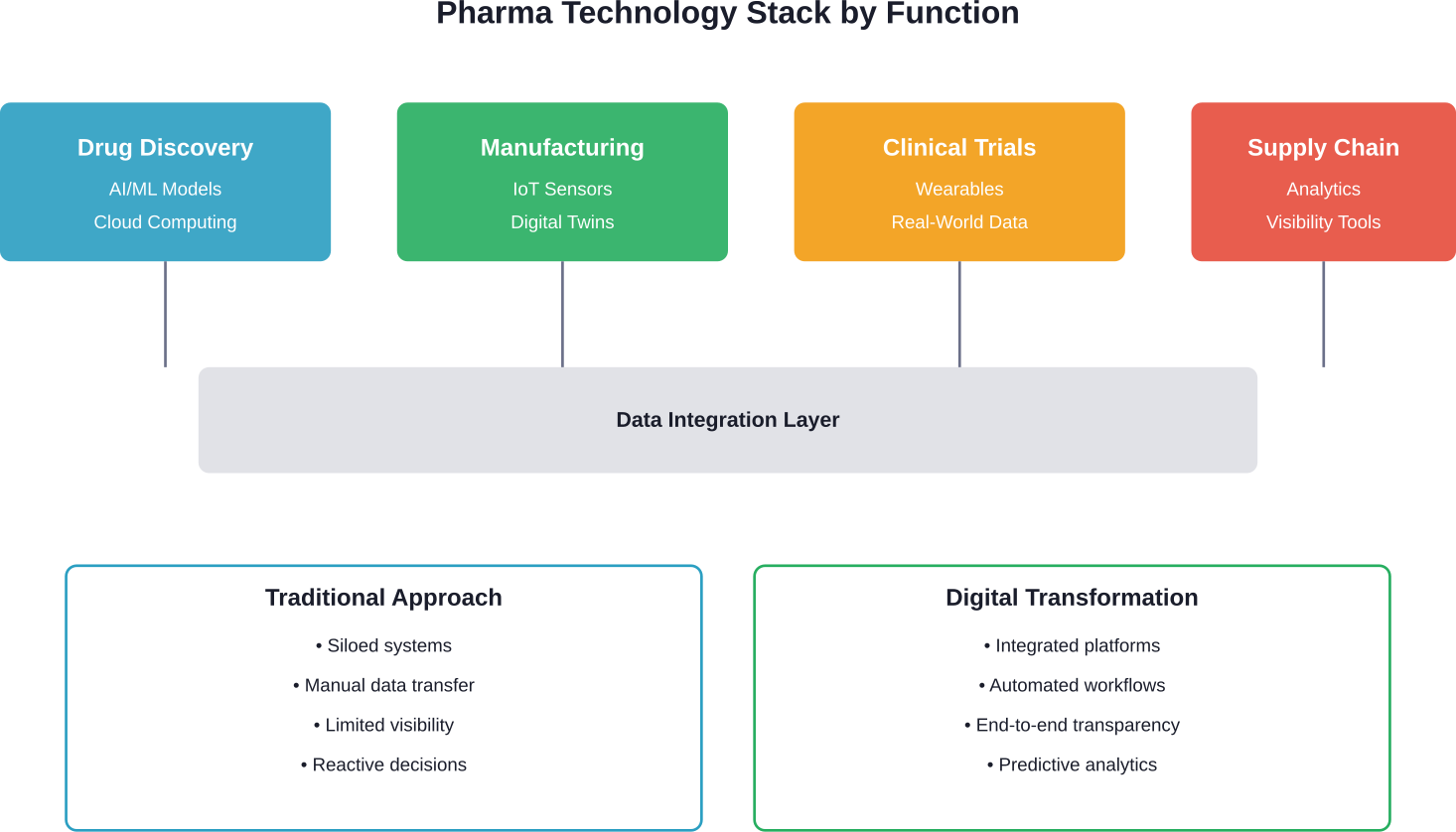

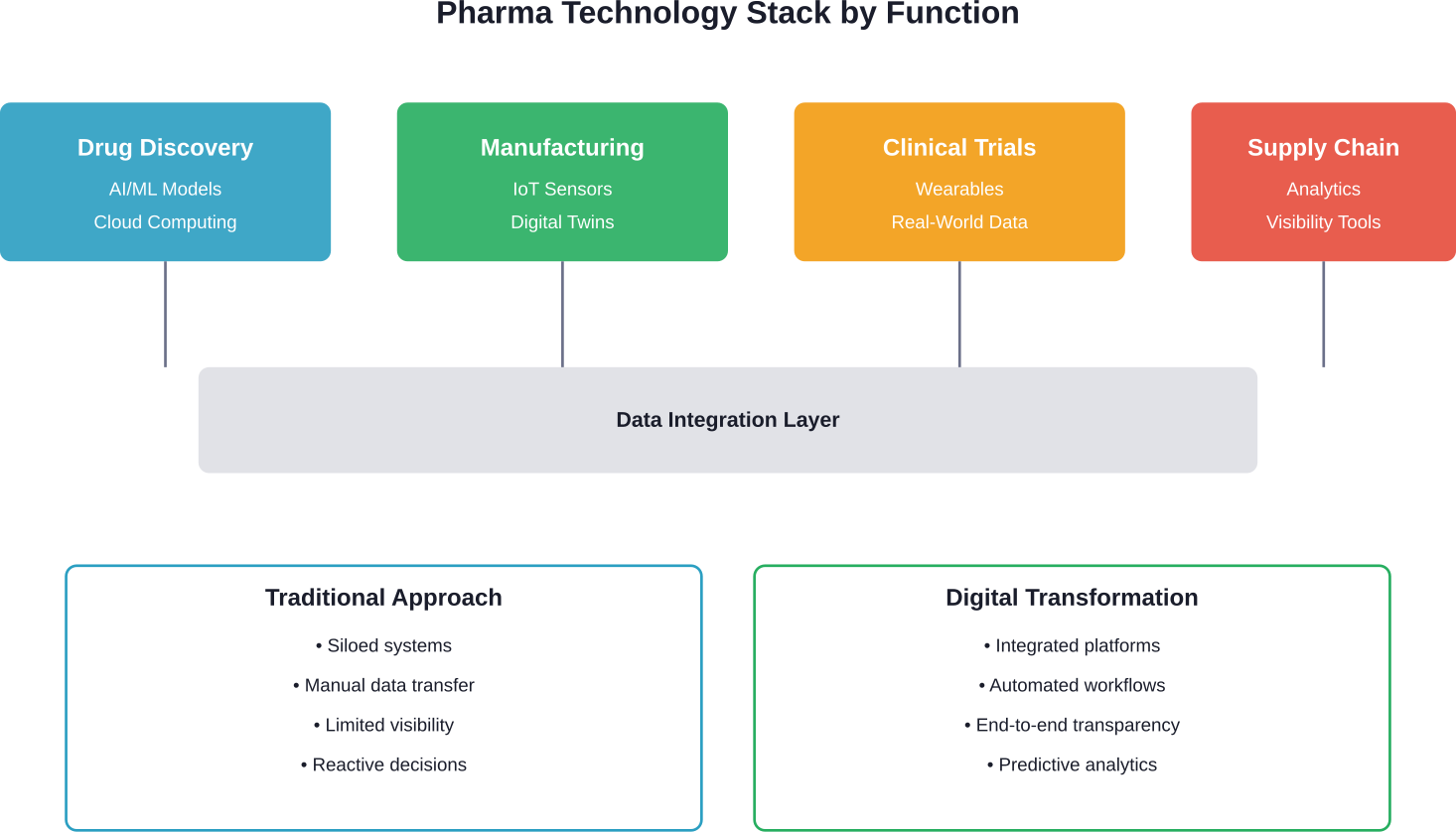

Core Technologies Driving Crisis Management Transformation

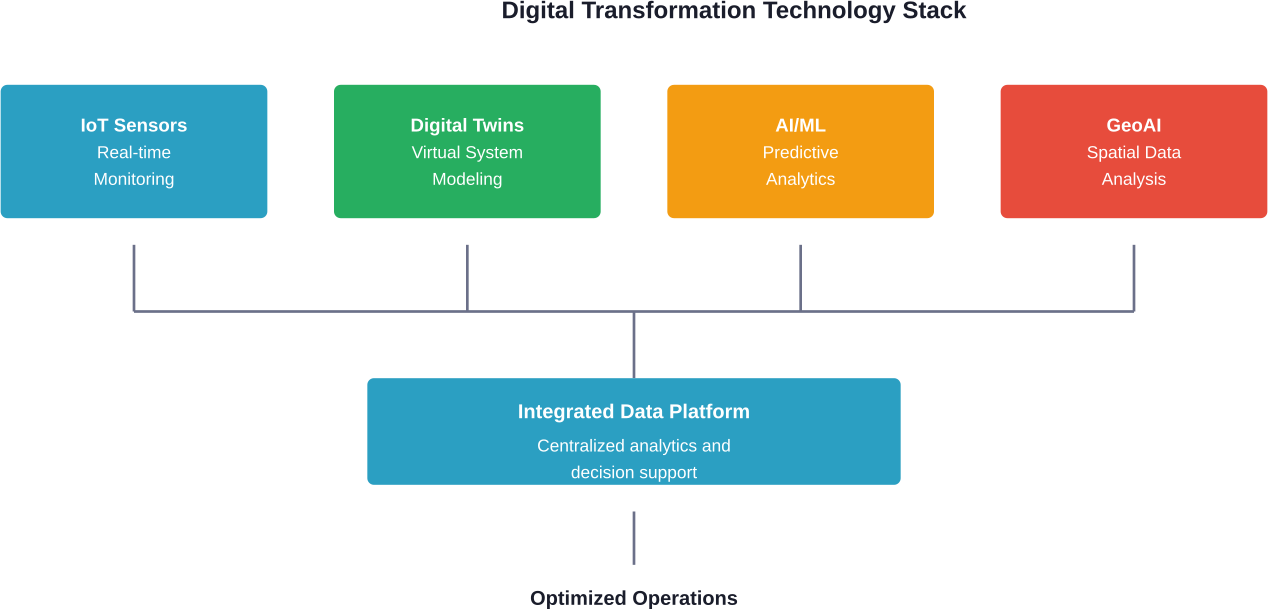

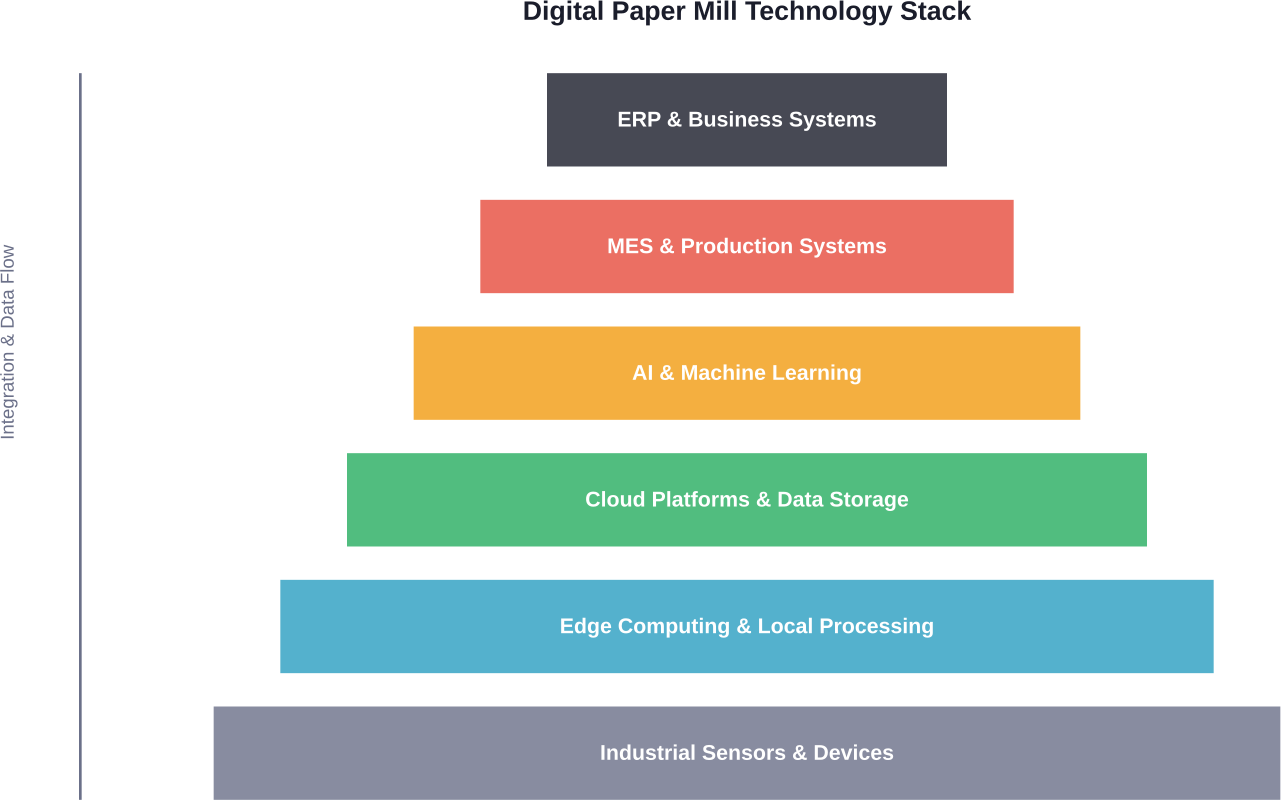

Several key technologies form the foundation of modern crisis management systems. Each brings specific capabilities that address traditional limitations.

Artificial Intelligence and Machine Learning

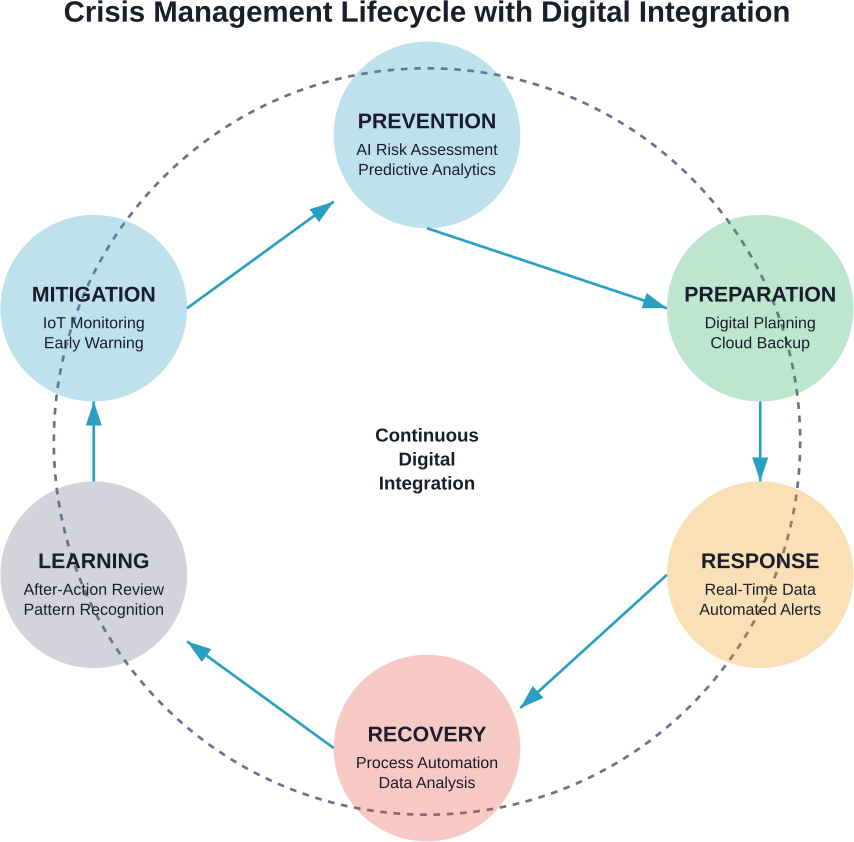

AI enhances crisis management across three critical phases: preparation, response, and recovery.

During preparation, AI systems analyze vast datasets to identify emerging risks. Machine learning algorithms detect patterns humans might miss—subtle supply chain vulnerabilities, infrastructure weaknesses, or brewing social tensions.

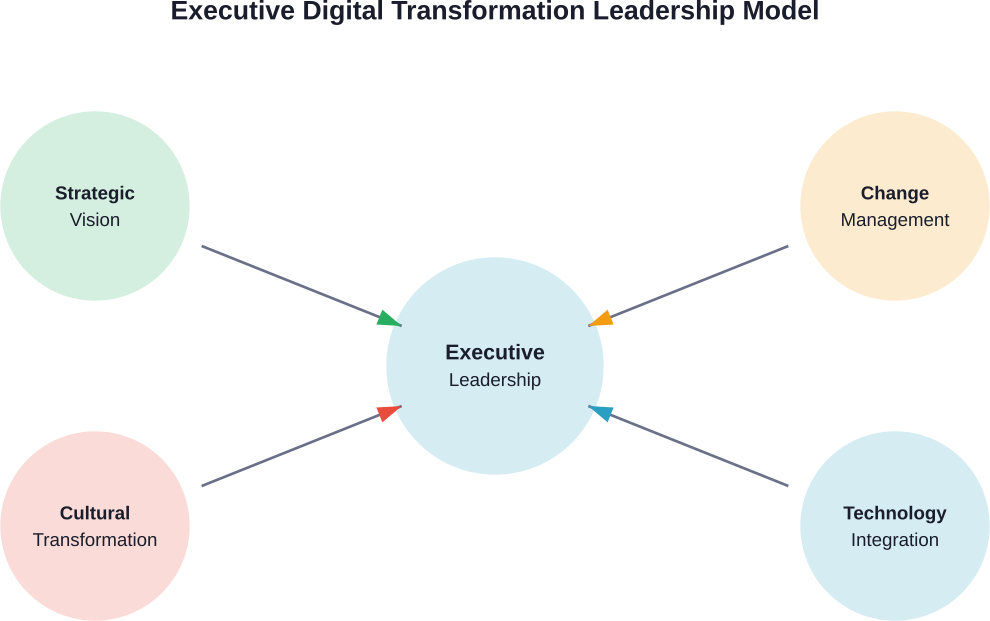

Research shows transformational leadership enhanced resilience by 82% in organizations facing cyber incidents. Similarly, ethical leadership improved organizational citizenship behaviors by 75% in crisis situations. These figures measure leadership rather than technology, but they point to where AI adds value: decision-support tools give those leaders better information to act on under pressure.

For response, AI accelerates decision-making under pressure. Systems can model responses to complex scenarios, helping leaders understand the impact of different decisions before committing resources. They can also monitor risks using real-time metrics and support regulatory compliance by predicting potential breaches.

Recovery benefits from AI’s ability to benchmark good practice across industries and identify process gaps. Organizations learn faster from each incident, building institutional knowledge that strengthens future responses.

Cloud Computing and Remote Accessibility

Cloud-based systems solved a fundamental problem exposed by COVID-19: crisis management teams can’t always gather in physical command centers.

Cloud document management provides easy access to critical files from anywhere. During the pandemic, this capability meant the difference between operational continuity and paralysis for many organizations.

Scalability represents another crucial advantage. Crisis demands fluctuate dramatically. Cloud infrastructure scales up during emergencies without requiring permanent investment in excess capacity.
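Elastic scaling usually follows a simple proportional rule. Kubernetes documents one for its Horizontal Pod Autoscaler: desired replicas equal the ceiling of current replicas times the ratio of the observed metric to its target. The sketch below reimplements that rule in plain Python for illustration only; it is not Kubernetes code, and the replica bounds, the 10% tolerance band, and the example numbers are assumptions.

```python
import math

def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=2, max_replicas=50, tolerance=0.1):
    """Proportional scaling rule modeled on the formula documented for the
    Kubernetes Horizontal Pod Autoscaler:
        desired = ceil(current_replicas * current_metric / target_metric)
    """
    ratio = current_metric / target_metric
    # Ignore small fluctuations so the system doesn't thrash.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to configured bounds so a spike can't exhaust the budget.
    return max(min_replicas, min(max_replicas, desired))

# Alert-processing service at 4 replicas, running at 90% CPU against a 50% target.
print(desired_replicas(4, 90, 50))  # → 8
```

The upper bound matters as much as the formula: during a crisis, demand can exceed any reasonable budget, and a hard cap turns runaway cost into a visible capacity decision.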

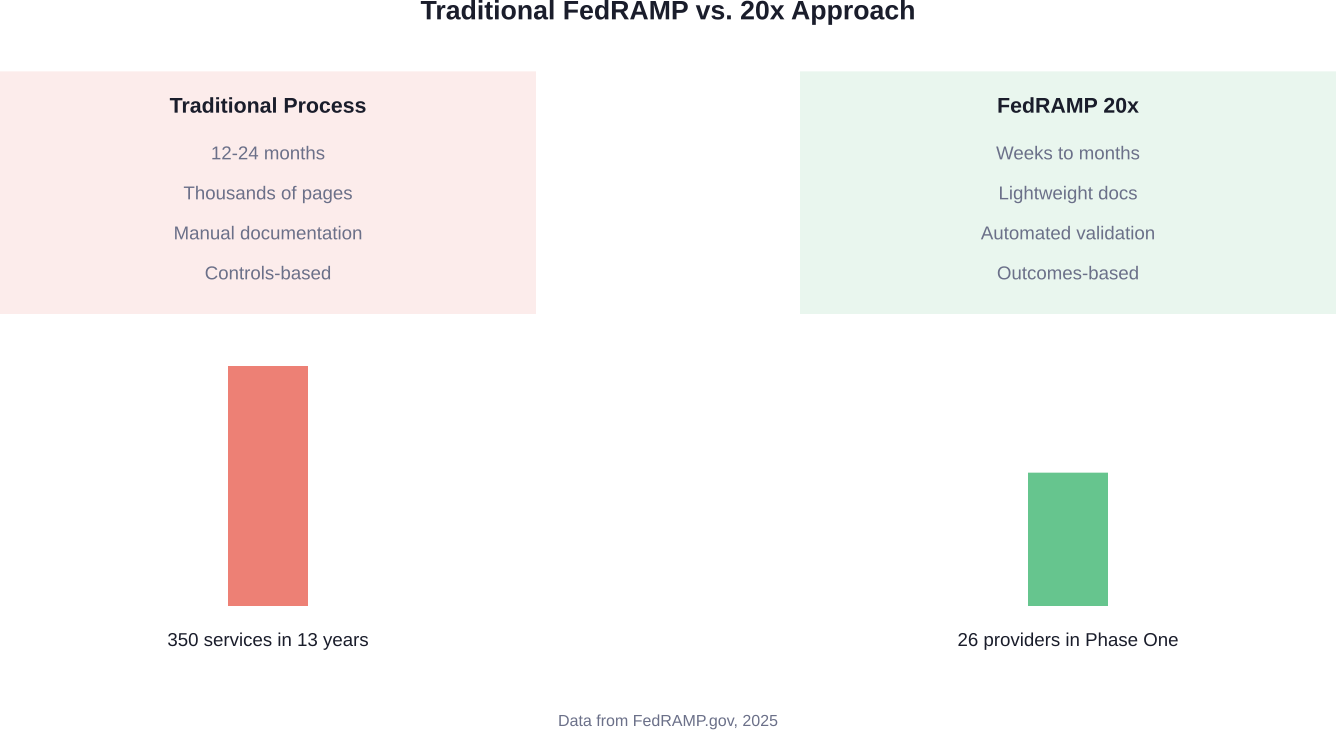

But cloud adoption introduces new vulnerabilities. CISA released guidance in January 2026 calling on critical infrastructure organizations to take decisive action against insider threats. The guidance emphasizes building strong, multi-disciplinary threat management teams—recognizing that cloud systems require sophisticated security approaches.

Real-Time Data Integration and Analytics

Speed matters in crises. Real-time data integration pulls information from diverse sources—social media, sensor networks, emergency services, weather systems—into unified dashboards.
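Unifying feeds mostly comes down to normalizing each source onto a shared schema and severity scale, then merging. In this minimal sketch, the payload field names, the 1-to-5 severity scale, and the rule of keeping the most severe report per hazard and location are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    source: str
    kind: str
    location: str
    severity: int   # shared scale: 1 (low) to 5 (critical)
    timestamp: int  # seconds since epoch

def from_sensor(raw):
    # Hypothetical sensor payload: {"type", "site", "level", "ts"}
    return Event("sensor", raw["type"], raw["site"], raw["level"], raw["ts"])

def from_weather(raw):
    # Hypothetical weather payload that uses text alert categories.
    scale = {"advisory": 2, "watch": 3, "warning": 4, "emergency": 5}
    return Event("weather", raw["hazard"], raw["area"],
                 scale[raw["category"]], raw["issued"])

def unified_view(*feeds):
    """Merge normalized feeds, keep the most severe report per
    (kind, location), and list the worst situations first."""
    worst = {}
    for feed in feeds:
        for event in feed:
            key = (event.kind, event.location)
            current = worst.get(key)
            if current is None or event.severity > current.severity:
                worst[key] = event
    return sorted(worst.values(), key=lambda e: (-e.severity, e.timestamp))

sensors = [from_sensor({"type": "flood", "site": "District 4", "level": 3, "ts": 1000})]
weather = [
    from_weather({"hazard": "flood", "area": "District 4", "category": "warning", "issued": 990}),
    from_weather({"hazard": "wind", "area": "District 1", "category": "advisory", "issued": 995}),
]
for e in unified_view(sensors, weather):
    print(e.source, e.kind, e.location, e.severity)
```

Here the sensor and weather reports of flooding in District 4 collapse into one dashboard entry at the higher severity, which appears above the lower-severity wind advisory.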

The Emergency Services Sector, as defined by CISA, comprises highly skilled personnel in both paid and volunteer capacities, along with related physical and cyber resources. These resources increasingly depend on real-time data to coordinate prevention, protection, mitigation, response, and recovery activities.

Analytics transform raw data into actionable intelligence. During disasters, responders need to know where resources are most needed, which routes remain passable, and how situations are evolving minute by minute.
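Knowing which routes remain passable is a shortest-path problem on a road graph with closed segments removed. Below is a minimal sketch using Dijkstra’s algorithm; the road network, the travel times in minutes, and the `blocked` set of directed segments are hypothetical.

```python
import heapq

def shortest_passable_route(roads, start, goal, blocked=frozenset()):
    """Dijkstra's algorithm over a road graph, skipping segments reported as
    impassable. `roads` maps node -> list of (neighbor, minutes); `blocked`
    holds directed (from, to) segments. Returns (minutes, path) or (None, None)."""
    dist = {start: 0}
    previous = {}
    queue = [(0, start)]
    while queue:
        d, node = heapq.heappop(queue)
        if node == goal:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale queue entry; a shorter path was already found
        for neighbor, minutes in roads.get(node, []):
            if (node, neighbor) in blocked:
                continue
            nd = d + minutes
            if nd < dist.get(neighbor, float("inf")):
                dist[neighbor] = nd
                previous[neighbor] = node
                heapq.heappush(queue, (nd, neighbor))
    if goal not in dist:
        return None, None
    path = [goal]
    while path[-1] != start:
        path.append(previous[path[-1]])
    return dist[goal], path[::-1]

roads = {
    "Depot": [("Bridge", 5), ("Ridge Rd", 9)],
    "Bridge": [("Hospital", 4)],
    "Ridge Rd": [("Hospital", 6)],
}
print(shortest_passable_route(roads, "Depot", "Hospital"))
# → (9, ['Depot', 'Bridge', 'Hospital'])
print(shortest_passable_route(roads, "Depot", "Hospital", blocked={("Depot", "Bridge")}))
# → (15, ['Depot', 'Ridge Rd', 'Hospital'])
```

As field reports close or reopen segments, rerunning the search against the updated `blocked` set keeps recommended routes current.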

Internet of Things and Sensor Networks

IoT devices create unprecedented situational awareness. Environmental sensors detect chemical leaks, structural monitors identify building damage, and wearable devices track responder locations and vital signs.

Japan’s earthquake early warning system exemplifies IoT potential. Thousands of seismometers across the country feed data into centralized systems that can trigger alerts within seconds of detecting seismic activity.

The challenge lies in managing the sheer volume of data these devices generate. Organizations need robust infrastructure and intelligent filtering to extract signal from noise.
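One classic way seismology separates an earthquake’s onset from background noise is the STA/LTA trigger: compare a short-term average of signal amplitude with a long-term average and fire when the ratio jumps. The sketch below illustrates that technique only; it is not a description of how Japan’s system is implemented, and the window lengths, threshold, and synthetic signal are assumed values.

```python
def sta_lta_trigger(samples, sta_len=5, lta_len=50, threshold=4.0):
    """Return the first sample index where the short-term average amplitude
    exceeds `threshold` times the long-term average, or None if it never does.
    Both averages use trailing windows that end at the current sample."""
    amplitude = [abs(s) for s in samples]
    for i in range(lta_len - 1, len(amplitude)):
        sta = sum(amplitude[i - sta_len + 1:i + 1]) / sta_len
        lta = sum(amplitude[i - lta_len + 1:i + 1]) / lta_len
        if lta > 0 and sta / lta > threshold:
            return i
    return None

# Synthetic trace: steady low-level noise, then strong shaking starting at sample 100.
quiet = [1, -1] * 50
quake = quiet + [50, -50] * 10
print(sta_lta_trigger(quiet))  # → None
print(sta_lta_trigger(quake))  # → 100
```

Because the trigger reacts to a relative change rather than a fixed amplitude, each station adapts to its own noise level, which is one way to filter signal from noise close to the source.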

Work With a Software Development and Consulting Partner

If your crisis management strategy depends on better systems, stronger infrastructure, or extra technical support, consider working with A-listware. A-listware provides software development, IT consulting, cybersecurity, infrastructure services, data analytics, and dedicated development teams. The company also helps businesses modernize legacy software, extend internal teams, and keep digital projects moving without hiring delays.

Need Technical Support for Crisis-Ready Systems?

Talk with A-listware to:

- modernize outdated software and internal systems

- add developers, DevOps, data, or security specialists

- build and support digital tools for more stable operations

Start by requesting a consultation with A-listware.

Strategic Implementation Approaches

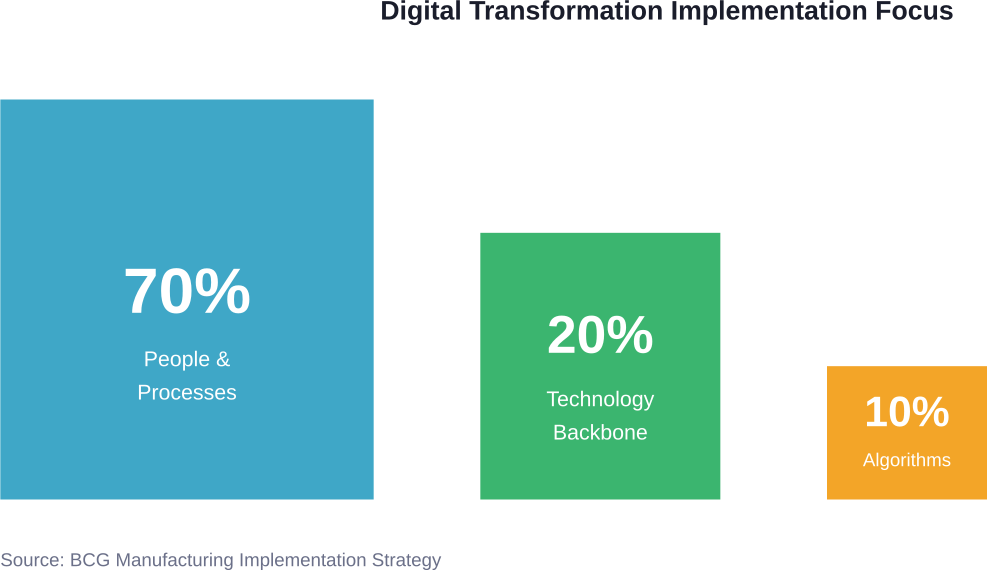

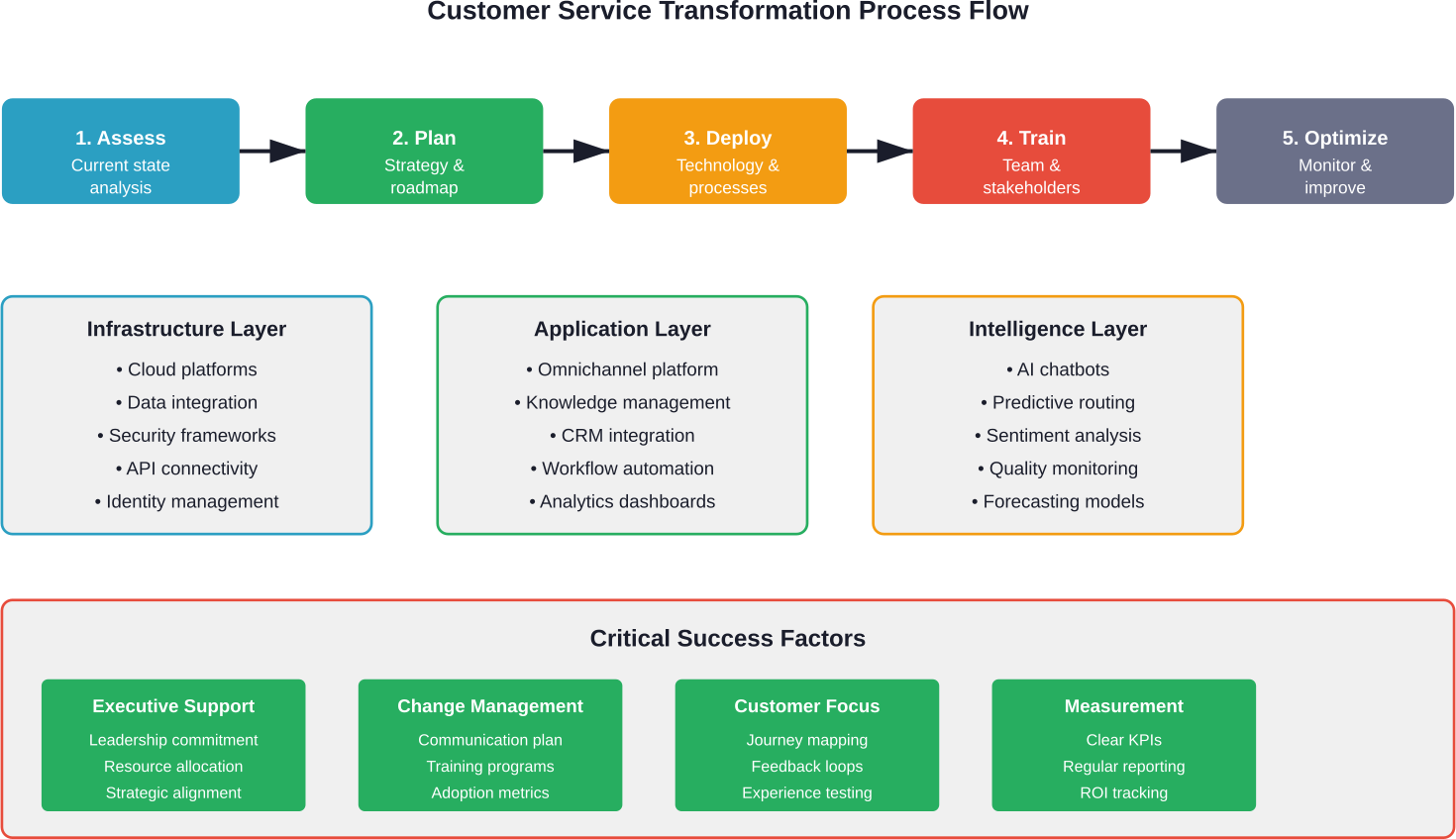

Technology alone doesn’t create effective crisis management. Organizations need strategic implementation frameworks that align digital tools with operational realities.

Assessing Organizational Readiness

Before investing in digital transformation, organizations must honestly assess their current state. This includes evaluating existing infrastructure, staff capabilities, budget constraints, and cultural readiness for change.

The World Health Organization emphasizes supporting countries in documenting digital health maturity across key building blocks: leadership and governance, strategy and investment, legislation and policy, workforce capabilities, standards and interoperability, and infrastructure.

These same building blocks apply beyond healthcare to any organization undertaking digital transformation for crisis management.

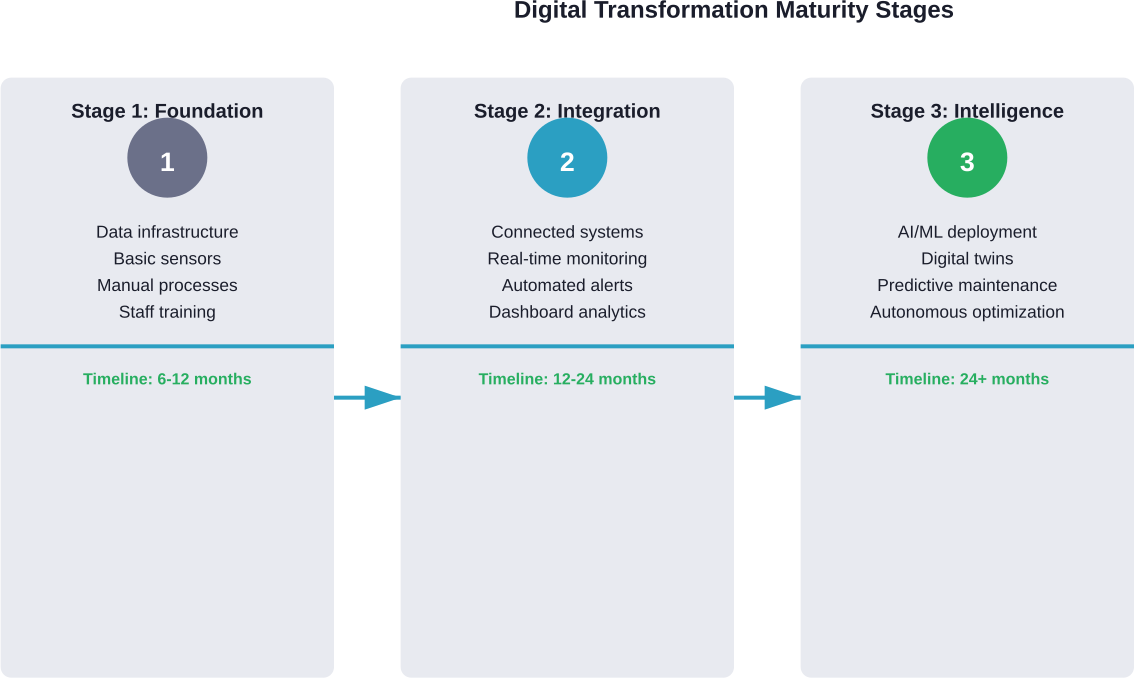

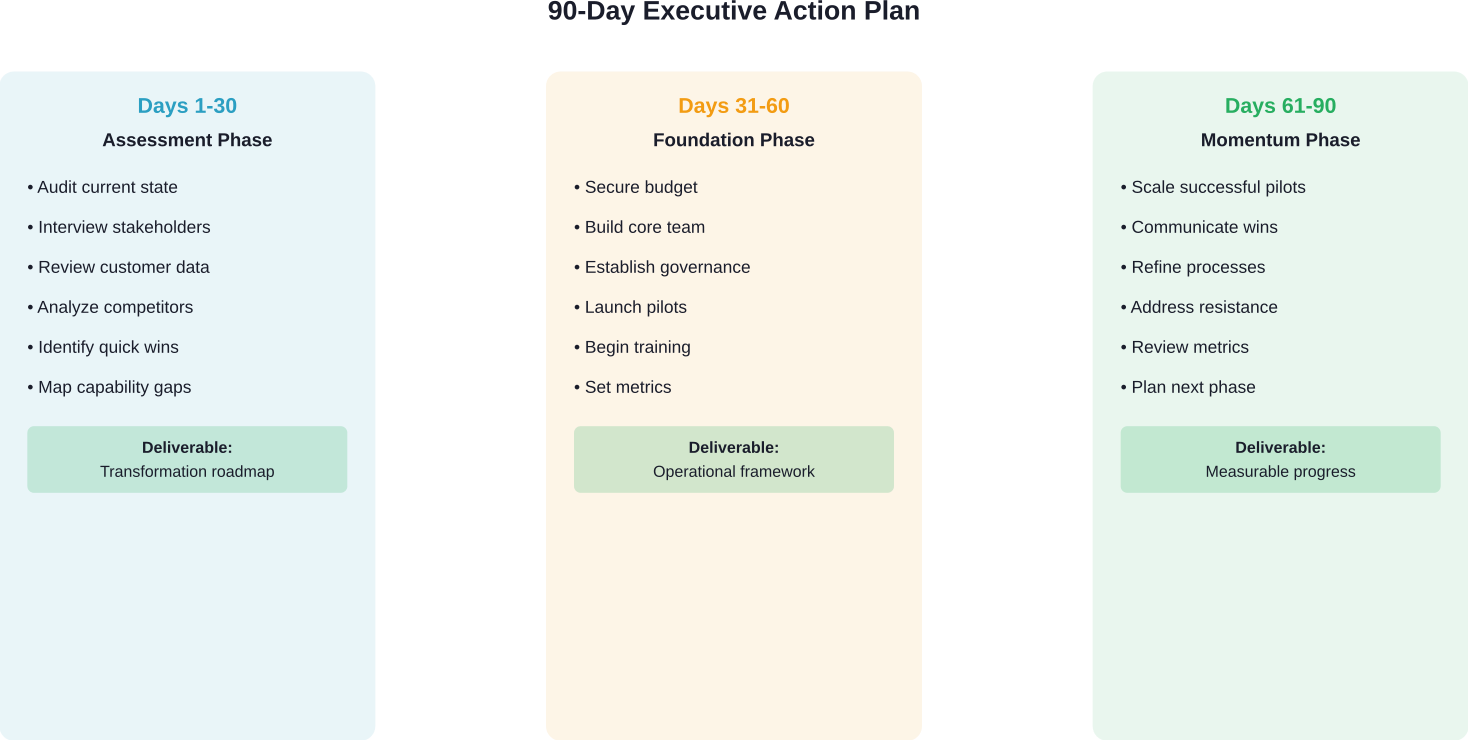

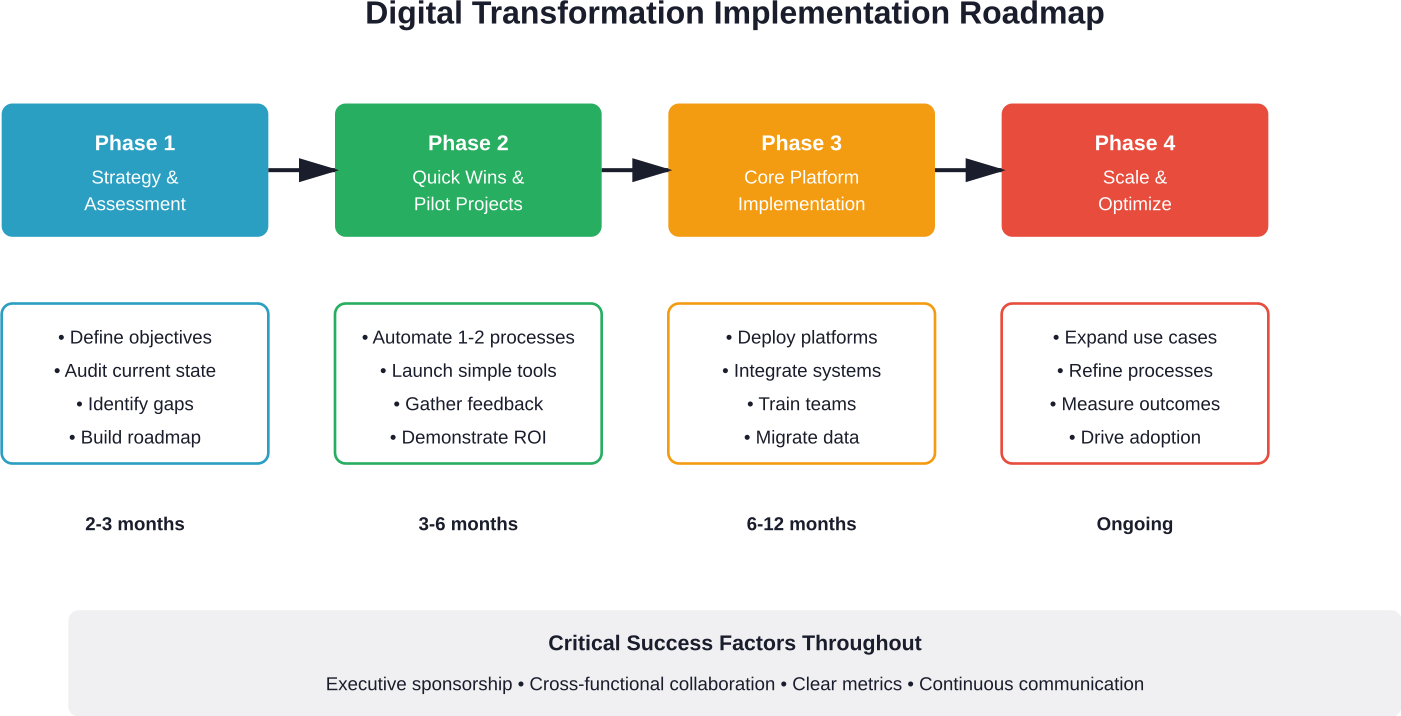

Developing a Clear Roadmap

Successful transformations start with clear roadmaps that define objectives, milestones, and success metrics. The roadmap should identify quick wins that build momentum while planning for long-term systematic change.

Phased implementation reduces risk. Organizations might start with document digitization and cloud migration before advancing to AI-powered predictive analytics. Each phase builds on previous successes and generates lessons that inform subsequent efforts.

Investing in Employee Training

Technology is only as effective as the people using it. Comprehensive training programs ensure staff can actually leverage new tools during high-stress crisis situations.

Training shouldn’t focus solely on technical skills. Crisis management requires judgment, coordination, and leadership. Digital tools should enhance human decision-making, not replace it.

Research shows ethical leadership improved organizational citizenship behaviors by 75% in crisis situations. Technical competence combined with strong ethical frameworks creates resilient crisis response capabilities.

Choosing Scalable and Flexible Technologies

Technology decisions should prioritize interoperability, scalability, and vendor independence. Proprietary systems that lock organizations into single vendors create long-term vulnerabilities.

Open standards and specifications enable different systems to communicate. The WHO supports international collaboration in developing data standards and interoperability specifications—recognizing that crises don’t respect organizational or national boundaries.

| Technology Selection Criteria | Why It Matters | Red Flags to Avoid |
|---|---|---|
| Interoperability | Enables communication with other systems | Proprietary formats, closed APIs |
| Scalability | Handles variable demand during crises | Fixed capacity limits, expensive expansion |
| Reliability | Functions when needed most | Poor uptime records, single points of failure |
| Security | Protects sensitive crisis data | Weak encryption, poor access controls |
| Usability | Works under stress with minimal training | Complex interfaces, steep learning curves |
| Vendor Support | Ensures assistance during implementation | Limited support hours, slow response times |

Digital Solutions for Specific Crisis Management Functions

Different crisis management functions benefit from specific digital solutions. Understanding these applications helps organizations prioritize investments.

Document Scanning and Digital Conversion

Paper-based crisis plans are liabilities. They can’t be accessed remotely, updated efficiently, or searched quickly. Document scanning converts legacy materials into accessible digital formats.

This seems basic, but it’s foundational. During COVID-19, organizations with digitized documentation maintained operational continuity while those dependent on physical files struggled.

Digital Mailroom for Remote Operations

Traditional mail processing creates single points of failure. Digital mailroom solutions scan, route, and manage incoming communications electronically, enabling distributed teams to maintain awareness regardless of location.

For organizations managing crises that require remote operations—pandemics, building damage, regional disasters—digital mailrooms ensure communication channels remain open.

Business Process Automation

Automation drives operational efficiencies by handling routine tasks without human intervention. During crises, this frees personnel to focus on high-value activities requiring judgment and creativity.

Automated systems can trigger alerts, execute predefined response protocols, generate status reports, and coordinate resource allocation. They work tirelessly, consistently, and without the fatigue that degrades human performance during extended emergencies.
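A predefined response protocol can be as simple as a lookup table from alert type and severity to actions. In this sketch the playbook entries and action names are hypothetical; the one deliberate design choice is that anything the playbook doesn’t cover goes to a person instead of being guessed at.

```python
from dataclasses import dataclass

@dataclass
class Alert:
    kind: str
    severity: int  # 1 (low) to 5 (critical)

# Hypothetical playbook: for each alert kind, tiers of (minimum severity, actions)
# in ascending order. The highest tier the alert reaches wins.
PLAYBOOK = {
    "flood": [
        (1, ["log_incident"]),
        (3, ["log_incident", "notify_duty_officer"]),
        (4, ["log_incident", "notify_duty_officer", "send_public_alert"]),
    ],
    "ransomware": [
        (1, ["log_incident", "notify_security_team"]),
        (4, ["log_incident", "notify_security_team", "isolate_affected_hosts"]),
    ],
}

def actions_for(alert):
    """Return the predefined actions for an alert, or escalate to a human."""
    tiers = PLAYBOOK.get(alert.kind)
    if tiers is None:
        return ["escalate_to_human"]  # no protocol exists for this situation
    selected = []
    for min_severity, actions in tiers:
        if alert.severity >= min_severity:
            selected = actions
    return selected or ["escalate_to_human"]

print(actions_for(Alert("flood", 4)))
# → ['log_incident', 'notify_duty_officer', 'send_public_alert']
print(actions_for(Alert("wildfire", 5)))
# → ['escalate_to_human']
```

Keeping the playbook as data rather than code means response teams can review and update it after each exercise without touching the dispatch logic.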

Accounts payable automation, for instance, ensures invoices continue processing even when finance teams are displaced or working remotely. This maintains vendor relationships and cash flow during disruptions.

Real-Time Collaboration Platforms

Crisis response demands coordination across multiple teams, departments, and often organizations. Real-time collaboration platforms provide shared workspaces where responders can communicate, share information, and coordinate activities.

These platforms integrate chat, video conferencing, document sharing, and task management. During the G20’s work on digital health for pandemic management, international collaboration platforms enabled 17 countries and multiple international organizations to coordinate responses across borders.

Building Organizational Resilience Through Digital Transformation

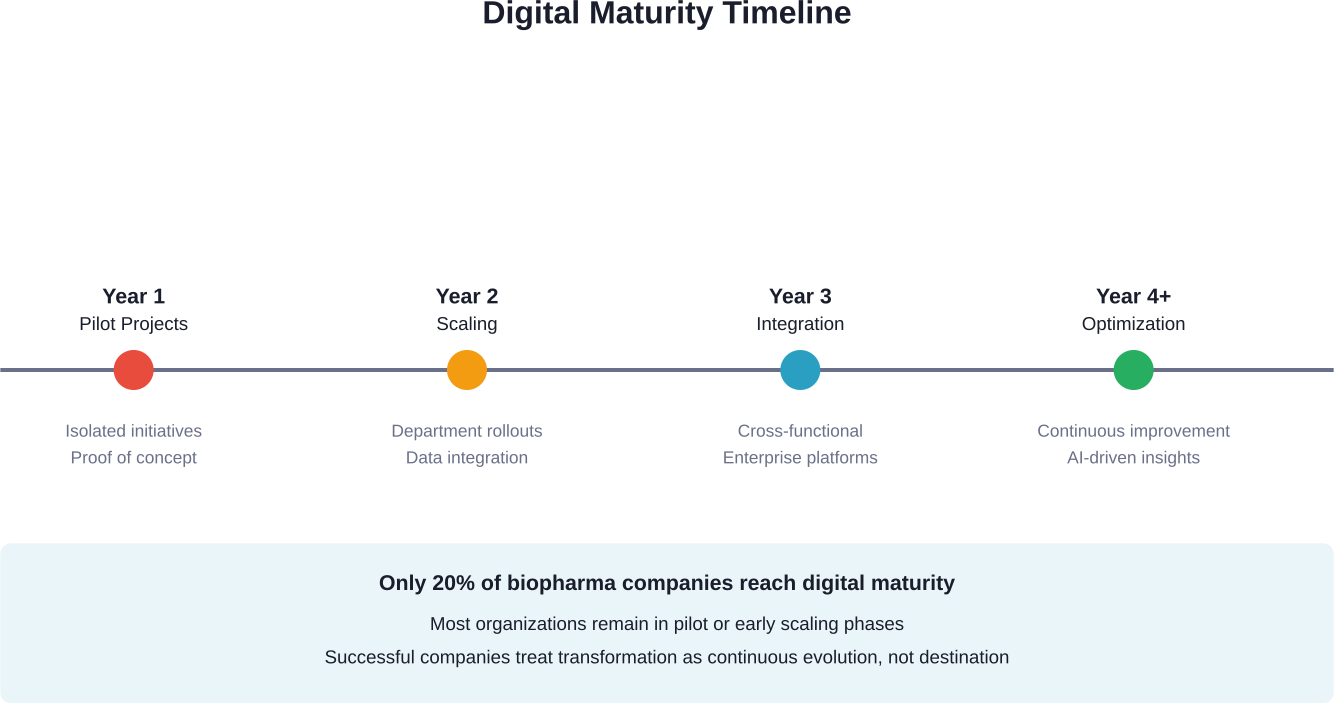

CISA’s 2025 focus on “Resolve to be Resilient” reflects a fundamental shift in crisis management thinking. The goal isn’t just surviving individual crises—it’s building systematic resilience that strengthens with each challenge.

From Reactive to Proactive Postures

Digital transformation enables organizations to move from reactive crisis response to proactive risk management. Predictive analytics identify emerging threats. Continuous monitoring detects anomalies before they escalate. Scenario modeling tests response plans against potential futures.

This proactive approach reduces both the frequency and severity of crises. Problems get addressed while they’re still manageable rather than after they’ve exploded into full emergencies.

Continuous Learning and Improvement

Digital systems capture detailed data about how crises unfold and how organizations respond. This creates opportunities for systematic learning that paper-based approaches can’t match.

After-action reviews become more thorough when supported by comprehensive data. Organizations can identify what worked, what didn’t, and why. These insights feed back into improved plans, better training, and more effective tools.

Cross-Sector Collaboration

Modern crises often span multiple sectors. Cyberattacks on healthcare providers affect patient care. Supply chain disruptions impact manufacturing, retail, and consumers. Climate events damage infrastructure, disrupt services, and displace populations.

Digital platforms enable cross-sector information sharing and coordination. The National Institute of Standards and Technology (NIST) provides frameworks for disaster recovery planning that emphasize interoperability and standardization—recognizing that effective crisis response requires coordinated action across organizational boundaries.

Critical Infrastructure and National Resilience

Critical infrastructure sectors face unique crisis management challenges. These systems—energy, water, transportation, communications, healthcare—form the backbone of modern society. Their failure cascades across entire regions or nations.

CISA’s Role in Infrastructure Resilience

CISA has focused intensively on forging national resilience for what it calls an era of disruption. In CISA’s framing, resilience has defined the United States since its founding, from weathering the Great Depression and mobilizing for World War II to enhancing homeland security after 9/11 and responding to COVID-19.

Building on this tradition, CISA has launched customer-focused products and services that empower critical infrastructure resilience. These initiatives recognize that modern threats—cyberattacks, climate events, pandemics, supply chain disruptions—demand coordinated, technology-enabled responses.

Addressing Insider Threats

Digital transformation creates new vulnerabilities even as it enhances capabilities. In January 2026, CISA released guidance urging critical infrastructure organizations to take decisive action against insider threats.

Insider threats represent particularly challenging risks. Trusted personnel with legitimate access can cause devastating damage—whether through malice, negligence, or compromise. Digital systems, with their extensive access controls and audit capabilities, provide tools for detecting and preventing insider threats.

The guidance emphasizes building strong, multi-disciplinary threat management teams. Technology alone can’t solve this problem. Organizations need integrated approaches combining technical controls, personnel security, and organizational culture.
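As a small illustration of how audit data can support such a team, the sketch below flags access events for human review rather than blocking anyone automatically. The user and resource names, the working hours, and the two rules (first-time access to a sensitive resource, or sensitive access outside working hours) are assumptions; real insider threat programs combine many more signals.

```python
from collections import defaultdict

def build_baseline(history):
    """Map each user to the set of resources they normally access."""
    baseline = defaultdict(set)
    for user, resource, _hour in history:
        baseline[user].add(resource)
    return baseline

def flag_access(baseline, events, sensitive, work_hours=range(7, 19)):
    """Return events worth a human review: first-time access to a sensitive
    resource, or any sensitive access outside working hours."""
    flagged = []
    for user, resource, hour in events:
        if resource not in sensitive:
            continue
        unfamiliar = resource not in baseline.get(user, set())
        off_hours = hour not in work_hours
        if unfamiliar or off_hours:
            flagged.append((user, resource, hour))
    return flagged

# Hypothetical audit records: (user, resource, hour of day)
history = [("ana", "scada_console", 9), ("ana", "scada_console", 14), ("ben", "payroll", 10)]
events = [
    ("ana", "scada_console", 10),  # routine
    ("ben", "scada_console", 11),  # first time ben touches the control system
    ("ana", "scada_console", 2),   # familiar resource, but at 2 a.m.
    ("ben", "payroll", 23),        # late, but payroll isn't marked sensitive here
]
print(flag_access(build_baseline(history), events, sensitive={"scada_console"}))
# → [('ben', 'scada_console', 11), ('ana', 'scada_console', 2)]
```

A flag is a prompt for the threat management team to ask questions, not a verdict; plenty of legitimate work happens at 2 a.m. during a crisis.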

Emergency Services Sector Integration

The Emergency Services Sector maintains public safety and security, performs lifesaving operations, protects property and the environment, and assists communities impacted by disasters. This sector increasingly relies on digital tools to coordinate complex operations.

First responders use mobile apps for field coordination, cloud platforms for information sharing, and AI systems for resource optimization. During major incidents, these tools enable coordination across fire, police, emergency medical services, and other agencies that traditionally operated independently.

Lessons from the COVID-19 Pandemic

COVID-19 provided a brutal real-world test of organizational crisis management capabilities. The lessons learned continue shaping digital transformation strategies.

Digital Health Interventions

The G20’s first report on digital health for pandemic management outlined the emergency response landscape and proposed implementation recommendations. WHO took the lead in multiple strategic areas and committed to supporting countries in building their capacity to use digital interventions through strengthened international collaboration.

Key recommendations included supporting countries in documenting digital health maturity, facilitating international collaboration on data standards and interoperability, and promoting open-source digital health applications compliant with interoperability standards.

Contact Tracing and Surveillance

Digital contact tracing represented one of the pandemic’s most visible technology applications. Singapore’s TraceTogether app achieved 78% adoption and dramatically improved contact tracing efficiency compared to manual approaches.

But digital contact tracing also raised privacy concerns and highlighted the importance of public trust. Successful implementations balanced public health benefits against privacy protections—demonstrating that technical capability alone doesn’t ensure adoption.

Telemedicine and Remote Care

Telemedicine adoption accelerated dramatically during COVID-19. What had been a niche service became mainstream necessity almost overnight. WHO supported sharing telemedicine tools and platforms during states of emergency where these tools weren’t previously available.

This rapid scaling demonstrated both the potential and challenges of digital health transformation. Organizations with robust digital infrastructure adapted quickly. Those dependent on legacy systems struggled.

Managing the Infodemic

The infodemic—too much information including false or misleading content during a disease outbreak—created confusion and risk-taking behaviors that harmed health. It led to mistrust in health authorities and undermined public health responses.

With growing digitization, the challenge intensifies. Social media amplifies both accurate information and misinformation at unprecedented speed. Crisis managers must now combat not just the primary crisis but also information chaos that undermines response efforts.

Implementation Best Practices and Common Pitfalls

Organizations pursuing digital transformation for crisis management should learn from both successes and failures documented across industries.

Do’s: Actions That Drive Success

- Start with a clear roadmap aligned to organizational objectives. Vague aspirations don’t translate into operational capabilities. Specific milestones, defined responsibilities, and measurable outcomes create accountability.

- Invest in comprehensive employee training that goes beyond technical skills. Crisis management requires judgment, communication, and leadership. Training should develop these capabilities alongside technical competence.

- Choose scalable and flexible technologies that grow with organizational needs. Fixed-capacity systems become bottlenecks during crises when demand surges unpredictably.

- Prioritize cybersecurity from the beginning, not as an afterthought. Digital crisis management systems become attractive targets for adversaries. Robust security protects both the systems themselves and the sensitive data they contain.

- Test regularly through exercises and drills. Systems that work perfectly in demonstrations sometimes fail under the stress of actual emergencies. Regular testing identifies weaknesses while there’s still time to fix them.

Don’ts: Pitfalls to Avoid

- Don’t ignore the importance of cybersecurity. Digital systems introduce new vulnerabilities. Organizations that focus solely on functionality while neglecting security create new crisis risks even as they address existing ones.

- Don’t overcomplicate the implementation process. Complexity creates fragility. Simple, robust systems often outperform sophisticated but fragile alternatives during actual crises when conditions deviate from plans.

- Don’t assume technology alone solves organizational problems. Digital transformation requires cultural change, process redesign, and leadership commitment. Technology enables these changes but doesn’t create them automatically.

- Don’t neglect interoperability with external partners. Crises rarely respect organizational boundaries. Systems that can’t share information with partner organizations limit coordination and response effectiveness.

- Don’t skip the after-action review process. Each crisis provides learning opportunities. Organizations that fail to capture and apply these lessons repeat mistakes instead of improving.

| Do’s | Don’ts |
|---|---|
| Invest in employee training | Ignore the importance of cybersecurity |
| Start with a clear roadmap | Overcomplicate the implementation process |
| Choose scalable and flexible technologies | Assume technology alone solves problems |
| Test systems regularly through exercises | Neglect interoperability with partners |
| Prioritize cybersecurity from the start | Skip after-action reviews and learning |
| Document processes and decisions | Deploy without adequate user testing |
| Engage stakeholders throughout implementation | Ignore legacy system integration needs |

Measuring Success and Demonstrating Value

Digital transformation initiatives require significant investment. Organizations need frameworks for measuring success and demonstrating return on investment.

Key Performance Indicators

Effective metrics balance leading and lagging indicators. Leading indicators measure activities that should improve outcomes—training completion rates, system uptime, drill participation. Lagging indicators measure actual outcomes—response times, incident costs, recovery duration.

Common KPIs for digital crisis management include:

- Time from incident detection to initial response

- Number of personnel reached by alerts within target timeframes

- System availability during crisis events

- Accuracy of predictive risk assessments

- Cost of crisis response and recovery

- Time to restore normal operations

- Stakeholder satisfaction with crisis communications
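Several of these KPIs reduce to simple arithmetic over incident and alert logs. The sketch below computes two of them: median time from detection to initial response, and the share of personnel who acknowledged an alert within a target window. The record layout and sample numbers are hypothetical.

```python
from statistics import median

# Hypothetical incident records, timestamps in seconds.
incidents = [
    {"detected": 0, "first_response": 240},
    {"detected": 1000, "first_response": 1600},
    {"detected": 5000, "first_response": 5180},
]

# Hypothetical alert log: seconds from dispatch to acknowledgment for each
# person alerted, or None if they never acknowledged.
acknowledgments = [30, 45, None, 120, 610, 90, None, 20, 280, 400]

def detection_to_response(records):
    """Median minutes from incident detection to initial response."""
    return median((r["first_response"] - r["detected"]) / 60 for r in records)

def reached_within(acks, target_seconds):
    """Share of alerted personnel who acknowledged within the target."""
    reached = sum(1 for a in acks if a is not None and a <= target_seconds)
    return reached / len(acks)

print(detection_to_response(incidents))               # → 4.0
print(f"{reached_within(acknowledgments, 300):.0%}")  # → 60%
```

The median is used instead of the mean so that a single unusually slow incident doesn’t mask how the typical response performs; tracking both over time is also reasonable.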

Demonstrating Return on Investment

ROI for crisis management systems can be challenging to quantify. The value lies partly in crises prevented or mitigated—events that by definition don’t fully materialize.

Organizations can demonstrate value through multiple lenses:

- Operational efficiency improvements during normal operations, such as faster processes, reduced manual work, and better resource utilization

- Enhanced capabilities documented through exercises and drills

- Reduced insurance premiums reflecting lower risk profiles

- Faster recovery and reduced losses when incidents do occur
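One widely used way to put numbers on reduced losses comes from risk management: annualized loss expectancy (ALE) equals single loss expectancy (SLE) times the annualized rate of occurrence (ARO). Comparing ALE before and after an investment, net of the investment’s annual cost, gives a return-on-security-investment style ratio. All dollar figures and rates below are hypothetical.

```python
def annualized_loss_expectancy(single_loss, annual_rate):
    """ALE = single loss expectancy (SLE) x annualized rate of occurrence (ARO)."""
    return single_loss * annual_rate

def return_on_investment(ale_before, ale_after, annual_cost):
    """Net annual risk reduction relative to the system's annual cost."""
    return (ale_before - ale_after - annual_cost) / annual_cost

# Hypothetical: a disruption costing $400,000 that occurs about once every two
# years, where faster detection and recovery would cut the loss to $150,000.
before = annualized_loss_expectancy(400_000, 0.5)  # 200,000 per year
after = annualized_loss_expectancy(150_000, 0.5)   # 75,000 per year
print(f"{return_on_investment(before, after, 50_000):.0%}")  # → 150%
```

The weak point is the inputs: loss and frequency estimates are uncertain, so it is worth presenting a range of scenarios rather than a single headline ratio.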

Continuous Improvement Cycles

Measurement should drive continuous improvement, not just justify past investments. Regular reviews of metrics identify trends, highlight emerging issues, and guide resource allocation.

After each crisis event or major exercise, organizations should conduct comprehensive after-action reviews. What worked as planned? What didn’t? Why? What changes would improve future performance?

These insights feed back into updated plans, refined training, system enhancements, and adjusted resource allocations. Over time, this creates a virtuous cycle of continuous improvement.

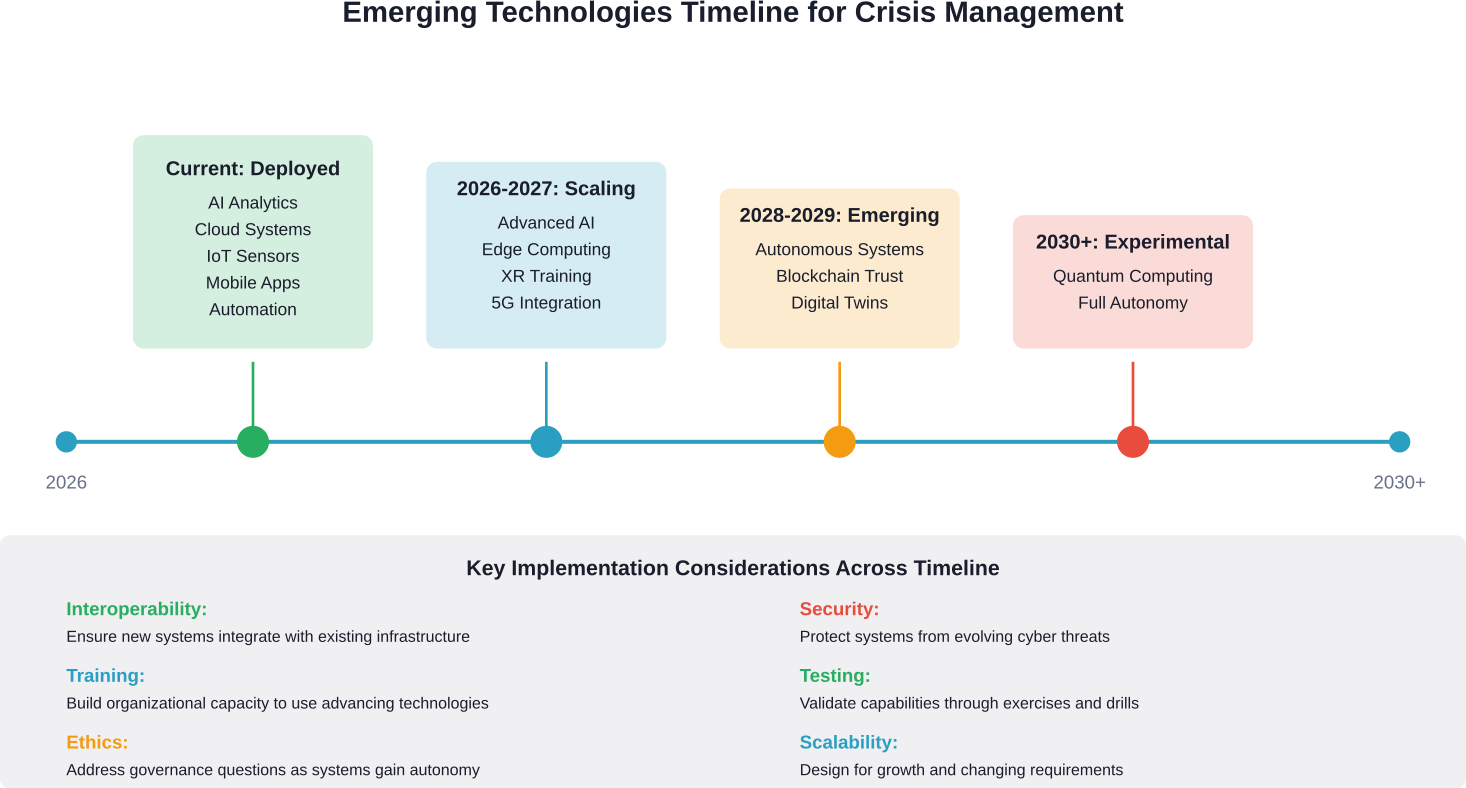

Future Trends Shaping Crisis Management

Digital transformation for crisis management continues evolving rapidly. Several emerging trends will shape the field’s future.

Advanced AI and Autonomous Systems

AI capabilities continue advancing. Future systems will increasingly operate autonomously—detecting threats, initiating responses, and coordinating resources with minimal human intervention.

This raises important governance questions. How much authority should autonomous systems have? What decisions require human judgment? How do organizations maintain appropriate oversight while benefiting from AI speed and consistency?

Edge Computing and Distributed Intelligence

Current systems often depend on centralized cloud infrastructure. Edge computing pushes intelligence to the network’s edges—enabling faster local decisions and reducing dependence on network connectivity.

For crisis management, this means systems that continue functioning even when communications infrastructure is damaged. Local sensors and devices can make critical decisions autonomously, then synchronize with central systems when connectivity is restored.
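The pattern behind this is usually called store-and-forward: the device acts on its own readings immediately and queues them until the uplink returns. Below is a minimal sketch; the `EdgeBuffer` class, the reading format, the alarm threshold, and the simulated `FlakyUplink` are all hypothetical.

```python
from collections import deque

class EdgeBuffer:
    """Store-and-forward queue for an edge device: decide locally, keep
    readings while the uplink is down, and flush them in order on reconnect."""

    def __init__(self, send, max_items=10_000):
        self.send = send  # callable that uploads one reading; raises on failure
        self.pending = deque(maxlen=max_items)  # oldest readings dropped if full

    def record(self, reading, alarm_level):
        # Decide locally first, so the alarm never waits on the network.
        alarm = reading["value"] >= alarm_level
        self.pending.append(reading)
        self.flush()
        return alarm

    def flush(self):
        while self.pending:
            try:
                self.send(self.pending[0])
            except ConnectionError:
                return False  # still offline; keep everything queued
            self.pending.popleft()  # remove only after a successful send
        return True

class FlakyUplink:
    """Simulated connection to central systems that can be switched off."""
    def __init__(self):
        self.online = False
        self.received = []

    def __call__(self, reading):
        if not self.online:
            raise ConnectionError("uplink down")
        self.received.append(reading)

uplink = FlakyUplink()
edge = EdgeBuffer(uplink)
print(edge.record({"value": 12}, alarm_level=10))  # → True (alarm raised while offline)
edge.record({"value": 3}, alarm_level=10)
uplink.online = True  # connectivity restored
edge.flush()
print(len(uplink.received), len(edge.pending))  # → 2 0
```

The bounded queue is a deliberate trade-off: a device offline for days keeps its most recent readings rather than running out of memory.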

Quantum Computing for Complex Modeling

Quantum computing promises computational capabilities far beyond current systems. For crisis management, this could enable vastly more sophisticated scenario modeling—evaluating thousands of response options across complex, interconnected systems in real time.

While quantum computing remains largely experimental as of 2026, organizations should monitor developments and consider how future capabilities might transform crisis management approaches.

Blockchain for Trust and Transparency

Blockchain technology creates tamper-evident records and enables coordination among parties who don’t fully trust each other. For crisis management, this could support secure information sharing across organizations, transparent resource allocation, and verified credential management.

Applications remain early stage, but the underlying capabilities address real coordination challenges in multi-organization crisis response.
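The tamper-evidence property comes from hash chaining: each record’s hash covers the previous record’s hash, so editing any earlier entry invalidates everything after it. The sketch below shows only that mechanism; it has none of a blockchain’s replication or consensus across organizations, and the resource-allocation records are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)  # stable serialization
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def append_entry(chain, record):
    """Append a record whose hash covers its contents and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev_hash,
                  "hash": entry_hash(prev_hash, record)})

def verify(chain):
    """Recompute every link; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

ledger = []
append_entry(ledger, {"agency": "Water Authority", "resource": "generator", "qty": 4})
append_entry(ledger, {"agency": "Shelter Team", "resource": "cots", "qty": 200})
print(verify(ledger))            # → True
ledger[0]["record"]["qty"] = 40  # tamper with an earlier allocation
print(verify(ledger))            # → False
```

On its own, a hash chain proves that records weren’t altered after the fact; it still relies on someone holding the latest hash, which is the part distributed ledgers add.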

Extended Reality for Training and Coordination

Virtual reality, augmented reality, and mixed reality technologies—collectively called extended reality or XR—offer new approaches to training and coordination.

VR enables immersive crisis simulations that develop skills and test responses without real-world risks. AR overlays digital information onto physical environments—helping responders navigate unfamiliar locations, identify hazards, or access technical information hands-free.

Sector-Specific Applications

Different sectors face unique crisis management challenges that benefit from tailored digital approaches.

Healthcare and Public Health

Healthcare organizations manage crises ranging from disease outbreaks to mass casualty incidents to cybersecurity breaches. Digital transformation enables better resource tracking, patient flow management, supply chain visibility, and clinical decision support.

The COVID-19 pandemic accelerated digital health adoption dramatically. Telemedicine, remote monitoring, digital contact tracing, and data-driven resource allocation became mainstream necessities.

Financial Services

Banks and financial institutions face crises including cyberattacks, fraud, market disruptions, and operational outages. Digital systems enable real-time fraud detection, automated compliance monitoring, resilient transaction processing, and rapid incident response.

Research on relationship-first digital transformation shows small financial institutions can compete effectively even without the scale advantages of larger competitors. The key lies in strategic technology adoption aligned with organizational strengths.

Manufacturing and Supply Chain

Supply chain disruptions during COVID-19 highlighted vulnerabilities in global manufacturing networks. Digital transformation provides supply chain visibility, alternative sourcing identification, demand forecasting, and inventory optimization.

IoT sensors track materials and products throughout supply chains. AI analyzes patterns to predict disruptions before they fully materialize. Cloud platforms enable coordination across complex supplier networks.

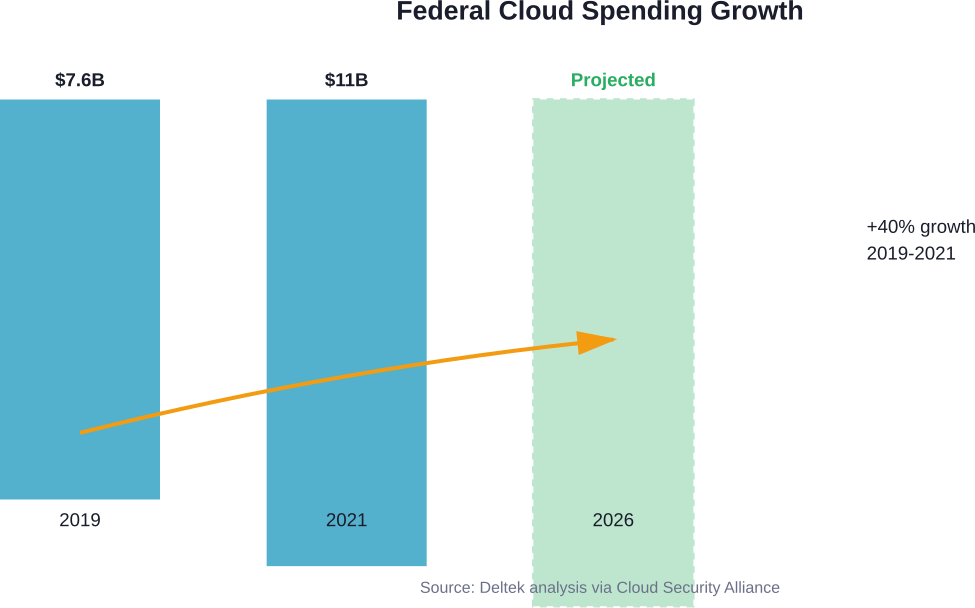

Government and Public Sector

Government agencies manage diverse crises from natural disasters to public health emergencies to civil unrest. Digital transformation enables better citizen communication, resource coordination, interagency collaboration, and evidence-based policy making.

Crisis-driven digital transformation in the public sector often faces unique challenges—legacy systems, procurement constraints, political pressures, and diverse stakeholder needs. Successful initiatives address these constraints thoughtfully rather than ignoring them.

Frequently Asked Questions

- What is digital transformation for crisis management?

Digital transformation for crisis management refers to integrating advanced technologies—including AI, cloud computing, IoT sensors, and real-time analytics—into organizational crisis response capabilities. This transformation moves organizations from reactive, manual approaches to proactive, technology-enabled systems that can predict, prevent, and respond to emergencies more effectively.

- How much does implementing digital crisis management systems cost?

Implementation costs vary dramatically based on organizational size, existing infrastructure, chosen technologies, and implementation scope. Small organizations might start with cloud-based solutions costing thousands of dollars annually, while large enterprises or government agencies might invest millions in comprehensive systems. Organizations should check with specific vendors for current pricing and consider phased implementation to spread costs over time.

- What technologies are most important for crisis management?

Core technologies include cloud computing for remote accessibility and scalability, AI and machine learning for predictive analytics and decision support, real-time data integration platforms for situational awareness, IoT sensors for monitoring and early warning, and automation tools for executing response protocols. The specific technology priorities depend on the types of crises an organization faces most frequently.

- How do organizations measure the success of digital crisis management initiatives?

Success metrics typically include response time improvements, reduced crisis-related costs, faster recovery to normal operations, enhanced coordination effectiveness, system availability during emergencies, and stakeholder satisfaction with crisis communications. Organizations should establish baseline measurements before implementation and track improvements over time through both real incidents and regular exercises.

- What are the biggest challenges in implementing digital crisis management systems?

Common challenges include integration with legacy systems, cybersecurity risks, staff training and change management, budget constraints, interoperability across partner organizations, and maintaining systems during normal operations when crisis urgency isn’t present. Successful implementations address these challenges through clear roadmaps, executive sponsorship, phased deployment, and continuous testing.

- How does digital transformation help prevent crises rather than just responding to them?

Predictive analytics identify emerging risks before they fully materialize, allowing proactive intervention. Continuous monitoring detects anomalies early when they’re still manageable. Scenario modeling tests organizational responses against potential futures, revealing vulnerabilities that can be addressed preemptively. This shifts organizations from purely reactive postures to proactive risk management.

- Can small organizations benefit from digital crisis management, or is it only for large enterprises?

Small organizations can absolutely benefit, often through cloud-based solutions that don’t require massive upfront infrastructure investment. Many crisis management platforms offer tiered pricing and scalable features. The key is identifying the specific crisis risks most relevant to the organization and prioritizing technologies that address those risks effectively. Small organizations shouldn’t try to replicate enterprise-scale systems but should focus on targeted solutions that provide meaningful risk reduction within budget constraints.
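The FAQ answer on prevention notes that continuous monitoring detects anomalies early, while they are still manageable. A minimal sketch of that idea follows. It assumes a single numeric sensor feed and uses a rolling z-score rule: a reading is flagged when it sits more than `threshold` standard deviations from the mean of the last `window` readings. The class name and both defaults are hypothetical illustration choices, not a specific product's method.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flag readings that sit far outside the recent rolling window (z-score rule)."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        # Both defaults are hypothetical illustration values, not recommendations.
        if window < 2:
            raise ValueError("window must hold at least 2 readings")
        self.readings = deque(maxlen=window)  # oldest readings drop off automatically
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Score value against the current window, then add it; True means anomalous."""
        anomalous = False
        if len(self.readings) == self.readings.maxlen:  # only score once the window is full
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
            else:
                # A perfectly flat window: any change at all is unusual.
                anomalous = value != mu
        self.readings.append(value)
        return anomalous


if __name__ == "__main__":
    detector = RollingAnomalyDetector(window=5, threshold=3.0)
    # Hypothetical sensor stream: stable readings, then a sudden spike.
    stream = [10.0, 10.2, 9.9, 10.1, 10.0, 10.1, 25.0]
    for t, reading in enumerate(stream):
        if detector.update(reading):
            print(f"t={t}: anomalous reading {reading}")
```

One design choice worth noting: flagged readings still enter the window. That keeps the detector adaptive to a genuine, lasting level shift, but it also means a long run of abnormal readings will gradually be treated as normal.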

Conclusion: Building Resilience for an Uncertain Future

Digital transformation has fundamentally altered crisis management capabilities. Organizations that thoughtfully integrate technology into their crisis response frameworks can detect threats earlier, respond faster, coordinate more effectively, and recover more completely than those relying on traditional approaches.

But technology alone doesn’t create resilience. Successful digital transformation requires strategic planning, cultural change, continuous training, robust cybersecurity, and sustained leadership commitment. Organizations must balance innovation with security, autonomy with oversight, and standardization with flexibility.

CISA’s emphasis on building national resilience for an era of disruption reflects the reality that crises will continue evolving in complexity and interconnectedness. Climate change, cyber threats, pandemics, supply chain fragility, and geopolitical instability create an operating environment where preparedness isn’t optional—it’s existential.

The organizations that thrive won’t be those that avoid all crises. That’s impossible in the modern world. They’ll be those that build systematic resilience through thoughtful digital transformation—creating capabilities to withstand disruption, adapt to changing conditions, and emerge stronger from each challenge.

Research shows transformational leadership enhanced resilience by 82% in organizations facing cyber incidents. Similarly, ethical leadership improved organizational citizenship behaviors by 75% in crisis situations. These improvements didn’t come from technology alone but from leaders who understood how to strategically deploy technology in service of organizational objectives.

As we move deeper into 2026 and beyond, the gap will widen between digitally enabled organizations and those still relying on paper plans and phone trees. The former will manage crises as opportunities to demonstrate capability and build stakeholder confidence. The latter will struggle to survive disruptions that their better-prepared competitors navigate successfully.

The question isn’t whether to pursue digital transformation for crisis management. It’s how quickly and how thoughtfully organizations can execute this transformation before the next crisis tests their capabilities.

Start by assessing current capabilities honestly. Identify the most significant gaps between current state and desired future state. Develop a clear roadmap with specific milestones and success metrics. Invest in training that builds both technical competence and crisis leadership. Choose technologies that prioritize interoperability, security, and scalability. Test regularly through realistic exercises. Learn continuously from each incident and drill.
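One way to make "specific milestones and success metrics" measurable is to compare each metric with the baseline captured before implementation, as the FAQ recommends. The minimal sketch below assumes each metric has a single baseline value, a single current value, and a known direction: lower is better for response or recovery time, higher is better for availability. The metric names and numbers are hypothetical and are not results from any real deployment.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    baseline: float        # value measured before implementation
    current: float         # value measured after (real incident or exercise)
    lower_is_better: bool  # True for times and costs, False for availability

    def improvement_pct(self) -> float:
        """Percentage improvement relative to the pre-implementation baseline."""
        if self.baseline == 0:
            raise ValueError(f"{self.name}: baseline must be non-zero")
        change = (self.current - self.baseline) / self.baseline * 100
        # For "lower is better" metrics, a drop counts as a positive improvement.
        return -change if self.lower_is_better else change


def report(metrics: list[Metric]) -> dict[str, float]:
    """Map each metric name to its improvement, rounded to one decimal place."""
    return {m.name: round(m.improvement_pct(), 1) for m in metrics}


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    metrics = [
        Metric("median_response_minutes", baseline=45.0, current=30.0, lower_is_better=True),
        Metric("recovery_hours", baseline=72.0, current=54.0, lower_is_better=True),
        Metric("system_availability_pct", baseline=97.0, current=99.5, lower_is_better=False),
    ]
    for name, pct in report(metrics).items():
        print(f"{name}: {pct:+.1f}%")
```

Normalizing every metric so that a positive number always means "better" keeps a mixed scorecard readable when it is reviewed after each drill or incident.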

Above all, recognize that building resilience is a journey, not a destination. The threat landscape keeps evolving. Technology keeps advancing. Organizational needs keep changing. Digital transformation for crisis management requires sustained commitment, not one-time projects.

Organizations willing to make this commitment will find themselves better prepared not just for the crises they can anticipate but also for the unexpected disruptions that inevitably arise in complex, interconnected systems. That preparation represents perhaps the most valuable investment any organization can make in an uncertain future.