Quick Summary: Digital transformation and sustainability are converging to create powerful solutions for environmental challenges. Organizations leveraging AI, IoT, blockchain, and cloud computing can reduce carbon footprints, optimize resource use, and drive measurable environmental impact while maintaining business growth. The integration of digital technologies with sustainability goals represents a critical pathway toward meeting global climate targets and achieving long-term resilience.

The intersection of digital innovation and environmental responsibility has become impossible to ignore. As climate change accelerates and regulatory pressure mounts, businesses face a dual challenge: modernize operations while simultaneously reducing environmental impact.

But here’s the thing—digital transformation isn’t just about efficiency anymore. It’s becoming the backbone of how organizations address their sustainability commitments. From smart energy grids to AI-powered waste reduction, technology is reshaping what’s possible in environmental management.

According to the World Economic Forum, digital technologies could help reduce up to 20 percent of global greenhouse gas (GHG) emissions by 2050. That’s not a small number. Yet many organizations still treat digital initiatives and sustainability efforts as separate tracks, missing the tremendous synergies between them.

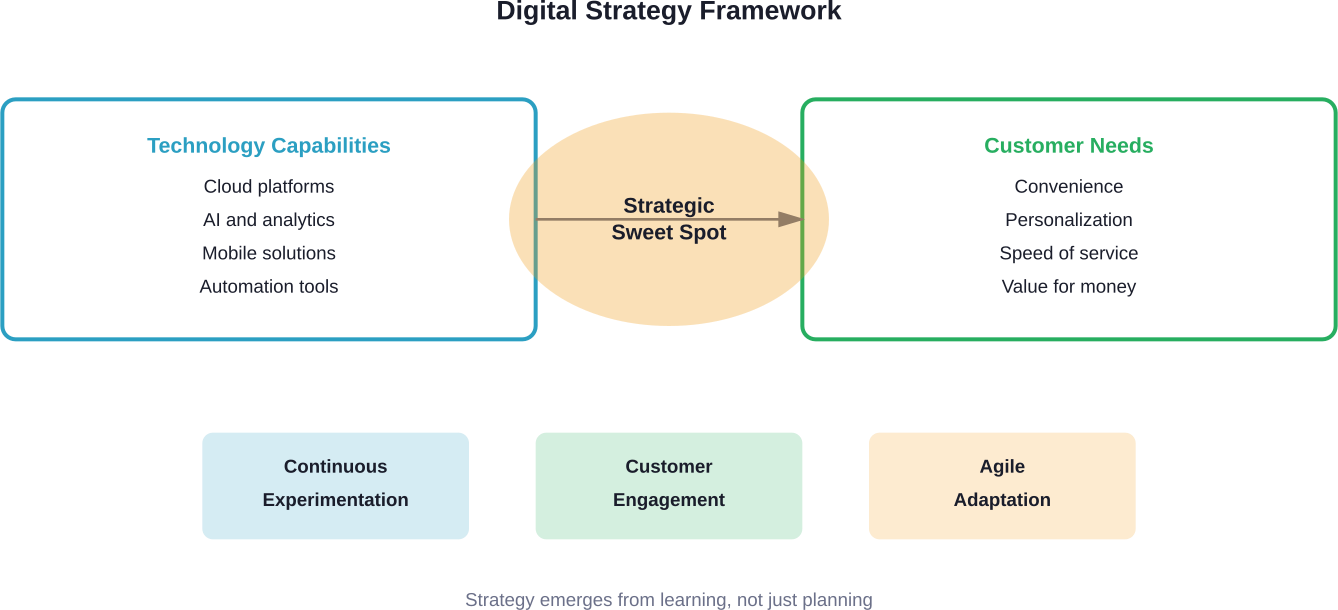

The question isn’t whether to pursue digital transformation or sustainability. It’s how to integrate both strategies into a unified approach that delivers environmental and business results simultaneously.

Understanding the Digital-Sustainability Convergence

Digital transformation fundamentally changes how organizations operate, make decisions, and create value. Sustainability transformation addresses how businesses impact the environment and society. When the two are pursued together, each makes the other more effective.

The U.S. Environmental Protection Agency notes that embodied carbon—emissions from constructing, maintaining, and demolishing buildings—is responsible for 11 percent of global greenhouse gas emissions. Material recovery through improved recycling technologies represents one pathway to reducing this impact, which is why the EPA supports technology development through programs focused on circular economy solutions.

Real talk: the relationship works both ways. Digital technologies enable better sustainability outcomes through monitoring, optimization, and transparency. Simultaneously, sustainability goals drive innovation in digital solutions, pushing developers to create energy-aware computing and clean AI systems.

Research published in Frontiers in Environmental Science examined how digital transformation impacts corporate sustainability across Chinese listed companies. The analysis revealed positive correlations between digitalization initiatives and environmental performance, suggesting that strategic technology adoption contributes measurably to sustainability outcomes.

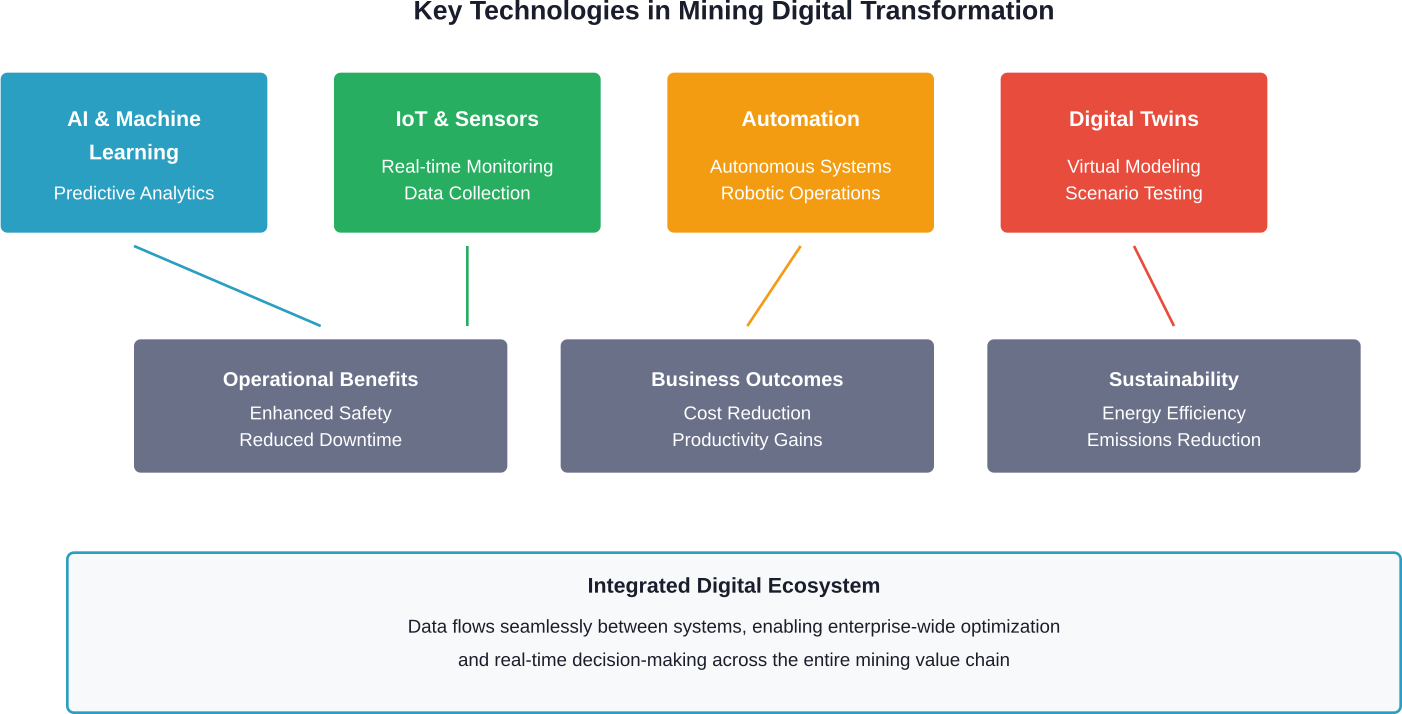

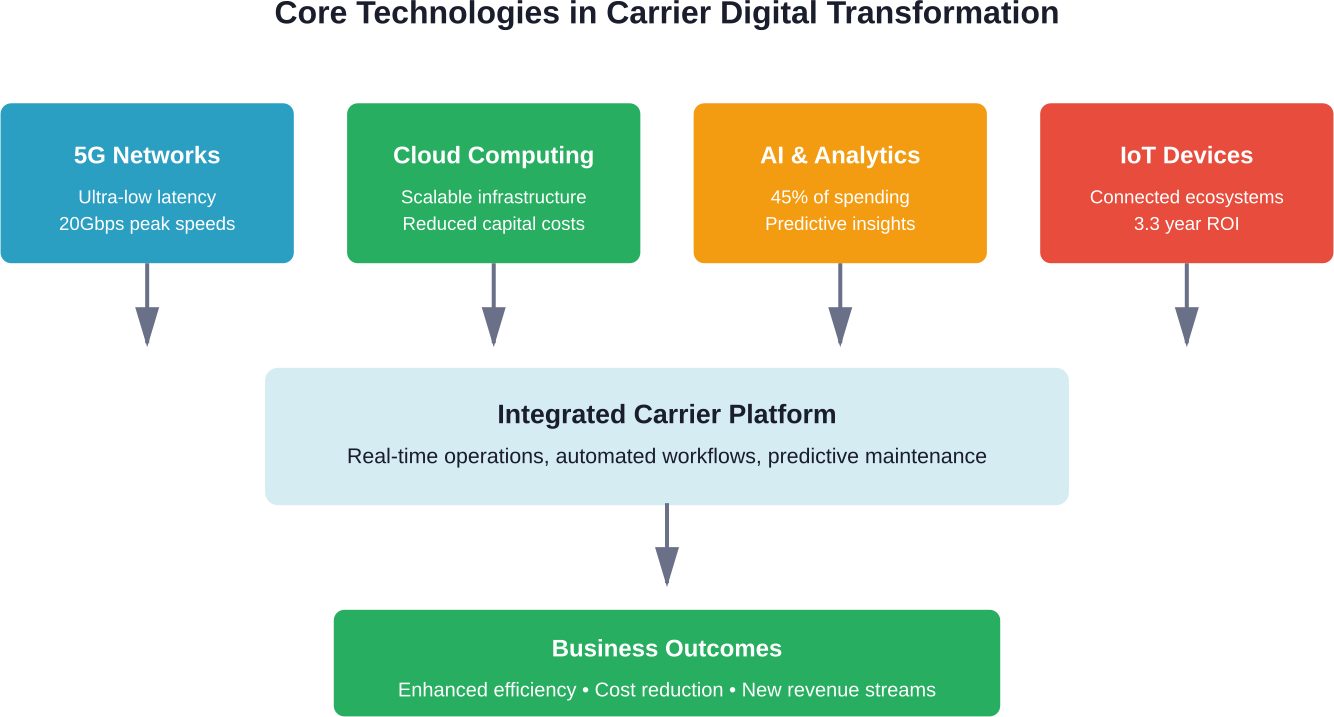

Core Technologies Driving Sustainable Digital Transformation

Several digital technologies stand out as particularly impactful for sustainability efforts. Understanding their specific applications helps organizations prioritize investments and build effective strategies.

Artificial Intelligence and Machine Learning

AI-driven systems excel at optimizing complex processes where human decision-making can’t keep pace with data volume. Energy management represents one of the clearest use cases.

Smart building systems use machine learning algorithms to predict heating, cooling, and lighting needs based on occupancy patterns, weather forecasts, and historical usage. These systems adjust in real-time, reducing energy consumption without sacrificing comfort or productivity.

Manufacturing operations deploy AI to minimize waste by predicting equipment failures, optimizing production schedules, and identifying quality issues before defective products consume additional resources. The combination of predictive maintenance and quality optimization cuts both operational costs and environmental impact.

That said, AI itself carries environmental costs. The computing power required for training large models generates significant carbon emissions. Organizations pursuing sustainable AI need to consider model efficiency, renewable energy sources for data centers, and the net environmental benefit of each application.

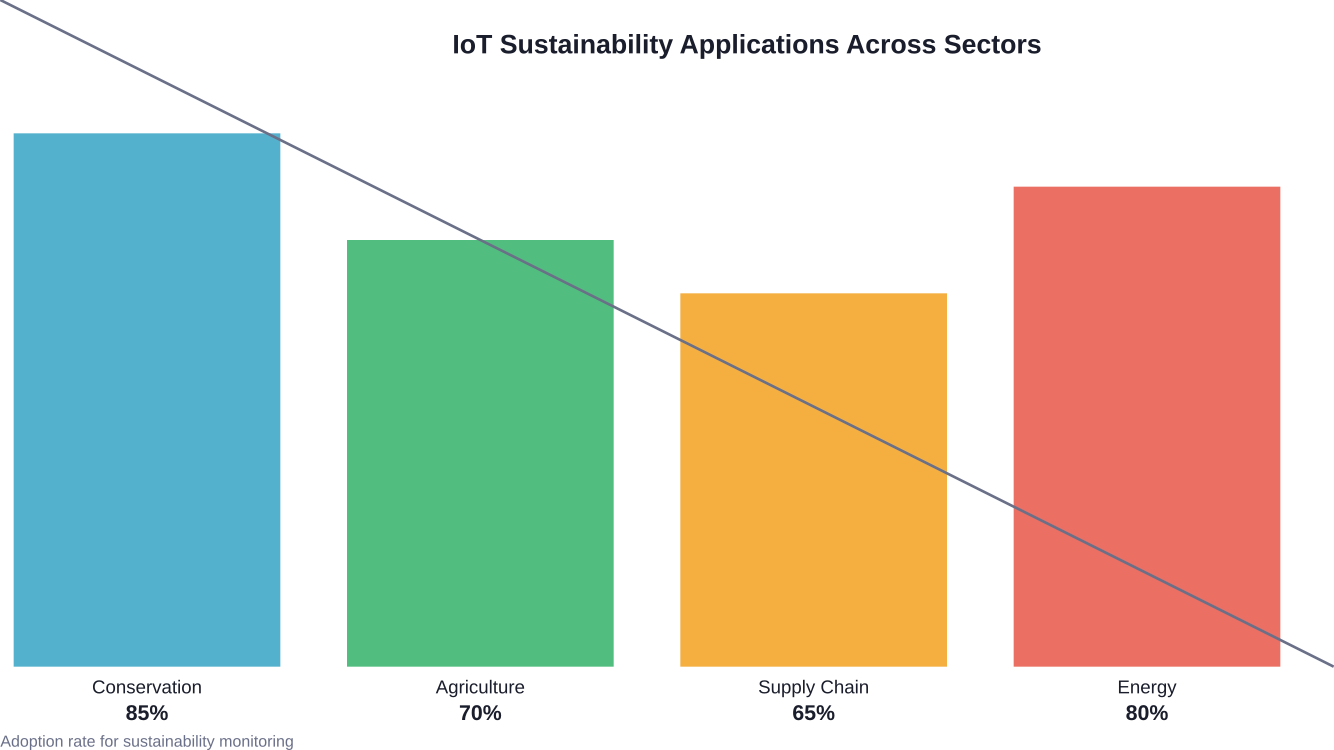

Internet of Things (IoT) for Environmental Monitoring

IoT sensors provide the real-time data foundation for many sustainability initiatives. Deployed across natural ecosystems, these devices monitor air quality, water levels, soil conditions, and wildlife movements with unprecedented granularity.

Conservation efforts benefit enormously from this continuous monitoring capability. Park managers detect illegal logging or poaching activities faster. Water resource managers identify contamination events before they spread. Agricultural operations optimize irrigation based on actual soil moisture rather than schedules.

Supply chain applications of IoT enable tracking of products throughout their lifecycle, monitoring conditions like temperature and humidity that affect product quality and waste. Retailers reduce spoilage of perishable goods. Manufacturers ensure proper handling of sensitive materials.

Blockchain for Supply Chain Transparency

Sustainability claims mean nothing without verification. Blockchain technology creates tamper-evident records of product journeys, making greenwashing significantly harder, provided the data entered at each step is accurate in the first place.
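
The property that makes this work is hash chaining. Each record stores a digest of its predecessor, so editing any past entry breaks every link after it. The Python sketch below illustrates only that idea. It is a single-party hash chain, not a blockchain, and it has no distribution or consensus. The `Record` class, the event fields, and the site names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_PREV = "0" * 64  # placeholder "previous digest" for the first record


@dataclass
class Record:
    """One supply-chain event, linked to the previous record by its SHA-256 digest."""
    index: int
    event: dict
    prev_digest: str
    digest: str = field(init=False)

    def __post_init__(self) -> None:
        self.digest = self.compute_digest()

    def compute_digest(self) -> str:
        # Deterministic serialization: identical content always yields the identical digest.
        payload = json.dumps(
            {"index": self.index, "event": self.event, "prev": self.prev_digest},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(chain: list, event: dict) -> None:
    prev = chain[-1].digest if chain else GENESIS_PREV
    chain.append(Record(len(chain), event, prev))


def verify(chain: list) -> bool:
    """Return False if any record was altered after it was appended."""
    for i, rec in enumerate(chain):
        if rec.digest != rec.compute_digest():
            return False
        if rec.prev_digest != (chain[i - 1].digest if i else GENESIS_PREV):
            return False
    return True


ledger = []
append_event(ledger, {"step": "harvest", "material": "organic cotton", "site": "farm-A"})
append_event(ledger, {"step": "spinning", "site": "mill-B"})
append_event(ledger, {"step": "shipping", "mode": "sea"})
print(verify(ledger))  # True

ledger[0].event["material"] = "conventional cotton"  # rewrite history
print(verify(ledger))  # False
```

A single owner could still recompute every digest after an edit. Real blockchains counter this by replicating the chain across parties who must agree on each new block.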

Fashion brands use blockchain to trace garments from raw material sourcing through manufacturing and distribution. Consumers scan QR codes to verify claims about organic cotton, fair labor practices, or carbon-neutral shipping.

Food supply chains deploy similar systems to track organic certifications, sustainable fishing practices, and humane animal treatment. The transparency creates accountability at every step, rewarding genuinely sustainable practices and exposing problematic ones.

Carbon credit markets also benefit from blockchain’s verification capabilities. Trading platforms record emissions reductions and credit transfers with high transparency, reducing fraud and increasing confidence in offset programs.

Cloud Computing and Data Centers

Cloud infrastructure enables the scalability and data processing that powers other sustainability technologies. But data centers themselves consume massive amounts of energy.

Major cloud providers have responded by committing to renewable energy and improving efficiency. Consolidating workloads in hyperscale facilities typically consumes less total energy than distributed on-premises infrastructure, though the net benefit depends on specific circumstances.

Organizations migrating to cloud platforms should evaluate providers based on renewable energy commitments, power usage effectiveness (PUE) ratings, and data center locations. PUE is the ratio of total facility energy to the energy used by IT equipment, so values closer to 1.0 indicate less overhead for cooling and power distribution. Geographic choices affect both the carbon intensity of electricity and cooling requirements.
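
PUE and grid carbon intensity combine into a rough operational-emissions estimate: IT energy × PUE × grid intensity. The Python sketch below compares two regions under that simplification. It ignores embodied hardware emissions and renewable-energy contracts. The region names and all figures are hypothetical.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness = total facility energy / IT equipment energy (1.0 is ideal)."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def workload_emissions_kg(it_kwh: float, pue_value: float, grid_kg_per_kwh: float) -> float:
    """Rough operational emissions: IT energy, scaled up by facility overhead (PUE),
    times the carbon intensity of the local grid. Ignores hardware manufacturing."""
    return it_kwh * pue_value * grid_kg_per_kwh


# Hypothetical figures for the same 10,000 kWh IT workload in two regions.
regions = {
    "region_a": {"pue": pue(12_000, 10_000), "grid_kg_per_kwh": 0.40},
    "region_b": {"pue": pue(11_000, 10_000), "grid_kg_per_kwh": 0.05},
}
for name, r in regions.items():
    kg = workload_emissions_kg(10_000, r["pue"], r["grid_kg_per_kwh"])
    print(f"{name}: PUE {r['pue']:.2f}, about {kg:,.0f} kg CO2e")
```

With these assumed numbers, region_a comes to roughly 4,800 kg and region_b to roughly 550 kg. The grid's carbon intensity outweighs the small PUE difference.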

Practical Applications Across Industries

Different sectors face unique sustainability challenges that digital transformation addresses in sector-specific ways.

Manufacturing and Production

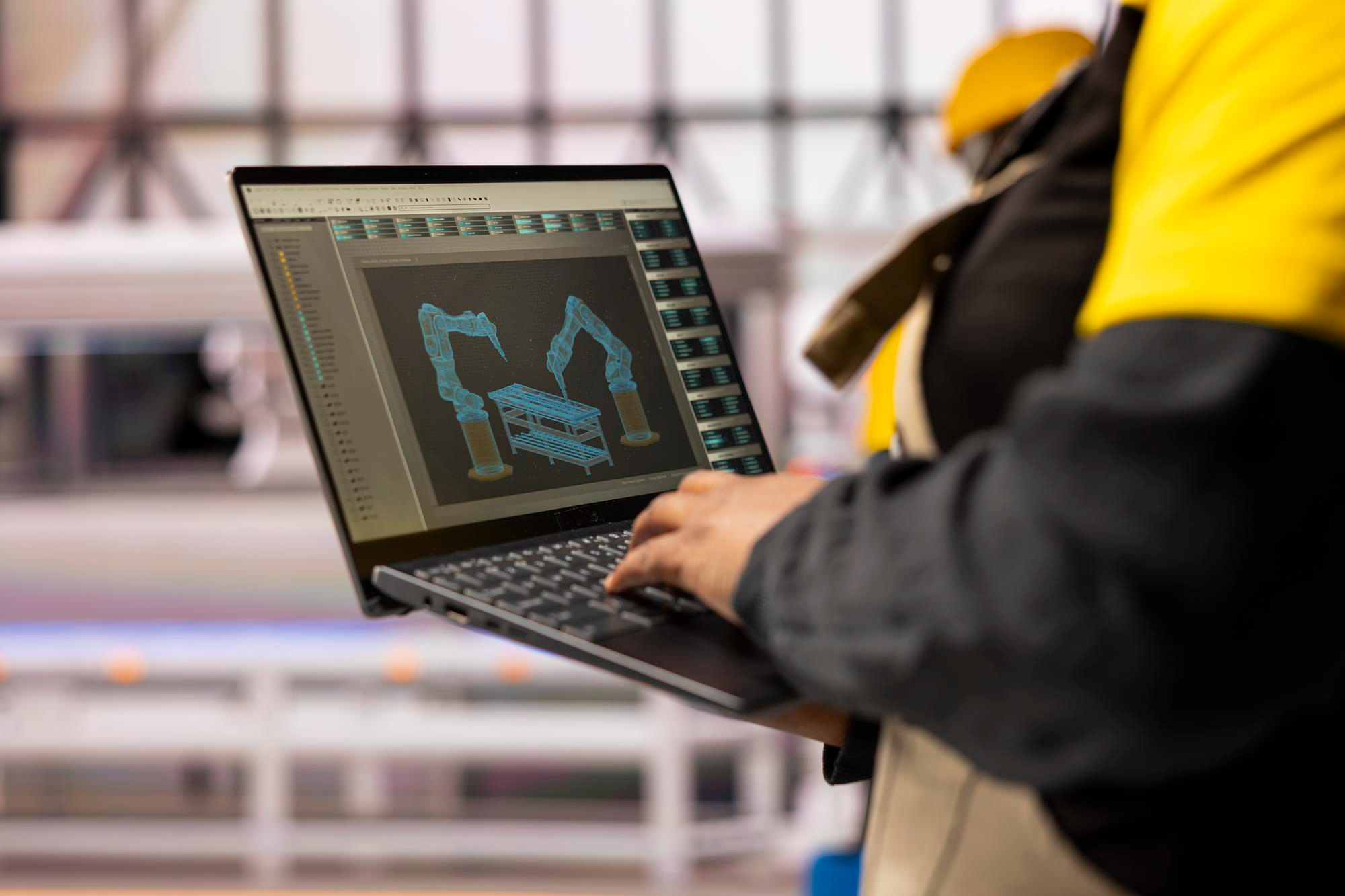

Sustainable manufacturing leverages digital twins, virtual replicas of physical production systems that enable testing and optimization without consuming physical materials. Engineers simulate process changes, identify bottlenecks, and predict outcomes before implementing changes on factory floors.

Additive manufacturing (3D printing) reduces material waste by building products layer-by-layer rather than cutting away excess material. Complex geometries that minimize weight while maintaining strength become feasible, reducing material use and transportation emissions simultaneously.

Predictive maintenance systems monitor equipment health, scheduling repairs before failures occur. This prevents both unplanned downtime and the environmental impact of catastrophic equipment failures that might release hazardous materials or require energy-intensive emergency responses.
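
Production predictive-maintenance systems often use trained models. A simple statistical stand-in shows the underlying idea: flag sensor readings that drift far from recent behavior. The Python sketch below uses a rolling z-score. The window size, the threshold, and the vibration data are hypothetical.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(readings, window=10, threshold=3.0):
    """Return indices of readings that sit more than `threshold` standard deviations
    away from the mean of the preceding `window` readings (a rolling z-score)."""
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu, sigma = mean(history), stdev(history)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                flagged.append(i)
        history.append(value)
    return flagged


# Hypothetical vibration readings (mm/s) from one motor; index 12 is a spike.
vibration = [2.0, 2.1, 1.9, 2.0, 2.2, 2.1, 2.0, 1.9, 2.1, 2.0, 2.05, 2.1, 3.5, 2.0]
print(flag_anomalies(vibration))
```

Each flagged index would trigger an inspection work order rather than an automatic shutdown.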

Energy and Utilities

Smart grids represent perhaps the most transformative application of digital technology to sustainability. These systems balance supply and demand in real-time, integrating variable renewable sources like solar and wind more effectively than traditional infrastructure.

Distributed energy resources—rooftop solar, battery storage, electric vehicles—create bidirectional power flows that require sophisticated digital management. AI algorithms predict generation and consumption patterns, optimize storage charging cycles, and maintain grid stability.

The World Resources Institute’s Energy Access Explorer tool demonstrates how geospatial data and digital platforms accelerate energy access planning. As the first Digital Public Good in the energy domain, it analyzes high-resolution information to support evidence-based infrastructure decisions.

Transportation and Logistics

Route optimization algorithms reduce fuel consumption by analyzing traffic patterns, delivery windows, and vehicle capacities. Fleet management systems track driver behavior, identifying inefficient practices like excessive idling or aggressive acceleration.
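
Commercial route planners solve vehicle-routing problems with time windows and capacity limits. The simplest heuristic still shows why stop ordering matters for fuel use. The Python sketch below applies nearest-neighbor ordering and uses distance as a proxy for fuel. The coordinates are hypothetical.

```python
import math


def route_length(points, order):
    """Length of a closed tour: visit points in `order`, then return to the first one."""
    return sum(
        math.dist(points[order[i]], points[order[(i + 1) % len(order)]])
        for i in range(len(order))
    )


def nearest_neighbor_route(points, start=0):
    """Greedy heuristic: from the current stop, always go to the closest unvisited stop."""
    unvisited = set(range(len(points))) - {start}
    order = [start]
    while unvisited:
        here = points[order[-1]]
        nxt = min(unvisited, key=lambda j: math.dist(here, points[j]))
        order.append(nxt)
        unvisited.remove(nxt)
    return order


# Hypothetical depot (index 0) and four delivery stops on a straight road, in km.
stops = [(0, 0), (5, 0), (1, 0), (6, 0), (2, 0)]
greedy = nearest_neighbor_route(stops)
print(greedy, route_length(stops, greedy))           # visit order and tour length
print(route_length(stops, list(range(len(stops)))))  # naive order, for comparison
```

Here the greedy tour covers 12 km against 20 km for the listed order. Nearest-neighbor carries no optimality guarantee, so real systems refine its output with local search or a dedicated solver.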

Electric vehicle adoption accelerates as charging infrastructure becomes smarter. Demand response programs charge vehicles when renewable generation peaks, aligning transportation electrification with clean energy availability.
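
A minimal sketch of carbon-aware charging follows. Given an hourly forecast of grid carbon intensity, fill the cleanest hours first until the battery has the energy it needs. For this simplified model, that greedy rule gives the minimum-emission plan. The model assumes one vehicle, a fixed per-hour power cap, and no tariff or battery-health constraints. The forecast values and charger rating are hypothetical.

```python
def schedule_charging(carbon_g_per_kwh, energy_needed_kwh, max_kw):
    """Fill the lowest-carbon hours first until the vehicle has the energy it needs.

    carbon_g_per_kwh: forecast grid intensity for each hour before departure.
    Returns the kWh to deliver in each hour (one-hour slots, so at most max_kw per slot).
    """
    plan = [0.0] * len(carbon_g_per_kwh)
    remaining = energy_needed_kwh
    for hour in sorted(range(len(plan)), key=lambda h: carbon_g_per_kwh[h]):
        if remaining <= 1e-9:
            break
        plan[hour] = min(max_kw, remaining)
        remaining -= plan[hour]
    if remaining > 1e-9:
        raise ValueError("not enough hours before departure to deliver the energy")
    return plan


def charging_emissions_kg(plan, carbon_g_per_kwh):
    """Total emissions of a charging plan, converting grams to kilograms."""
    return sum(kwh * g for kwh, g in zip(plan, carbon_g_per_kwh)) / 1000


# Hypothetical overnight forecast (g CO2e/kWh), 20 kWh needed, 7.2 kW home charger.
forecast = [450, 420, 300, 180, 120, 150, 380, 500]
smart = schedule_charging(forecast, 20, 7.2)
naive = [7.2, 7.2, 5.6, 0, 0, 0, 0, 0]  # start charging as soon as the car is plugged in
print(smart)
print(charging_emissions_kg(smart, forecast), charging_emissions_kg(naive, forecast))
```

Under these assumed numbers, shifting the same 20 kWh into the cleanest hours cuts emissions from about 7.9 kg to about 3.0 kg.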

Shared mobility platforms reduce total vehicle miles traveled by matching riders and optimizing vehicle utilization. The sustainability benefit depends on displacing private car trips rather than public transit, making implementation details critical.

Agriculture and Food Systems

Precision agriculture uses GPS, sensors, and data analytics to apply water, fertilizer, and pesticides only where needed. This targeted approach reduces chemical runoff, conserves water, and lowers input costs while maintaining or improving yields.
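
For irrigation, "only where needed" can be as simple as a per-zone rule driven by soil-moisture sensors. The Python sketch below skips any zone above a trigger level and tops up drier zones to a target. The water depth is computed as the moisture deficit times the root-zone depth. The thresholds, root depth, and zone readings are hypothetical. The sketch also ignores irrigation efficiency and rainfall forecasts.

```python
def irrigation_plan(zone_vwc, target_vwc=0.30, trigger_vwc=0.22, root_depth_mm=300):
    """Millimetres of water to apply per zone.

    zone_vwc maps zone id -> measured volumetric water content (m3/m3).
    Zones at or above the trigger get nothing; drier zones are topped up to the target.
    Deficit depth (mm) = (target - current VWC) x root-zone depth (mm).
    """
    plan = {}
    for zone, vwc in zone_vwc.items():
        deficit_mm = (target_vwc - vwc) * root_depth_mm if vwc < trigger_vwc else 0.0
        plan[zone] = round(deficit_mm, 1)
    return plan


# Hypothetical sensor readings from three irrigation zones.
readings = {"north": 0.18, "south": 0.27, "east": 0.21}
print(irrigation_plan(readings))
```
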

Vertical farming systems leverage IoT sensors and automated controls to grow crops in controlled environments with dramatically reduced water consumption and little or no pesticide use. While energy-intensive, facilities powered by renewable sources can produce food with lower overall environmental impact than traditional agriculture.

Supply chain digitalization reduces food waste by improving demand forecasting, optimizing inventory levels, and coordinating harvesting with market needs. Given that food waste contributes significantly to global emissions, these improvements carry substantial environmental significance.

Measuring and Reporting Environmental Impact

Effective sustainability transformation requires rigorous measurement. Digital tools make this increasingly feasible and standardized.

The ISO 14019-4:2026 standard addresses principles and requirements for bodies validating and verifying sustainability information. This framework supports credible environmental reporting as stakeholder demands for transparency intensify.

Software platforms now automate carbon accounting, pulling data from utility bills, travel records, procurement systems, and production logs. These tools calculate Scope 1, 2, and 3 emissions according to established protocols, reducing the manual effort that previously made comprehensive accounting impractical for many organizations.
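
At their core, these platforms multiply activity data by emission factors and total the results by scope. Scope 1 covers direct emissions, Scope 2 covers purchased electricity, heat, steam, and cooling, and Scope 3 covers the rest of the value chain. The Python sketch below shows that core calculation. The `Activity` class is hypothetical, and the emission factors are placeholders chosen for readable arithmetic, not published values.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Activity:
    source: str
    scope: int                 # 1 = direct, 2 = purchased energy, 3 = other value chain
    quantity: float            # activity data, e.g. kWh or passenger-km
    unit: str
    factor_kg_per_unit: float  # emission factor in kg CO2e per unit (placeholder values)


def emissions_by_scope(activities):
    """Activity data x emission factor, summed per scope, in tonnes CO2e."""
    totals = defaultdict(float)
    for a in activities:
        totals[a.scope] += a.quantity * a.factor_kg_per_unit / 1000
    return dict(totals)


# Placeholder factors for illustration only; real inventories use published factor sets.
inventory = [
    Activity("natural gas boilers", 1, 10_000, "kWh", 0.18),
    Activity("purchased electricity", 2, 50_000, "kWh", 0.35),
    Activity("business air travel", 3, 20_000, "passenger-km", 0.15),
]
print(emissions_by_scope(inventory))
```

Most of the automation effort goes into gathering the `quantity` values from bills, travel records, and procurement systems. The arithmetic itself is simple.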

Real-time dashboards track sustainability metrics alongside traditional business KPIs, making environmental performance visible to decision-makers. This integration helps sustainability considerations influence operational decisions rather than remaining siloed in dedicated departments.

Open data initiatives play crucial roles in climate action. According to the World Resources Institute, shared data and information are fundamental to mainstreaming climate responses across government and society. Open data publication enables civil society scrutiny while allowing developers to create tools that broaden impact and engage new audiences.

| Measurement Category | Digital Tools | Key Metrics | Reporting Frequency |
|---|---|---|---|
| Carbon Emissions | Automated accounting platforms | Scope 1, 2, 3 emissions (tCO2e) | Monthly/Quarterly |
| Energy Consumption | IoT sensors, building management systems | kWh total, kWh per unit output | Real-time/Daily |
| Water Usage | Smart meters, flow sensors | Gallons total, water intensity ratios | Daily/Weekly |
| Waste Generation | Waste tracking software, weighing systems | Total waste, diversion rate, recycling % | Weekly/Monthly |
| Supply Chain Impact | Blockchain platforms, supplier portals | Supplier emissions, certifications | Quarterly/Annual |
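
Several of the metrics in the table are simple ratios. The Python sketch below computes waste diversion rate and resource intensity. It assumes that only recycling and composting count as diverted. Frameworks differ on how they treat energy-from-waste, so check whichever one you report against. The plant figures are hypothetical.

```python
def diversion_rate_pct(recycled_t, composted_t, landfilled_t, incinerated_t=0.0):
    """Percentage of total waste diverted from disposal. Counts only recycling and
    composting as diverted; treatment of energy-from-waste varies by framework."""
    total = recycled_t + composted_t + landfilled_t + incinerated_t
    if total <= 0:
        raise ValueError("no waste recorded")
    return 100 * (recycled_t + composted_t) / total


def intensity(resource_total, units_produced):
    """Resource use per unit of output, e.g. kWh per unit or gallons per unit."""
    if units_produced <= 0:
        raise ValueError("output must be positive")
    return resource_total / units_produced


# Hypothetical monthly figures for one plant.
print(diversion_rate_pct(recycled_t=40, composted_t=10, landfilled_t=45, incinerated_t=5))
print(intensity(120_000, 8_000))   # kWh per unit of output
print(intensity(300_000, 8_000))   # gallons per unit of output
```

Intensity ratios let performance be compared across months when production volumes change, which absolute totals cannot do.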

Overcoming Implementation Challenges

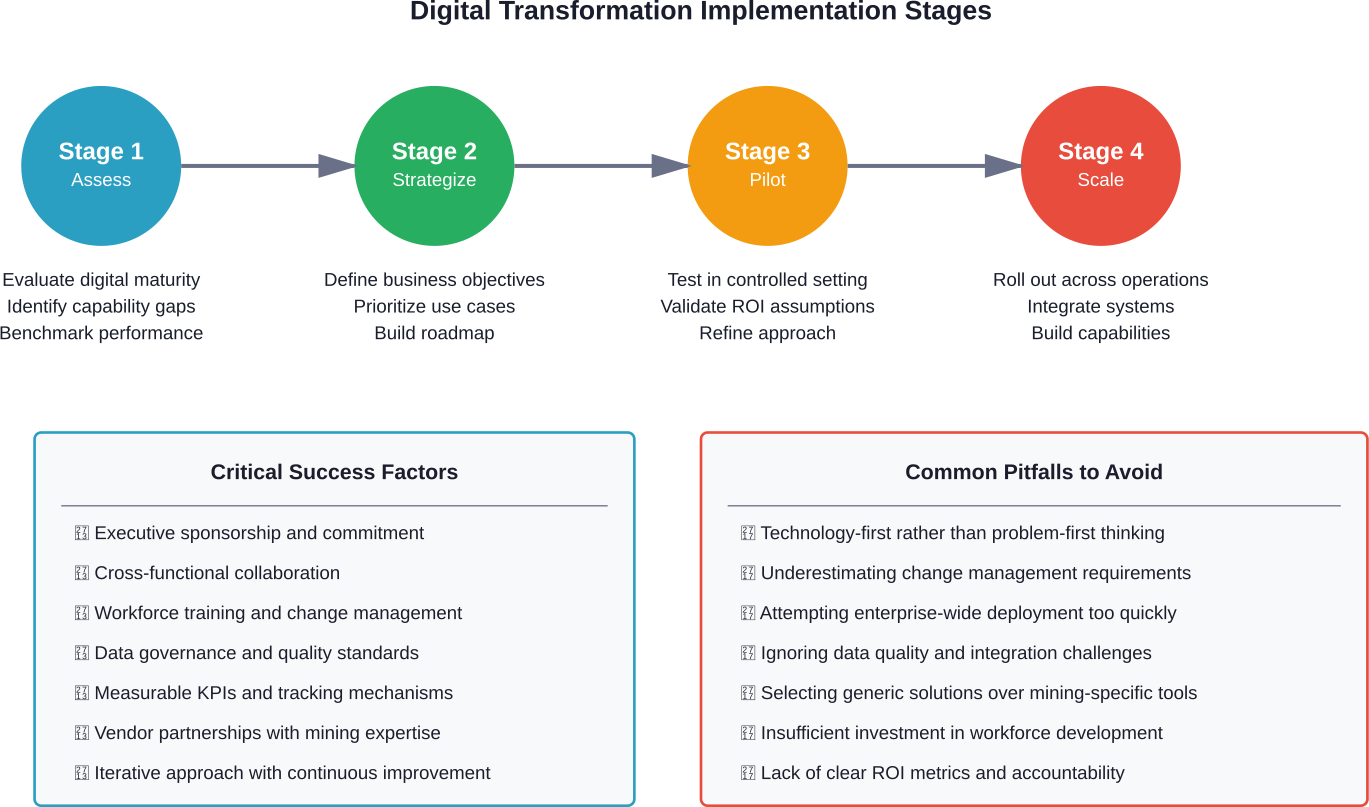

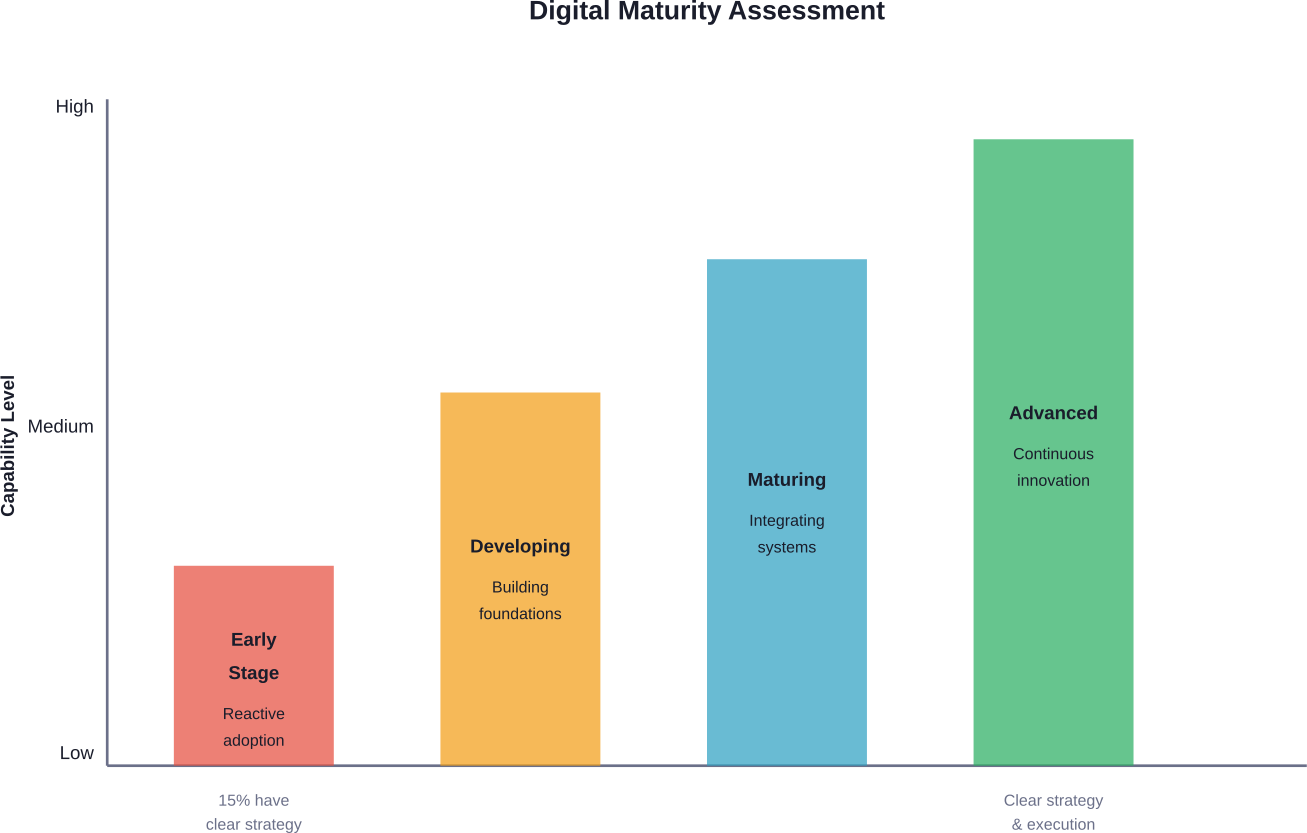

Digital transformation for sustainability sounds compelling in theory. Implementation brings real challenges that organizations must navigate.

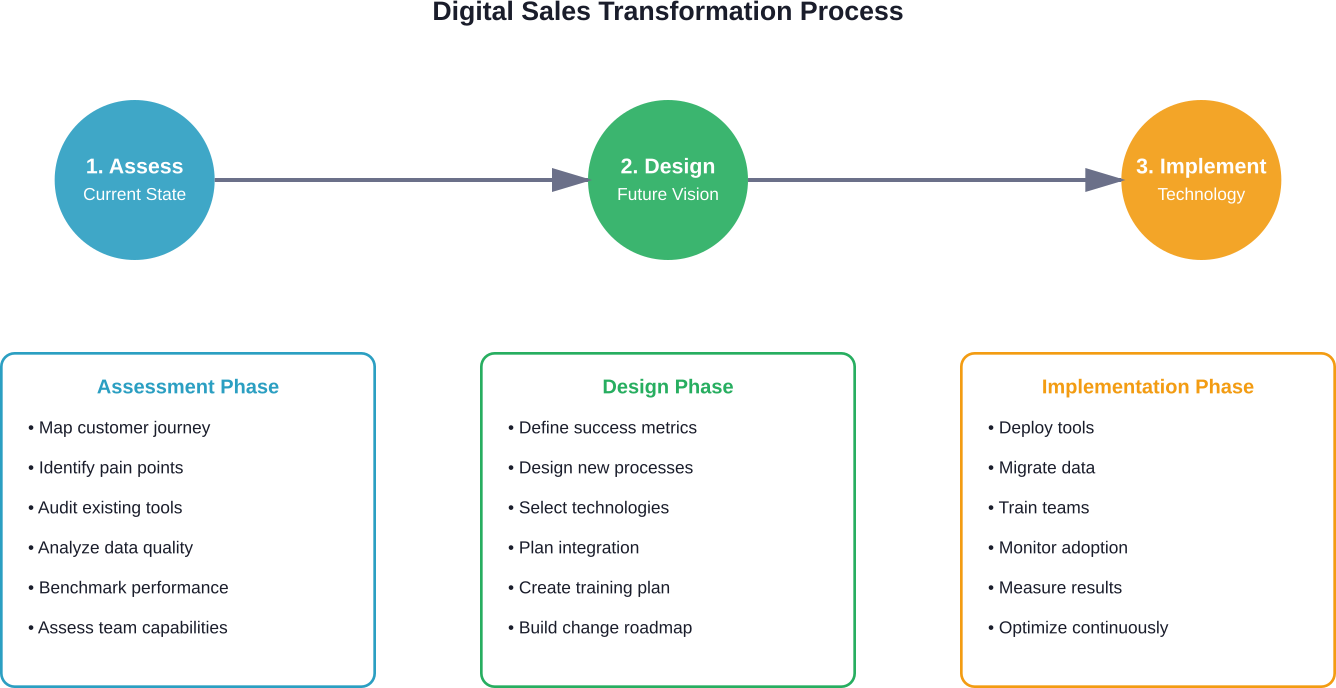

Data Quality and Integration

Sustainability initiatives often require integrating data from disparate sources never designed to work together. Legacy manufacturing equipment, utility billing systems, transportation management platforms, and procurement databases all store relevant information in incompatible formats.

Solving this requires investment in data infrastructure (APIs, data lakes, integration platforms) before analytics can deliver insights. Organizations often underestimate both the technical complexity and the organizational change management required to establish quality data flows.

Skills and Capabilities

Effective sustainable digital transformation requires hybrid expertise: professionals who understand both technology and environmental science. These individuals remain scarce.

Building internal capabilities through training takes time. Partnering with consultants provides faster starts but risks leaving knowledge gaps when engagements end. Most organizations need balanced approaches combining external expertise for initial implementations with deliberate internal skill development.

Investment Justification

Sustainability investments face scrutiny around financial returns. While some initiatives deliver clear cost savings—energy efficiency, waste reduction—others generate primarily environmental and reputational benefits.

Framing matters enormously. Investments positioned purely as sustainability spending face tougher approval than those highlighting operational resilience, regulatory compliance, customer requirements, and competitive positioning alongside environmental benefits.

Technology Selection and Vendor Lock-in

The sustainability technology landscape evolves rapidly. Solutions that seem cutting-edge today may become obsolete quickly, and vendor consolidation creates lock-in risks.

Organizations should prioritize platforms with open APIs and standard data formats. Building on proprietary systems creates dependencies that become expensive to unwind as needs evolve or better alternatives emerge.

Emerging Standards and Frameworks

Standardization helps organizations navigate complexity and ensure credibility. Several new frameworks specifically address digital sustainability.

ISO/IEC TS 20125-1:2026 establishes ecopractices for digital services across life cycle stages. This Technical Specification provides guidance on ecodesign principles specifically tailored to information technology services, addressing how digital offerings can minimize environmental impact from conception through retirement.

These standards matter because they create common languages and expectations. Suppliers and customers can align on sustainability requirements without negotiating definitions from scratch. Auditors can assess performance against established criteria rather than subjective claims.

Adoption remains voluntary in most jurisdictions, but regulatory trends suggest mandatory sustainability reporting will expand. Organizations building capabilities now position themselves advantageously for future compliance requirements.

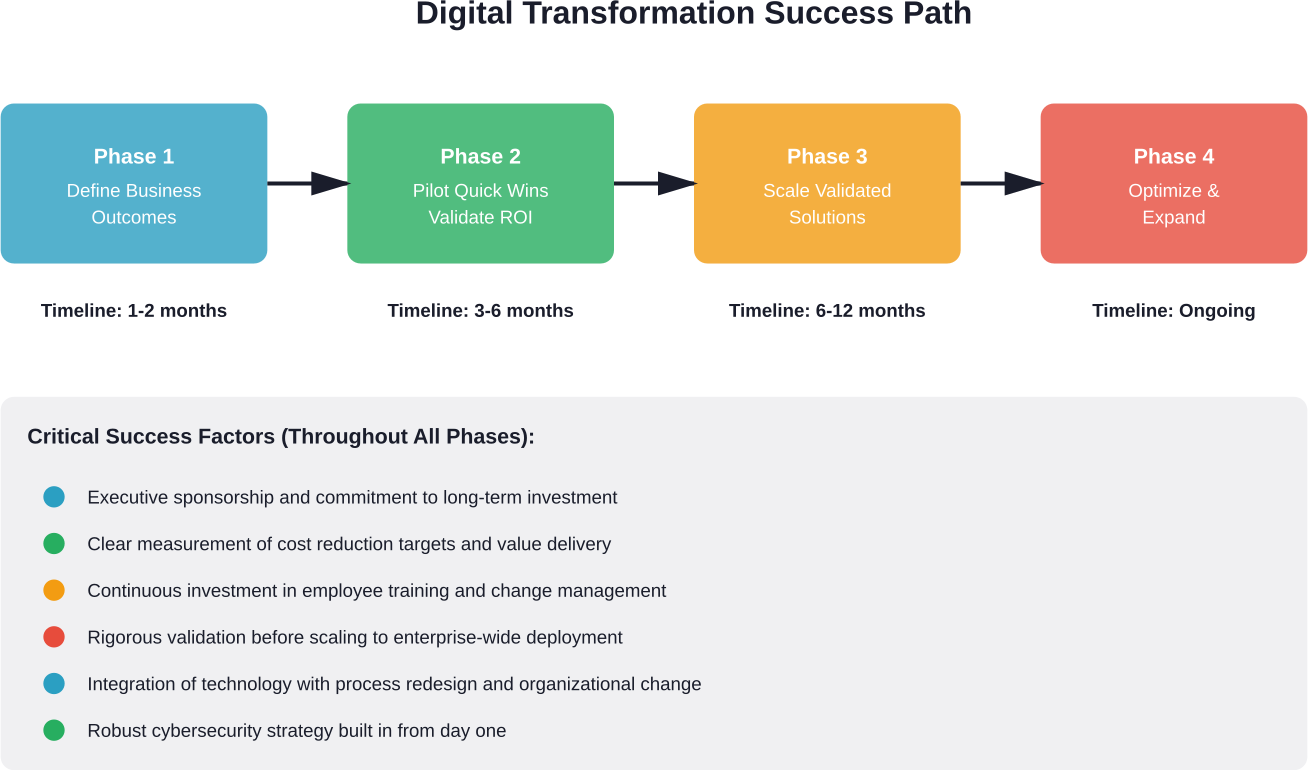

Best Practices for Success

Organizations achieving meaningful results share common approaches that distinguish successful implementations from failed initiatives.

Start with Business-Aligned Use Cases

The most successful digital sustainability initiatives solve real business problems while delivering environmental benefits. Energy optimization reduces costs. Predictive maintenance prevents downtime. Supply chain transparency manages reputational risk.

Starting with these dual-benefit opportunities builds momentum and secures ongoing support. Pure sustainability plays struggle when budget pressures mount unless they're embedded in core operations.

Invest in Data Infrastructure Early

Organizations that defer data infrastructure investments in favor of quick application deployments often regret it. Fragmented point solutions create integration nightmares, and rebuilding foundations while maintaining operational systems proves difficult.

Upfront investment in sensors, data platforms, and integration capabilities enables faster iteration on analytics and applications. The infrastructure becomes an asset supporting multiple use cases over time.

Combine Internal Expertise with External Partnerships

No organization possesses all necessary capabilities internally. Technology vendors, sustainability consultants, industry consortia, and academic researchers all bring valuable perspectives.

The key is maintaining strategic direction internally while leveraging external expertise tactically. Organizations that outsource strategic thinking lose control of their sustainability transformations.

Communicate Progress and Setbacks Transparently

Stakeholders increasingly value honest sustainability reporting over polished marketing. Organizations that acknowledge challenges and share learnings build credibility that purely promotional communications don’t achieve.

Transparency also creates accountability that drives results. Public commitments with regular progress reporting make backsliding more difficult and keep initiatives prioritized during competing pressures.

Build Sustainable Digital Systems With A-listware

Sustainability initiatives often fail because the technology behind them is fragmented or outdated. Digital transformation helps fix that by connecting data, improving operational visibility, and reducing inefficiencies across the organization. A-listware's digital transformation services focus on analyzing existing systems, identifying process gaps, and implementing practical technology improvements that support long-term operational change.

A-listware works with companies that need to modernize infrastructure, digitize workflows, and build software systems that support real business operations. Their teams handle strategy, implementation, and ongoing support, helping organizations move away from legacy tools and adopt scalable digital platforms that improve efficiency and reduce operational waste.

Talk to A-listware about your digital transformation project and see where modernization can start delivering measurable results.

The Role of Digital in Achieving Development Goals

Sustainable development extends beyond environmental concerns to encompass social equity, economic opportunity, and governance. Digital transformation influences all these dimensions.

Access to information empowers marginalized communities to participate in decisions affecting them. The World Resources Institute emphasizes that locally led adaptation—where local actors hold decision-making power—plays essential roles in achieving successful and sustainable adaptation. Digital tools facilitate the participation and knowledge-sharing that make locally led approaches possible.

Economic development increasingly depends on digital infrastructure and literacy. The digital divide represents not just a technology gap but a barrier to economic opportunity that perpetuates inequality. Sustainable digital transformation must address accessibility and inclusion alongside environmental concerns.

Generally speaking, the most impactful initiatives consider environmental, social, and economic sustainability together rather than optimizing one dimension at the expense of others.

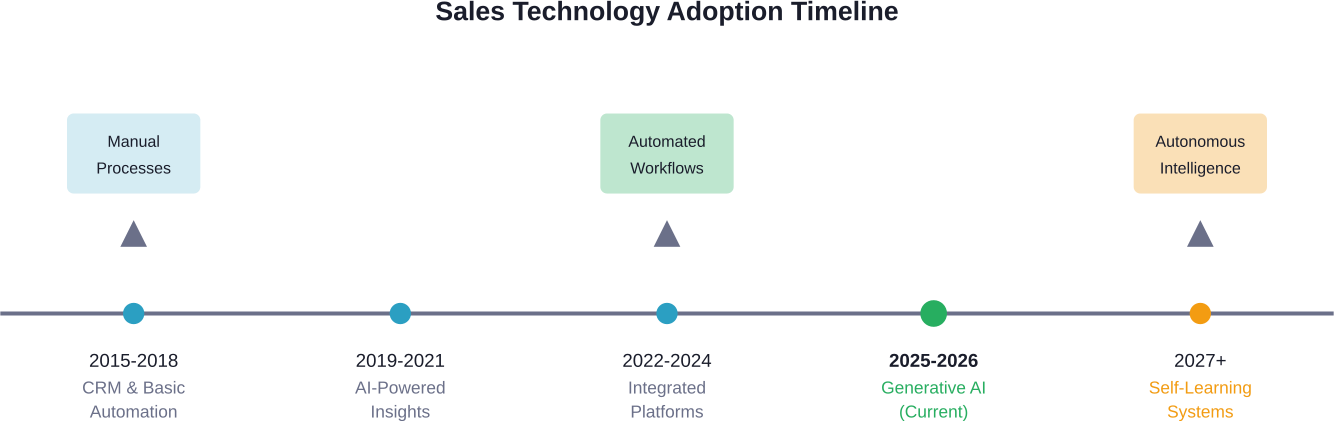

Looking Ahead: Trends Shaping the Future

Several emerging trends will shape how digital transformation and sustainability evolve together over coming years.

Regulatory Pressure and Mandatory Disclosure

Voluntary sustainability reporting is giving way to mandatory disclosure requirements in major markets. These regulations demand verified data, driving adoption of measurement and reporting technologies.

Organizations building robust sustainability data capabilities now will adapt more easily as requirements expand. Those treating reporting as a compliance checkbox exercise will struggle with deeper scrutiny.

AI Ethics and Sustainable Computing

The environmental and social costs of AI are receiving increasing attention. Sustainable computing practices—optimizing algorithms for efficiency, using renewable energy, considering model necessity—will become standard expectations rather than nice-to-haves.

Frameworks for evaluating AI sustainability impacts are emerging. Organizations deploying AI for sustainability purposes must ensure the solutions themselves meet sustainability standards.

Circular Economy Business Models

Digital technologies enable new circular business models where companies maintain ownership of products and materials, providing services rather than selling goods. These models require sophisticated tracking, reverse logistics, and lifecycle management that digital platforms facilitate.

Product-as-a-service offerings align provider incentives with durability and recyclability rather than planned obsolescence. Digital connectivity and IoT sensors make monitoring and maintaining distributed assets economically feasible.

Ecosystem Collaboration Platforms

Complex sustainability challenges exceed individual organizational boundaries. Digital platforms that facilitate collaboration across supply chains, industries, and sectors will become increasingly important.

Shared data standards, interoperable systems, and collaborative governance models enable coordination that fragmented approaches can’t achieve. Success requires willingness to participate in ecosystems rather than controlling proprietary solutions.

| Trend | Timeline | Impact Level | Required Actions |
|---|---|---|---|
| Mandatory ESG disclosure | 2026-2028 | High | Implement verified data collection systems |
| Carbon pricing expansion | 2026-2030 | High | Deploy carbon accounting and optimization tools |
| Sustainable AI standards | 2027-2029 | Medium | Adopt energy-aware computing practices |
| Circular economy models | 2026-2032 | Medium | Develop product tracking and take-back systems |
| Ecosystem platforms | 2028-2035 | Medium | Participate in industry collaboration initiatives |

Frequently Asked Questions

- How does digital transformation reduce carbon emissions?

Digital technologies reduce emissions through multiple mechanisms: optimizing energy consumption with AI and IoT sensors, enabling remote work that reduces commuting, improving logistics efficiency to reduce transportation fuel use, and facilitating renewable energy integration through smart grids. Manufacturing benefits from digital twins that reduce waste and predictive maintenance that prevents resource-intensive failures. The World Economic Forum estimates these technologies could reduce global emissions by up to 20 percent by 2050.

- What are the environmental costs of digital transformation itself?

Digital technologies carry their own environmental footprint. Data centers consume significant electricity, with carbon intensity depending on energy sources. Manufacturing hardware requires raw materials extraction and processing. Commonly cited estimates put the ICT sector's share of global emissions in roughly the same range as aviation's. Electronic waste presents disposal challenges. Organizations must evaluate net environmental impact, ensuring that sustainability solutions deliver benefits exceeding their own costs. Sustainable computing practices, renewable energy commitments, and circular hardware models help address these concerns.

- Which industries benefit most from digital sustainability initiatives?

Energy-intensive industries see particularly strong benefits: manufacturing, transportation, agriculture, and building operations. These sectors consume substantial resources where optimization delivers measurable impact. However, every industry faces sustainability pressures. Retail and consumer goods companies use digital tools for supply chain transparency. Financial services apply them to ESG risk assessment. Healthcare leverages telehealth to reduce facility energy use. The specific applications vary, but opportunities exist across all sectors.

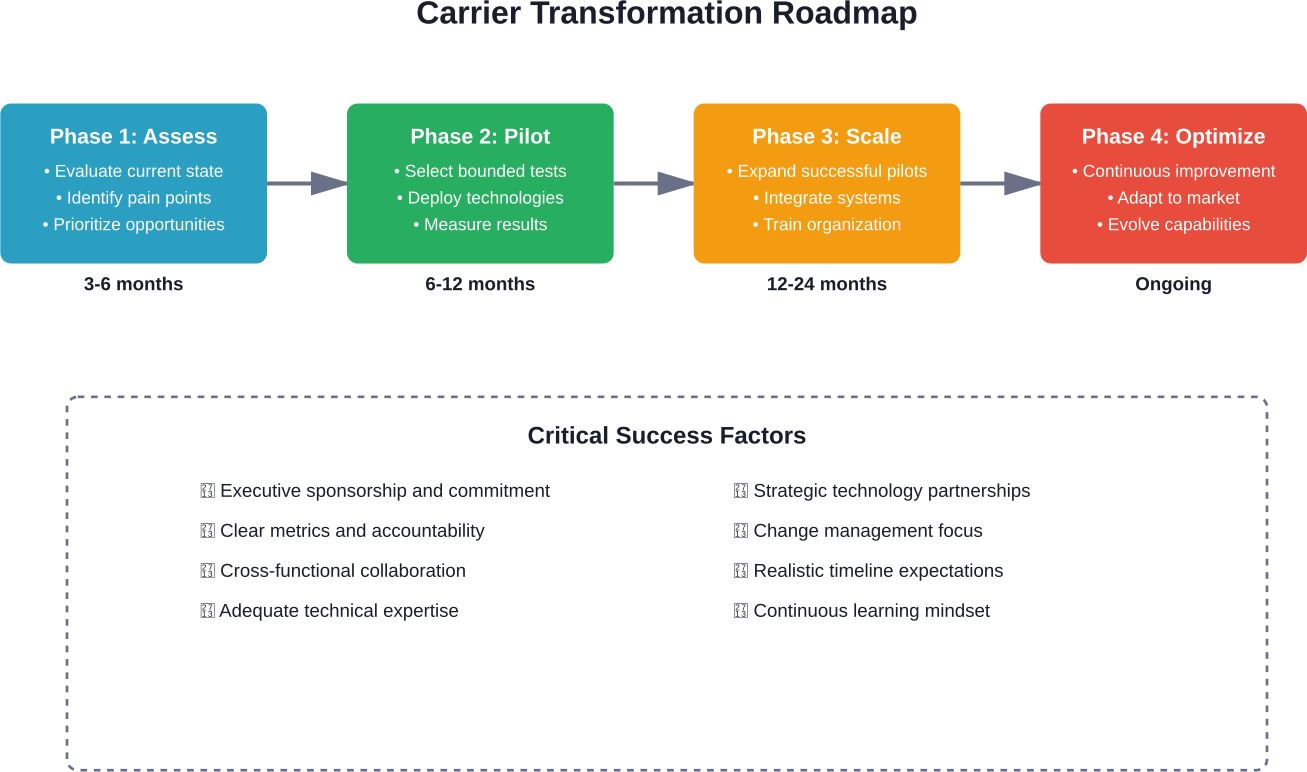

- How long does implementing digital sustainability transformation take?

Timeline depends on scope and starting point. Initial assessments and pilot projects typically require 6-12 months. Building data infrastructure and capabilities extends 12-24 months. Enterprise-wide transformation unfolds over 3-5 years. Organizations should expect phased implementation rather than instant results. Quick wins in energy management or waste reduction can deliver value within months, while comprehensive supply chain transparency or circular business models require multi-year commitments. Starting with focused initiatives that expand over time proves more successful than attempting everything simultaneously.

- What skills do teams need for sustainable digital transformation?

Success requires hybrid capabilities spanning technology and sustainability domains: data scientists who understand environmental metrics, sustainability professionals comfortable with digital tools, and business leaders who integrate both perspectives into strategy. Specific technical skills include IoT deployment, data analytics, carbon accounting software, and integration platforms. Many organizations struggle to find individuals with complete skill sets, making training programs and cross-functional collaboration essential. Partnerships with specialized consultants and technology vendors can supplement internal capabilities during skill development.

- How do organizations measure ROI on sustainability technology investments?

Measuring return requires broader frameworks than traditional financial metrics alone. Direct cost savings from reduced energy, materials, and waste provide quantifiable returns. Risk mitigation value comes from regulatory compliance, supply chain resilience, and reputation protection. Revenue opportunities emerge from sustainable product differentiation and new market access. Employee attraction and retention benefits have measurable financial impacts. Leading organizations use balanced scorecards incorporating environmental KPIs alongside financial metrics, recognizing that some sustainability investments generate strategic value not captured in short-term ROI calculations.

- What standards should organizations follow for sustainability reporting?

Multiple frameworks guide sustainability disclosure. ISO 14019-4:2026 addresses validation and verification of sustainability information. The GHG Protocol provides carbon accounting standards widely used for emissions reporting. TCFD recommendations structure climate-related financial disclosures. SASB standards focus on financially material sustainability topics by industry. Organizations increasingly adopt multiple frameworks as stakeholders reference different standards. The ISO/IEC TS 20125-1:2026 Technical Specification specifically addresses ecodesign for digital services. Regulatory requirements in specific jurisdictions may mandate particular frameworks, making compliance landscape assessment important.

Conclusion

Digital transformation and sustainability aren’t separate initiatives competing for resources and attention. They’re complementary forces that organizations must integrate to remain competitive and responsible.

The technologies enabling digital transformation—AI, IoT, blockchain, cloud computing—provide the capabilities needed to measure, manage, and reduce environmental impact at scales previously impossible. Meanwhile, sustainability imperatives drive innovation in digital solutions, pushing development of energy-efficient computing, transparent supply chains, and circular business models.

Organizations that treat these as unified strategies position themselves for long-term success. Those that pursue digital transformation without sustainability considerations accumulate environmental debt and regulatory risk. Those that pursue sustainability without digital enablement lack the data, automation, and optimization capabilities that make ambitious targets achievable.

The path forward requires honest assessment of current capabilities, strategic investment in data infrastructure, focused pilots that deliver business and environmental value, and sustained commitment through inevitable challenges. Standards like ISO 14019-4:2026 and ISO/IEC TS 20125-1:2026 provide frameworks for credible implementation and reporting.

Start by identifying where digital technologies can solve real business problems while advancing sustainability goals. Build the data foundation that enables measurement and optimization. Partner strategically to access capabilities beyond internal expertise. Communicate progress transparently to build stakeholder trust.

The convergence of digital transformation and sustainability represents one of the defining business challenges and opportunities of this decade. Organizations that act decisively will shape their industries’ responses while building resilient, responsible operations positioned for long-term success.

Ready to begin your sustainable digital transformation journey? Start with a baseline assessment of your current environmental impact and identify the highest-value opportunities where digital technologies can drive measurable improvement.