Quick Summary: Creating an AI agent involves defining its purpose and tasks, selecting an appropriate framework (like LangChain, OpenAI’s AgentKit, or no-code platforms like n8n), connecting it to relevant tools and data sources, and iteratively testing its performance. According to Anthropic’s engineering team, successful agents use simple, composable patterns rather than complex frameworks, and OpenAI’s practical guide from 2026 stresses clear orchestration and robust guardrails.

AI agents have moved from experimental prototypes to production systems transforming how organizations operate. But here’s the thing—most teams approaching agent development for the first time struggle with where to begin.

The landscape shifted dramatically in late 2024 and early 2025. According to Anthropic’s engineering team, the most successful agent implementations aren’t using complex frameworks or specialized libraries. Instead, they’re built with simple, composable patterns that prioritize control and reliability over automation.

This guide walks through the practical process of creating an AI agent, from initial concept to deployment, based on guidance published by OpenAI, Anthropic, and LangChain between late 2024 and 2026.

Understanding What AI Agents Actually Are

Before diving into creation steps, clarity on definitions matters. OpenAI defines agents as “systems that intelligently accomplish tasks—from simple goals to complex, open-ended workflows.”

The key distinction? Agents differ from standard LLM applications through their ability to make sequential decisions, use tools, and maintain context across multiple steps.

According to research published on arXiv in January 2026 (paper 2601.16648), effective autonomous agents require a cognitive framework inspired by human decision-making processes. This includes perception, reasoning, planning, and action execution as distinct components.

Agents vs. Workflows: Where Does Your Use Case Fit?

LangChain’s framework documentation from April 2025 introduces a useful spectrum. On one end sit deterministic workflows where every step is predefined. On the other end live fully autonomous agents making independent decisions at each stage.

Most production systems fall somewhere in between. Real talk: fully autonomous agents sound exciting but introduce reliability challenges that many teams aren’t prepared to handle.

| Characteristic | Workflow | Agent |
|---|---|---|
| Decision-making | Predetermined sequence | Dynamic, context-driven |
| Predictability | High | Variable |
| Tool use | Fixed integration points | Runtime tool selection |
| Error handling | Explicit paths defined | Recovery strategies needed |
| Best for | Defined processes | Open-ended tasks |
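
To make the two ends of the spectrum concrete, here is a minimal Python sketch. The tool functions, the `choose_tool` rule, and the sample tickets are all hypothetical; in a real agent the next-step decision would come from an LLM rather than a keyword check.

```python
from typing import Callable, Dict, List

# Hypothetical tools: plain functions that take and return text.
def classify(ticket: str) -> str:
    return "refund" if "refund" in ticket.lower() else "general"

def lookup_order(ticket: str) -> str:
    return "order 1001: shipped"

def draft_reply(ticket: str) -> str:
    return f"Reply drafted for: {ticket}"

def run_workflow(ticket: str) -> List[str]:
    """Workflow: the same steps run in the same order every time."""
    return [classify(ticket), lookup_order(ticket), draft_reply(ticket)]

TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "draft_reply": draft_reply,
}

def choose_tool(ticket: str, history: List[str]) -> str:
    """Stand-in for an LLM decision: pick the next tool from context."""
    if "order" in ticket.lower() and not history:
        return "lookup_order"
    return "draft_reply"

def run_agent(ticket: str, max_steps: int = 3) -> List[str]:
    """Agent: the next tool is selected at runtime, one step at a time."""
    history: List[str] = []
    for _ in range(max_steps):
        name = choose_tool(ticket, history)
        history.append(name)
        TOOLS[name](ticket)
        if name == "draft_reply":  # drafting a reply ends the task
            break
    return history
```

The workflow always makes three calls; the agent's path depends on the ticket, which is exactly where the variable predictability in the table comes from.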

Step 1: Define Agent Purpose and Scope

OpenAI’s guide from March 2026 emphasizes starting with a clear, realistic task definition. Not an aspirational vision of what agents might someday do—what specific problem needs solving right now?

According to LangChain’s blog (published July 10, 2025), teams should build an MVP first. The team illustrated this with an email agent example. They didn’t start with “automate all email.” They defined: “Draft responses to customer inquiries about order status using our shipping database.”

Questions to Answer Before Building

- What specific task will the agent handle?

- Who are the end users?

- What data sources must it access?

- What actions can it take?

- What are the failure modes, and how critical are they?
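
One lightweight way to keep these questions honest is to write the answers down as data before any agent code exists. The sketch below is only an illustration: the `AgentSpec` class and its field names are hypothetical, and the sample values borrow the email example above.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class AgentSpec:
    """One field per scoping question (hypothetical structure)."""
    task: str
    end_users: str
    data_sources: List[str] = field(default_factory=list)
    allowed_actions: List[str] = field(default_factory=list)
    failure_modes: List[str] = field(default_factory=list)

    def missing_answers(self) -> List[str]:
        """Return the names of questions that are still unanswered."""
        return [name for name, value in vars(self).items() if not value]

spec = AgentSpec(
    task="Draft responses to customer inquiries about order status",
    end_users="Support team",
    data_sources=["shipping database"],
    allowed_actions=["draft_reply"],
)
print(spec.missing_answers())  # failure modes have not been written down yet
```

A spec with unanswered fields is a reasonable signal that the scope is not ready for implementation.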

According to MIT Press research (published January 30, 2026), enterprises implementing agent-centric architectures see productivity gains of 2-10x, and the ones that capture material gains start with narrow, well-defined use cases. One global industrial firm cut audit reporting time by 92% by scoping an agent to specific document analysis workflows.

The short answer? Start small. Expand once the foundation proves reliable.

Step 2: Choose Your Development Approach

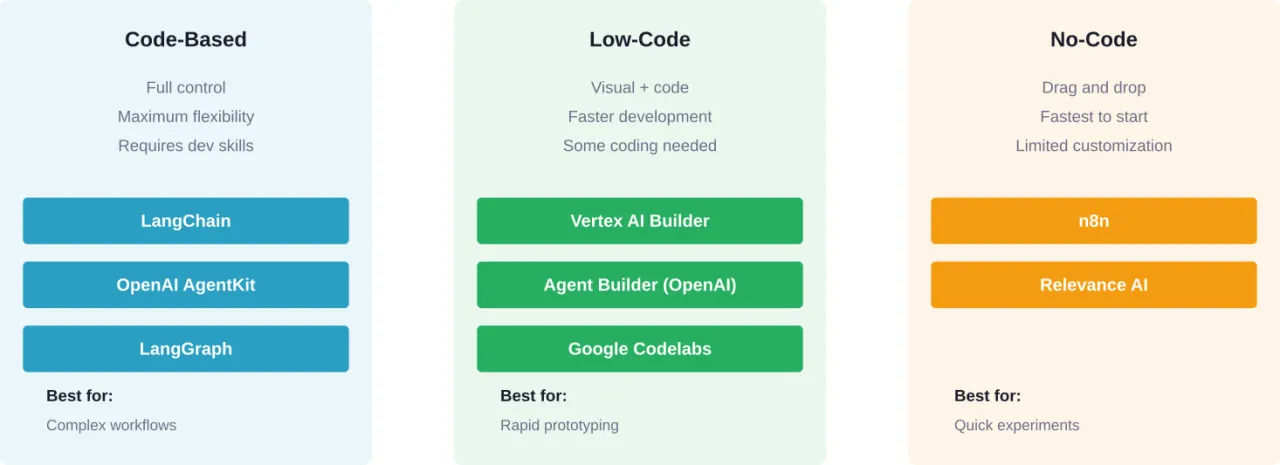

Three primary paths exist for building agents in 2026: code-based frameworks, low-code platforms, and no-code tools.

Code-Based Frameworks: Maximum Control

LangChain remains the most widely adopted open-source framework for agent development. According to LangChain’s documentation, the framework provides pre-built agent architectures with 1000+ integrations for models and tools.

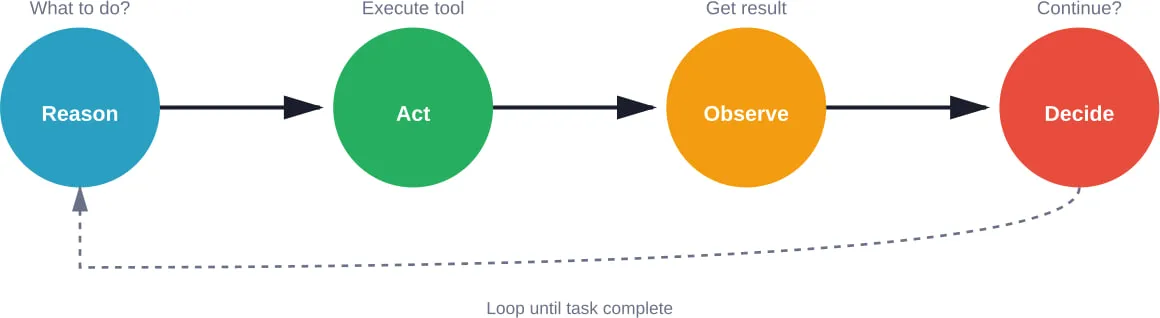

The framework’s create_agent function implements a proven ReAct (Reasoning + Acting) pattern on LangGraph’s durable runtime. This pattern has agents reason about what to do, take an action, observe the result, and repeat.

OpenAI’s AgentKit, described in its API documentation, offers a modular toolkit for building, deploying, and optimizing agents. It includes Agent Builder (a visual canvas) and ChatKit for embedding workflows.

No-Code Platforms: Speed Over Flexibility

For teams without dedicated engineering resources, no-code platforms offer a faster path to basic agents. n8n.io enables agent creation through visual workflow builders with a free tier available and paid plans starting at $20/month.

There’s a catch, though. No-code tools excel at simple automation workflows. They struggle with complex decision trees, custom integrations, and sophisticated error handling.

Step 3: Design the Agent Architecture

Agent architecture consists of several core components working together. Understanding these building blocks helps regardless of which framework gets selected.

Core Components Every Agent Needs

Here they are:

- The LLM brain: The language model handling reasoning and decision-making. Model selection matters—OpenAI’s guide emphasizes matching model capabilities to task complexity.

- Tool access: Mechanisms allowing the agent to perform actions beyond text generation. This includes APIs, databases, search engines, or custom functions.

- Memory systems: Context retention across conversation turns or workflow steps. This can be simple (conversation history) or complex (vector databases for semantic search).

- Orchestration logic: The control flow determining how the agent selects and executes tools. Anthropic’s December 2024 research shows successful implementations favor explicit orchestration over full autonomy.

The ReAct Pattern in Practice

The ReAct pattern structures agent behavior into clear phases. First, the agent receives a task. Second, it reasons about what action to take. Third, it executes that action. Fourth, it observes the result. Finally, it decides whether to continue or return a final answer.

This loop continues until the agent determines the task is complete or hits a maximum iteration limit.
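
The loop described above can be sketched in a few lines of framework-free Python. This is not LangChain's implementation: `scripted_model` is a hypothetical stand-in for an LLM call, and `get_order_status` is a made-up tool backed by an in-memory table.

```python
from typing import Callable, Dict, List, Tuple

# A decision is (kind, value, argument); kind is "act" or "final".
Decision = Tuple[str, str, str]
Model = Callable[[str, List[str]], Decision]

def scripted_model(task: str, observations: List[str]) -> Decision:
    """Hypothetical stand-in for an LLM: decides from what it has observed."""
    if not observations:
        return ("act", "get_order_status", "1001")
    return ("final", f"Order 1001 is {observations[-1]}.", "")

def get_order_status(order_id: str) -> str:
    """Hypothetical tool backed by an in-memory table."""
    return {"1001": "shipped"}.get(order_id, "unknown")

def react_loop(
    task: str,
    model: Model,
    tools: Dict[str, Callable[[str], str]],
    max_iterations: int = 5,
) -> str:
    observations: List[str] = []
    for _ in range(max_iterations):
        kind, value, arg = model(task, observations)  # reason
        if kind == "final":
            return value                              # task complete
        observations.append(tools[value](arg))        # act, then observe
    return "Stopped: iteration limit reached."

answer = react_loop(
    "Where is order 1001?",
    scripted_model,
    {"get_order_status": get_order_status},
)
```

Swapping `scripted_model` for a real model call leaves the control flow unchanged, which is the point of keeping the loop explicit.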

Step 4: Connect Tools and Data Sources

An agent without tools can only generate text. Tools transform agents into systems that take action in the world.

According to OpenAI’s practical guide, tool design significantly impacts agent reliability. Well-designed tools have clear descriptions, explicit parameter definitions, and predictable error messages.

Types of Tools Agents Use

API integrations connect agents to external services—payment processors, CRM systems, communication platforms. Database queries let agents retrieve or update structured information. Search capabilities enable agents to find relevant information across large document sets or the web.

Code execution environments allow agents to run Python scripts, perform calculations, or process data. Function calling turns any custom logic into an agent-accessible tool.

Tool Design Best Practices

Keep tool scope narrow. Instead of a single “database_query” tool, create specific tools like “get_customer_by_id” or “list_recent_orders.” This reduces ambiguity and improves reliability.

Write detailed tool descriptions. The agent relies entirely on these descriptions to understand when and how to use each tool. Include examples of appropriate use cases.

Handle errors gracefully. Tools should return structured error messages the agent can understand and potentially recover from. According to Anthropic’s engineering guide, robust error handling separates production agents from prototypes.
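
As a rough illustration of these three practices together, the sketch below defines one narrow tool with a detailed description and a structured error. The `Tool` dataclass, the `CUSTOMERS` table, and the error fields are assumptions made for this example, not any framework's API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

CUSTOMERS = {"c-42": {"id": "c-42", "name": "Ada"}}  # hypothetical sample data

@dataclass
class Tool:
    name: str
    description: str  # the only thing the model sees when choosing a tool
    run: Callable[[str], Dict[str, Any]]

def get_customer_by_id(customer_id: str) -> Dict[str, Any]:
    """Narrow scope: one lookup, one argument, one result shape."""
    customer = CUSTOMERS.get(customer_id)
    if customer is None:
        # Structured error the agent can read and possibly recover from.
        return {
            "ok": False,
            "error": "not_found",
            "hint": "Check the ID format, for example 'c-42'.",
        }
    return {"ok": True, "data": customer}

customer_tool = Tool(
    name="get_customer_by_id",
    description=(
        "Look up one customer by exact ID (for example 'c-42'). "
        "Use when the user supplies a customer ID. "
        "Do not use for searching by name."
    ),
    run=get_customer_by_id,
)
```

Returning a dictionary with an `ok` flag instead of raising keeps the failure inside the conversation, so the agent can retry with a corrected argument.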

Step 5: Implement Context and Memory

Agents need memory to maintain coherence across multi-turn interactions. The memory strategy depends on the use case.

Short-term memory stores conversation history, typically passed to the LLM as part of each prompt. This works for brief interactions but becomes expensive and unwieldy for long sessions.
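
A common way to keep short-term memory affordable is to trim old turns to a token budget. The sketch below assumes a deliberately crude token estimate (one token per word) and a simple message format with `role` and `content` keys; both are illustrative choices, and a real system would use the model's own tokenizer.

```python
from typing import Dict, List

Message = Dict[str, str]

def estimate_tokens(text: str) -> int:
    """Rough assumption: one token per whitespace-separated word."""
    return len(text.split())

def trim_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep system messages plus the newest turns that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(estimate_tokens(m["content"]) for m in system)
    kept: List[Message] = []
    for message in reversed(rest):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return system + list(reversed(kept))  # restore chronological order
```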

Long-term memory requires external storage—often vector databases for semantic retrieval. According to LangChain’s RAG agent tutorial, this pattern combines agent capabilities with retrieval-augmented generation.

The agent can query a knowledge base, retrieve relevant information, and incorporate it into reasoning. This approach scales to large document collections while keeping token usage manageable.
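
The retrieve-then-reason step can be illustrated without any external services. The sketch below substitutes word-count vectors and cosine similarity for a real embedding model and vector database, so treat it as a toy version of the pattern; the documents and function names are made up for the example.

```python
import math
from collections import Counter
from typing import List

DOCS = [
    "Orders ship within two business days",
    "Refunds are processed within five days",
    "Support is available on weekdays",
]

def vectorize(text: str) -> Counter:
    """Toy embedding: lowercase word counts."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, docs: List[str]) -> str:
    """Pass only the retrieved context to the model, not the whole corpus."""
    context = "\n".join(retrieve(query, docs))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

Only the top-ranked passages reach the prompt, which is how this pattern keeps token usage roughly constant as the collection grows.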

Step 6: Set Up Guardrails and Safety Measures

Autonomous systems require constraints. OpenAI’s March 2026 guide emphasizes guardrails as essential, not optional.

| Guardrail Type | Purpose | Implementation |
|---|---|---|
| Input validation | Prevent malicious prompts | Content filtering, prompt injection detection |
| Output filtering | Catch inappropriate responses | PII detection, content policy checks |
| Rate limiting | Control costs and abuse | Request quotas, timeout enforcement |
| Action approval | Human oversight for critical actions | Approval workflows, confidence thresholds |
| Monitoring | Track behavior and performance | Logging, alerting, audit trails |
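
Three of these guardrail types are small enough to sketch directly. Everything below is illustrative: the blocked phrase list is far too naive for real prompt injection detection, the email pattern covers only one kind of PII, and the action names are hypothetical.

```python
import re
from typing import Callable, Set

BLOCKED_PHRASES = ("ignore previous instructions",)  # naive, illustrative only
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
NEEDS_APPROVAL: Set[str] = {"issue_refund", "delete_account"}  # hypothetical

def validate_input(text: str) -> bool:
    """Input validation: reject text containing a blocked phrase."""
    lowered = text.lower()
    return not any(phrase in lowered for phrase in BLOCKED_PHRASES)

def filter_output(text: str) -> str:
    """Output filtering: redact anything that looks like an email address."""
    return EMAIL.sub("[redacted email]", text)

def execute_action(name: str, approve: Callable[[str], bool]) -> str:
    """Action approval: critical actions run only if a human says yes."""
    if name in NEEDS_APPROVAL and not approve(name):
        return f"blocked: {name} requires human approval"
    return f"executed: {name}"
```

The `approve` callback is where a real approval workflow (a ticket, a chat prompt, a dashboard button) would plug in.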

Research from USC’s Institute for Creative Technologies published July 2025 outlines best practices for AI conversational agents in healthcare—principles that apply broadly. These include explicit consent mechanisms, transparent capability communication, and continuous safety monitoring.

The NIST AI Risk Management Framework (AI RMF 1.0), published in January 2023, provides additional guidance for trustworthy AI development. While not agent-specific, its principles around transparency, accountability, and testing remain relevant.

Step 7: Test and Iterate

Agent development is inherently iterative. LangChain’s blog post (published July 10, 2025) pairs its build-an-MVP-first advice with a second half: test the MVP systematically, then improve it.

Creating Test Cases

Start with realistic examples of the task the agent should handle. Include edge cases, error conditions, and ambiguous inputs. According to OpenAI, testing quality and safety requires diverse scenarios beyond the happy path.

Track key metrics: task completion rate, average steps to completion, tool usage patterns, error frequency, and response latency. These indicators reveal whether the agent actually works or just occasionally gets lucky.
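
Most of these metrics fall out of a simple per-run record. The sketch below assumes a hypothetical `RunRecord` shape that a test harness would fill in, and it leaves out tool usage patterns for brevity.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List

@dataclass
class RunRecord:
    """One test run of the agent (hypothetical record shape)."""
    completed: bool
    steps: int
    errors: int
    latency_s: float

def summarize(runs: List[RunRecord]) -> Dict[str, float]:
    """Aggregate run records into headline metrics; expects at least one run."""
    done = [r for r in runs if r.completed]
    return {
        "completion_rate": len(done) / len(runs),
        "avg_steps_to_completion": mean(r.steps for r in done) if done else 0.0,
        "errors_per_run": sum(r.errors for r in runs) / len(runs),
        "avg_latency_s": mean(r.latency_s for r in runs),
    }

runs = [
    RunRecord(completed=True, steps=3, errors=0, latency_s=2.0),
    RunRecord(completed=True, steps=5, errors=1, latency_s=4.0),
    RunRecord(completed=False, steps=10, errors=2, latency_s=6.0),
    RunRecord(completed=True, steps=4, errors=0, latency_s=4.0),
]
report = summarize(runs)
```

Averaging steps only over completed runs keeps a single runaway failure from masking how efficient the successful runs are.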

Common Issues and Solutions

Agents often struggle with tool selection—choosing the wrong tool or failing to recognize when a tool is needed. This usually indicates poor tool descriptions or insufficient examples in prompts.

Infinite loops happen when agents can’t determine task completion. Setting maximum iteration limits prevents runaway execution. Better prompting around success criteria helps agents recognize when to stop.
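
Beyond a hard iteration cap, it can help to stop as soon as the agent repeats the same call. The check below is one possible heuristic, with hypothetical names and thresholds, and is meant to be called once per loop iteration.

```python
from collections import Counter
from typing import List, Tuple

Call = Tuple[str, str]  # (tool name, argument)

def should_stop(
    calls: List[Call], max_iterations: int = 10, max_repeats: int = 3
) -> str:
    """Return a stop reason, or an empty string to keep going."""
    if len(calls) >= max_iterations:
        return "iteration limit reached"
    # Count how often the most recent call has already been made.
    if calls and Counter(calls)[calls[-1]] >= max_repeats:
        return "same call repeated"
    return ""
```

Logging the stop reason alongside the transcript makes it easier to tell a prompt problem (no clear success criteria) from a tool problem (the same failing call retried).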

Context overload occurs when agents receive too much information and lose focus. Improving retrieval relevance or implementing more selective context passing addresses this.

Step 8: Deploy and Monitor

Moving from prototype to production requires infrastructure decisions. Where will the agent run? How will users access it? What monitoring and logging systems are needed?

OpenAI’s Agent Builder allows embedding workflows via ChatKit or downloading SDK code for self-hosting. LangChain’s LangSmith provides tracing and monitoring for agents in production. According to their documentation, setting environment variables enables trace logging for debugging and optimization.

Production Considerations

Latency matters for user-facing agents. Multi-step agent workflows can take seconds or minutes depending on complexity. Setting clear user expectations about response time prevents frustration.

Cost management becomes critical at scale. Each agent invocation involves multiple LLM calls, tool executions, and data retrievals. Monitoring usage patterns and implementing caching strategies helps control expenses.
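
Two small building blocks cover much of this: caching repeated tool calls and estimating spend from token counts. In the sketch below, `cached_lookup` is a hypothetical tool, and the per-1,000-token prices are parameters the caller must supply from the provider's current price list; no real prices are assumed.

```python
from functools import lru_cache

CALLS = {"count": 0}  # counts real (uncached) executions

@lru_cache(maxsize=256)
def cached_lookup(query: str) -> str:
    """Hypothetical expensive tool; identical queries are served from cache."""
    CALLS["count"] += 1
    return f"result for {query}"

def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    price_in_per_1k: float,
    price_out_per_1k: float,
) -> float:
    """Estimate the cost of one LLM call from token counts and supplied prices."""
    return (
        input_tokens / 1000 * price_in_per_1k
        + output_tokens / 1000 * price_out_per_1k
    )
```

Caching only makes sense for tools whose results stay valid for a while; a live order-status lookup, for instance, would need a short expiry rather than an unbounded cache.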

Versioning and updates require planning. Agents integrate multiple components—models, tools, prompts, and orchestration logic. Changes to any component can affect behavior. Maintaining version control and testing updates before deployment prevents production surprises.
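
One simple way to notice that "any component" has changed is to fingerprint the whole agent configuration and log that fingerprint with every run. The configuration keys below are hypothetical; the hashing approach itself uses only the standard library.

```python
import hashlib
import json
from typing import Any, Dict

def config_fingerprint(config: Dict[str, Any]) -> str:
    """Short, stable hash of the agent's model, prompt, and tool settings."""
    # Sorted keys and fixed separators make the JSON canonical,
    # so key order never changes the hash.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

v1 = {"model": "example-model", "prompt": "Be concise.", "tools": ["lookup"]}
v2 = {"model": "example-model", "prompt": "Be thorough.", "tools": ["lookup"]}
changed = config_fingerprint(v1) != config_fingerprint(v2)
```

When a regression shows up in production, comparing fingerprints between good and bad runs narrows the search to the configuration that actually changed.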

Advanced Patterns: Multi-Agent Systems

Single agents handle discrete tasks. But complex workflows often benefit from multiple specialized agents collaborating.

The Agent² framework, published on arXiv, takes an agent-generates-agent approach in which LLMs autonomously design reinforcement learning agents. This meta-level automation shows promise for reducing the expertise required for agent development.

Multi-agent patterns include hierarchical structures where a coordinator agent delegates tasks to specialist agents, and peer collaboration where agents with different capabilities work together on shared goals.
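
The hierarchical pattern can be sketched with plain functions standing in for specialist agents. Both specialists and the keyword-based `route` method are hypothetical placeholders; in practice each would wrap its own model, prompt, and tools, and routing would usually be an LLM decision.

```python
from typing import Callable, Dict, List

Specialist = Callable[[str], str]

def research_agent(task: str) -> str:
    """Hypothetical specialist that gathers information."""
    return f"[research] notes on {task}"

def writing_agent(task: str) -> str:
    """Hypothetical specialist that produces text."""
    return f"[writing] draft of {task}"

class Coordinator:
    """Delegates each task to one specialist and collects the results."""

    def __init__(self, specialists: Dict[str, Specialist]) -> None:
        self.specialists = specialists

    def route(self, task: str) -> str:
        """Stand-in for an LLM routing decision: a simple keyword rule."""
        if task.lower().startswith(("write", "draft")):
            return "writing"
        return "research"

    def run(self, tasks: List[str]) -> List[str]:
        return [self.specialists[self.route(task)](task) for task in tasks]

coordinator = Coordinator({"research": research_agent, "writing": writing_agent})
results = coordinator.run(["Find competitor pricing", "Draft outreach email"])
```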

OpenAI’s practical guide covers multi-agent orchestration, noting that coordination overhead increases system complexity. Teams should validate that multiple agents actually provide value over a single well-designed agent.

Real-World Applications and Results

According to MIT Press research (published January 30, 2026), enterprises implementing agent-centric architectures see productivity gains of 2-10x, but only when moving beyond superficial AI adoption.

McKinsey’s Global Survey on AI shows that while 78% of enterprises report using generative AI in at least one function, more than 80% report no material contribution to earnings. The difference lies in implementation depth.

One B2B sales organization cited in Harvard Data Science Review research automated prospecting and initial outreach using specialized agents, freeing sales teams to focus on relationship building and deal closing.

Common Mistakes to Avoid

Starting with fully autonomous agents before mastering structured workflows leads to unreliable systems. Anthropic’s guidance emphasizes building deterministic workflows first, then gradually introducing agentic decision-making where it adds value.

Neglecting error handling creates brittle systems that fail unpredictably. Production agents require comprehensive error detection, logging, and recovery mechanisms.

Over-engineering with complex frameworks when simple patterns would suffice wastes development time. According to Anthropic, the most successful teams use straightforward implementations with clear control flow.

Insufficient testing before deployment results in poor user experiences and potentially dangerous behavior. Systematic testing across diverse scenarios identifies issues before users encounter them.

Frequently Asked Questions

- What programming languages work best for building AI agents?

Python dominates agent development due to extensive library support. LangChain, OpenAI’s SDK, and most agent frameworks provide Python-first APIs. JavaScript/TypeScript work for web-based agents, with LangChain offering JavaScript libraries. For teams without coding expertise, no-code platforms like n8n eliminate language requirements entirely.

- How much does it cost to run an AI agent in production?

Costs vary dramatically based on usage patterns, model selection, and architecture. Each agent invocation involves multiple LLM API calls—costs scale with request volume and token usage. Development frameworks like LangChain are free and open-source, while hosting and API usage generate ongoing expenses. No-code platforms typically charge monthly subscription fees. For accurate estimates, check current pricing from the LLM provider and platform being considered.

- Can AI agents work offline or do they require internet connectivity?

Most agents require internet connectivity to access cloud-based LLMs via APIs. However, agents can be built with locally-run open-source models for offline operation, though this requires significant computational resources and technical setup. Hybrid approaches use local processing for some tasks while connecting to cloud services for others.

- What’s the difference between an AI agent and a chatbot?

Chatbots primarily handle conversation—responding to user messages based on predefined scripts or language model generation. AI agents go beyond conversation to take actions—querying databases, calling APIs, executing multi-step workflows, and making decisions based on observations. Agents use tools and maintain goal-directed behavior across multiple steps. Many conversational interfaces are actually agents underneath, even if users interact through chat.

- How long does it take to build a functional AI agent?

The timeline depends on complexity and approach. Simple automation agents using no-code platforms can be created in hours. Code-based agents handling specific tasks might take days to weeks for development and testing. Complex multi-agent systems with extensive integrations require months. According to OpenAI’s guide, teams should focus on narrow MVPs first—basic functionality implemented quickly, then expanded based on real-world performance.

- What are the biggest risks of deploying AI agents?

Agents might take unintended actions if prompts are ambiguous or tool descriptions unclear. Security vulnerabilities emerge if agents access sensitive data without proper controls. Cost overruns happen when agents make excessive API calls or enter loops. Reliability issues arise from inadequate error handling. User trust erodes if agents behave unpredictably. According to NIST’s AI Risk Management Framework, systematic risk assessment and mitigation strategies address these concerns.

- Do I need machine learning expertise to create an AI agent?

Not necessarily. Modern frameworks abstract away ML complexity—developers work with high-level APIs rather than training models from scratch. Understanding prompt engineering, API integration, and system design matters more than deep ML knowledge. No-code platforms eliminate even these requirements for simple use cases. However, optimizing agent performance, debugging complex behaviors, and implementing custom capabilities benefit from technical depth.

Getting Started With Your First Agent

The path from concept to working agent becomes clearer with structure. Start by defining one specific task the agent should handle. Choose a framework matching technical capabilities—LangChain for developers, no-code platforms for non-technical teams, or hybrid approaches for rapid prototyping.

Build the simplest version that could possibly work. One tool, minimal context, explicit control flow. Test it thoroughly against realistic scenarios. Only after this foundation proves reliable should expansion to additional capabilities begin.

The guidance from OpenAI, Anthropic, and LangChain cited throughout this guide converges on the same point: this incremental approach separates successful agent deployments from abandoned experiments.

The agent ecosystem continues evolving rapidly. New frameworks emerge, existing tools add capabilities, and best practices solidify through real-world deployments. But the fundamental principles—clear purpose definition, appropriate tool design, systematic testing, and robust guardrails—remain constant.

Organizations capturing value from agents share common patterns: starting narrow, prioritizing reliability over autonomy, and treating agent development as iterative engineering rather than one-time implementation.

Ready to build? The frameworks, documentation, and community resources exist today. The main barrier isn’t technical capability—it’s taking the first concrete step from exploration to implementation.