Summary: Building AI agents means combining large language models with tools, memory, and reasoning capabilities to create systems that can carry out tasks autonomously. Modern frameworks such as the OpenAI Agents SDK, smolagents, and n8n let both developers and non-technical users build working agents, either in code or through visual interfaces. The process requires defining clear goals, choosing appropriate models, configuring tools and guardrails, and then iterating based on real-world performance.

AI agents are among the most practical applications of large language models today. Unlike ordinary chatbots, which simply answer questions, agents can reason, plan, use tools, and carry out complex workflows.

But what does it actually take to build one? Since early 2025 the landscape has evolved quickly, with new frameworks and architectural patterns making agent development far more accessible.

This guide covers the fundamentals, from understanding what makes something an agent to deploying production systems with proper guardrails.

Understanding AI agent architecture

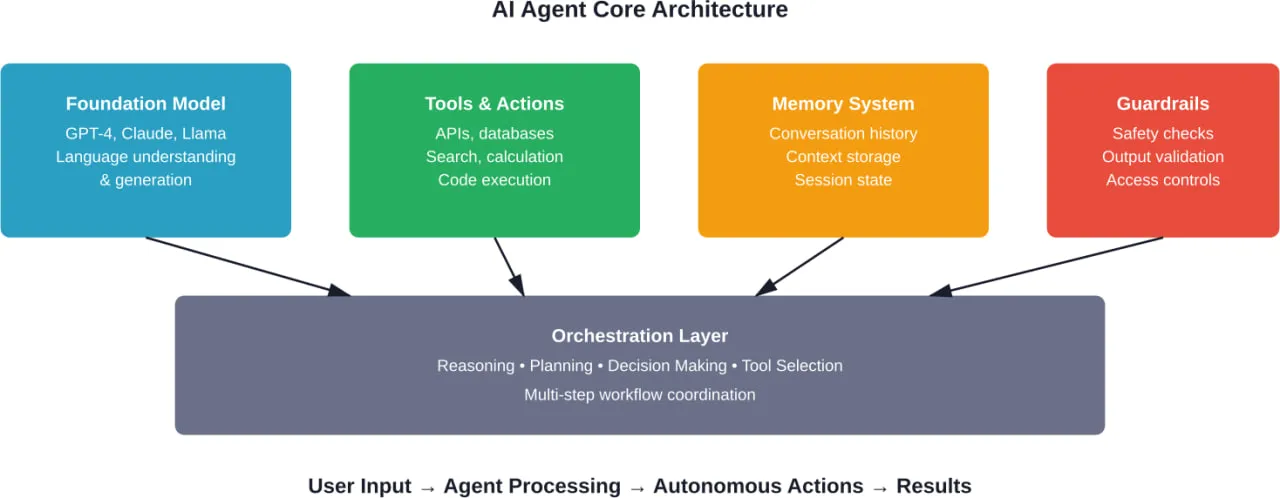

According to a recent study published on arXiv, AI agents combine foundation models with four core capabilities: reasoning, planning, memory, and tool use. Together, these let a system bridge natural-language intent and real-world computation.

However, not every AI system qualifies as an agent. OpenAI defines agents as systems that act on behalf of users and have three components: instructions (what the agent should do), guardrails (what it should not do), and tools (what it can do).

If a system only answers questions, it is not really an agent. The distinction matters because agents require fundamentally different design patterns than conversational interfaces.
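A minimal sketch of the three components as plain data may help make the definition concrete. AgentSpec, lookup_order and the keyword-based guardrail are hypothetical illustrations, not part of any SDK, and a substring check is far weaker than a real guardrail.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentSpec:
    """Hypothetical container for the three components:
    instructions (what to do), guardrails (what not to do), tools (what it can do)."""
    instructions: str
    guardrails: list[Callable[[str], bool]] = field(default_factory=list)
    tools: dict[str, Callable[..., object]] = field(default_factory=dict)

    def is_agent(self) -> bool:
        # Without tools the system can only answer; it cannot act.
        return bool(self.tools)

    def passes_guardrails(self, user_input: str) -> bool:
        # Every guardrail must approve the input before the agent proceeds.
        return all(check(user_input) for check in self.guardrails)


def lookup_order(order_id: str) -> str:
    # Hypothetical tool: a real version would query an order database.
    return f"Order {order_id}: shipped"


support_agent = AgentSpec(
    instructions="Answer order-status questions for customers.",
    # Naive illustrative guardrail: block one well-known injection phrase.
    guardrails=[lambda text: "ignore previous instructions" not in text.lower()],
    tools={"lookup_order": lookup_order},
)
faq_bot = AgentSpec(instructions="Answer general questions.")
```

Under this definition, faq_bot is a chatbot rather than an agent: it has instructions but no tools to act with.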

The orchestration problem

The hardest part is not the individual components but how they work together. Agents have to decide when to use tools, how to break complex requests into steps, and when to ask for clarification instead of making assumptions.

Research on AI agent architectures shows that modern systems handle this with a so-called orchestration layer. It coordinates reasoning patterns, manages multi-step workflows, and determines tool-selection strategies.

Without proper orchestration, agents either fail to complete tasks or take inappropriate actions. Getting it right is what separates working agents from impressive demos that break in production.
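The decide, act, observe cycle that an orchestration layer coordinates can be sketched as a short loop. This is an illustration under stated assumptions, not how any particular framework implements it: the language model is replaced by scripted_model, a stub that returns either a tool call or a final answer, and get_weather is a hypothetical tool.

```python
from typing import Callable

# Hypothetical model interface: takes the message history and returns either
# {"tool": name, "args": {...}} or {"final": text}. A real agent would call an LLM here.
Model = Callable[[list[dict]], dict]


def run_agent(model: Model, tools: dict[str, Callable[..., str]],
              user_input: str, max_steps: int = 5) -> str:
    """Minimal orchestration loop: decide, act, observe, repeat."""
    history: list[dict] = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        decision = model(history)
        if "final" in decision:
            return decision["final"]
        name = decision["tool"]
        if name not in tools:
            # Unknown tool: report the problem back to the model instead of crashing.
            history.append({"role": "tool", "content": f"error: unknown tool {name}"})
            continue
        result = tools[name](**decision.get("args", {}))
        history.append({"role": "tool", "content": result})
    # Step budget exhausted: stop rather than loop forever.
    return "I could not complete this request; escalating to a human."


def scripted_model(history: list[dict]) -> dict:
    # Stand-in for an LLM: call the weather tool once, then answer with the observation.
    tool_messages = [m for m in history if m["role"] == "tool"]
    if not tool_messages:
        return {"tool": "get_weather", "args": {"city": "Kyiv"}}
    return {"final": f"Here is what I found: {tool_messages[-1]['content']}"}


tools = {"get_weather": lambda city: f"{city}: 18C, cloudy"}
print(run_agent(scripted_model, tools, "What's the weather in Kyiv?"))
# prints "Here is what I found: Kyiv: 18C, cloudy"
```

The max_steps cap and the explicit escalation message are design choices: without them, a model that keeps requesting tools would loop indefinitely.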

Choosing the right framework

The agent framework landscape has matured considerably. Three categories have emerged: enterprise SDKs, lightweight libraries, and no-code platforms.

The OpenAI Agents SDK is a production-ready toolkit with built-in support for multi-agent workflows, streaming, and comprehensive tracing. The framework handles complex orchestration patterns and integrates directly with OpenAI models.

Hugging Face's smolagents takes a minimalist approach, offering core agent capabilities without heavy dependencies. This is especially useful when working with open-source models or custom deployment environments.

For teams without coding resources, platforms such as n8n provide visual workflow builders. Community discussions on the Hugging Face forums show non-technical users successfully building working agents with these tools, albeit with some limits on customization.

| Framework | Best for | Learning curve | Key advantage |
|---|---|---|---|
| OpenAI Agents SDK | Production applications | Moderate | Enterprise features, full tracing |
| smolagents | Custom deployments | Low | Lightweight, model-agnostic |
| n8n | No-code workflows | Very low | Visual interface, prebuilt nodes |
| LangChain | Experimentation | Moderate | Broad integrations |
| Microsoft Agent Builder | Azure ecosystem | Low | Integration with the Microsoft stack |

How to build your first agent: step by step

This is where theory meets practice. The process breaks down into six distinct stages, regardless of which framework you use.

Define clear goals

Vague goals lead to vague results. Agents need specific, measurable goals with clear success criteria.

Instead of "help with customer support," define: "Answer billing questions using the knowledge base, route refund requests to human agents, and provide order status from the database." This specificity shapes every decision that follows.

According to OpenAI's developer documentation, well-defined instructions significantly improve agent reliability. The system has to know what success looks like before it can achieve it.
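One way to make the support-agent goal above explicit is to write the scope down as data, so every request either maps to a defined behavior or is declined. This is a sketch under simplifying assumptions: SCOPE, KEYWORDS and route are hypothetical names, and the keyword matching stands in for a classification step that a real agent would delegate to the model.

```python
# Hypothetical scope for the support agent: each intent maps to exactly one
# handling strategy; anything that matches no intent is out of scope.
SCOPE = {
    "billing_question": "answer_from_knowledge_base",
    "refund_request": "escalate_to_human",
    "order_status": "query_order_database",
}

# Naive keyword classifier for illustration only. Dict order matters:
# refund requests are checked first so "refund this charge" is escalated.
KEYWORDS = {
    "refund_request": ("refund", "money back"),
    "order_status": ("where is my order", "order status", "tracking"),
    "billing_question": ("invoice", "bill", "charge"),
}


def route(request: str) -> str:
    text = request.lower()
    for intent, words in KEYWORDS.items():
        if any(word in text for word in words):
            return SCOPE[intent]
    # Out-of-scope requests are declined explicitly instead of improvised.
    return "decline_out_of_scope"
```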

Model selection and configuration

Not all models handle agent tasks equally well. GPT-4 and Claude 3.5 Sonnet show strong reasoning and tool-use capabilities, while lighter models such as GPT-3.5 struggle with multi-step planning.

Model choice affects latency, cost, and capability. For customer-facing agents where response time matters, faster models with simpler workflows often outperform more capable but slower alternatives.

Testing shows that structured outputs significantly improve reliability. Constraining the model to specific JSON schemas keeps tool calls consistent and reduces parsing errors.
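Many model APIs can enforce a JSON schema on their output directly. Where that is not available, a client-side check still catches malformed tool calls before they reach a real system. The sketch below uses only the standard library; GET_ORDER_SCHEMA and parse_tool_call are hypothetical names, and the schema format (field name mapped to a Python type) is a deliberate simplification of JSON Schema.

```python
import json

# Hypothetical schema for one tool's arguments: field name -> required Python type.
GET_ORDER_SCHEMA = {"order_id": str, "include_items": bool}


def parse_tool_call(raw: str, schema: dict[str, type]) -> dict:
    """Parse the model's JSON output and check it against a simple schema.

    Raises ValueError so the orchestrator can ask the model to retry,
    instead of passing malformed arguments to a real tool."""
    try:
        args = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(args, dict):
        raise ValueError("expected a JSON object")
    missing = schema.keys() - args.keys()
    extra = args.keys() - schema.keys()
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for name, expected in schema.items():
        if not isinstance(args[name], expected):
            raise ValueError(f"{name} should be {expected.__name__}")
    return args
```

Raising ValueError lets the orchestration loop send the error text back to the model and ask for a corrected call.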

Implement tool access

Tools turn agents from chatbots into systems that take action. Each tool needs a clear description, a parameter schema, and error handling.

The OpenAI Realtime API and Assistants API register tools through function definitions, while smolagents primarily uses a CodeAgent approach, in which tools are Python functions called directly in the execution environment. Both approaches require explicit type definitions and validation.
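Frameworks usually build tool definitions from Python functions for you. The standard-library sketch below shows the idea: a parameter schema derived from type hints, a description taken from the docstring, and errors returned to the agent as text instead of crashing the run. register_tool, TOOLS, call_tool and convert_currency are hypothetical names, not the API of smolagents or the OpenAI SDK.

```python
import inspect
from typing import Any, Callable, get_type_hints

# Hypothetical registry: tool name -> function, description and parameter schema.
TOOLS: dict[str, dict[str, Any]] = {}

# Map Python annotations to JSON-Schema type names (other types are not handled here).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def register_tool(func: Callable) -> Callable:
    """Register `func` as a tool, deriving its schema from type hints and its
    description from the docstring."""
    hints = get_type_hints(func)
    hints.pop("return", None)
    properties = {name: {"type": _JSON_TYPES[hint]} for name, hint in hints.items()}
    # Parameters without defaults are required.
    required = [
        name for name, param in inspect.signature(func).parameters.items()
        if param.default is inspect.Parameter.empty
    ]
    TOOLS[func.__name__] = {
        "func": func,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }
    return func


def call_tool(name: str, **kwargs: Any) -> str:
    """Run a registered tool; failures come back as text the agent can react to."""
    if name not in TOOLS:
        return f"error: unknown tool '{name}'"
    try:
        return str(TOOLS[name]["func"](**kwargs))
    except Exception as exc:  # wrong arguments, validation errors, upstream failures
        return f"error: {type(exc).__name__}: {exc}"


@register_tool
def convert_currency(amount: float, rate: float = 1.0) -> float:
    """Convert an amount using a fixed exchange rate."""
    if amount < 0:
        raise ValueError("amount must be non-negative")
    return round(amount * rate, 2)
```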

Real talk: start with two or three tools at most. Large toolsets cause decision paralysis, where agents pick the wrong tools or chain them inefficiently. Expand the toolset only after the core workflows are validated.

Building memory and context systems

Memory separates single-interaction chatbots from agents that retain context across sessions. The OpenAI Cookbook demonstrates session memory patterns that store conversation history and user preferences.

Short-term memory holds recent interactions within the current session. Long-term memory requires database integration to recall information across sessions.

But wait. Unbounded memory creates token-budget problems. Implement selective memory that prioritizes relevant context over the full history. Summarization techniques help compress long interactions into manageable context.
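A minimal sketch of selective memory: recent messages are kept verbatim within a token budget, and older ones are folded into a summary. count_tokens (one token per word) and summarize (a placeholder string) are assumptions standing in for the model's tokenizer and a summarization call; SessionMemory is a hypothetical name.

```python
from collections import deque


def count_tokens(text: str) -> int:
    # Crude approximation (one token per word); real systems use the model's tokenizer.
    return len(text.split())


def summarize(messages: list[str]) -> str:
    # Placeholder summarizer; in practice this would be another model call.
    return f"[summary of {len(messages)} earlier messages]"


class SessionMemory:
    """Keeps recent messages within a token budget and folds older ones into a summary."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.recent: deque[str] = deque()
        self.evicted: list[str] = []

    def add(self, message: str) -> None:
        self.recent.append(message)
        # Evict the oldest messages until the recent window fits the budget,
        # always keeping at least the newest message.
        while len(self.recent) > 1 and sum(map(count_tokens, self.recent)) > self.max_tokens:
            self.evicted.append(self.recent.popleft())

    def context(self) -> list[str]:
        # The summary line itself is not counted against the budget in this sketch.
        prefix = [summarize(self.evicted)] if self.evicted else []
        return prefix + list(self.recent)
```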

Set up guardrails

Guardrails keep agents from taking inappropriate actions. The NIST AI Risk Management Framework emphasizes that AI systems need explicit safety controls, not just capability development.

Input validation catches malicious prompts that try to override instructions. Output validation ensures responses meet safety and quality standards before they reach users.

According to OpenAI's guide to building agents, structured outputs provide one layer of protection by constraining response formats. Additional checks verify that tool calls match permitted actions.
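A sketch of the three layers described above: an input check, an allowlist of permitted tool calls with argument validation, and an output check. The patterns, the tool names and the 100-unit refund cap are hypothetical; keyword lists are easy to evade, so production input guardrails often rely on a classifier model instead.

```python
import re
from typing import Any, Callable

# Hypothetical, deliberately simple prompt-injection patterns.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]


def _valid_refund(args: dict[str, Any]) -> bool:
    amount = args.get("amount")
    # Hypothetical policy: refunds must be positive and at most 100.
    return isinstance(amount, (int, float)) and 0 < amount <= 100


# Allowlist: the only tool calls the agent may make, each with an argument check.
ALLOWED_ACTIONS: dict[str, Callable[[dict[str, Any]], bool]] = {
    "lookup_order": lambda args: isinstance(args.get("order_id"), str),
    "issue_refund": _valid_refund,
}

# Example output rule: never echo anything that looks like a card number.
CARD_NUMBER = re.compile(r"\b(?:\d[ -]?){13,16}\b")


def check_input(text: str) -> bool:
    return not any(pattern.search(text) for pattern in INJECTION_PATTERNS)


def check_tool_call(name: str, args: dict[str, Any]) -> bool:
    validator = ALLOWED_ACTIONS.get(name)
    return validator is not None and validator(args)


def check_output(text: str) -> bool:
    return CARD_NUMBER.search(text) is None
```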

Test extensively

Testing agents differs from testing traditional software. Deterministic inputs do not guarantee deterministic outputs when language models make the decisions.

Build test suites that cover edge cases: ambiguous requests, multi-step workflows, error conditions, and adversarial inputs. Track failure modes and expand test coverage iteratively.

The thing is, agents often fail in unexpected ways. One customer-support agent successfully handled thousands of requests before it tried to issue a refund larger than the value of the customer's order. Edge cases matter.
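The refund anecdote suggests encoding business rules as deterministic checks that run on every action the agent proposes, and exercising them with a table of edge cases. A minimal sketch; Order, validate_refund and the cases are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    total: float
    refunded: float = 0.0


def validate_refund(order: Order, amount: float) -> tuple[bool, str]:
    """Business-rule check applied to every refund the agent proposes,
    independent of what the model considers reasonable."""
    if amount <= 0:
        return False, "refund must be positive"
    if order.refunded + amount > order.total:
        return False, "refund exceeds remaining order value"
    return True, "ok"


# Edge-case table: (order, proposed amount, expected verdict).
CASES = [
    (Order("A1", total=50.0), 50.0, True),                  # full refund
    (Order("A2", total=50.0), 50.01, False),                # just over the total
    (Order("A3", total=50.0, refunded=40.0), 20.0, False),  # second partial refund
    (Order("A4", total=50.0), 0.0, False),                  # zero amount
    (Order("A5", total=50.0), -10.0, False),                # negative amount
]


def run_cases() -> list[str]:
    """Return a description of every case whose verdict differs from the expectation."""
    failures = []
    for order, amount, expected in CASES:
        ok, reason = validate_refund(order, amount)
        if ok != expected:
            failures.append(f"{order.order_id}: got {ok} ({reason})")
    return failures
```

Because validate_refund does not depend on the model, the same table can run in CI on every prompt or model change.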

Need help with an AI agent? Contact A-listware

Most AI-agent guides focus on logic and behavior, but the hardest part is everything around them: setting up services, handling data, and keeping the system running reliably. A-listware builds custom software and provides dedicated engineering teams that handle these concerns, from architecture to deployment and ongoing support.

Once you move beyond the idea stage, the work shifts to building a stable system that can run in production. Instead of splitting the work across different vendors, everything can be done in one place. Talk to A-listware, share your setup, and get a clear picture of how to build a system around your AI agent.

Working with no-code agent builders

No-code platforms significantly lower the barrier to entry. Platforms such as n8n and Vertex AI Agent Builder let you build workflows through visual interfaces.

Community experience shared on platforms such as the Hugging Face forums suggests that non-technical users successfully build working agents with these tools. Such platforms provide prebuilt nodes for common operations: HTTP requests, database queries, and AI model calls.

The limitations become apparent with complex logic. Conditional branching, error handling, and custom tool creation often require scripting even in visual builders. For simple workflows, such as data lookups, basic decision trees, and notification triggers, no-code platforms work well.

When to choose no-code

No-code makes sense for prototyping, internal tools, and teams without engineering resources. It is particularly effective for automating repetitive tasks that follow predictable patterns.

But production-scale applications with complex requirements eventually hit platform limits. Migrating from a no-code prototype to a code-based implementation is common as projects mature.

Implementing multi-agent systems

Single agents handle focused tasks. Complex workflows benefit when several specialized agents coordinate their actions.

The OpenAI Cookbook contains examples of multi-agent collaboration in which different agents take on different responsibilities. One agent might search for information, another analyze the data, and a third generate reports.

Research distinguishing autonomous agents from collaborative systems shows that multi-agent architectures are better at decomposing complex problems. Each agent specializes in its own domain, while an orchestrator coordinates the flow of information.

Don't underestimate coordination overhead. Multi-agent systems require careful handoff protocols, shared context management, and conflict-resolution strategies for when agents produce contradictory results.

| Architecture | Use cases | Complexity | Coordination pattern |
|---|---|---|---|
| Single agent | Focused tasks, simple workflows | Low | N/A |
| Sequential multi-agent | Processing pipelines | Moderate | Linear handoffs |
| Hierarchical multi-agent | Complex workflows | High | Manager-worker model |
| Collaborative multi-agent | Problem solving, analysis | Very high | Peer-to-peer negotiation |
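The sequential pattern from the table above can be sketched with explicit handoffs over a shared context. Each agent here is a plain function standing in for an LLM-backed agent with its own instructions and tools; Context, researcher, analyst, writer and orchestrate are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Context:
    """Shared state passed along explicit handoffs."""
    task: str
    notes: dict[str, str] = field(default_factory=dict)


def researcher(ctx: Context) -> None:
    ctx.notes["sources"] = f"3 documents about {ctx.task}"


def analyst(ctx: Context) -> None:
    # Handoff protocol: refuse to run without the input this agent depends on.
    if "sources" not in ctx.notes:
        raise RuntimeError("analyst needs research results first")
    ctx.notes["analysis"] = f"key trends from {ctx.notes['sources']}"


def writer(ctx: Context) -> None:
    ctx.notes["report"] = f"Report on {ctx.task}: {ctx.notes['analysis']}"


def orchestrate(task: str, pipeline: list[Callable[[Context], None]]) -> Context:
    """Sequential pattern: each agent hands off to the next via the shared context."""
    ctx = Context(task)
    for agent in pipeline:
        agent(ctx)
    return ctx
```

The check in analyst is a minimal handoff protocol: an agent refuses to run when the context it depends on is missing, rather than improvising.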

Deployment and production considerations

Running an agent locally is very different from deploying it to production. Several factors need attention before you release agents to users.

Latency and performance

Multi-step agent workflows accumulate latency. Every tool call, reasoning step, and model interaction adds time. Users notice delays beyond 3 to 5 seconds.

Streaming responses improve perceived performance. The OpenAI SDK supports streaming for both text generation and tool execution, so results can be displayed incrementally.

Caching strategies reduce redundant computation. Frequently requested information can be cached with appropriate eviction policies.
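A small cache for tool results that combines two common eviction policies: time-to-live (entries expire after ttl seconds) and least-recently-used (the oldest entry is dropped once max_size is exceeded). TTLCache is a hypothetical helper; the injectable clock exists only to make the behavior testable.

```python
import time
from collections import OrderedDict
from typing import Callable, Hashable


class TTLCache:
    """Cache for tool results with TTL expiry and LRU eviction."""

    def __init__(self, max_size: int = 128, ttl: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_size, self.ttl, self.clock = max_size, ttl, clock
        # key -> (time stored, value); order tracks recency of use.
        self._data: OrderedDict[Hashable, tuple[float, object]] = OrderedDict()

    def get_or_compute(self, key: Hashable, compute: Callable[[], object]) -> object:
        now = self.clock()
        if key in self._data:
            stored_at, value = self._data[key]
            if now - stored_at < self.ttl:
                self._data.move_to_end(key)  # mark as recently used
                return value
            del self._data[key]  # expired: recompute below
        value = compute()
        self._data[key] = (now, value)
        if len(self._data) > self.max_size:
            self._data.popitem(last=False)  # evict the least recently used entry
        return value
```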

Cost management

Agents consume more tokens than simple chat applications. Reasoning loops, tool descriptions, and conversation history drive up costs quickly.

Track token usage per interaction. Set budget limits per user or per session. As limits approach, degrade gracefully rather than failing hard.
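A sketch of a per-session budget with graceful degradation. TokenBudget and the 80% soft threshold are assumptions; the token counts would typically come from the usage figures your model API reports for each call.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Per-session token budget with a soft limit for graceful degradation."""
    limit: int
    soft_ratio: float = 0.8  # hypothetical threshold at which degradation starts
    used: int = 0

    def record(self, prompt_tokens: int, completion_tokens: int) -> None:
        self.used += prompt_tokens + completion_tokens

    def mode(self) -> str:
        if self.used >= self.limit:
            return "handoff"   # stop calling the model; offer a human or a later retry
        if self.used >= self.soft_ratio * self.limit:
            return "degraded"  # e.g. cheaper model, shorter context, fewer tool calls
        return "normal"
```

What "degraded" means is a product decision: switching to a cheaper model, trimming history, or disabling optional tools are all reasonable options.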

Model choice has a major impact on cost. GPT-4 provides better reasoning but costs significantly more than GPT-3.5. For many workflows, the cheaper model performs adequately.

Monitoring and observability

Production agents need comprehensive monitoring. Track success rates, failure modes, tool-usage patterns, and user satisfaction.

The OpenAI Agents SDK includes built-in tracing that records the full interaction history. This visibility proves essential for debugging unexpected behavior.
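The Agents SDK records traces for you; for tools or services outside such an SDK, the same idea can be approximated with a decorator. traced, TRACE and get_stock are hypothetical names, and an in-memory list stands in for a real tracing backend.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # stand-in for a tracing backend


def traced(func: Callable) -> Callable:
    """Record each call's name, arguments, outcome and duration."""
    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        record: dict[str, Any] = {"tool": func.__name__, "args": args, "kwargs": kwargs}
        try:
            result = func(*args, **kwargs)
            record.update(status="ok", result=result)
            return result
        except Exception as exc:
            record.update(status="error", error=repr(exc))
            raise  # tracing must not swallow the failure
        finally:
            record["duration_s"] = time.perf_counter() - start
            TRACE.append(record)
    return wrapper


@traced
def get_stock(sku: str) -> int:
    inventory = {"SKU-1": 4}  # hypothetical data
    return inventory[sku]
```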

According to research, the telecom company Vodafone deployed an AI-agent-based support system that handles more than 70% of customer queries without human intervention. The system reached this level of performance while maintaining high customer satisfaction through continuous monitoring and refinement based on real usage patterns.

Common mistakes and how to avoid them

Certain mistakes recur in agent development. Learning from others' experience speeds up progress.

Overly broad goals

Agents that try to do everything accomplish nothing. A narrow scope produces better results than a general-purpose system.

Define boundaries explicitly. Which tasks fall within the agent's remit? What should be escalated or declined?

Insufficient error handling

Tools fail. APIs time out. Databases return errors. Agents need graceful degradation strategies for every external dependency.

Default behaviors for error states keep agents from hallucinating when data is unavailable. It is better to acknowledge a limitation than to make up information.
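A sketch of graceful degradation for one external dependency: transient failures are retried with exponential backoff, and when retries run out the agent receives an explicit fallback message instead of an empty result it might fill with invented data. Which exceptions count as transient (here TimeoutError and ConnectionError) is an assumption; anything else propagates. call_with_retry and FALLBACK are hypothetical names.

```python
import time
from typing import Callable

FALLBACK = ("I can't reach the order system right now, so I can't confirm that. "
            "Please try again later.")


def call_with_retry(fn: Callable[[], str], retries: int = 3,
                    base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> str:
    """Retry transient failures, then fall back to an explicit default answer."""
    for attempt in range(retries):
        try:
            return fn()
        except (TimeoutError, ConnectionError):
            # Transient failure: back off 0.5 s, 1 s, 2 s, ... before the next attempt.
            if attempt < retries - 1:
                sleep(base_delay * 2 ** attempt)
    # All attempts failed: say so instead of letting the model guess.
    return FALLBACK
```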

Neglecting guardrails until production

Safety considerations belong in the initial design, not bolted on at the end. Retrofitting guardrails into existing systems turns out to be harder than building them in from the start.

NIST guidance emphasizes that responsible AI development requires understanding legal and regulatory requirements and managing documented risks throughout the development lifecycle.

Underestimating testing requirements

In general, testing takes 40 to 50% of agent development time. This is not inefficiency; it is the nature of non-deterministic systems, which require extensive validation.

Budget accordingly and build comprehensive test suites that cover realistic scenarios.

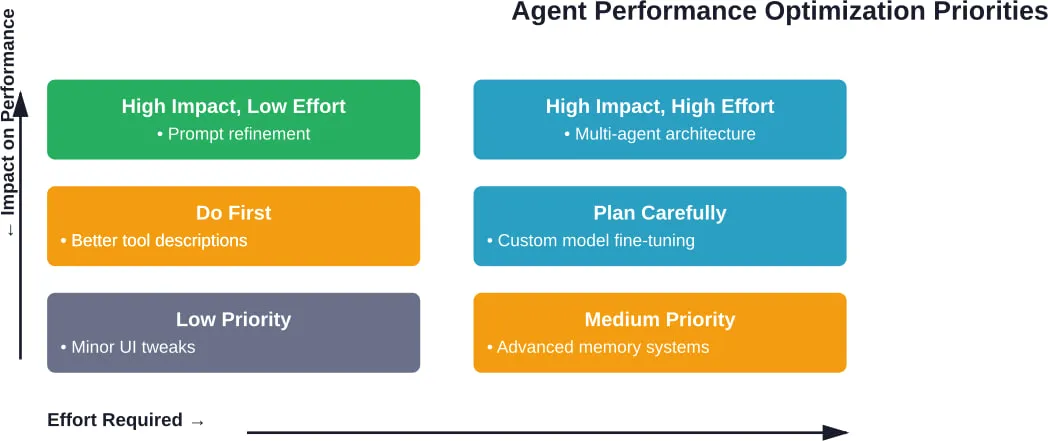

Advanced techniques and optimization

Once basic agents work reliably, several optimization strategies can improve performance and capabilities.

Prompt engineering for agents

Agent prompts differ from chat prompts. They need explicit reasoning patterns, clear tool descriptions, and examples of correct decision-making.

Chain-of-thought prompting improves multi-step reasoning. Instructing agents to explain their reasoning before acting reduces impulsive tool use.

Few-shot examples demonstrate the desired behavior. Showing two or three examples of correct tool selection significantly improves agent performance on similar tasks.
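A sketch of how an agent prompt might combine tool descriptions, an instruction to reason before acting, and a few worked examples. The tool names, examples and output format are hypothetical; the point is the structure, which you would adapt to your model and framework.

```python
# Hypothetical few-shot examples: (user request, correct tool, one-sentence reasoning).
EXAMPLES = [
    ("Where is order 1042?", "order_status",
     "The user asks about delivery, so I need the order database."),
    ("Why was I charged twice?", "billing_kb",
     "This is a billing question covered by the knowledge base."),
    ("I want my money back", "escalate_to_human",
     "Refunds are handled by people, not by me."),
]


def build_prompt(tools: dict[str, str], user_request: str) -> str:
    """Assemble tool descriptions, a reason-before-acting instruction,
    the few-shot examples, and finally the new request."""
    lines = ["You can use these tools:"]
    lines += [f"- {name}: {description}" for name, description in tools.items()]
    lines.append("Before choosing a tool, explain your reasoning in one sentence.")
    lines.append("")
    for request, tool_name, reasoning in EXAMPLES:
        lines += [f"User: {request}", f"Reasoning: {reasoning}", f"Tool: {tool_name}", ""]
    # End with an open "Reasoning:" slot so the model reasons first.
    lines += [f"User: {user_request}", "Reasoning:"]
    return "\n".join(lines)
```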

Knowledge base integration

Agents benefit from access to curated knowledge. Vector databases enable semantic search over documentation, letting agents retrieve relevant information dynamically.

Hugging Face's agents course covers connecting knowledge bases to agents. The pattern involves embedding documents, storing the vectors, and implementing retrieval tools that the agent can call.

Keep knowledge bases focused. Massive, unfocused knowledge bases add noise to retrieval, making it hard for agents to find the right information.
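A toy version of the retrieval pattern: embed documents, store the vectors, and expose a search function the agent can call as a tool. The bag-of-words embed function is a stand-in for a real embedding model and vector database, so it only matches shared words, not meaning; KnowledgeBase and min_score are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for an embedding model: a bag-of-words vector. Real systems use
    # dense embeddings so that related wording also matches.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class KnowledgeBase:
    def __init__(self, docs: list[str]):
        # "Embed and store": precompute one vector per document.
        self.docs = docs
        self.vectors = [embed(d) for d in docs]

    def search(self, query: str, k: int = 2, min_score: float = 0.1) -> list[str]:
        """Retrieval tool for the agent; weak matches are dropped to limit noise."""
        q = embed(query)
        scored = sorted(((cosine(q, v), d) for v, d in zip(self.vectors, self.docs)),
                        reverse=True)
        return [d for score, d in scored[:k] if score >= min_score]
```

The min_score cutoff is one simple way to keep weak matches out of the agent's context.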

Adaptive learning patterns

Although agents do not learn in real time, usage patterns support iterative improvement. Analyzing common failure types guides prompt refinement and tool improvements.

User feedback loops reveal capability gaps. If agents frequently escalate certain types of requests, that signals an opportunity to build new tools or expand their knowledge.
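Escalation logs can be mined for capability gaps with a simple frequency count. The log format (one record per escalated conversation with a category field) and the 20% default threshold in top_gaps are assumptions.

```python
from collections import Counter


def top_gaps(log: list[dict], min_share: float = 0.2) -> list[tuple[str, int]]:
    """Return escalation categories that make up at least `min_share` of all
    escalations; these are candidates for a new tool or knowledge-base content."""
    counts = Counter(record["category"] for record in log)
    total = sum(counts.values())
    return [(category, n) for category, n in counts.most_common() if n / total >= min_share]
```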

Frequently asked questions

- What is the difference between an AI agent and a chatbot?

Chatbots answer questions by providing information. Agents take actions through tools: they can query databases, call APIs, execute code, and autonomously complete multi-step tasks. The key difference is the ability to act beyond conversation.

- Do I need coding skills to build AI agents?

Not necessarily. No-code platforms such as n8n and Vertex AI Agent Builder let you build agents through visual interfaces. However, complex agents with custom logic and advanced features usually require programming knowledge. Starting with no-code tools provides a practical learning path.

- Which framework should I use for my first agent?

For beginners with coding experience, smolagents offers a gentle learning curve and comprehensive documentation. For those who prefer visual development, n8n is the most accessible starting point. For production applications, the OpenAI Agents SDK provides production-ready features and enterprise support.

- How much does it cost to run an AI agent?

Cost depends on model choice, usage volume, and complexity. Agents using GPT-4 consume more resources than those using GPT-3.5. Token usage accumulates from instructions, tool descriptions, conversation history, and reasoning loops. Check current rates on the official pricing pages, since they change frequently.

- Can agents work with proprietary data sources?

Absolutely. Agents access proprietary data through tool integrations. Build tools that query internal databases, call custom APIs, or retrieve information from knowledge bases. Vector databases enable semantic search over your own documents, making organizational knowledge available to agents.

- How do I prevent my agent from taking unsafe actions?

Implement multiple layers of protection: input validation to catch malicious prompts, authorization checks before a tool runs, output validation to verify responses, and rate limiting to prevent abuse. The NIST AI Risk Management Framework provides guidance on building appropriate safety controls for AI systems.
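Of the layers listed, rate limiting is the most mechanical to implement. A common approach is a token bucket (unrelated to model tokens), sketched below; TokenBucket is a hypothetical helper, and the injectable clock is there only for testing.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts of up to `capacity` actions and refills at `rate` actions
    per second. One bucket per user (or per user and tool) is typical."""

    def __init__(self, capacity: int, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```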

- What is a typical timeline for building a production agent?

Simple agents with narrowly scoped tasks can reach production in 2 to 4 weeks. Complex multi-agent systems with extensive tool integration typically need 2 to 3 months. Testing and refinement take 40 to 50% of development time. These timelines assume prior experience; developers new to agents should expect longer cycles as they work through the learning curve.

Next steps in your agent journey

Building AI agents combines technical implementation with thoughtful design. The frameworks exist, the models work, and the patterns are well documented.

Start small. Build a single-purpose agent that reliably performs one workflow. Master the fundamentals of tool integration, prompt engineering, and guardrail implementation.

Then expand gradually. Add tools as needs arise. Add memory when context becomes important. Consider multi-agent architectures only after individual agents have proven their value.

The agent landscape continues to evolve rapidly. New frameworks appear, models improve, and architectural patterns mature. Follow the documentation from OpenAI, Hugging Face, and the broader developer community.

Most importantly, build things. Reading about agents gives you understanding; building them gives you insight. The gap between theoretical knowledge and practical implementation closes only through hands-on experience.

Ready to start building? Pick a framework, define a goal, and build something that works. The best way to learn agent development is to ship working agents.